High-Performance Vector Search at Scale

Qdrant helps you build the AI retrieval you want. Ship high-performance, full-featured vector search at any scale and on any deployment model.

Built for Production-Grade AI Search

Engineered for real-time retrieval with the speed, accuracy, and scale that modern AI demands.

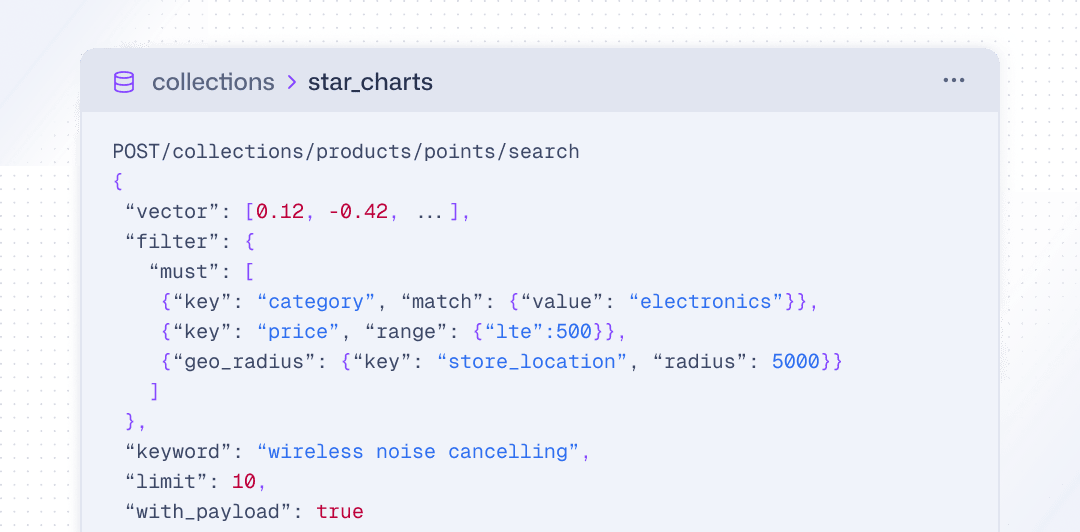

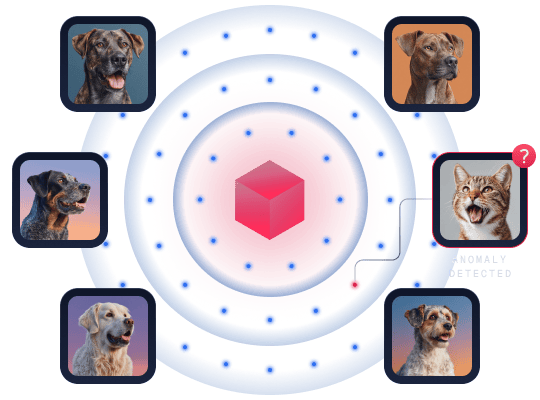

Expansive Metadata Filters

Store metadata in JSON and use advanced filters, such as nested, text, geo, has_vector, and more.
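As a minimal sketch of how these condition types can be combined, the snippet below assembles a filter as plain JSON. The condition shapes follow our reading of the Qdrant REST filter format and should be checked against the API docs. The payload keys (`city`, `description`, `location`, `reviews`) and the vector name `image` are hypothetical.

```python
import json

def build_filter():
    """Combine several condition types into one filter body (all keys are illustrative)."""
    return {
        "must": [
            # Exact keyword match on a payload field
            {"key": "city", "match": {"value": "Berlin"}},
            # Full-text match on a text-indexed field
            {"key": "description", "match": {"text": "rooftop"}},
            # Geo condition: points within 5 km of a center
            {
                "key": "location",
                "geo_radius": {"center": {"lon": 13.40, "lat": 52.52}, "radius": 5000.0},
            },
            # Only points that have a vector stored under this name
            {"has_vector": "image"},
            # Nested condition evaluated per element of an array of objects
            {
                "nested": {
                    "key": "reviews",
                    "filter": {"must": [{"key": "score", "range": {"gte": 4}}]},
                }
            },
        ]
    }

query_filter = build_filter()
print(json.dumps(query_filter, indent=2))
```

The resulting object would be passed as the `filter` field of a search or query request.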

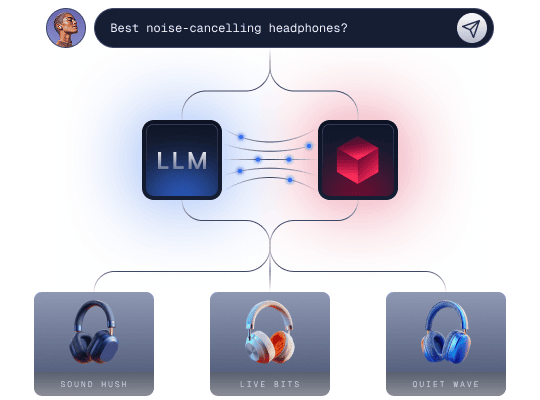

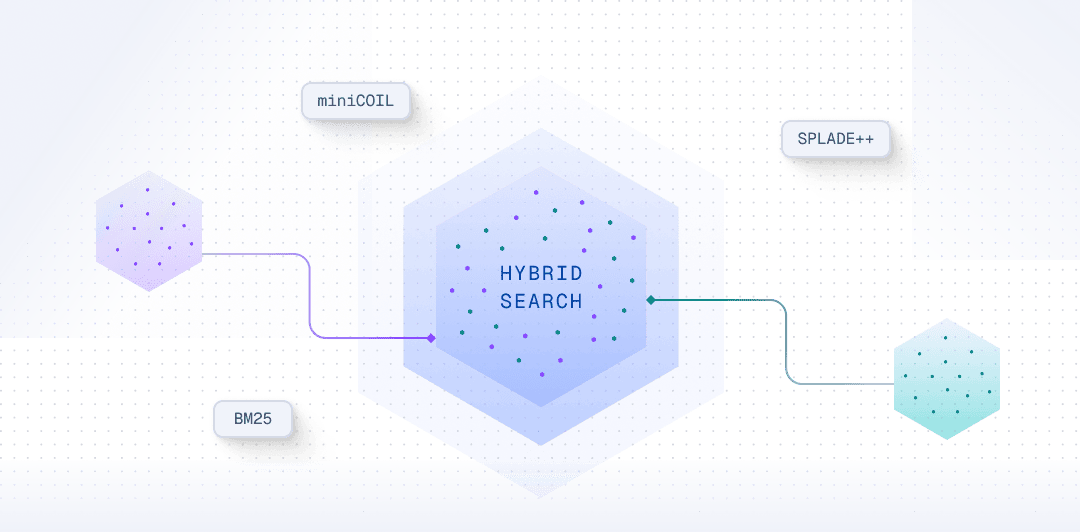

Native Hybrid Search (Dense + Sparse)

Blend keyword and vector search in one query using dense and sparse vectors. Supports BM25, SPLADE++, and miniCOIL.
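To illustrate how dense and sparse result lists can be blended, here is a minimal sketch of Reciprocal Rank Fusion (RRF), one common fusion method. It is a standalone illustration, not the engine's internal implementation. The document IDs and the constant `k=60` are assumptions chosen for the example.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked ID lists: each list contributes 1 / (k + rank) per ID."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for a stable order
    return sorted(scores, key=lambda d: (-scores[d], d))

dense_hits = ["doc_a", "doc_b", "doc_c"]   # hypothetical dense-vector ranking
sparse_hits = ["doc_b", "doc_d", "doc_a"]  # hypothetical sparse (keyword) ranking

fused = reciprocal_rank_fusion([dense_hits, sparse_hits])
print(fused)
```

Documents that rank well in both lists (`doc_b`, `doc_a`) rise above those that appear in only one.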

Explore Hybrid Search

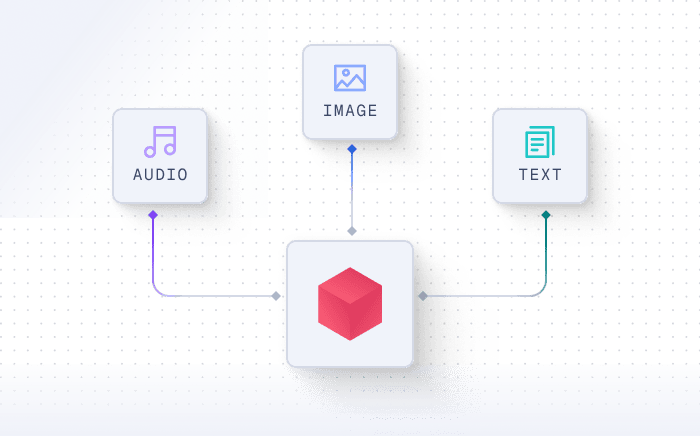

Built-in Multivector

Set new standards for relevance; make the retrieval layer more expressive, flexible, and multimodal with multiple vectors per object.
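One way to compare objects that carry several vectors each is MaxSim scoring, as used by late interaction models. The sketch below is a plain-Python illustration under the assumption of dot-product similarity. The vectors are made up for the example.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def max_sim(query_vectors, doc_vectors):
    """For each query vector, take its best match among the document's vectors, then sum."""
    return sum(max(dot(q, d) for d in doc_vectors) for q in query_vectors)

query = [[1.0, 0.0], [0.0, 1.0]]   # two query vectors (e.g. one per token)
doc_1 = [[0.9, 0.1], [0.2, 0.8]]   # object with two vectors
doc_2 = [[0.5, 0.5]]               # object with a single vector

print(max_sim(query, doc_1), max_sim(query, doc_2))
```

Here `doc_1` scores higher because each query vector finds a close counterpart among its vectors, while `doc_2` has to match both with one averaged vector.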

See Documentation

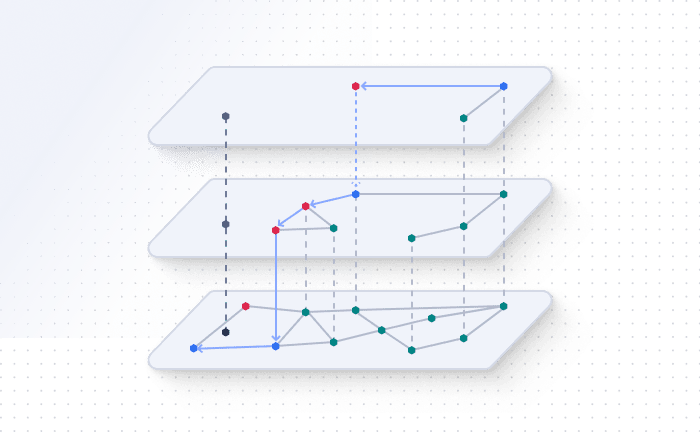

Efficient, One-Stage Filtering

Filters are applied during HNSW traversal — no pre- or post-filtering. High recall with low latency, even under complex conditions.
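The idea of checking conditions during graph traversal can be sketched on a toy, single-layer proximity graph. This is a simplified illustration, not Qdrant's HNSW implementation. The graph, payloads, and the function name `filtered_graph_search` are all hypothetical.

```python
import heapq

def filtered_graph_search(graph, vectors, payloads, query, entry, condition, top_k=2):
    """Best-first search over a proximity graph; the filter is checked while traversing."""
    def dist(node):
        return sum((a - b) ** 2 for a, b in zip(vectors[node], query))

    visited = {entry}
    frontier = [(dist(entry), entry)]  # min-heap of nodes still to expand
    results = []                       # sorted (distance, node) pairs that passed the filter
    while frontier:
        d, node = heapq.heappop(frontier)
        if len(results) >= top_k and d > results[top_k - 1][0]:
            break  # nearest unexpanded node is farther than the k-th accepted match
        if condition(payloads[node]):
            results.append((d, node))
            results.sort()
        # Non-matching nodes are still traversed, so the search is not cut off by them
        for neighbor in graph[node]:
            if neighbor not in visited:
                visited.add(neighbor)
                heapq.heappush(frontier, (dist(neighbor), neighbor))
    return [node for _, node in results[:top_k]]

# Six points on a line, linked as a chain; even IDs are "red", odd IDs are "blue"
vectors = {i: [float(i)] for i in range(6)}
graph = {i: [j for j in (i - 1, i + 1) if 0 <= j < 6] for i in range(6)}
payloads = {i: {"color": "red" if i % 2 == 0 else "blue"} for i in range(6)}

hits = filtered_graph_search(
    graph, vectors, payloads, query=[4.2], entry=0,
    condition=lambda p: p["color"] == "red",
)
print(hits)
```

In this toy data, the unfiltered top 2 for the query are nodes 4 and 5, so filtering afterwards would leave only node 4. Checking the condition during traversal returns two matching nodes, 4 and 2.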

See Documentation

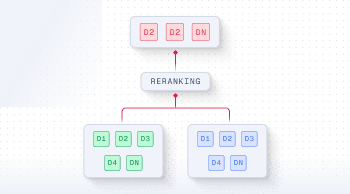

Full-Spectrum Reranking

Infuse business logic with score boosting, achieve token-level precision with late interaction models (e.g., ColBERT), and diversify results with Maximal Marginal Relevance (MMR).
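As an illustration of the diversification step, here is a minimal sketch of MMR in plain Python. It assumes cosine similarity and a trade-off parameter `lam` (our name, not an API parameter). The candidate vectors are invented for the example.

```python
import math

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def mmr(query, candidates, top_k=2, lam=0.5):
    """Greedily pick items that are relevant to the query but not redundant with earlier picks."""
    selected = []
    remaining = dict(candidates)
    while remaining and len(selected) < top_k:
        def score(item_id):
            relevance = cosine(query, remaining[item_id])
            redundancy = max(
                (cosine(remaining[item_id], candidates[s]) for s in selected),
                default=0.0,
            )
            return lam * relevance - (1 - lam) * redundancy
        best = max(remaining, key=score)
        selected.append(best)
        del remaining[best]
    return selected

query = [1.0, 0.0]
candidates = {
    "a": [1.0, 0.1],
    "b": [1.0, 0.12],   # near-duplicate of "a"
    "c": [0.5, -0.5],   # less relevant, but different from "a"
}
print(mmr(query, candidates))
```

Ranking by relevance alone would return `a` and `b`. With `lam=0.5`, the near-duplicate `b` is penalized and `c` takes the second slot.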

See Documentation

One Engine, Endless Applications

Deploy Anywhere at Enterprise Scale

Open-source DNA with enterprise-grade security and flexibility — run on-prem, hybrid, edge, or move seamlessly to Qdrant Cloud.

Qdrant Cloud

Fully managed with high availability and auto-sharding on AWS, GCP, or Azure.

Qdrant Hybrid Cloud

Bring your own Kubernetes with decoupled control and data planes. Scale anywhere with full data control.

Qdrant Private Cloud

Maximum control with air-gapped, compliant deployments.

Qdrant Edge (Beta)

Lightweight, low-latency vector search close to where data is generated.

Enterprise-ready tooling

Deploy on any cloud, hybrid, or edge environment with full data control. Choose the setup that fits your infrastructure and scale securely without compromise.

Qdrant's technical architecture and performance capabilities have proven to be exactly what we need as we scale our AI-powered features across the platform. They are an ideal partner as we standardize our vector search infrastructure to serve millions of users worldwide.

Performance by Design

We research, engineer, and optimize each component from first principles for the fastest, most scalable, and most customizable AI retrieval and search engine.

Highest‑Performance Vector Search Engine

Built entirely in Rust with SIMD and a custom storage engine (Gridstore) — no wrappers, no bolt-ons. Just fast, scalable vector search.

Real‑Time Indexing

Index new data instantly without rebuilding the entire index. Your vectors are searchable the moment they're added.

Memory‑Efficient Storage

Store billions of vectors with minimal memory footprint using our optimized storage architecture.

Asymmetric, Scalar and Binary Quantization

Reduce memory usage by up to 64x while maintaining search quality with advanced quantization techniques.
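To make the memory arithmetic concrete, the sketch below compares a float32 vector with two simple encodings: one byte per component (scalar) and one bit per component (binary). It is an illustration of the general techniques, not the engine's quantization code, and it only covers these two basic cases rather than the full "up to 64x" range. The 1024-dimension vector is a made-up stand-in for an embedding.

```python
import math
import struct

def scalar_quantize(vector):
    """Map each float to one unsigned byte using the vector's own min/max range."""
    lo, hi = min(vector), max(vector)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 for constant vectors
    codes = bytes(round((x - lo) / scale) for x in vector)
    return codes, lo, scale  # lo and scale are needed to approximately decode

def binary_quantize(vector):
    """Keep only the sign of each component: 1 bit per dimension."""
    bits = 0
    for x in vector:
        bits = (bits << 1) | (1 if x > 0 else 0)
    return bits.to_bytes((len(vector) + 7) // 8, "big")

dim = 1024
vector = [math.sin(i) for i in range(dim)]  # deterministic stand-in for an embedding

raw = struct.pack(f"{dim}f", *vector)  # float32 baseline: 4 bytes per dimension
sq_codes, lo, scale = scalar_quantize(vector)
bq_codes = binary_quantize(vector)

print(len(raw), len(sq_codes), len(bq_codes))
print(len(raw) // len(sq_codes), len(raw) // len(bq_codes))
```

For this vector the sizes are 4096, 1024, and 128 bytes, a 4x and 32x reduction relative to float32.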

Engineered for Builders

Intuitive APIs and built-in tools — crafted for developers who demand more.

Developer-Friendly APIs

Start with a single API call — scale to advanced control over HNSW, hybrid fusion, reranking, and multi-vector retrieval, all via REST, gRPC, or official clients (Python, JavaScript, etc.).
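The progression from a single call to finer control can be sketched as three REST request bodies. This is a hedged illustration based on our reading of the REST API (collection creation and the query endpoint), so field names should be verified against the API docs. The collection name `articles`, the vector names `dense` and `sparse`, and all values are hypothetical. Nothing is sent over the network.

```python
import json

COLLECTION = "articles"  # hypothetical collection name

# PUT /collections/{name}: named dense and sparse vectors plus HNSW settings
create_collection = {
    "vectors": {"dense": {"size": 4, "distance": "Cosine"}},
    "sparse_vectors": {"sparse": {}},
    "hnsw_config": {"m": 16, "ef_construct": 100},
}

# POST /collections/{name}/points/query: the simple case, one dense query
simple_query = {
    "query": [0.2, 0.1, 0.9, 0.7],
    "using": "dense",
    "limit": 3,
    "with_payload": True,
}

# Same endpoint with more control: search-time HNSW tuning and dense + sparse fusion
hybrid_query = {
    "prefetch": [
        {"query": [0.2, 0.1, 0.9, 0.7], "using": "dense", "limit": 20,
         "params": {"hnsw_ef": 128}},
        {"query": {"indices": [7, 42], "values": [0.6, 0.4]}, "using": "sparse", "limit": 20},
    ],
    "query": {"fusion": "rrf"},
    "limit": 3,
}

for path, body in [
    (f"/collections/{COLLECTION}", create_collection),
    (f"/collections/{COLLECTION}/points/query", simple_query),
    (f"/collections/{COLLECTION}/points/query", hybrid_query),
]:
    print(path)
    print(json.dumps(body, indent=2))
```

The same requests can be issued over gRPC or through an official client instead of raw REST.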

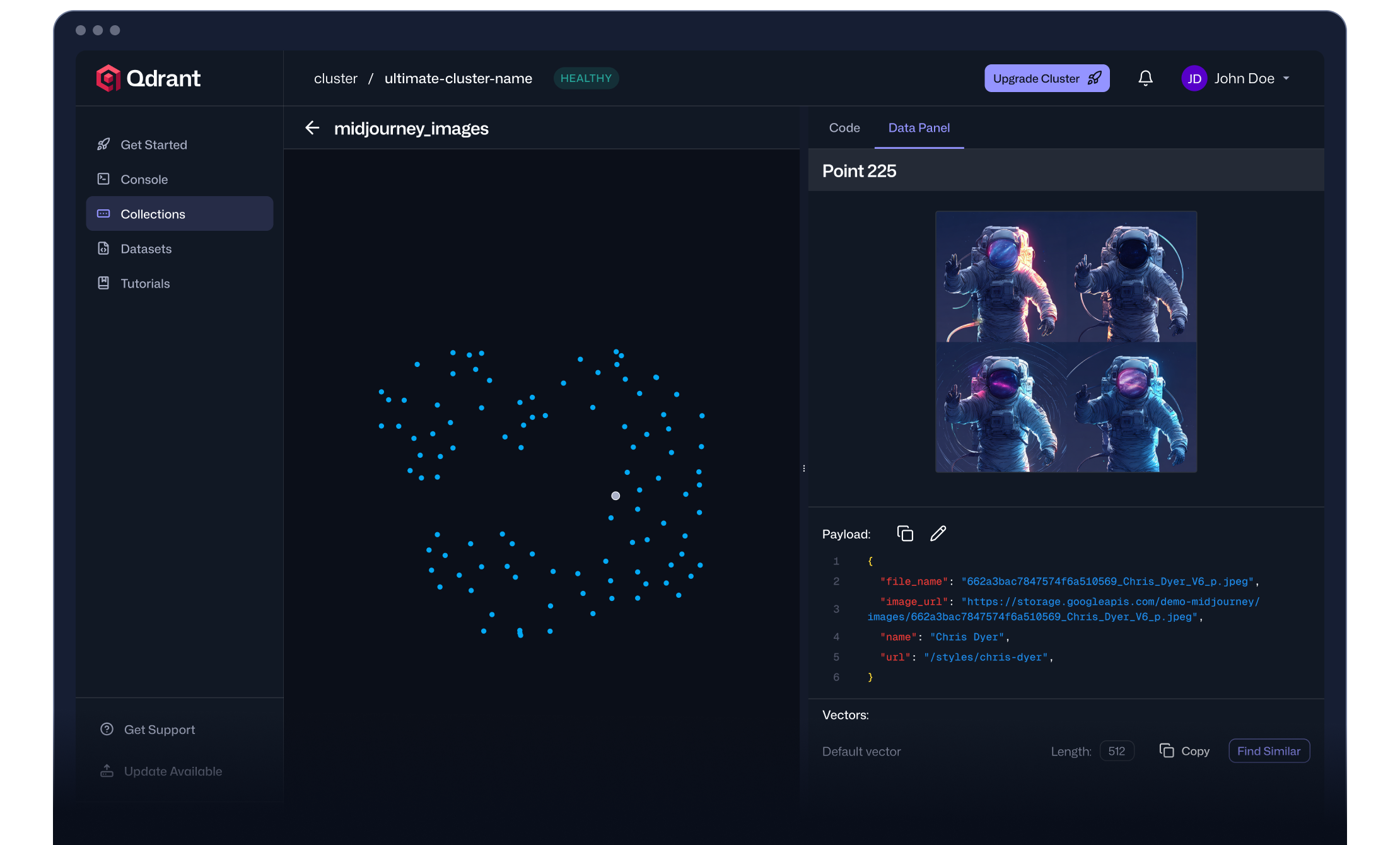

Explore the API Docs

Built-In Web UI & Visualizations

Explore collections, test vector and metadata queries, apply filters, and inspect results — all from a clean visual interface.

Try Web UI

Native Cloud Inference

Generate text and image embeddings and run vector search in Qdrant Cloud — no separate pipeline or infrastructure needed.

Learn More About Inference

Integrates with leading AI tools & frameworks

Build AI Search the Way You Want

From RAG to AI agents, Qdrant delivers hybrid dense–sparse retrieval with advanced metadata filtering and real-time updates.