The Missing Link in Enterprise AI? It’s Probably MCP.

AI can write code. It can summarize documents. It can talk like a human. But it still doesn’t know what’s happening around it. It doesn’t know what tools your team uses. It doesn’t know where the data lives. It doesn’t know the context.

And when it doesn’t know, it can’t help. This gap shows up in real ways. A finance chatbot can’t fetch a report from your CRM. A healthcare assistant doesn’t see a patient’s recent lab results. The answers stop being useful. Right now, most fixes are held together by workarounds. Custom APIs. Isolated agents. Manual sync jobs. All fragile. None built to scale.

What enterprises need is a standard: one that lets AI models interact with tools and systems in a clean, reliable way. That’s the gap the Model Context Protocol (MCP) is designed to close.

Why Enterprise AI Is Still Guesswork

AI models are getting smarter. But they’re still stuck in a box.

They generate answers, but those answers are often disconnected from what’s actually happening inside your business. That’s because most models don’t have access to your tools, your data, or your workflows. They operate in a vacuum. You can ask them anything, but they won’t know what’s going on unless you feed it to them.

And that’s the catch.

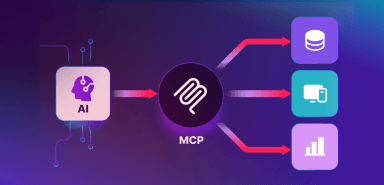

Think of MCP the way we now think of USB-C in hardware. A universal connector that simplifies interaction. It doesn’t solve everything, but it removes chaos. Before USB-C, every device came with its own port and adapter. Things worked, but only with effort. Enterprise AI is in a similar phase. Models are capable, but every integration feels custom.

MCP changes that. It offers a single, open way for systems and models to talk: not through duct-taped APIs, but through a defined structure.

In an enterprise setting, context is everything. It’s not enough for a model to "sound" right. It has to be right. It has to pull the latest policy update, check against a system record, or trigger a tool that completes the task.

Today, most of that is hardwired. Teams build one-off integrations. They pass prompts with embedded instructions. Some even fine-tune models on company data just to make them feel “aware.” It works, until it breaks. Or scales. Or shifts. The more systems you connect, the harder it is to keep things clean. And as AI moves from pilot projects to daily operations, these issues are no longer just technical. They’re operational.

The question isn’t “Can AI respond?”

It’s “Does it know what matters when it does?”

A New Standard Emerges

The Model Context Protocol didn’t come out of nowhere. It was built to address a pattern the AI community kept running into: models that are powerful but isolated. Impressive, but blind to the systems they need to work with.

MCP changes that.

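It’s an open protocol introduced by Anthropic in November 2024. It defines a standard way for AI applications to connect language models to outside systems: MCP servers expose tools, data sources, and prompt templates, and the application hosting the model requests them on the model’s behalf. In practice, it gives models a structured way to say, “Here’s what I need to do the job right,” and to get that data or function in return. Predictably, and within limits the host application sets.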

Technically, it’s built on JSON-RPC 2.0. Simple. Language-agnostic. Clean. But the real shift isn’t just in the format. It’s in how this structure helps LLMs behave more like agents.

Not just responders. Here’s the difference.

A responder gives you an answer based on training data.

An agent gets real-time context, acts on it, and adapts.

MCP defines how that handoff works. Between model and machine. Between intent and action. And this isn’t just a lab experiment. Companies like Replit and Sourcegraph are already working with MCP to power their developer tools. Anthropic’s own Claude Desktop app can connect to local MCP servers, for example one that lets it read and work with files on your machine. It’s not theoretical. It’s happening.
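To make that handoff concrete: because MCP is built on JSON-RPC 2.0, a tool call is just a request and a response that share an `id`. The sketch below builds one of each using only Python’s standard library. It assumes the `tools/call` method, its `name`/`arguments` parameters, and the `content` list in the result as described in the MCP specification; the tool `get_lab_results` and its arguments are hypothetical, and the transport (stdio or HTTP) is left out entirely.

```python
import json
from typing import Any


def make_request(request_id: int, method: str, params: dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 request, the envelope MCP messages travel in."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": method, "params": params})


def make_result(request_id: int, result: dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 success response that echoes the request's id."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})


# Client side: the host application asks an MCP server to run a tool.
# "get_lab_results" and its arguments are hypothetical, for illustration only.
request = make_request(
    1,
    "tools/call",
    {"name": "get_lab_results", "arguments": {"patient_id": "P-1042", "limit": 3}},
)

# Server side: decode the request and reply with MCP-style text content.
incoming = json.loads(request)
response = make_result(
    incoming["id"],
    {"content": [{"type": "text", "text": "3 results found"}], "isError": False},
)

print(request)
print(response)
```

The structure is the point: the model never touches the lab system directly. It states intent as a named tool plus arguments, and the server decides how to fulfil it.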

“The future of AI isn’t just generative. It’s interactive. And interaction demands structure.”

— Jared Kaplan, Chief Science Officer at Anthropic

For enterprises, that structure is long overdue. Most have spent the past year building fragile connectors and one-off pipelines just to make models feel usable. MCP offers something better. A stable, open foundation.

One that doesn't need to be rebuilt every time the model or use case changes.

Implications for the Enterprise

Most enterprise AI projects today run into the same issue. The model might be accurate, the use case might be clear, but the output still falls short. Not because the model is weak, but because it doesn’t know enough. It can’t access the right systems. It can’t trigger the next action. It can’t close the loop.

This is where MCP becomes important. It gives AI systems a way to move beyond static responses. With MCP, models can call tools, pull fresh data, or validate an action before committing to a response. In regulated environments, this isn’t just helpful. It’s essential.
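On the server side, “pulling fresh data” comes down to a small dispatcher. Here is a minimal sketch, assuming a single hypothetical `get_ticket_status` tool backed by an in-memory dictionary instead of a real CRM. It answers `tools/list` with each tool’s name, description, and JSON Schema for its input, runs `tools/call`, and reports an unknown tool with JSON-RPC’s `-32602` (Invalid params) code and an unknown method with `-32601` (Method not found). A production server would more likely use an official MCP SDK and a real transport; this only shows the shape of the exchange.

```python
import json
from typing import Any, Callable

# Hypothetical in-memory stand-in for a live ticketing/CRM system.
TICKETS = {"T-100": "open", "T-101": "resolved"}


def get_ticket_status(args: dict[str, Any]) -> str:
    """Return the current status of a ticket, or "unknown" if it doesn't exist."""
    return TICKETS.get(args.get("ticket_id", ""), "unknown")


# Tools this server exposes: name -> (description, input JSON Schema, handler).
TOOLS: dict[str, tuple[str, dict[str, Any], Callable[[dict[str, Any]], str]]] = {
    "get_ticket_status": (
        "Look up the current status of a support ticket.",
        {"type": "object", "properties": {"ticket_id": {"type": "string"}}, "required": ["ticket_id"]},
        get_ticket_status,
    ),
}


def error(req_id: Any, code: int, message: str) -> str:
    """Serialize a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}})


def handle(message: str) -> str:
    """Dispatch one JSON-RPC 2.0 request to tools/list or tools/call."""
    req = json.loads(message)
    req_id, method, params = req.get("id"), req.get("method"), req.get("params", {})
    if method == "tools/list":
        result = {"tools": [
            {"name": name, "description": desc, "inputSchema": schema}
            for name, (desc, schema, _) in TOOLS.items()
        ]}
    elif method == "tools/call":
        if params.get("name") not in TOOLS:
            return error(req_id, -32602, "Unknown tool")
        _, _, handler = TOOLS[params["name"]]
        text = handler(params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(req_id, -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


# Usage: a client asks for the live status of ticket T-100.
call = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
        "params": {"name": "get_ticket_status", "arguments": {"ticket_id": "T-100"}}}
print(handle(json.dumps(call)))
```

Because every tool advertises an input schema, the host can validate arguments before anything reaches a system of record.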

A McKinsey survey from late 2024 showed that over 68 percent of enterprises experimenting with generative AI struggled with system integration. Not model accuracy. Not user adoption. Integration.

This gap shows up across functions:

- In customer service, where AI needs access to live ticket data and CRM history.

- In finance, where models must validate transactions before surfacing insights.

- In healthcare, where context determines whether a response is informative or dangerous.

MCP doesn’t guarantee intelligence. It doesn’t replace strategy. But it brings structure to how models communicate with your tech stack. It reduces dependency on manual wiring. It creates a known path for models to follow when they need context.

For security teams, every model-to-system call goes through one defined interface, which makes clear audit trails possible. For product teams, it means fewer bottlenecks. For architects, less friction every time the model needs to do something new. The long-term value is this: enterprises can move from brittle prototypes to operational agents that work with their systems. Not around them.
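In practice, an audit trail means recording every tool call at the point where it crosses the protocol boundary. A minimal sketch, assuming a hypothetical `audited` wrapper around tool handlers and an in-memory `AUDIT_LOG` list standing in for durable, tamper-evident storage. The `caller` label is an assumption too: in this sketch the host supplies it when wrapping the handler, and `get_policy` is a hypothetical read-only tool.

```python
import json
from datetime import datetime, timezone
from typing import Any, Callable

# Append-only audit log; a real deployment would write to durable storage instead.
AUDIT_LOG: list[dict[str, Any]] = []

Handler = Callable[[dict[str, Any]], str]


def audited(tool_name: str, handler: Handler, caller: str) -> Handler:
    """Wrap a tool handler so every invocation, successful or not, is logged."""
    def wrapper(arguments: dict[str, Any]) -> str:
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "caller": caller,
            "tool": tool_name,
            "arguments": json.dumps(arguments, sort_keys=True),
        }
        try:
            result = handler(arguments)
            entry["outcome"] = "ok"
            return result
        except Exception as exc:
            entry["outcome"] = f"error: {exc}"
            raise
        finally:
            # Runs on both paths, so failed calls are recorded as well.
            AUDIT_LOG.append(entry)
    return wrapper


# Usage with a hypothetical read-only tool.
def get_policy(args: dict[str, Any]) -> str:
    return f"policy {args['policy_id']}: v3"


safe_get_policy = audited("get_policy", get_policy, caller="support-assistant")
safe_get_policy({"policy_id": "P-7"})
print(AUDIT_LOG[-1]["tool"], AUDIT_LOG[-1]["outcome"])
```

Wrapping at the tool boundary, rather than inside each tool, keeps the logging consistent no matter who writes the next connector.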

Coditas in Action

We’ve worked with enterprises long enough to know where AI gets stuck. It’s never just the model. It’s everything around it. The systems. The access. The missing structure.

That’s why we started working with MCP early.

At Coditas, we’re exploring how MCP can help our clients move from experimental models to functioning agents. The goal isn’t to build smarter chatbots. It’s to create AI systems that operate with context, follow rules, and deliver value within real-world constraints.

In healthcare, we’ve mapped how MCP can enable models to pull drug interaction data or retrieve lab results securely, even from legacy EHR systems, without exposing sensitive records. In BFSI, we’ve looked at ways to connect models to internal knowledge bases while staying audit-friendly and compliant. For engineering teams, we’re exploring IDE extensions where models assist with code refactoring based on domain-specific context or surface SDK documentation to guide development decisions.

We’re not doing this in isolation. Our engineers are testing open MCP implementations, our architects are building connector frameworks that adapt to client-specific tools, and our consultants are helping product teams understand when MCP is the right fit.

This work isn’t theoretical. It’s part of the way we design systems now. The moment an AI model needs to do more than answer questions, MCP becomes part of the conversation.

What Leaders Need to Consider Now

You don’t need to rebuild your tech stack to work with AI. But you do need to rethink how your systems speak to it.

Most AI programs today are still designed like a one-way street. The model listens. It answers. That’s it. But for real value, the model needs to ask questions too. It needs to fetch data, call tools, confirm decisions, and adapt to change.

So, the question is no longer “Should we use AI in this workflow?” The question is “Can our systems talk to AI the way they need to?”

MCP isn’t mandatory. But something like it will be. Every major enterprise that plans to scale AI will eventually face the same questions:

- How do we give models access without losing control?

- How do we standardize that access across teams and tools?

- How do we keep visibility into what models are doing in production?
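One way to approach the first two questions is a policy layer between the model’s host application and the MCP servers it can reach. A minimal sketch, assuming a hypothetical per-team allowlist (`POLICY`) and tool catalogue (`ALL_TOOLS`): it filters what each team’s assistant sees in a `tools/list` result and rejects any `tools/call` outside that list, denying by default. This is a design pattern layered on top of MCP, not something the protocol itself prescribes.

```python
from typing import Any

# Hypothetical policy: which tools each team's assistant may see and call.
POLICY: dict[str, set[str]] = {
    "support": {"get_ticket_status", "get_policy"},
    "finance": {"get_policy", "validate_transaction"},
}

# Hypothetical catalogue aggregated from several MCP servers' tools/list results.
ALL_TOOLS: list[dict[str, Any]] = [
    {"name": "get_ticket_status"},
    {"name": "get_policy"},
    {"name": "validate_transaction"},
    {"name": "delete_customer"},
]


def visible_tools(team: str, tools: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Filter a tool listing down to what this team is allowed to use."""
    allowed = POLICY.get(team, set())  # unknown teams get nothing (deny by default)
    return [tool for tool in tools if tool["name"] in allowed]


def authorize_call(team: str, tool_name: str) -> bool:
    """Check a tool call against the same policy before forwarding it."""
    return tool_name in POLICY.get(team, set())


print([tool["name"] for tool in visible_tools("support", ALL_TOOLS)])
print(authorize_call("finance", "delete_customer"))
```

Keeping the policy in one table, instead of in each integration, is what makes access consistent across teams and tools.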

There’s no silver bullet. But waiting isn’t a strategy either. Enterprises that start mapping these answers now will be ready. Not just for pilots or demos. For real systems that learn, act, and improve over time, without breaking everything around them.

Final Perspective

Every enterprise wants smarter systems. The kind that don’t just predict, but understand. The kind that don’t just respond, but take action.

The gap between that vision and today’s reality isn’t intelligence. It’s structure. The Model Context Protocol offers a way to close that gap. It gives AI models a path to interact with tools, pull the right data, and follow rules you can trust. And it does this without forcing teams to start from scratch.

At Coditas, we see MCP not as a trend, but as part of a larger shift in how intelligent systems will be designed, deployed, and governed. We’re not chasing it. We’re building with it. If you’re figuring out how AI should work inside your systems, not just around them, now is the time to start.
