Inspiration

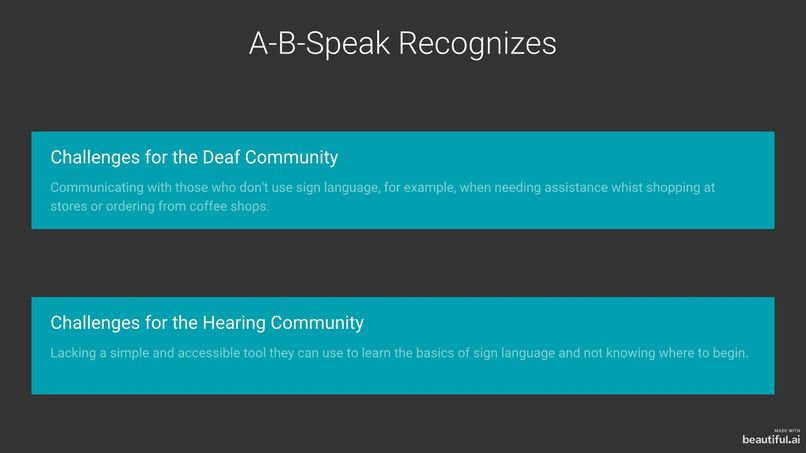

Communication between the hearing and deaf communities is a frequent challenge. Despite everyone's best intentions, it isn't always possible to understand one another, and deaf individuals may sometimes leave a coffee shop or store feeling frustrated and misunderstood by hearing staff. Our team members met for the first time at this hackathon and immediately bonded over a shared passion. Although one of our team members spent 4 years working in a rehabilitation centre for individuals who are deaf and hard of hearing, it was a different team member who came up with the idea of creating a machine learning model that can translate sign language into spoken words.

What it does

Our model identifies the sign language letters of the alphabet and translates them into the Latin alphabet at a satisfactory level of accuracy.

How I built it

We trained our model with Google's AutoML Vision API on more than 50 photos of each letter of the Latin alphabet. Our dataset combined an American Sign Language dataset from Kaggle with our own photos. We used JavaScript to access our laptop's camera, take photos, and save them to the desktop.
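
As a rough illustration of the dataset preparation step, the sketch below builds an import file in the `path,label` CSV layout that AutoML Vision accepts for image classification. The bucket name, folder layout, file names, and both helper functions are assumptions made for this example, not the project's actual code.

```javascript
// Hypothetical bucket; replace with wherever the training photos were uploaded.
const BUCKET = "gs://my-sign-language-photos";

// Build CSV rows of the form "gs://bucket/LETTER/file.jpg,LETTER".
// photosByLetter maps a letter label to the list of photo file names for it.
function buildImportCsv(photosByLetter, bucket = BUCKET) {
  const rows = [];
  for (const [letter, files] of Object.entries(photosByLetter)) {
    for (const file of files) {
      rows.push(`${bucket}/${letter}/${file},${letter}`);
    }
  }
  return rows.join("\n");
}

// List the letters that have fewer photos than the chosen minimum,
// so gaps in the dataset are visible before training starts.
function lettersBelowMinimum(photosByLetter, minimum = 50) {
  return Object.entries(photosByLetter)
    .filter(([, files]) => files.length < minimum)
    .map(([letter]) => letter);
}

// Example with made-up file names.
const sample = { A: ["a_001.jpg", "a_002.jpg"], B: ["b_001.jpg"] };
console.log(buildImportCsv(sample));
console.log(lettersBelowMinimum(sample));
```

Keeping one folder per letter makes it easy to mix the Kaggle images and our own photos under the same label.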

Challenges I ran into

Our camera doesn't work the way we planned: it only captures the upper-left corner of the frame, and we unfortunately ran out of time to fix this issue. Linking the front end to the back end was also a challenge.
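
We have not confirmed the cause of the cropped capture. One common cause of this symptom in browser code is drawing a video frame onto a canvas that is still at its default size, so only the upper-left part of the frame fits. The sketch below shows one possible fix under that assumption; `captureFrame` is a hypothetical helper, not our actual code.

```javascript
// Draw the whole current video frame onto the canvas.
// `video` is an HTMLVideoElement showing the camera stream,
// `canvas` is an HTMLCanvasElement used to grab a still photo.
function captureFrame(video, canvas) {
  // videoWidth/videoHeight are the stream's intrinsic size;
  // they are 0 until the video metadata has loaded.
  const width = video.videoWidth;
  const height = video.videoHeight;
  if (!width || !height) {
    throw new Error("Video metadata not loaded yet");
  }

  // Resize the canvas to match the frame before drawing.
  canvas.width = width;
  canvas.height = height;

  // Pass an explicit destination size so the full frame is drawn.
  const ctx = canvas.getContext("2d");
  ctx.drawImage(video, 0, 0, width, height);
  return { width, height };
}

// Usage in the browser (element ids are assumptions):
//   const video = document.getElementById("camera");
//   const canvas = document.getElementById("snapshot");
//   captureFrame(video, canvas);
```

If the real cause turns out to be different, for example CSS scaling of the video element, the same check of intrinsic size against canvas size is still a useful first diagnostic.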

Accomplishments that I'm proud of

We learnt how to use Google's AutoML Vision for the first time, and we challenged ourselves to try something completely new and beyond our comfort zones. We've developed a passion for machine learning and artificial intelligence.

What I learned

We gained a much better understanding of the value of abundant data, and a sense of the quantity and quality of data needed to obtain highly accurate results.

What's next for A-B-Speak

Our goal is to link the front end to the back end and learn how to create a complete working application. At A-B-Speak, our vision is to offer a smart-device application that uses computer vision to recognize sign language and translate it into spoken language in real time, using the device's microphone.

Built With

- googleautoml

- javascript

- python
