Inspiration

Visualization is often the key to comprehension.

This holds across topics as diverse as mathematics and language learning -- seeing graphs and pictures helps us immerse ourselves in 'other worlds' faster. We wanted to bring our ordinary textbooks and novels to life, leveraging the power of media and visuals.

We also live in a world where an estimated 5-10% of individuals are grappling with dyslexia. Visualization is an apt tool for accessible communication. AniText is inspired by the belief that every child, regardless of their learning abilities and styles, deserves access to educational materials that cater to their unique needs, ensuring that learning is not a struggle but a joyful journey of discovery.

What it does

AniText harnesses the capabilities of Generative AI, the Manim (mathematical animation) Python library, and Stable Diffusion image generation to generate visual aids, animations/videos (a la 3Blue1Brown), and scenery to immerse readers in new experiences. You can visualize a dodecahedron in 3D or a triple integral, for example.

For dense, narrative-heavy novels, our tool employs large language models to identify phrases pivotal to the storyline and challenging to visualize, especially for young readers or those with learning disabilities. It then generates static, contextual visual images, strategically embedding them in locations within the text to enhance comprehension without disrupting the reading flow.

In the realm of math textbooks, AniText goes a step further by creating dynamic visual simulations to portray complex mathematical concepts. The AI scrutinizes the textbook, pinpointing sections that are crucial for understanding and potentially challenging for students, particularly those grappling with dyscalculia. It then crafts live simulations and animations, embedding them within the textbook to ensure they are not just visually appealing but also contextually relevant and impactful.

AniText is not merely a visual aid but a carefully designed ally in the learning journey, ensuring that every image and simulation is not just seen but also tells a story, clarifies a concept, and most importantly, adds tangible value to the learning experience of each student.

Check out our demo video! --> https://youtu.be/L-QIHS7Q0eg

How we built it

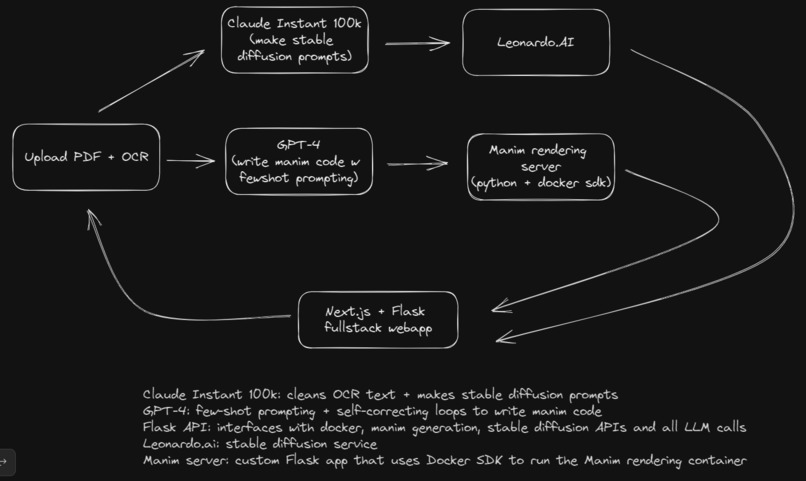

The flowchart above shows the components!

We use Claude by Anthropic to clean the output of PDF OCR. We also prompt it to write prompts for Stable Diffusion, and we generate the images through a service called Leonardo.ai.

We simultaneously use GPT-4 to generate Manim code with few-shot prompting and a custom-engineered self-correcting loop, which passes Python code to our custom Docker solution managed by a Flask API. The Flask API orchestrates several Manim rendering containers in parallel and reports back to the GPT-4 loop if the code fails to render.
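The self-correcting loop can be sketched roughly as follows. This is a minimal illustration, not our actual implementation: `generate` stands in for the GPT-4 call and `render` for the request to the rendering service, and both names, the message wording, and the `max_attempts` default are assumptions made for the example.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical interfaces: a generator maps a chat history to code,
# and a renderer returns (ok, error_text) for a piece of code.
Generator = Callable[[List[Dict[str, str]]], str]
Renderer = Callable[[str], Tuple[bool, str]]


def generate_until_it_renders(
    request: str,
    generate: Generator,
    render: Renderer,
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first generated code that renders, or None after max_attempts."""
    messages = [{"role": "user", "content": request}]
    for _ in range(max_attempts):
        code = generate(messages)
        ok, error = render(code)
        if ok:
            return code
        # Feed the failing code and its error back so the next attempt can fix it.
        messages.append({"role": "assistant", "content": code})
        messages.append(
            {
                "role": "user",
                "content": f"The code failed to render:\n{error}\nPlease fix it.",
            }
        )
    return None
```

Keeping the model call and the renderer as plain callables means the loop can be exercised with stubs before any API key or container is involved.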

Our frontend is Next.js + Flask. We use Python as middleware between the Anthropic, OpenAI, Leonardo, and Docker SDK APIs, and we call our middleware from JS right in our React app.

We used Git for collaboration and a lot of ChatGPT for testing out prompts. We also relied heavily on example Manim projects to prompt GPT-4 with (for the few-shot prompting).

Challenges we ran into

Ensuring our Docker container could adeptly manage multiple incoming requests was difficult -- we developed a custom Flask application that uses the Docker SDK to run several rendering containers in parallel. The Flask application then handles reading the rendered file, serving it at static URLs for the frontend to access, and mounting/unmounting volumes for the containers.
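A rough sketch of the parallel rendering idea, assuming the Docker SDK for Python and a thread pool. The image name `manimcommunity/manim`, the `/manim` mount point, the `scene.py` file name, and the helper names are all assumptions for illustration; the Flask routes and static file serving are left out.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Callable, Dict, Tuple

IMAGE = "manimcommunity/manim"  # assumed image; not necessarily the one we used


def run_in_docker(job_dir: Path, scene: str) -> Tuple[bool, str]:
    """Render scene.py from job_dir in a throwaway container; return (ok, logs)."""
    import docker  # imported lazily so the rest of the sketch runs without the SDK
    from docker.errors import ContainerError

    client = docker.from_env()
    try:
        logs = client.containers.run(
            IMAGE,
            ["manim", "-ql", "scene.py", scene],
            # Mount the job directory so the container can read the code
            # and write the rendered video back to the host.
            volumes={str(job_dir.resolve()): {"bind": "/manim", "mode": "rw"}},
            working_dir="/manim",
            remove=True,
        )
        return True, logs.decode(errors="replace")
    except ContainerError as exc:
        err = exc.stderr
        text = err.decode(errors="replace") if isinstance(err, bytes) else str(err)
        return False, text


def render_all(
    jobs: Dict[str, Tuple[Path, str]],
    run: Callable[[Path, str], Tuple[bool, str]] = run_in_docker,
    workers: int = 4,
) -> Dict[str, Tuple[bool, str]]:
    """Render every job in parallel; map each job id to (ok, logs)."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {
            job_id: pool.submit(run, job_dir, scene)
            for job_id, (job_dir, scene) in jobs.items()
        }
        return {job_id: future.result() for job_id, future in futures.items()}
```

The `(ok, logs)` pair is what a retry loop would need: on failure, the captured error output can be sent back to the model as context for the next attempt.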

Another challenge was accurately prompting our large language models to output the results that we wanted -- we worked through several iterations of prompts and tests and engineered a custom solution to automatically reprompt GPT-4 if the code it generated was incorrect. This was very important: its knowledge cutoff is 2021, so it only knows Manim v0.10.0, while the current version is 0.17, so the code it wrote was often simply wrong. We experimented with several approaches to update its knowledge, such as retrieval-augmented generation or giving docs in the prompt, and settled on few-shot prompting (where we gave it several examples in the conversation history).
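Few-shot prompting through the conversation history can be sketched like this. The system prompt text, the function name, and the single example pair are placeholders made up for illustration; our real examples came from existing Manim projects.

```python
from typing import Dict, List, Tuple

# Placeholder system prompt; the wording is an assumption for this sketch.
SYSTEM = "You write Manim code. Follow the API style used in the examples."


def build_few_shot_messages(
    examples: List[Tuple[str, str]], request: str
) -> List[Dict[str, str]]:
    """Lay out (prompt, code) examples as prior chat turns, then the new request."""
    messages = [{"role": "system", "content": SYSTEM}]
    for prompt, code in examples:
        # Each example appears as if the model had already answered it correctly.
        messages.append({"role": "user", "content": prompt})
        messages.append({"role": "assistant", "content": code})
    messages.append({"role": "user", "content": request})
    return messages


# One illustrative example pair written against a recent Manim API.
EXAMPLES = [
    (
        "Draw a circle.",
        "from manim import *\n\n"
        "class Demo(Scene):\n"
        "    def construct(self):\n"
        "        self.play(Create(Circle()))\n",
    ),
]

messages = build_few_shot_messages(EXAMPLES, "Draw a square that rotates.")
```

Because the examples sit in the history as earlier assistant replies, the model tends to imitate their API usage instead of falling back on the older version it was trained on.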

Closing Note

AniText is not merely a tool; it is a commitment to ensuring that every person, irrespective of learning disabilities or challenges, has access to a world where learning is not defined by barriers but by possibilities, exploration, and joy. In a society where everyone's learning needs are as unique as they are, AniText stands as a beacon of inclusive, accessible, and enriched comprehension.

Built With

- anthropic

- claude

- docker

- flask

- gpt4

- html

- javascript

- manim

- openai

- python
