The inspiration for this project came from a simple but frustrating reality: modern application security tools are either too static for novel threats, or too naive to catch sophisticated attacks.

After years working in cybersecurity, I've watched countless organizations fall victim to attacks that happened in the critical window between vulnerability disclosure and patch deployment. Traditional WAFs rely on signature-based detection, essentially playing yesterday's game against tomorrow's threats. They wait for attacks to be documented, analyzed, and codified into rules before they can defend against them. By then, the damage is often already done.

The landscape shifted dramatically when I started observing a new pattern: AI-powered attacks were evolving faster than our defenses could adapt. Attackers began leveraging agentic AI systems and large language models to automate vulnerability exploitation at scale. These weren't just automated scanners running predefined scripts. They were intelligent agents capable of reading documentation, understanding context, generating polymorphic payloads, and adapting their techniques in real time based on defensive responses.

I realized we were facing an asymmetric warfare scenario. Attackers could iterate and test their AI-driven exploits in controlled environments, perfecting them before deployment. Defenders, meanwhile, were still relying on reactive approaches that required human analysis of each new attack vector. The time-to-detect and time-to-respond cycles were measured in days or weeks, while attackers operated in milliseconds.

The final catalyst came from a specific incident: I witnessed an AI-powered attack chain that used natural language processing to parse API documentation, identify potential injection points, and craft context-aware exploit payloads that bypassed multiple layers of traditional security controls. The entire reconnaissance-to-exploitation cycle took under three minutes. Traditional security tools didn't flag anything suspicious until the data exfiltration phase, and by then it was too late.

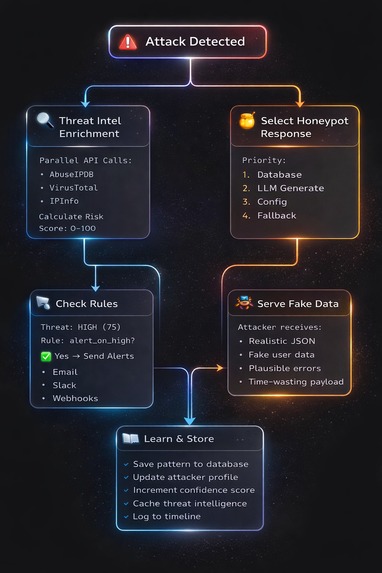

The solution became clear: fight AI with AI, add strategic deception to buy time, and continuously enrich our intelligence from public threat feeds. Instead of just blocking or allowing requests, this project would create a third option: engage the attacker in a sophisticated deception operation. Feed them realistic but fabricated data, waste their computational resources, and most importantly, learn from their techniques in real time.

This approach serves three critical functions. First, it provides active defense by analyzing threats at the speed of AI. Second, it introduces operational friction for attackers by making them question whether they've actually compromised the target or are trapped in a honeypot. Third, it generates actionable intelligence by correlating observed attack patterns with global threat intelligence, currently from AbuseIPDB and VirusTotal.
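To make the "third option" concrete, here is a minimal Go sketch of a three-way verdict. It is not the project's actual code: the `Verdict`, `Signals`, and `Decide` names, the two input scores, and every threshold are assumptions chosen for illustration. The idea is only that a request which looks hostile is routed to a decoy instead of being dropped, while a source already known to be bad is simply blocked.

```go
package main

import "fmt"

// Verdict is the outcome for one request. Deceive is the "third option"
// from the text above. All names here are hypothetical.
type Verdict int

const (
	Allow Verdict = iota
	Block
	Deceive
)

func (v Verdict) String() string {
	switch v {
	case Allow:
		return "allow"
	case Block:
		return "block"
	case Deceive:
		return "deceive"
	}
	return "unknown"
}

// Signals holds two assumed inputs for a single request.
type Signals struct {
	// AnomalyScore is an assumed 0.0 (benign) to 1.0 (hostile) score
	// produced by some request analyzer.
	AnomalyScore float64
	// ReputationScore is an assumed 0 to 100 value, such as an
	// abuse-confidence score returned by a threat feed.
	ReputationScore int
}

// Decide maps signals to a verdict. The thresholds are arbitrary
// placeholders, not tuned values.
func Decide(s Signals) Verdict {
	switch {
	case s.ReputationScore >= 90:
		// Source is already well known as malicious: block outright.
		return Block
	case s.AnomalyScore >= 0.7 || s.ReputationScore >= 50:
		// Suspicious but worth observing: serve fabricated data instead.
		return Deceive
	default:
		return Allow
	}
}

func main() {
	samples := []Signals{
		{AnomalyScore: 0.1, ReputationScore: 0},
		{AnomalyScore: 0.8, ReputationScore: 10},
		{AnomalyScore: 0.2, ReputationScore: 95},
	}
	for _, s := range samples {
		fmt.Printf("%+v -> %s\n", s, Decide(s))
	}
}
```

In a real deployment the `Deceive` branch would hand the request to a decoy backend and log the interaction for later correlation; that part is omitted here because the source does not describe how it is built.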

Built With

- abuseipdb

- gemini

- go

- ipinfo

- mailtrap

- postgresql

- slack

- twilio

- virustotal
