Inspiration

Assess.AI was born from a desire to simplify the academic grading process while enhancing student engagement. As education and technology continue to merge, grading can still be a labor-intensive and repetitive task, limiting the time educators have for in-depth student interactions. Our goal was to create a solution that lightens the load for graders and TAs by automating assessment tasks, allowing them to focus on the parts of teaching that foster deeper learning—like addressing student queries and supporting their academic growth.

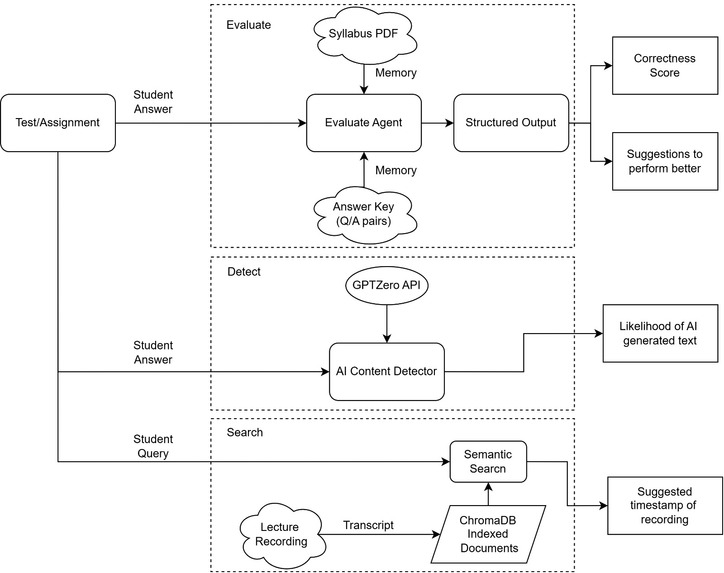

What it does

- Automated Similarity Evaluation: Compares each student’s answer with the ideal response provided by faculty and scores it on a 0-1 similarity scale, so every answer is graded against the same reference.

- AI Detection: Flags responses that appear to be AI-generated, helping maintain academic integrity.

- Constructive Feedback: Offers personalized suggestions for students to improve their answers for higher accuracy.

- Video Timestamp References: Directs students to specific timestamps in lecture videos that address the question’s topic, supporting in-depth understanding.

- ‘Ask a Query’ Feature: Allows students to submit queries directly to graders and TAs for additional help.
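
As a rough illustration of the 0-1 similarity scale described above, here is a minimal sketch of a cosine-similarity score. It is not the project's actual scoring code: `toy_embed` is a hypothetical bag-of-words stand-in for a real embedding model, and the clamping step is an assumption about how a raw cosine value (which can be negative for dense embeddings) might be mapped onto the 0-1 range.

```python
import math
from collections import Counter


def toy_embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors; 0.0 if either is empty."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def similarity_score(student_answer: str, ideal_answer: str) -> float:
    """Clamp the cosine similarity into [0, 1] (an assumed mapping, see lead-in)."""
    raw = cosine_similarity(toy_embed(student_answer), toy_embed(ideal_answer))
    return max(0.0, min(1.0, raw))
```

With a real embedding model in place of `toy_embed`, answers that are worded differently but mean the same thing would score closer to 1 than they do with word counts alone.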

How we built it

- Backend Development: We built the backend with FastAPI and integrated ChromaDB to store and retrieve embeddings. A Llama model, orchestrated through LangChain, evaluates student answers and produces similarity scores and suggestions.

- AI Integration: We leveraged GPTZero for AI detection, ensuring the system could identify AI-generated content and uphold academic integrity. Embedding models like SentenceTransformer helped us capture semantic meaning to evaluate student responses accurately.

- Frontend Interface: A ReactJS frontend allows students and graders to easily interact with the system. The platform is designed to display similarity scores, feedback, and timestamped lecture references, making information readily accessible.

- Data Handling: With PyMuPDF, we parsed course PDFs, extracting and storing necessary material for automated comparison. Using structured prompts, the system interacts with grading models to ensure suggestions are concise, helpful, and easy to understand.
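
The structured prompts mentioned above could look something like the following sketch. The template wording, the function names, and the post-processing step are all assumptions made for illustration; the two-line limit reflects the feedback length described under the challenges below.

```python
from string import Template

# Hypothetical prompt template; the project's real prompt wording is not shown in this writeup.
FEEDBACK_PROMPT = Template(
    "You are grading a short-answer question.\n"
    "Question: $question\n"
    "Reference answer: $reference\n"
    "Student answer: $student\n"
    "Give at most $max_lines lines of specific suggestions "
    "for improving the student answer."
)


def build_feedback_prompt(question: str, reference: str, student: str,
                          max_lines: int = 2) -> str:
    """Fill the template with one question, its reference answer, and a student answer."""
    return FEEDBACK_PROMPT.substitute(
        question=question.strip(),
        reference=reference.strip(),
        student=student.strip(),
        max_lines=max_lines,
    )


def trim_feedback(raw: str, max_lines: int = 2) -> str:
    """Keep only the first non-empty lines, in case the model ignores the limit."""
    lines = [line.strip() for line in raw.splitlines() if line.strip()]
    return "\n".join(lines[:max_lines])
```

Trimming the model output after the fact is a cheap safeguard: the prompt asks for brevity, and `trim_feedback` enforces it even when the model writes more.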

Challenges we ran into

- Balancing AI Precision and Practical Use: Designing a similarity score that accurately reflects understanding without over-penalizing minor deviations was a critical balancing act.

- AI Detection Sensitivity: We needed GPTZero to detect AI-written content without producing false positives, which would unfairly penalize students.

- User-Centric Feedback: We wanted the feedback system to be meaningful but not overly complex. Creating concise, useful suggestions within two lines required fine-tuning the language model and prompt structure.

- Scalability of Embeddings: Storing and querying embeddings for large datasets while maintaining system responsiveness was a technical hurdle that we overcame with ChromaDB’s efficient structure.
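
To make the embedding lookup concrete, here is a minimal sketch of nearest-neighbor retrieval over lecture segments, the kind of query a vector store answers when pointing students to a timestamp. It uses a brute-force scan over an in-memory list as a stand-in for ChromaDB; the `LectureChunk` type, the tiny 2-dimensional vectors, and the function names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class LectureChunk:
    """Hypothetical record: a lecture segment's timestamp and its embedding."""
    timestamp: str
    embedding: list[float]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two dense vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_timestamps(query: list[float], chunks: list[LectureChunk],
                     k: int = 1) -> list[str]:
    """Return timestamps of the k chunks most similar to the query embedding."""
    ranked = sorted(chunks, key=lambda c: cosine(query, c.embedding), reverse=True)
    return [c.timestamp for c in ranked[:k]]
```

A linear scan like this costs time proportional to the number of stored chunks per query, which is why a dedicated vector store becomes attractive as the course material grows.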

Accomplishments that we're proud of

Creating a fully automated grading system that eliminates the manual work in evaluation, significantly easing the workload for TAs and graders.

What we learned

- AI-Driven Assessment: We deepened our understanding of how AI can objectively evaluate student answers, not only comparing content but understanding intent and quality.

- Natural Language Processing (NLP): We learned how embedding models like SentenceTransformer can assess similarity between student and reference answers, applying NLP to practical scenarios.

- User Experience in EdTech: Building tools for both students and graders taught us the importance of clear, actionable feedback, which is crucial to a positive user experience in educational applications.

What's next for Assess.AI

- Providing more tailored feedback, such as specific tips or additional resources.

- Adding live Q&A sessions with TAs.

- Offering analytics to TAs and faculty on student performance trends for curriculum improvement.

- Adding plagiarism detection.

- Reading handwritten answers and giving feedback on them.

- Deploying to the cloud to improve scalability.

Built With

- chromadb

- fastapi

- github

- gptzero

- javascript

- langchain

- llm

- node.js

- python

- react
