Inspiration

We wanted to blend robotics, computer vision, and natural-language control into one playful platform. Self-balancing robots are already cool from a controls point of view. Aura-67 pushes that further by adding an on-board camera, real-time tracking, and an interface where you can talk to the robot and watch it act on your words.

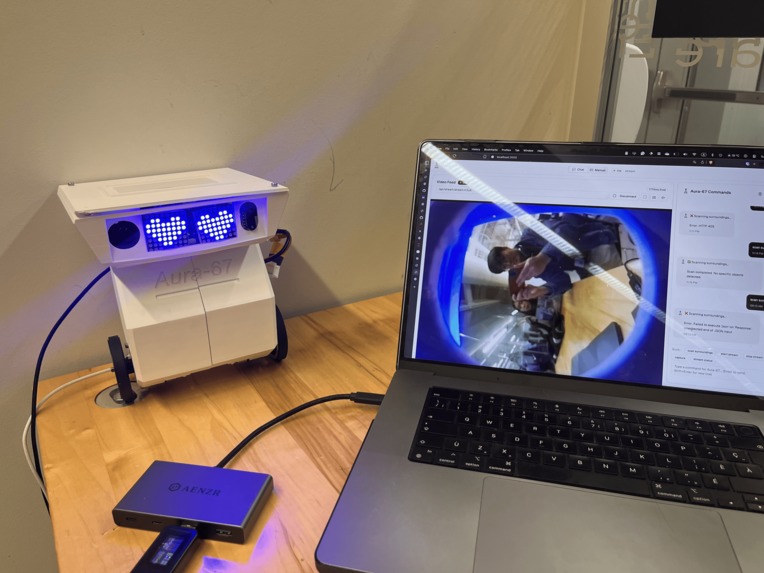

What it does

Aura-67 is a self-balancing robot you can control in two ways.

- Natural language. Say things like “move toward the red cup” or “what’s around me.” A language model interprets the request, the vision system finds the target, and the robot drives to the right place.

- Manual control. A web app gives joystick-style control, live video, and buttons for common actions like follow object, stop, and return to base.

Safety is built in: a time-of-flight (ToF) distance sensor in the head lets the robot slow down as it approaches an obstacle and stop before it hits one.
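
The slow-then-stop behavior can be sketched as a simple speed limiter. This is a minimal illustration, not our firmware: the function name `limit_speed` and the thresholds `stop_mm` and `slow_mm` are assumptions picked for the example, and it assumes the sensor faces forward so only forward motion is limited.

```python
def limit_speed(requested: float, distance_mm: float,
                stop_mm: float = 150.0, slow_mm: float = 600.0) -> float:
    """Scale a forward speed command using the ToF distance reading.

    Thresholds are illustrative assumptions, not measured values.
    """
    if requested <= 0.0:
        # Reversing or already stopped: the forward-facing sensor does not apply.
        return requested
    if distance_mm <= stop_mm:
        # Too close: hard stop.
        return 0.0
    if distance_mm >= slow_mm:
        # Far enough away: pass the command through unchanged.
        return requested
    # In between: ramp linearly from 0 (at stop_mm) to full speed (at slow_mm).
    scale = (distance_mm - stop_mm) / (slow_mm - stop_mm)
    return requested * scale
```

A linear ramp keeps the change in speed gradual, which matters on a balancing robot because an abrupt stop is itself a disturbance the balance loop has to absorb.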

How we built it

Hardware

- Raspberry Pi Zero 2 W in the head for camera capture, streaming, and vision/classification.

- ESP32-S3 in the body for motor control and balance.

- IMU/gyroscope for self-balancing.

- TOF distance sensor for proximity and collision avoidance.

Firmware and control

- C on ESP32 for motor drivers, PID balance loop, and motion primitives.

- Python on Raspberry Pi for camera pipeline, OpenCV processing, and target coordinates.
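
For readers new to balance loops, here is a minimal PID sketch. Our firmware is written in C on the ESP32; this Python version only illustrates the idea, and the class name `PID`, the gains, and the output limit are assumptions for the example rather than our tuned values.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook PID controller with a clamped output (illustrative only)."""
    kp: float
    ki: float = 0.0
    kd: float = 0.0
    out_limit: float = 1.0   # assumed normalized motor command range [-1, 1]
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        """Return a motor command from the tilt error; dt is in seconds (> 0)."""
        error = setpoint - measured
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        out = self.kp * error + self.ki * self.integral + self.kd * derivative
        # Clamp so the command never exceeds what the motor driver accepts.
        return max(-self.out_limit, min(self.out_limit, out))
```

In a balance loop, `setpoint` would be the upright tilt angle and `measured` the angle estimated from the IMU. Whether a negative output drives the wheels forward or backward depends on how the motors are wired.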

Perception and language

- OpenCV + TensorFlow/PyTorch for object detection/tracking and coordinate extraction.

- OpenAI API to turn natural-language commands into structured actions (goal, target color/object, mode).
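
One way to keep open-ended language safe is to validate whatever the model returns before anything reaches the motors. The sketch below assumes the model is asked to reply with a JSON object; the field names mirror the structure above (goal, target, color, mode), but the exact schema, the `ALLOWED_GOALS` set, and the `parse_action` helper are hypothetical, not our actual code.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Hypothetical whitelist of goals the robot is allowed to act on.
ALLOWED_GOALS = {"move_to", "follow", "describe", "stop", "return_to_base"}


@dataclass(frozen=True)
class Action:
    goal: str
    target: Optional[str] = None
    color: Optional[str] = None
    mode: str = "auto"


# Anything we cannot validate falls back to a stop.
STOP = Action(goal="stop")


def _str_or_none(value: object) -> Optional[str]:
    return value if isinstance(value, str) else None


def parse_action(llm_text: str) -> Action:
    """Turn the model's text reply into a validated Action, or STOP."""
    try:
        data = json.loads(llm_text)
    except json.JSONDecodeError:
        return STOP
    if not isinstance(data, dict):
        return STOP
    goal = data.get("goal")
    if not isinstance(goal, str) or goal not in ALLOWED_GOALS:
        return STOP
    return Action(
        goal=goal,
        target=_str_or_none(data.get("target")),
        color=_str_or_none(data.get("color")),
        mode=_str_or_none(data.get("mode")) or "auto",
    )
```

For example, `parse_action('{"goal": "move_to", "target": "cup", "color": "red"}')` yields a `move_to` action for a red cup, while malformed or unknown replies become a stop.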

Web app and backend

- Next.js + React (TypeScript) UI with live feed and controls.

- FastAPI service that receives user input, calls the LLM, triggers the vision routine, and sends motion commands to the ESP32 over a lightweight API/serial bridge.
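
To give a feel for what a serial bridge to the ESP32 might carry, here is a sketch of a tiny binary motion frame. The frame layout (header byte, two signed 16-bit values, one checksum byte), the `HEADER` value, and the function names are all assumptions made up for this example; our actual wire format may differ.

```python
import struct
from typing import Tuple

HEADER = 0xA5  # hypothetical start-of-frame marker


def _to_i16(x: float) -> int:
    """Map a normalized value in [-1, 1] to a signed 16-bit integer."""
    x = max(-1.0, min(1.0, x))
    return int(round(x * 32767))


def encode_motion(forward: float, turn: float) -> bytes:
    """Pack a motion command as: header, forward, turn, checksum (6 bytes)."""
    payload = struct.pack("<Bhh", HEADER, _to_i16(forward), _to_i16(turn))
    checksum = sum(payload) & 0xFF
    return payload + bytes([checksum])


def decode_motion(frame: bytes) -> Tuple[float, float]:
    """Inverse of encode_motion; raises ValueError on a bad frame."""
    if len(frame) != 6 or frame[0] != HEADER:
        raise ValueError("bad frame length or header")
    if sum(frame[:5]) & 0xFF != frame[5]:
        raise ValueError("checksum mismatch")
    _, forward, turn = struct.unpack("<Bhh", frame[:5])
    return forward / 32767, turn / 32767
```

A fixed-length frame with a checksum lets the receiver drop corrupted commands instead of acting on them, which is cheap insurance when a bad byte could tip the robot over.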

Data flow (high level)

- User types or clicks in the web app.

- Backend turns intent into an action plan.

- Pi captures frames; vision finds the target and outputs coordinates.

- Backend computes a movement vector and sends it to the ESP32.

- ESP32 runs balance and motor control to execute the move, while TOF enforces a safe stop.
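
The "coordinates to movement vector" step can be sketched as below. It assumes the vision stage reports the target's horizontal center and bounding-box width in pixels. The function name `movement_vector`, the 640-pixel frame width, and the gains are illustrative assumptions, not our tuned values.

```python
from typing import Tuple


def movement_vector(cx: float, box_w: float, frame_w: int = 640,
                    want_box_frac: float = 0.4,
                    max_forward: float = 0.5,
                    max_turn: float = 1.0) -> Tuple[float, float]:
    """Return (forward, turn) commands from a detected target.

    cx:     x coordinate of the target center, in pixels
    box_w:  width of the target bounding box, in pixels
    """
    # Turn toward the target: offset from image center, normalized to [-1, 1].
    offset = (cx - frame_w / 2) / (frame_w / 2)
    turn = max(-1.0, min(1.0, offset)) * max_turn

    # Drive forward until the box fills the desired fraction of the frame,
    # using apparent size as a rough stand-in for distance.
    frac = box_w / frame_w
    closeness = frac / want_box_frac
    forward = max(0.0, min(1.0, 1.0 - closeness)) * max_forward
    return forward, turn
```

With these defaults, a centered target 64 pixels wide gives a forward command of 0.375 and no turn, and the forward command reaches zero once the box is 256 pixels wide.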

Challenges we ran into

- Driver carnage. We burned three motor drivers during bring-up, which slowed integration.

- Tight clock. With only 36 hours, the hardware setbacks left little time for full end-to-end testing.

- Systems glue. Getting LLM intent, OpenCV, Pi control, and embedded balance loops to cooperate took careful interface design.

Accomplishments we’re proud of

- A working stack where frontend, backend, Pi vision, and ESP32 firmware can run in parallel and talk cleanly.

- Demonstrated natural-language to motion: text command → vision → coordinates → stable movement.

- A modular design that we can keep extending after the hackathon.

What we learned

- Treat hardware as a dependency with failure modes. Have backups and test harnesses early.

- How to translate open-ended language into safe embedded actions.

- Practical tricks for real-time CV on small hardware like the Pi Zero 2 W.

What’s next for Aura-67

- Replace the blown drivers and finish full closed-loop balance with the vision + language stack active.

- Improve detection and tracking for multiple objects and low light.

- Add multi-step tasks like “patrol the kitchen, then return to base.”

- Polish the web app and ship a setup guide so others can build Aura-67.

- Explore the “home companion” path: simple security patrols, pet-play mode, and missed-notification alerts.

Try it out

- GitHub (org): https://github.com/HTN-Aura-67

- Demo video: https://www.youtube.com/shorts/rSfp2yne1f4

Team

Prisca Chien, Jiucheng Zang, Alex Xu, Hadi Ahmed
