Inspiration

Over the past year, two of our group members have had the misfortune of several emergency hospital visits. While there, they experienced first-hand the staffing shortages affecting hospitals across the United States, waiting over an hour to receive care. Our group wanted to investigate how technology could help alleviate this issue, since shorter waits would save time for millions of people worldwide and improve their time-to-care.

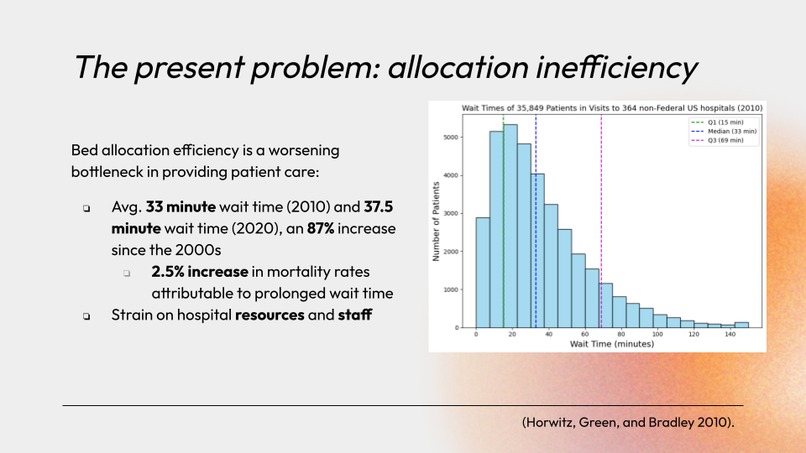

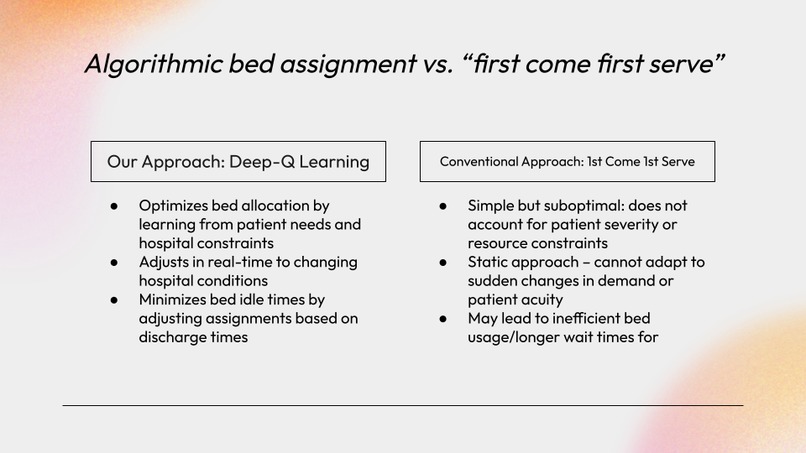

Based on our research and past experiences, we realized that hospitals tend to reserve beds to accommodate patients with extreme conditions. However, this can lead to a buildup of waiting-room patients who are not eligible for these reserved beds and must wait for care elsewhere. Although this practice is well-founded, we believe we can optimize patient-to-bed allocations by statistically analyzing patient conditions and hospital bed capabilities. This lets us prioritize both high throughput and access to care for patients who need immediate attention. Patients with more stable conditions, like our fellow group members, could then receive care faster without compromising the safety of patients with more extreme conditions.

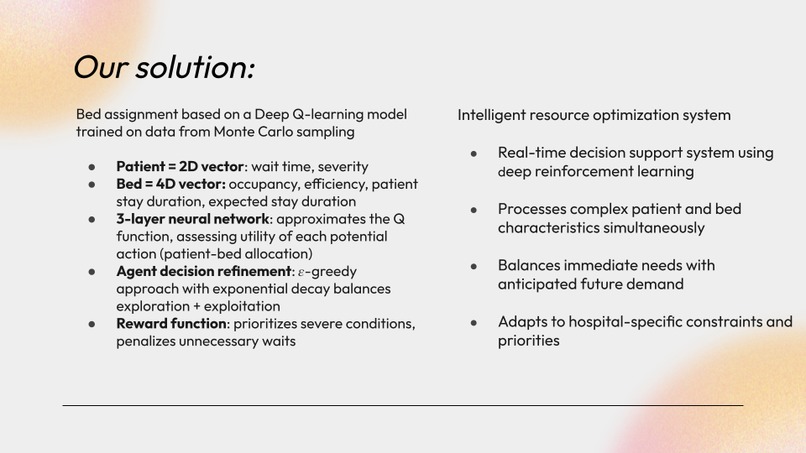

Driven by our commitment to improving healthcare delivery, our team developed a patient-to-bed allocation model using deep-Q networks trained on realistic synthetic data generated through Monte Carlo sampling. This project grew out of our shared interests: one team member will be working in a medical center this summer, and two others are pursuing pre-med tracks. Through many discussions with healthcare professionals, we have come to understand that efficient resource allocation remains one of the most challenging aspects of hospital management. It directly affects patient outcomes and staff burnout. Our AI solution aims to address this pain point by dynamically optimizing patient flow and bed assignments in real time.

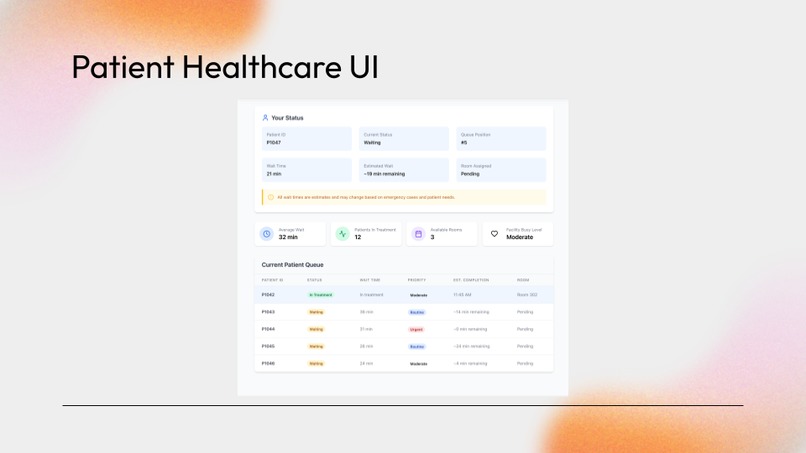

To help patients as well as administrators, our system includes a webapp that displays statistics people in the waiting room can access. This gives patients clear bounds on what to expect. It eases anxiety, improves transparency, makes the fairness of AI-driven decisions visible, and fosters trust between patients and their caregivers.

What it does

Our project consists of three main components:

- A hospital simulation – This models real-world hospital behavior, including patient arrivals, discharges, and waiting room dynamics. It serves as the environment in which we train and evaluate our RL agent.

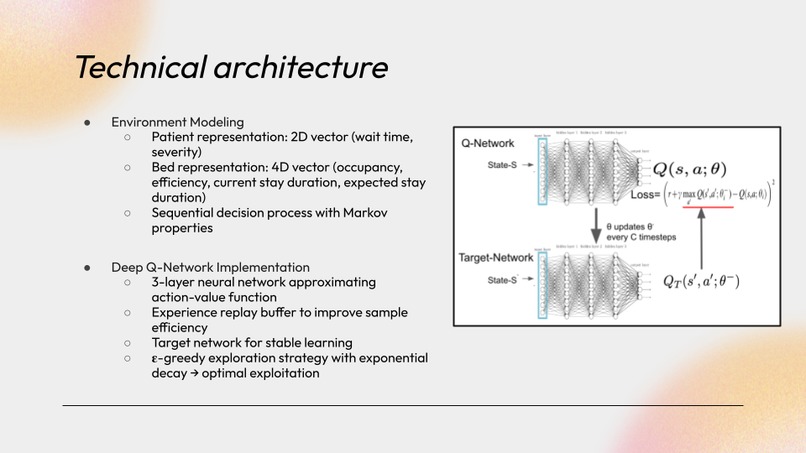

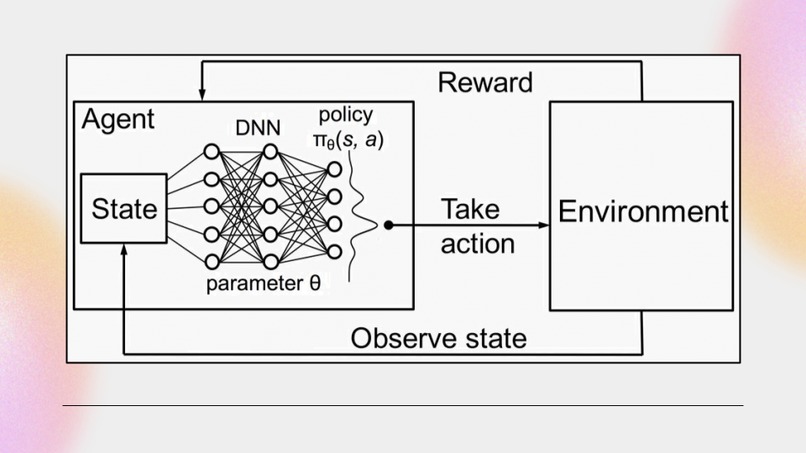

- A reinforcement learning agent – Using a deep-Q network (DQN), this agent takes in hospital state data (patient conditions, bed status, and bed capabilities) and chooses an action: either assigning a patient to a specific bed or placing them in the waiting queue.

- A patient webapp portal – A webapp that shows patients their care status. It includes when they should expect care, their room assignment, and the hospital's overall status, to improve transparency and algorithmic fairness.
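
The agent's action space can be made concrete with a toy simulation step. With N beds, the agent picks one of N + 1 actions: one per bed, plus a "wait" action. The sketch below rests on our own simplifying assumptions. The names `Patient`, `Bed` and `HospitalEnv` are hypothetical, as are the integer severity scale and the reward values. Arrivals and discharges are also left out. The reward is one illustrative way to trade throughput against severity-weighted waiting, not bedQ's actual reward function.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Patient:
    severity: int  # hypothetical scale: 1 (stable) to 3 (critical)
    wait_steps: int = 0


@dataclass
class Bed:
    capability: int  # hypothetical: highest severity this bed can treat
    occupant: Optional[Patient] = None


class HospitalEnv:
    """Toy environment: actions 0..len(beds)-1 assign the patient at the head of
    the queue to that bed; action len(beds) leaves them in the waiting queue."""

    def __init__(self, beds: List[Bed]):
        self.beds = beds
        self.queue: List[Patient] = []

    @property
    def wait_action(self) -> int:
        return len(self.beds)

    def step(self, action: int) -> float:
        reward = 0.0
        if self.queue and action != self.wait_action:
            bed, patient = self.beds[action], self.queue[0]
            if bed.occupant is not None or bed.capability < patient.severity:
                reward -= 5.0  # hypothetical penalty for an invalid assignment
            else:
                bed.occupant = self.queue.pop(0)
                reward += 1.0  # hypothetical bonus for admitting a patient
        # Everyone still waiting costs reward, scaled by how sick they are.
        for waiting in self.queue:
            waiting.wait_steps += 1
            reward -= 0.1 * waiting.severity
        return reward
```

Consider a critical patient sent to a low-capability bed. The step is penalized and the patient keeps waiting. Choosing the critical-care bed instead admits them and earns the throughput bonus.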

How we built it

We began by constructing a realistic hospital simulation, guided by research on daily patient flow and case distributions. We used Monte Carlo sampling to simulate incoming patients with weighted medical conditions to reflect real-world distributions. To visualize performance, we built a dashboard comparing our RL agent to a FIFO (First-In, First-Out) baseline.
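
Monte Carlo patient generation and the FIFO baseline can each be sketched in a few lines. This sketch makes several assumptions of its own. Arrival counts per time step are Poisson-distributed, and the three condition categories and their weights are hypothetical placeholders for research-derived data. The simplest FIFO baseline also ignores bed capability. `poisson`, `sample_arrivals` and `fifo_assign` are illustrative names, not bedQ's code.

```python
import math
import random
from collections import deque
from typing import Deque, List, Tuple

# Hypothetical case mix; in practice these weights would come from research data.
CONDITIONS = ["minor", "moderate", "critical"]
WEIGHTS = [0.6, 0.3, 0.1]


def poisson(rng: random.Random, lam: float) -> int:
    """Sample a Poisson-distributed count (Knuth's multiplication method)."""
    threshold, k, p = math.exp(-lam), 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def sample_arrivals(rng: random.Random, mean_per_step: float) -> List[str]:
    """One Monte Carlo draw of new patients for a single simulated time step."""
    count = poisson(rng, mean_per_step)
    return rng.choices(CONDITIONS, weights=WEIGHTS, k=count)


def fifo_assign(queue: Deque[str], free_beds: List[int]) -> List[Tuple[str, int]]:
    """Baseline policy: hand out free beds strictly in arrival order."""
    assignments = []
    while queue and free_beds:
        assignments.append((queue.popleft(), free_beds.pop(0)))
    return assignments
```

Seeding the `random.Random` instance makes each simulated day reproducible. The RL agent and the FIFO baseline can therefore be compared on identical arrival streams.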

Our DQN architecture was built entirely from scratch. We implemented a base neural network and, as we ran into challenges, iteratively added features: a replay buffer, target networks, and an epsilon-greedy policy. We also created a custom reward function to guide the DQN toward faster convergence and better decisions.
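
The replay buffer, target network, and epsilon-greedy policy are standard DQN components. The sketch below shows them in plain Python under our own assumptions. The Q-network is abstracted as any callable that maps a state to a list of action values. The names `ReplayBuffer`, `epsilon_greedy`, `td_targets` and `sync_target` are hypothetical, and so is the dict-of-weights representation. None of this is bedQ's code.

```python
import copy
import random
from collections import deque
from typing import Callable, List, Sequence, Tuple

# (state, action, reward, next_state, done)
Transition = Tuple[Sequence[float], int, float, Sequence[float], bool]


class ReplayBuffer:
    """Bounded store of past transitions; the oldest are evicted first."""

    def __init__(self, capacity: int):
        self.data: deque = deque(maxlen=capacity)

    def push(self, transition: Transition) -> None:
        self.data.append(transition)

    def sample(self, rng: random.Random, batch_size: int) -> List[Transition]:
        # Uniform random minibatches break the correlation between consecutive steps.
        return rng.sample(list(self.data), batch_size)

    def __len__(self) -> int:
        return len(self.data)


def epsilon_greedy(q_values: Sequence[float], epsilon: float, rng: random.Random) -> int:
    """Explore with probability epsilon, otherwise take the highest-valued action."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])


def td_targets(batch: List[Transition],
               target_q: Callable[[Sequence[float]], Sequence[float]],
               gamma: float) -> List[float]:
    """y = r + gamma * max_a' Q_target(s', a'); terminal transitions do not bootstrap."""
    return [reward if done else reward + gamma * max(target_q(next_state))
            for _state, _action, reward, next_state, done in batch]


def sync_target(step: int, online_params, target_params, sync_every: int):
    """Every sync_every steps, refresh the frozen target weights from the online ones."""
    if step % sync_every == 0:
        return copy.deepcopy(online_params)
    return target_params
```

In a training loop, the online network would be regressed toward `td_targets` on minibatches drawn from the buffer. Meanwhile, `sync_target` keeps those targets fixed between syncs, which usually stabilizes learning.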

We designed and tested multiple representations of the state space, iterating on both patient and bed state vectors. Throughout training, we monitored rewards closely and tuned key hyperparameters, such as learning rate, epsilon decay, episode length, and buffer size, to refine the model's performance. Once the model was trained, we saved its weights and implemented an inference script to run live demonstrations.
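
Patient and bed state vectors can be combined into a fixed-size DQN input in several ways; one plausible way is sketched below. All of its specifics are illustrative assumptions, not the representation bedQ settled on:

- the features (one-hot condition, normalized wait time, one-hot bed capability, occupancy flag);
- the cap on visible waiting patients;
- the 240-minute normalization.

```python
from typing import List, Tuple

CONDITIONS = ["minor", "moderate", "critical"]  # hypothetical categories


def one_hot(index: int, size: int) -> List[float]:
    vec = [0.0] * size
    vec[index] = 1.0
    return vec


def patient_vector(condition: str, wait_minutes: float, max_wait: float = 240.0) -> List[float]:
    # One-hot condition followed by wait time clipped and scaled to [0, 1].
    return one_hot(CONDITIONS.index(condition), len(CONDITIONS)) + [min(wait_minutes / max_wait, 1.0)]


def bed_vector(capability: str, occupied: bool) -> List[float]:
    # One-hot of the most severe condition the bed supports, plus an occupancy flag.
    return one_hot(CONDITIONS.index(capability), len(CONDITIONS)) + [1.0 if occupied else 0.0]


def encode_state(waiting: List[Tuple[str, float]],
                 beds: List[Tuple[str, bool]],
                 max_waiting: int = 5) -> List[float]:
    """Fixed-length input: the first max_waiting patients (zero-padded), then every bed."""
    patient_dim = len(CONDITIONS) + 1
    state: List[float] = []
    for i in range(max_waiting):
        state += patient_vector(*waiting[i]) if i < len(waiting) else [0.0] * patient_dim
    for capability, occupied in beds:
        state += bed_vector(capability, occupied)
    return state
```

Padding keeps the input length constant as the queue grows or shrinks, which a fixed-width network requires. In this sketch, patients beyond `max_waiting` are simply invisible to the agent.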

To develop the patient portal, we considered what information would have eased our anxieties about when we would receive care while we were in the waiting room: queue status, room assignment, estimated wait time, and hospital usage statistics. We then designed an inviting and intuitive user interface to display this information.
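
A bound-based estimate suits the estimated-wait field, since the goal is to give patients clear bounds on what to expect. This sketch is our own illustration rather than the portal's implementation. `wait_bounds` and `occupancy_pct` are hypothetical names, and the bounds hold only under the assumptions listed in the docstring. In particular, the DQN may admit a more urgent patient first, which would push the real wait past the upper bound.

```python
from typing import Tuple


def wait_bounds(ahead: int, suitable_beds: int,
                min_service: int, max_service: int) -> Tuple[int, int]:
    """Rough (low, high) wait in minutes for a patient with `ahead` people in front.

    Assumes every suitable bed is occupied now, each treatment lasts between
    min_service and max_service minutes, and nobody is placed ahead of the patient.
    The patient is admitted at the (ahead + 1)-th bed turnover.
    """
    rounds = ahead // suitable_beds
    low = rounds * min_service          # best case: turnovers happen back to back
    high = (rounds + 1) * max_service   # each bed frees up at least once per max_service
    return low, high


def occupancy_pct(occupied: int, total: int) -> int:
    """Share of beds in use, as a whole-number percentage for display."""
    return round(100 * occupied / total)
```

For example, take a patient with five people ahead, two suitable beds, and treatments of 30 to 90 minutes. They would see a range of 60 to 270 minutes.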

Challenges we ran into

A significant issue we ran into was getting our model to converge during training. Because our simulation is complex, the model had great difficulty learning optimal actions over the large state space. We spent several hours tuning hyperparameters to encourage exploration and meaningful learning until we obtained good final performance.
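
In a DQN, exploration is usually controlled by an epsilon-decay schedule. A common choice is exponential decay with a floor. The sketch below assumes that form with hypothetical default values, since the exact schedule is not recorded here. The helper `episodes_until` (also hypothetical) solves the schedule for how long the agent stays exploratory. This makes it easier to match the decay rate to the number of training episodes.

```python
import math


def epsilon_at(episode: int, eps_start: float = 1.0,
               eps_end: float = 0.05, decay_rate: float = 0.01) -> float:
    """Exponentially decay exploration from eps_start toward the floor eps_end."""
    return eps_end + (eps_start - eps_end) * math.exp(-decay_rate * episode)


def episodes_until(target: float, eps_start: float = 1.0,
                   eps_end: float = 0.05, decay_rate: float = 0.01) -> int:
    """First episode at which epsilon_at(...) is at or below target (needs eps_end < target)."""
    return math.ceil(math.log((eps_start - eps_end) / (target - eps_end)) / decay_rate)
```

With these defaults, epsilon falls to 0.1 after 295 episodes. If training ran for far fewer episodes, the agent would spend nearly all of its time exploring, which is one way slow convergence can show up.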

Accomplishments that we're proud of

We are especially proud that we developed an entire reinforcement learning framework from scratch, covering the model architecture, the hospital simulation, and training-loop tuning.

We are also proud that we developed a solution that could one day improve hospital performance in the real world. Given our personal experience with hospitals, this issue holds significant meaning for us and our families.

What's next for bedQ

Our next steps for bedQ involve making our solution more robust to sudden changes in incoming patient conditions, such as an influx of patients with similar conditions after a local disaster. We would also like our system to consider more bed and patient state parameters. Finally, we would like to optimize the allocation of other hospital resources beyond beds, such as X-ray rooms and CT scanners.
