Inspiration

In the complex creator economy, a single, seemingly small mistake can have serious consequences. Many streamers, educators, and other professionals live in fear of accidentally revealing a password, an API key, a phone number, or a private document during a live broadcast. A split-second error can lead to doxxing, financial loss, and a breach of trust with their audience. Existing solutions are manual and reactive, often requiring streamers to use clumsy overlays or simply "be more careful." We knew there had to be a better way: a proactive, intelligent safety net that protects creators without their having to think about it.

What it does

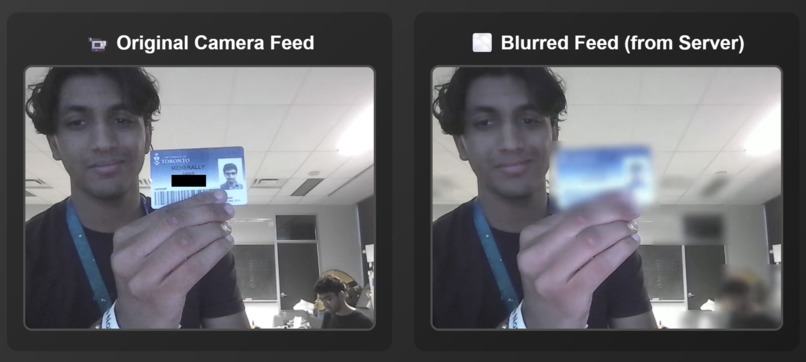

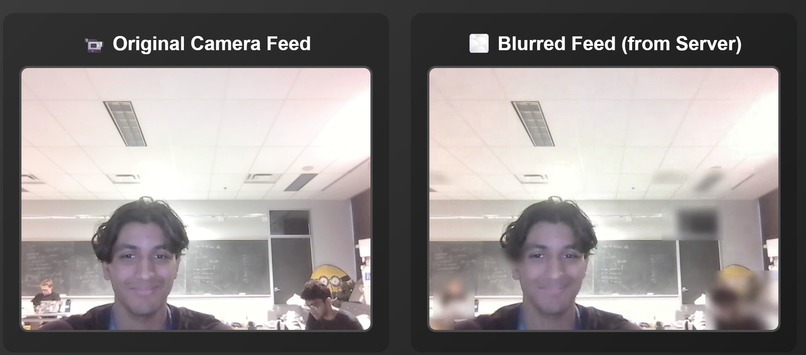

Blurr is a dynamic redaction service that acts as a real-time security guard for every bit of content passing through a creator's video feed. Blurr is designed to be easily integrable with streaming platforms like Twitch, YouTube, and Discord, detecting and blurring sensitive information before it ever reaches the audience. For the streamer, it's as simple as toggling on a switch in their broadcast settings.

- Smart Redaction: Intelligently identifies and blurs passwords, phone numbers, emails, API keys, private documents, and more.

- Minimal Performance Impact: All computer vision processing can be deployed in the cloud, with nearly zero impact on a creator's local CPU/GPU usage. This allows creators to dedicate their PC's full power to creating their content.

- Seamless Integration: Designed to function as a simple "Privacy Mode" toggle within the streaming platform's native dashboard. No downloads, no complicated setup.
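
As a rough illustration of the text-pattern side of Smart Redaction, the sketch below classifies strings (for example, text returned by an OCR step) with regular expressions. The pattern set, the category names, and the `find_sensitive` helper are assumptions made for this example and are not Blurr's actual rules.

```python
import re

# Illustrative patterns only (assumptions, not Blurr's real detection rules).
SENSITIVE_PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    # North American style numbers such as 555-123-4567 or (555) 123 4567.
    "phone": re.compile(
        r"(?<!\d)(?:\+?1[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}(?!\d)"
    ),
    # Keys that start with a common prefix followed by a long token.
    "api_key": re.compile(r"\b(?:sk|pk|api|key)[-_][A-Za-z0-9_-]{16,}\b"),
}


def find_sensitive(text):
    """Return (category, start, end) for every match in text, ordered by start."""
    hits = []
    for category, pattern in SENSITIVE_PATTERNS.items():
        for match in pattern.finditer(text):
            hits.append((category, match.start(), match.end()))
    return sorted(hits, key=lambda hit: hit[1])


if __name__ == "__main__":
    line = "Contact me at jane@example.com or 555-123-4567"
    for category, start, end in find_sensitive(line):
        print(category, line[start:end])
```

Returning character offsets rather than just a yes/no answer is what would let a later step map each match back to a screen region and blur only that part of a line.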

How we built it

Blurr is architected as a highly scalable, vision-based system for real-time video processing.

- Core Technology Stack: The core functionality runs on a Python backend that uses state-of-the-art computer vision models such as YOLOv8 for object detection (ID cards, documents) and OpenCV for OCR with text-pattern recognition.

- Video Rendering: We use WebRTC to transport video feeds between our service and the desired endpoint. This peer-to-peer protocol is critical for minimizing the latency introduced by our processing hop.

- Dynamic Demo Platform: Platforms can test our service through our React- and WebRTC-based in-browser meeting tool. Our tool supports two users and has both webcam and screen share capabilities.
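
To make the redaction step concrete, here is a minimal, dependency-free sketch of what happens once a detector has produced bounding boxes for a frame. It is not our production code: the `Frame` and `Box` types and the `redact` function are hypothetical, the frame is a plain grayscale grid, and flattening each box to its mean intensity stands in for the real blur, which an OpenCV-based backend would more likely apply with a proper blur filter.

```python
from typing import List, Tuple

Frame = List[List[int]]          # grayscale pixels, frame[y][x] in 0..255
Box = Tuple[int, int, int, int]  # (x, y, width, height); hypothetical detector output


def redact(frame: Frame, boxes: List[Box]) -> Frame:
    """Return a copy of frame with every box flattened to its mean intensity."""
    out = [row[:] for row in frame]  # never modify the caller's frame
    height = len(frame)
    width = len(frame[0]) if frame else 0
    for x, y, w, h in boxes:
        # Clamp the box to the frame so a sloppy detection cannot raise IndexError.
        x0, y0 = max(0, x), max(0, y)
        x1, y1 = min(width, x + w), min(height, y + h)
        if x0 >= x1 or y0 >= y1:
            continue  # box lies entirely outside the frame
        pixels = [frame[r][c] for r in range(y0, y1) for c in range(x0, x1)]
        mean = sum(pixels) // len(pixels)
        for r in range(y0, y1):
            for c in range(x0, x1):
                out[r][c] = mean
    return out


if __name__ == "__main__":
    frame = [[0, 10, 20, 30], [40, 50, 60, 70], [80, 90, 100, 110]]
    for row in redact(frame, [(1, 0, 2, 2)]):
        print(row)
```

Only the pixels inside a box change, which is the property that lets a single line of a document be hidden while the rest stays readable.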

Challenges we ran into

- Low Latency: Our biggest challenge was building a low-latency computer vision pipeline that detects all critical video segments in real time with minimal processing delay. We tried several different OCR solutions before arriving at our final optimized OpenCV solution.

- Keeping Privacy/Security Above All: We considered many different scenarios in which different types of data would need to be identified, from license plates to API keys. We designed our system so that new protection requirements can be added through simple updates. Additionally, to protect the creator's data, the system is ephemeral: we process video streams entirely in memory without ever writing data to disk.

- Ease of Use: Creating a powerful tool that feels invisible was a major hurdle. Our goal was a true "set it and forget it" experience. This meant spending a significant portion of our time abstracting away all the complexity of video routing and AI processing behind a single toggle switch.

Accomplishments that we're proud of

- Zero-Impact Security: We successfully created a powerful security tool that, once scaled to the cloud, would impose almost no performance cost on the end user. This is a game-changer for streamers who cannot afford to sacrifice frames.

- Intelligent, Content-Aware Blurring: Blurr is more than a simple filter. It demonstrates a contextual understanding of the screen, capable of redacting a single line of text in a document while leaving the rest perfectly readable.

What we learned

- Real-Time Computer Vision Requires Architectural Trade-offs: Achieving sub-100ms latency for complex OCR and object detection meant carefully balancing model accuracy with inference speed. We discovered that ensemble approaches using lightweight models often outperform single heavy models in streaming contexts.

- Privacy-First Design Shapes Every Technical Decision: Building ephemeral, in-memory processing isn't just a security feature—it fundamentally changes how you architect data pipelines, memory management, and error handling. Every component must be designed to fail safely without data persistence.

- Context-Aware Redaction is Exponentially Harder Than Detection: Simply finding text on a screen is straightforward; understanding whether a detected string is a sensitive phone number or just an ordinary piece of text requires sophisticated scene understanding and temporal analysis across video frames.
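
One simple form of temporal analysis across frames can be sketched as follows: keep a region redacted for a few frames after it was last detected, so that a single missed detection does not briefly expose the content. The `DetectionHold` class, its `hold_frames` parameter, and the exact-match comparison of boxes are assumptions for illustration; a real pipeline would probably match boxes by overlap rather than equality.

```python
class DetectionHold:
    """Keep a region redacted for `hold_frames` frames after its last detection."""

    def __init__(self, hold_frames=5):
        self.hold_frames = hold_frames
        self._last_seen = {}  # box -> index of the frame where it was last detected

    def update(self, frame_index, detected_boxes):
        """Record this frame's detections and return every box that should be blurred."""
        for box in detected_boxes:
            self._last_seen[box] = frame_index
        # Forget boxes that have not been seen within the hold window.
        self._last_seen = {
            box: seen
            for box, seen in self._last_seen.items()
            if frame_index - seen <= self.hold_frames
        }
        return sorted(self._last_seen)


if __name__ == "__main__":
    hold = DetectionHold(hold_frames=2)
    detections_per_frame = [[(10, 10, 50, 20)], [], [], []]
    for index, boxes in enumerate(detections_per_frame):
        print(index, hold.update(index, boxes))
```

The trade-off is deliberate: holding a blur slightly too long costs a moment of readability, while dropping it too early can leak the very string the system exists to hide.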

What's next for Blurr

- Scaling through the cloud: Moving the computing to a cloud service, deployed at the edge across global data centers, would mean even lower latency for creators. By distributing processing nodes closer to users worldwide, we can reduce round-trip times to under 50ms while enabling horizontal scaling during peak hours.

- LLM-powered customization: Integrating large language models to enable natural language configuration of privacy rules would be a game-changing feature. Creators could simply prompt "block any crypto wallet addresses" or "hide all URLs except YouTube links" and the LLM would dynamically generate custom detection patterns. This AI-driven approach would allow for highly personalized privacy profiles that adapt to each creator's specific content and risk tolerance, going far beyond our current predefined detection categories.
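
A sketch of what a generated rule might look like for the prompt "hide all URLs except YouTube links" is shown below. This feature is not built yet, so everything here is hypothetical: the `PrivacyRule` structure, its `block` and `allow` fields, and the two regular expressions are assumptions about one possible output format, and no LLM is called in the example.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PrivacyRule:
    """A hypothetical rule: redact what `block` matches unless `allow` also matches."""

    name: str
    block: re.Pattern
    allow: Optional[re.Pattern] = None

    def matches(self, text: str) -> List[str]:
        """Return the substrings of text that this rule says should be redacted."""
        return [
            m.group(0)
            for m in self.block.finditer(text)
            if not (self.allow and self.allow.search(m.group(0)))
        ]


# What a model might emit for: "hide all URLs except YouTube links".
URLS_EXCEPT_YOUTUBE = PrivacyRule(
    name="hide all URLs except YouTube links",
    block=re.compile(r"https?://\S+"),
    allow=re.compile(r"^https?://(?:www\.)?(?:youtube\.com|youtu\.be)/"),
)

if __name__ == "__main__":
    chat = "see https://youtube.com/watch?v=abc and https://bank.example/login"
    print(URLS_EXCEPT_YOUTUBE.matches(chat))
```

Keeping the rule as plain data (a block pattern plus an optional allow pattern) would let generated rules be reviewed and tested before they are applied to a live stream.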
