Inspiration

Editing long-form video is a major time sink for creators, who have to manually chop their footage into dozens of platform-specific clips. With attention spans shrinking and demand for multi-platform content skyrocketing, we set out to build Clicked.AI, an AI assistant that automates the grunt work of editing so creators can focus on storytelling, not tedious cuts.

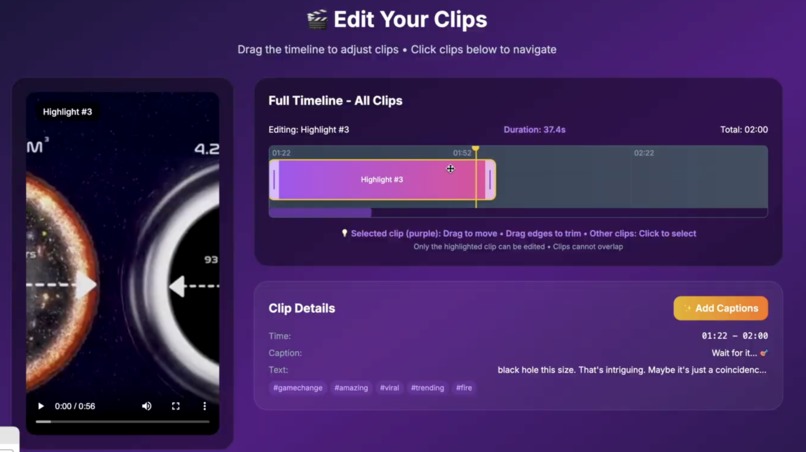

What it does

Clicked.AI takes a single raw video and:

- Denoises & transcribes the audio (FFmpeg + Whisper)

- Identifies topic shifts and enforces length bounds via GPT-4 prompts

- Generates titles, captions & hashtags tailored to each platform

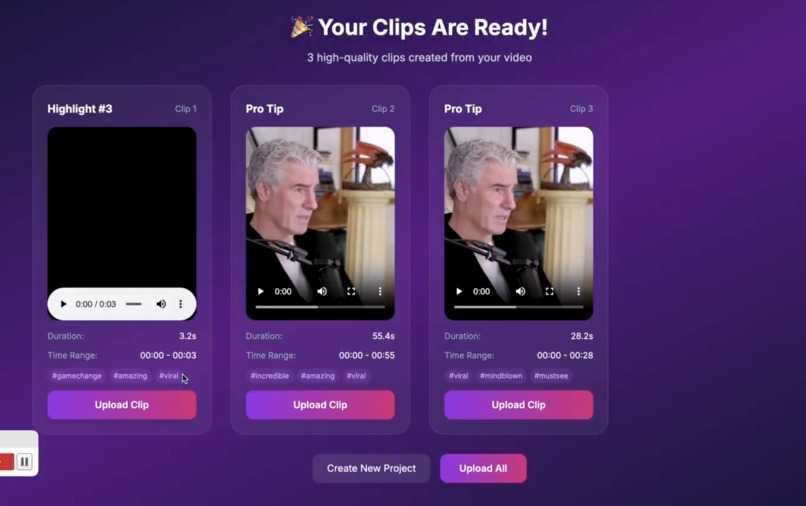

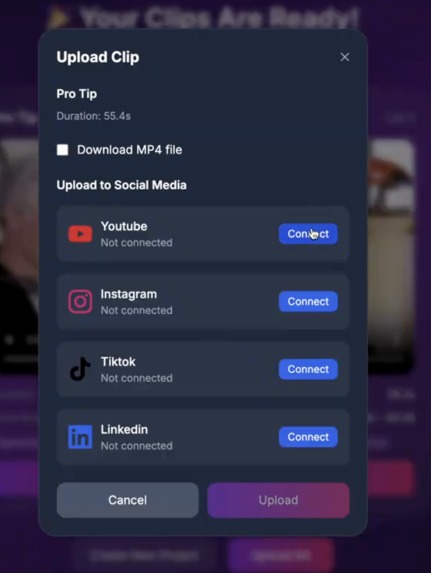

- Outputs a JSON of ready-to-publish clips for TikTok, Instagram Reels, LinkedIn highlights, YouTube Shorts, etc.
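To make the output step more concrete, here is a minimal sketch of how a list of clips could be checked against per-platform length bounds and serialized to JSON. The `Clip` fields, the `within_bounds` and `clips_to_json` helpers, and the bounds in the usage note are all hypothetical, chosen for illustration; they are not the project's actual schema or limits.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Clip:
    """One suggested clip. Field names are illustrative assumptions."""
    platform: str
    start: float  # seconds from the start of the source video
    end: float
    title: str
    caption: str
    hashtags: list[str] = field(default_factory=list)

    @property
    def duration(self) -> float:
        return self.end - self.start


def within_bounds(clip: Clip, bounds: dict[str, tuple[float, float]]) -> bool:
    """Return True if the clip's duration fits its platform's (min, max) seconds."""
    shortest, longest = bounds[clip.platform]
    return shortest <= clip.duration <= longest


def clips_to_json(clips: list[Clip], bounds: dict[str, tuple[float, float]]) -> str:
    """Drop clips that violate the bounds and serialize the rest."""
    kept = [asdict(c) for c in clips if within_bounds(c, bounds)]
    return json.dumps({"clips": kept}, indent=2)
```

For example, `clips_to_json(clips, {"tiktok": (15, 60)})` would keep only TikTok clips between 15 and 60 seconds; those numbers are placeholders rather than real platform limits.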

How we built it

- Backend: Python 3.11 scripts orchestrating FFmpeg, Whisper, PyDub, and the OpenAI API

- Segmentation: Compressed, time-stamped transcripts fed into GPT-4 with custom prompts

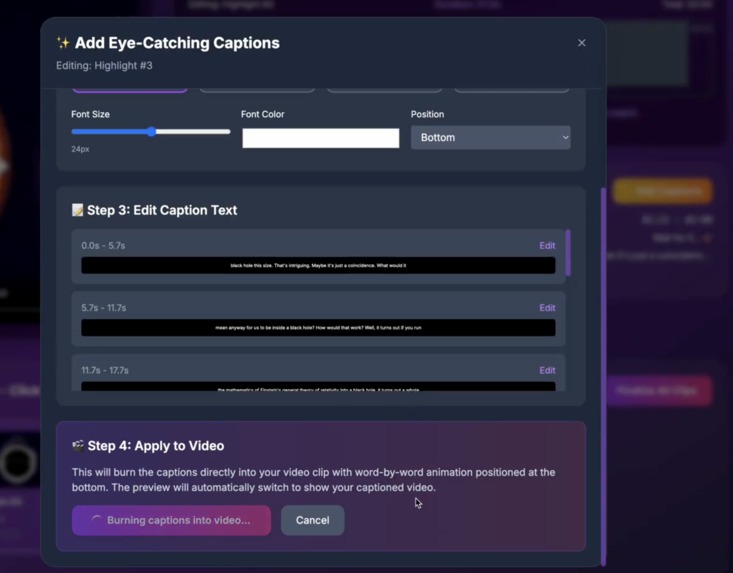

- Captioning: JSON-formatted GPT-4 calls to produce catchy titles and platform-optimized captions

- CLI tool: `reel_suggester.py` glues it all together, with one command to process a video and generate multi-platform clips

- Deployment: Containerized with Docker on DigitalOcean, with S3 for storage and CORS-configured playback
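As an illustration of the FFmpeg step in this pipeline, the sketch below builds a command that strips the video stream, applies FFmpeg's `afftdn` denoising filter, and writes 16 kHz mono audio for transcription. The helper name `build_audio_prep_command` and the sample-rate and channel choices are assumptions, not taken from the project's code.

```python
from pathlib import Path


def build_audio_prep_command(ffmpeg: str, video: Path, wav: Path) -> list[str]:
    """Build an FFmpeg argv list that extracts denoised mono audio.

    Assumption: 16 kHz mono WAV is used as the transcription input.
    """
    return [
        ffmpeg,
        "-y",              # overwrite the output file if it exists
        "-i", str(video),  # input video
        "-vn",             # drop the video stream
        "-af", "afftdn",   # FFT-based audio denoiser with default settings
        "-ac", "1",        # mono
        "-ar", "16000",    # 16 kHz sample rate
        str(wav),
    ]
```

The resulting list can be passed to `subprocess.run(cmd, check=True)`, which avoids shell quoting issues with file names.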

Challenges we ran into

- 🎬 FFmpeg Hell: Fighting binary restrictions in locked-down environments—solved with dynamic FFmpeg detection and fallback paths.

- 🧠 AI Optimization Nightmare: Balancing Whisper model speed vs accuracy, cutting prompt tokens to avoid rate limits, and iterating our engagement scoring dozens of times.

- ⚡ Real-Time Scaling: Processing large videos in memory-constrained containers, building streaming pipelines, and keeping API calls responsive with background jobs.

- ☁️ Deployment Complexity: Orchestrating frontend/backend containers, configuring S3 CORS for cross-domain video playback, and maintaining parity between local and cloud.

Accomplishments that we're proud of

- 🎯 End-to-End Prototype: In just a weekend, built a fully automated pipeline from raw video URL to multi-platform clips with zero manual editing.

- 🚀 Robust Segmentation: Developed transcript-compression and prompt templates that reliably yield platform-appropriate segments without blowing past API limits.

- 🔧 Production-Ready CLI & Architecture: One-command tool, secure containerization, and scalable cloud deployment handling concurrent processing seamlessly.

- 🎬 Caption Innovation: JSON-formatted prompts that produce perfect titles, captions, and hashtags every time—no post-edit required.

What we learned

- Prompt Engineering is Everything: Crafting concise, context-rich prompts was more critical than tweaking model parameters.

- Audio Cleanup Matters: FFmpeg’s `afftdn` filter plus confidence-based Whisper filtering drastically improved transcript quality.

- Transcript Compression: Bucketing transcripts into ≤50 entries kept GPT calls under rate and token limits while preserving context.

- DevOps Realities: Containerizing video pipelines and managing large media files taught us key lessons in streaming, memory management, and CORS.
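The confidence filtering and transcript bucketing described above can be sketched as follows. This assumes segments shaped like the dictionaries the open-source Whisper package returns (`start`, `end`, `text`, `avg_logprob`, `no_speech_prob`). The threshold values and both function names are illustrative assumptions, not the project's tuned settings.

```python
import math


def filter_confident(
    segments: list[dict],
    min_avg_logprob: float = -1.0,    # assumed threshold
    max_no_speech_prob: float = 0.6,  # assumed threshold
) -> list[dict]:
    """Keep segments Whisper was reasonably confident about."""
    return [
        s for s in segments
        if s.get("avg_logprob", 0.0) >= min_avg_logprob
        and s.get("no_speech_prob", 0.0) <= max_no_speech_prob
    ]


def bucket_segments(segments: list[dict], max_entries: int = 50) -> list[dict]:
    """Merge consecutive segments so at most max_entries buckets remain."""
    if not segments:
        return []
    # Each bucket holds `size` consecutive segments (the last may hold fewer).
    size = math.ceil(len(segments) / max_entries)
    buckets = []
    for i in range(0, len(segments), size):
        group = segments[i:i + size]
        buckets.append({
            "start": group[0]["start"],
            "end": group[-1]["end"],
            "text": " ".join(s["text"].strip() for s in group),
        })
    return buckets
```

Each bucket keeps the start time of its first segment and the end time of its last, so timestamps returned by the model can still be mapped back onto the source video.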

What’s next for Clicked.AI

- 🎥 Visual Segmentation: Integrate shot-boundary and CLIP-based topic detection so cuts respect on-screen changes.

- 🤖 Learning from Popular Reels: Train a lightweight ranking model on high-engagement Instagram Reels to learn real viral pacing.

- 🌐 Web UI & Mobile App: Move from CLI to a user-friendly web dashboard and native iOS/Android apps for on-the-go editing.

- 📈 Analytics & Scheduling: Build creator dashboards for performance metrics, post-scheduling, and ROI tracking.

- 🔗 Expanded Platform Support: Add Snapchat Spotlight, Pinterest Idea Pins, and custom aspect-ratio exports for every channel.

Built With

- ffmpeg

- python

- react

- typescript

- whisper
