Project Story — CodeBlue

About the Project

CodeBlue is an AI-powered emergency intake and triage support system designed to assist overwhelmed emergency departments and hospital wards. Traditional triage relies almost entirely on what patients report at check-in, but some of the most dangerous medical events, such as choking, sudden collapse, or acute chest pain, can occur silently while patients wait, or during the night when hospitals simply don't have the staff to monitor every room. CodeBlue addresses this gap by combining structured patient intake, grounded medical reasoning, and real-time computer vision into a single, unified triage workflow.

Inspiration

Emergency waiting rooms are high-risk environments. Staff are multitasking, patients are anxious, and subtle signs of deterioration can be missed. We were motivated by a simple question:

What if the waiting room itself could help detect emergencies?

Rather than replacing clinical judgment, we wanted to build a system that acts as a second pair of eyes and ears, continuously monitoring for signs of distress and escalating care when seconds matter.

How We Built It

CodeBlue is built around a dual-channel architecture:

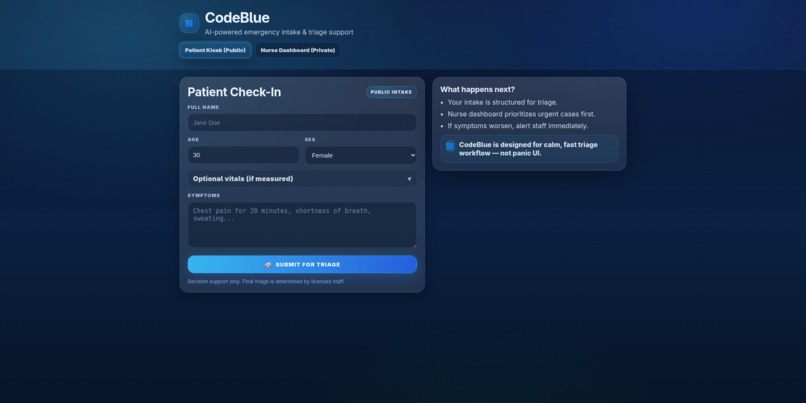

1. Intelligent Intake (Text → Clinical Priority)

Patients check in using a public kiosk interface, where they provide basic demographics and describe their symptoms in free text. When the form is submitted, the frontend sends the data to a Flask backend, which triggers a Retrieval-Augmented Generation (RAG) pipeline.

We indexed the official Emergency Severity Index (ESI) Handbook into a vector database (ChromaDB) and used Gemini embeddings and Gemini Flash to reason over the retrieved sections. This allows the system to assign an ESI level (1–5) and generate a short, nurse-facing medical summary grounded in real clinical guidelines rather than generic language model intuition.
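The grounding step can be illustrated with a minimal sketch. It assumes the handbook excerpts have already been retrieved from the vector store, and it leaves out the model call itself. The function names, prompt wording, and the `ESI: <level>` reply format are hypothetical, not taken from the CodeBlue codebase.

```python
import re
from typing import List, Optional


def build_triage_prompt(symptoms: str, guideline_chunks: List[str]) -> str:
    """Combine retrieved handbook excerpts with the patient's free-text symptoms."""
    context = "\n\n".join(
        f"[Excerpt {i}] {chunk}" for i, chunk in enumerate(guideline_chunks, start=1)
    )
    return (
        "Using only the ESI Handbook excerpts below, assign an ESI level from "
        "1 (most urgent) to 5 (least urgent) and write a short summary for the nurse.\n\n"
        f"{context}\n\n"
        f"Patient description: {symptoms}\n\n"
        "Answer in the form 'ESI: <level>' followed by the summary."
    )


def parse_esi_level(model_reply: str) -> Optional[int]:
    """Extract the ESI level from the reply; return None if it is missing or out of range."""
    match = re.search(r"ESI:\s*([1-5])\b", model_reply)
    return int(match.group(1)) if match else None
```

Returning `None` for an unparseable reply lets the caller fall back to manual triage instead of silently guessing a level.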

We used MediaPipe pose-tracking models with tuned positional parameters to recognize cases that need immediate attention, such as choking, chest pain, head trauma, and falls, and to display them on the nurse dashboard.

We used Auth0 for sign-in and sign-out on the nurses' dashboard, adding a layer of protection for patient information.

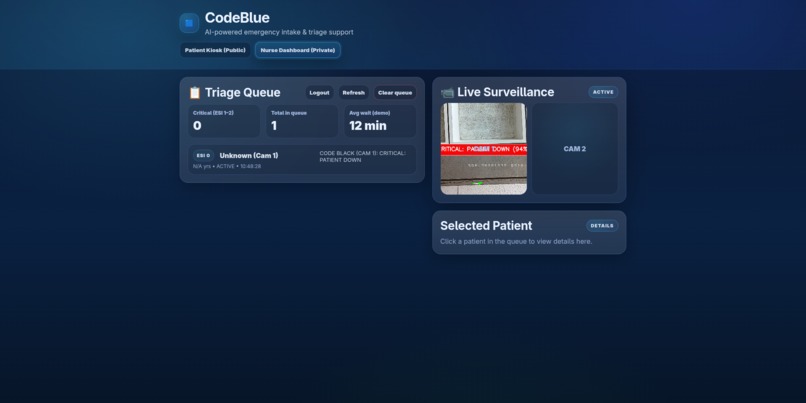

2. Visual Sentinel (Pose → Emergency Detection)

In parallel, CodeBlue runs continuous visual monitoring using two live camera streams. Each camera operates in its own background thread and uses MediaPipe Pose to extract skeletal landmarks. Instead of training a black-box classifier, we apply pure geometric reasoning on these landmarks.

We use shoulder width as a dynamic scale reference, allowing all distance thresholds to be expressed as ratios. This makes the system robust to distance and camera placement. Using this approach, we detect:

- Choking (both hands near the neck)

- Chest pain (gestures resembling Levine’s sign, a clenched fist held over the chest)

- Falls or collapse (head position below hips or sudden downward velocity)

- Headache (hand near head above shoulder level)

Detected events are visually overlaid with skeletons and alert banners, making the system’s behavior fully explainable.
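The ratio-based checks can be sketched in a few lines. This is a simplified illustration covering only the choking and head-below-hips rules. It assumes landmarks arrive as a dictionary of normalized `(x, y)` points with `y` growing downward, approximates the neck as the midpoint of the shoulders, and uses a threshold value that is purely hypothetical.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]

# Hypothetical threshold: both wrists within half a shoulder width of the neck.
CHOKING_RATIO = 0.5


def distance(a: Point, b: Point) -> float:
    return math.hypot(a[0] - b[0], a[1] - b[1])


def midpoint(a: Point, b: Point) -> Point:
    return ((a[0] + b[0]) / 2, (a[1] + b[1]) / 2)


def detect_events(landmarks: Dict[str, Point]) -> List[str]:
    """Return the names of the distress gestures detected in a single frame."""
    shoulder_width = distance(landmarks["left_shoulder"], landmarks["right_shoulder"])
    if shoulder_width == 0:
        return []  # degenerate pose; no usable scale reference

    events = []
    neck = midpoint(landmarks["left_shoulder"], landmarks["right_shoulder"])

    # Choking: both hands near the neck, measured in shoulder widths.
    left_ratio = distance(landmarks["left_wrist"], neck) / shoulder_width
    right_ratio = distance(landmarks["right_wrist"], neck) / shoulder_width
    if left_ratio < CHOKING_RATIO and right_ratio < CHOKING_RATIO:
        events.append("choking")

    # Fall or collapse: head below hips (larger y means lower in the image).
    hips = midpoint(landmarks["left_hip"], landmarks["right_hip"])
    if landmarks["nose"][1] > hips[1]:
        events.append("fall")

    return events
```

Because every distance is divided by the shoulder width, the same pose gives the same result whether the person stands near the camera or far from it.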

3. Code Black Override

When a critical visual event is detected, CodeBlue automatically triggers a “Code Black”. This bypasses the normal triage flow by inserting a patient with priority 0 at the top of the queue and attaching a snapshot of the event. This ensures immediate attention and gives staff visual evidence of why the alert was raised.
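One way to model this override is a priority queue in which ESI levels 1 to 5 are ordinary priorities and Code Black uses priority 0. The sketch below is an assumption about the data structure, not the project's actual implementation. The class name, method names, and entry fields are hypothetical.

```python
import heapq
import itertools
from typing import Optional, Tuple

CODE_BLACK_PRIORITY = 0  # sorts ahead of every ESI level (1-5)


class TriageQueue:
    def __init__(self) -> None:
        self._heap = []
        # Monotonic counter keeps arrival order stable among equal priorities.
        self._arrival = itertools.count()

    def add_patient(self, name: str, esi_level: int) -> None:
        entry = {"name": name, "snapshot": None}
        heapq.heappush(self._heap, (esi_level, next(self._arrival), entry))

    def code_black(self, camera_id: int, snapshot_path: Optional[str]) -> None:
        """Insert a priority-0 entry with the snapshot that triggered the alert."""
        entry = {"name": f"Code Black (camera {camera_id})", "snapshot": snapshot_path}
        heapq.heappush(self._heap, (CODE_BLACK_PRIORITY, next(self._arrival), entry))

    def next_patient(self) -> Tuple[int, dict]:
        priority, _, entry = heapq.heappop(self._heap)
        return priority, entry
```

A Code Black entry added after several intake patients is still returned first, since 0 is lower than any ESI level.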

Challenges We Faced

One of the biggest challenges was ensuring robustness and explainability under tight time constraints. MediaPipe pose models are not thread-safe, so we had to carefully isolate each camera in its own thread and instantiate separate vision models.
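The isolation pattern looks roughly like the sketch below. `StubPoseModel` is a hypothetical stand-in for a real pose model so the example runs without a camera or MediaPipe installed. The important part is that each worker constructs its own model inside its own thread and never shares it.

```python
import threading
from typing import Dict, List


class StubPoseModel:
    """Stand-in for a pose model that must only be used from one thread."""

    def __init__(self) -> None:
        self._owner = threading.get_ident()

    def process(self, frame: str) -> dict:
        # Fail loudly if the model is ever called from a different thread.
        assert threading.get_ident() == self._owner, "model shared across threads"
        return {"frame": frame, "landmarks": []}


def camera_worker(camera_id: int, frames: List[str], results: Dict[int, list]) -> None:
    model = StubPoseModel()  # created inside the thread, one per camera
    for frame in frames:
        results[camera_id].append(model.process(frame))


def run_cameras(frames_by_camera: Dict[int, List[str]]) -> Dict[int, list]:
    # Each worker appends only to its own list, so no lock is needed here.
    results: Dict[int, list] = {cid: [] for cid in frames_by_camera}
    threads = [
        threading.Thread(target=camera_worker, args=(cid, frames, results), daemon=True)
        for cid, frames in frames_by_camera.items()
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```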

Another challenge was scale invariance: a choking gesture looks very different depending on how far a person is from the camera. We solved this by using shoulder-width ratios as a dynamic ruler instead of fixed pixel thresholds.

Finally, streaming live video reliably to a browser without complex infrastructure was difficult. We opted for streaming over HTTP, which proved simple, stable, and well-suited for a hackathon environment.
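The write-up does not say which HTTP streaming format was used. One common low-infrastructure option is multipart MJPEG, where each JPEG frame is sent as one part of a never-ending response. The sketch below assumes that format and shows only how the response body would be framed. The boundary name and function name are hypothetical.

```python
from typing import Iterable, Iterator

BOUNDARY = b"frame"  # hypothetical boundary name


def mjpeg_chunks(jpeg_frames: Iterable[bytes]) -> Iterator[bytes]:
    """Wrap each encoded JPEG frame as one part of a multipart HTTP response."""
    for jpeg in jpeg_frames:
        header = (
            b"--" + BOUNDARY + b"\r\n"
            + b"Content-Type: image/jpeg\r\n"
            + b"Content-Length: " + str(len(jpeg)).encode("ascii") + b"\r\n\r\n"
        )
        yield header + jpeg + b"\r\n"
```

In a Flask route, a generator like this would typically be returned as `Response(mjpeg_chunks(frames), mimetype="multipart/x-mixed-replace; boundary=frame")`, which a browser can display directly in an `<img>` tag.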

What We Learned

This project reinforced several key lessons:

- Geometry can outperform heavy ML models for well-defined physical gestures while also cutting processing latency.

- RAG works well for SOP-critical domains like healthcare, where reasoning must be grounded in official protocols.

- Simple, well-structured architectures can often outperform complex systems under real-world constraints.

What’s Next

Future improvements include multi-frame confirmation to reduce false positives, additional visual distress patterns (such as respiratory distress or seizure-like motion), real authentication for the nurse dashboard, and edge deployment for offline hospital environments. We also plan to integrate voice-based intake and patient re-identification to better track individuals throughout their visit.

CodeBlue demonstrates how real-time, explainable AI can augment emergency care, catching what humans might miss when every second counts.
