Inspiration

When our team first met at the beginning of Hack the North, we each brought our own ideas and aspirations for the event. After debating for over two hours through the opening ceremony and dinner, we realized that everyone had worked with visual art or filmmaking in one way or another. We stumbled on this topic while sharing thoughts on the classic Harry Potter movies, and realized that we all enjoy watching movies and TV shows. Some of us had done animation, 3D design, UI design, and drawing, while others had worked with video editing. We started thinking about the problems the film industry and movie directors face. One of the most prevalent issues that can stop a piece of work from reaching the big screen is the time, money, and resources needed to make CGI scenes.

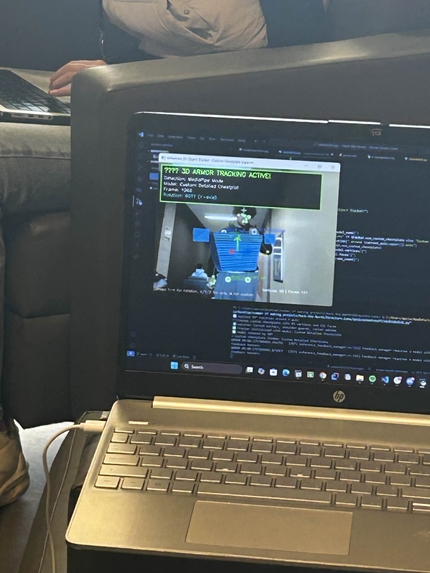

What it does

While there is no way around the effort of making high-quality scenes, there is a way for a team to experiment more easily with their ideas and sketches. This is why we developed Director's Lens, a tool designed to turn a 2D sketch into a 3D mesh and cast it onto a person's body. Director's Lens helps teams visualize their ideas and experiment with different options before spending large amounts of time and money on high-quality assets.

How we built it

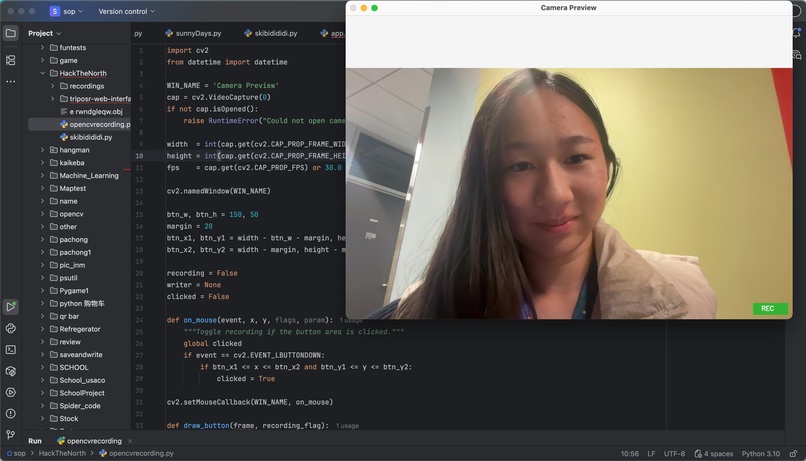

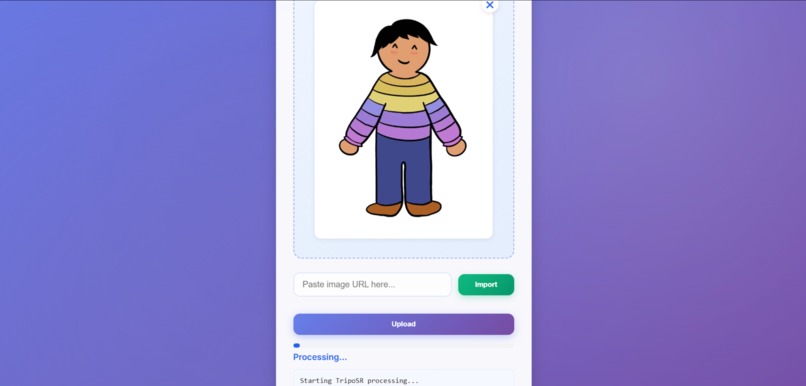

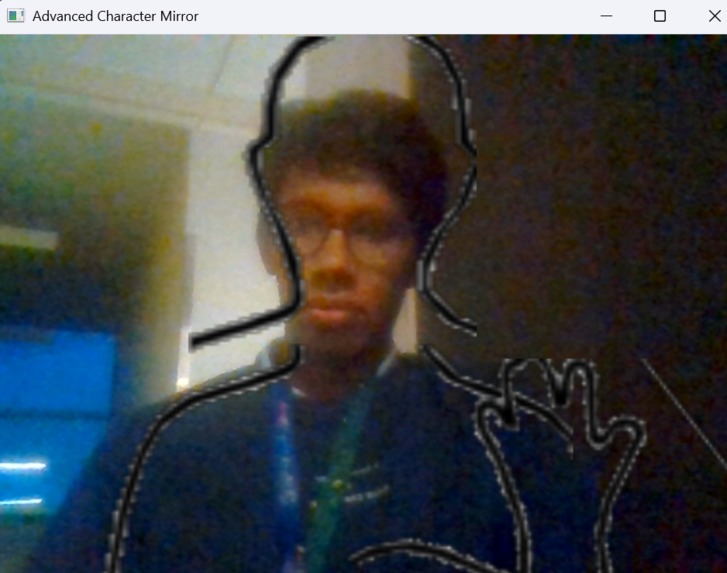

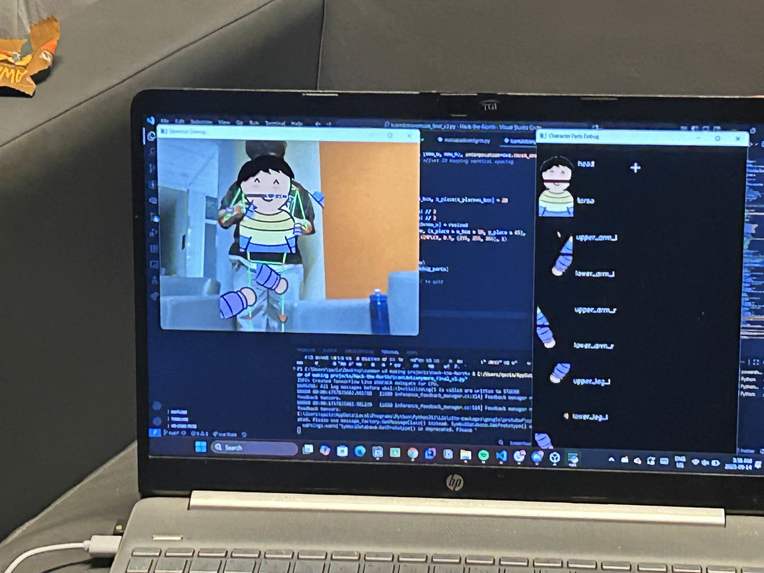

To make this product a reality, our team built a Windows-oriented Flask web app that lets a user upload a 2D image, calls TripoSR (via a PowerShell script) to generate a 3D mesh, and then serves the resulting OBJ file and textures for download. With this mesh, we used the Python library OpenCV to stick each moving section onto specific limbs in the video. The 3D model then follows the person as they perform various actions, so that teams can get a general idea of how their sketch looks in motion. Our app also provides a recording function, so teams can film a moving person with the mesh on them for further analysis. To make the process more user-friendly, the 2D-to-3D conversion is wrapped in a Flask UI that can be run directly on any Windows device, and the final recorded video is automatically downloaded into the user's default folder.
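To give a rough idea of how an overlay can be attached to a limb, here is a minimal sketch rather than our actual code. It assumes that a pose tracker already supplies two joint positions in pixel coordinates (for example a shoulder and an elbow); the names `LimbPlacement`, `place_on_limb`, and `overlay_scale` are made up for this illustration.

```python
import math
from typing import NamedTuple, Tuple

Point = Tuple[float, float]  # (x, y) in pixels, y grows downward as in image coordinates


class LimbPlacement(NamedTuple):
    """Where and how to draw an overlay so that it lines up with one limb."""
    center: Point     # midpoint between the two joints
    angle_deg: float  # direction from joint_a to joint_b, measured from the +x axis
    length: float     # distance between the two joints in pixels


def place_on_limb(joint_a: Point, joint_b: Point) -> LimbPlacement:
    """Compute the center, angle and length of the segment joint_a -> joint_b."""
    ax, ay = joint_a
    bx, by = joint_b
    dx, dy = bx - ax, by - ay
    return LimbPlacement(
        center=((ax + bx) / 2.0, (ay + by) / 2.0),
        angle_deg=math.degrees(math.atan2(dy, dx)),
        length=math.hypot(dx, dy),
    )


def overlay_scale(limb_length: float, layer_length: float, padding: float = 1.0) -> float:
    """Scale factor that stretches a layer of layer_length pixels along the limb.

    padding > 1.0 makes the layer slightly longer than the limb (hypothetical knob).
    """
    if layer_length <= 0:
        raise ValueError("layer_length must be positive")
    return padding * limb_length / layer_length


# Example: upper arm hanging straight down from shoulder (100, 200) to elbow (100, 300).
placement = place_on_limb((100, 200), (100, 300))
scale = overlay_scale(placement.length, layer_length=50)
```

The resulting center, angle, and scale could then be passed to OpenCV calls such as `cv2.getRotationMatrix2D` and `cv2.warpAffine` to draw the layer on each frame. The sign of the angle would need checking against OpenCV's rotation convention, since image coordinates have the y axis pointing down.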

Challenges we ran into

The making of this project was full of successes, but we also experienced our fair share of hardships. One considerable challenge was that one of our teammates had to leave for 12 hours to take her SAT, which meant we were down a person at the beginning of the event. However, because everyone had planned carefully the night before, we were able to start quickly with an action plan. Another challenge was placing a 3D mesh onto a moving person. We wanted to provide the most realistic and accurate representation of a costume on a real person, which includes rotating the torso area virtually to follow the rotation of the person's shoulders. Because laptop and USB cameras provide no depth information, this would involve very complex mathematics and still leave a wide margin of error. Since we didn't have an efficient solution to this problem, we decided to focus on the larger factors, such as arm and leg movement, which, after analyzing large fight scenes, we found is where the audience's primary focus would be. In the very late hours, manipulating the 3D models was still not working, so our team resorted to sticking 2D layers onto a moving person for demonstration purposes.
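The torso problem can be illustrated with a small sketch. This is not something we implemented; it is a simplified model that assumes the shoulders rotate about a vertical axis, the person stays at a fixed distance from the camera, and perspective effects are ignored. The function name `torso_yaw_candidates` is hypothetical.

```python
import math
from typing import Tuple


def torso_yaw_candidates(apparent_width: float, frontal_width: float) -> Tuple[float, float]:
    """Estimate torso yaw in degrees from how narrow the shoulders look in the image.

    apparent_width: current pixel distance between the two shoulders.
    frontal_width:  pixel distance when the person faces the camera head-on.

    Under the simplified model, apparent_width = frontal_width * cos(yaw),
    so a single 2D camera cannot tell a left turn from a right turn:
    both +yaw and -yaw produce the same shoulder width.
    """
    if frontal_width <= 0:
        raise ValueError("frontal_width must be positive")
    # Clamp so that tracking noise cannot push the ratio outside acos's domain.
    ratio = max(0.0, min(1.0, apparent_width / frontal_width))
    yaw = math.degrees(math.acos(ratio))
    return (yaw, -yaw)


# Shoulders appearing half as wide as when facing the camera:
left_or_right = torso_yaw_candidates(50, 100)
```

In this model, shoulders that appear half as wide correspond to a turn of about 60 degrees in either direction, and the camera image alone does not say which. Changes in distance to the camera would also alter the apparent width, which is part of why the error margin is so wide without depth data.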

Accomplishments that we're proud of

We are very proud of how we resolved each small challenge that came our way. A huge accomplishment came when our team member working on the 2D-to-3D conversion successfully generated a 3D mesh from a 2D image. This was the basis of our entire project, and seeing this step completed in a relatively short time was extremely rewarding. Sticking 2D images onto a torso with OpenCV also went well, and we could effectively track a body's position with each of its arms and legs marked. Merging the recording system with the OpenCV detection was another big success, because we can now record a person together with the visual overlays.

What we learned

Throughout this process, we expanded our knowledge of live image detection from a camera, worked with tools such as OpenCV and TripoSR, and built a user interface with Flask. It was also a novel experience to make an application that spans three different Python versions and two operating systems run together seamlessly. While researching the need for this product in the film industry, we also learned how these technologies could work and fit together in a professional setting.

What's next for Director's Lens

In the future, we want Director's Lens to become a fully packaged application that can run everywhere, from smartphones to augmented reality glasses. We also hope that, with more time, we can integrate a fully controllable 3D mesh into our system.
