Inspiration

After working in several labs, we found that it can be a hassle to manage multiple Zoom links, figure out who takes notes at meetings, keep things in consistent formats, and so on. We aim to solve that problem by providing a more seamless process for all of these steps.

We also realize that this can be used as a way to generate notes for classes to improve accessibility for students who are unable to take notes. If the lecture is conducted by the teacher sharing slides from their computer, our project will enable the teacher to generate a summary of the class slides automatically without any extra effort on their part.

What it does

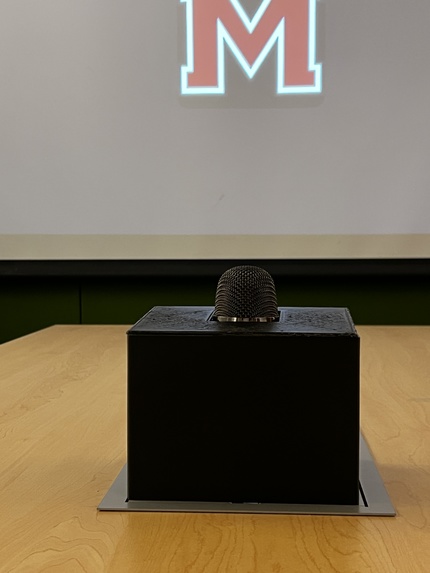

When placed in a conference room, our device takes in audio input from the presenter and HDMI input from the presenter's computer. It then produces a PDF in which each slide is paired with the matching summarized minutes and timestamps. It can also share the presentation with remote participants and allow them to share when applicable.

How we built it

We adopted a divide-and-conquer approach, assigning two team members to hardware and two to software. The software team developed code that turns an .mp4 file into a compiled summary PDF document. The hardware team set up a computer that has all the needed dependencies and creates the .mp4 from the HDMI and audio inputs. The computer also records from a Zoom meeting so that online presentations can be part of the output transcript. The hardware team also designed and 3D printed a casing that holds all the needed components.
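To illustrate the step where slides get paired with their minutes, here is a minimal sketch and not our actual code. It assumes the slide start times are already known and that the transcript arrives as timestamped `(start, end, text)` segments, similar to what speech-to-text tools typically return. The names `SlideNotes`, `pair_segments_with_slides`, and `format_timestamp` are hypothetical and exist only for this example.

```python
from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass
class SlideNotes:
    start: float                      # seconds into the recording when the slide appeared
    texts: list = field(default_factory=list)


def pair_segments_with_slides(slide_starts, segments):
    """Assign each transcript segment to the slide on screen when it began.

    slide_starts: sorted list of slide start times in seconds.
    segments: iterable of (start_seconds, end_seconds, text) tuples.
    """
    slides = [SlideNotes(start=t) for t in slide_starts]
    for seg_start, _seg_end, text in segments:
        # bisect_right gives the first slide starting after seg_start,
        # so the slide just before it is the one being shown.
        index = max(bisect_right(slide_starts, seg_start) - 1, 0)
        slides[index].texts.append(text.strip())
    return slides


def format_timestamp(seconds):
    """Render a time offset in seconds as MM:SS for the printed minutes."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"
```

A segment that starts on one slide and runs into the next is kept with the slide where it started. That is a simplification chosen for this sketch; splitting such segments at the transition would be another reasonable choice.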

Challenges we ran into

We initially wanted to build this on a Raspberry Pi. However, the hardware desk only had Raspberry Pi 2s, which were not powerful enough for the video processing we were doing, so we needed to run it on a computer.

Accomplishments that we're proud of

We were able to account for several crucial edge cases in slide transition detection, audio splitting, and the exclusion of false slide inputs. We're proud that our product is in a near-usable state and could, without too much polish, be deployed to help research productivity.
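As a rough illustration of slide transition detection with false inputs filtered out, here is a minimal sketch under stated assumptions, not the code we shipped. It treats each frame as a flat sequence of grayscale pixel values and only accepts a new slide once the changed image has stayed on screen for a few consecutive frames. The function names and the `diff_threshold` and `min_stable_frames` parameters are hypothetical; in a real pipeline the frames would likely come from OpenCV (for example via `cv2.VideoCapture`) rather than plain lists.

```python
def mean_abs_diff(frame_a, frame_b):
    """Average per-pixel absolute difference between two equal-size frames."""
    return sum(abs(a - b) for a, b in zip(frame_a, frame_b)) / len(frame_a)


def detect_slide_starts(frames, diff_threshold=10.0, min_stable_frames=3):
    """Return the frame indices at which a new slide begins.

    A frame that differs from the current slide becomes a candidate. It is
    accepted only after min_stable_frames consecutive matching frames, so a
    one-frame flicker or a brief overlay is not counted as a slide.
    """
    if not frames:
        return []
    starts = [0]              # the first frame is always treated as a slide
    current = frames[0]       # reference frame of the accepted slide
    candidate = None          # frame that might turn out to be a new slide
    candidate_index = 0
    stable_count = 0
    for i in range(1, len(frames)):
        frame = frames[i]
        if candidate is not None and mean_abs_diff(frame, candidate) <= diff_threshold:
            stable_count += 1
            if stable_count >= min_stable_frames:
                starts.append(candidate_index)
                current = candidate
                candidate = None
        elif mean_abs_diff(frame, current) > diff_threshold:
            # Something new appeared: start (or restart) the stability count.
            candidate, candidate_index, stable_count = frame, i, 1
        else:
            # Back on the accepted slide, so drop any pending candidate.
            candidate = None
    return starts
```

The returned indices can be converted to timestamps by dividing by the recording's frame rate. The threshold values here are placeholders and would need tuning against real recordings.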

What we learned

We worked with the Zoom, OpenAI Whisper, ChatGPT 4o, and OpenCV APIs for the first time, and we learned how to integrate these tools for our project.

We learned that a project like this requires a lot of troubleshooting, along with the ability to stay open-minded and pivot when a certain pathway or idea does not seem to be producing results. Flexibility was crucial to the success of our project.

What's next for Easy Minutes

Given more time and resources, we would like to do more edge-case testing, implement speaker diarization, and slightly modify our device to include a 360 camera. We could potentially add directional audio to provide more information on who's speaking. We have a preliminary integration with Zoom and Google Calendar to automatically schedule Zoom meetings and recordings, but we couldn't get this fully working in time.
