Inspiration

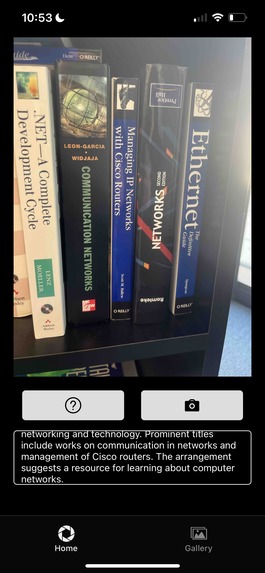

At the 5C Hackathon, our team was driven by the idea that taking and sharing photos, a simple task for most people, can be daunting for individuals with visual impairments. We wanted to create a tool that fosters independence and genuine social connection. ExploraVist grew out of our desire to address the lack of accessible photo-sharing apps and to bridge the gap between visually impaired users and the moments they capture.

What We Learned

- Human-Centered Design: We discovered the importance of collaborating with end users (e.g., visiting the Lighthouse Center for the Visually Impaired) to understand accessibility needs.

- Rapid Prototyping: Under tight hackathon constraints, we learned to iterate quickly and focus on core functionalities that deliver immediate value.

- Technical Exploration: Implementing semantic search and AI-driven descriptions introduced us to embedding models, prompt engineering, and data encryption best practices.
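Embedding-based semantic search ranks stored items by how close their vectors are to the query's vector. The team planned to use CLIP embeddings this way but shipped a GPT prompt-based matcher instead, so the JavaScript below is only a minimal sketch of the embedding idea. It assumes each photo already has an embedding from a CLIP-style model; the three-dimensional vectors, photo IDs, and the `rankPhotos` helper are hypothetical stand-ins.

```javascript
// Minimal sketch: rank photos against a query by cosine similarity.
// Assumption: each photo already carries an embedding from a CLIP-style model.
// The vectors below are tiny hypothetical stand-ins, not real model output.

function cosineSimilarity(a, b) {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same length");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid dividing by zero
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the photos sorted from most to least similar to the query embedding.
function rankPhotos(queryEmbedding, photos) {
  return photos
    .map((photo) => ({ ...photo, score: cosineSimilarity(queryEmbedding, photo.embedding) }))
    .sort((x, y) => y.score - x.score);
}

// Hypothetical example data.
const photos = [
  { id: "beach", embedding: [0.9, 0.1, 0.0] },
  { id: "library-selfie", embedding: [0.1, 0.8, 0.6] },
  { id: "dinner", embedding: [0.0, 0.2, 0.9] },
];
const query = [0.1, 0.9, 0.5]; // stand-in for "selfie in front of the library"

const ranked = rankPhotos(query, photos);
console.log(ranked.map((p) => p.id)); // logs the IDs in order: library-selfie, dinner, beach
```

Cosine similarity ignores vector length and compares only direction, which is why it is a common default for comparing embeddings.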

How We Built It

- Front-End & UX: We used React Native with Expo to build an easy-to-navigate interface. Simple gestures and text-to-speech features accommodate users with varying levels of visual ability.

- Backend & Data Handling:

  - Firebase stores encrypted descriptions that map to images stored locally on the device. This approach preserves user privacy while allowing quick retrieval of metadata.

  - We integrated OpenAI’s APIs for image recognition and generative text descriptions.

- Semantic Search: Our plan was to leverage CLIP embeddings to enable natural-language photo searches (e.g., “Show me the selfie in front of the library”), but due to time constraints, we implemented a prompt-based approach using GPT for semantic matching.

- Future Hardware Integration: We plan to link this app to our existing wearable device so users can capture their surroundings completely hands-free.
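Generating a description with OpenAI's APIs comes down to sending the photo and an instruction to a vision-capable model. The sketch below builds a request for the Chat Completions endpoint that a client could pass to `fetch`. It is a minimal illustration, not ExploraVist's actual code: the model name `gpt-4o-mini`, the prompt wording, and the `buildDescriptionRequest` helper are assumptions.

```javascript
// Minimal sketch: build a Chat Completions request asking a vision-capable model
// to describe a photo for a blind or low-vision user.
// Assumptions: the model name, prompt wording, and helper name are hypothetical.

const OPENAI_CHAT_URL = "https://api.openai.com/v1/chat/completions";

function buildDescriptionRequest(base64Jpeg, apiKey, model = "gpt-4o-mini") {
  const instruction =
    "Describe this photo for someone who is blind. Mention the people, setting, " +
    "any visible text, and the mood in two or three sentences.";
  return {
    url: OPENAI_CHAT_URL,
    options: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({
        model,
        messages: [
          {
            role: "user",
            content: [
              { type: "text", text: instruction },
              // The image is sent inline as a base64 data URL.
              { type: "image_url", image_url: { url: `data:image/jpeg;base64,${base64Jpeg}` } },
            ],
          },
        ],
      }),
    },
  };
}

// Usage (the network call is shown but not executed here):
// const { url, options } = buildDescriptionRequest(photoBase64, apiKey);
// const res = await fetch(url, options);
// const data = await res.json();
// const description = data.choices[0].message.content;

const req = buildDescriptionRequest("AAAA", "sk-test");
console.log(JSON.parse(req.options.body).model); // → gpt-4o-mini
```

Keeping request construction separate from the `fetch` call makes the payload easy to inspect and test. In a shipped app the API key would normally stay on a server rather than inside the mobile client.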
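The prompt-based semantic search can be approximated by listing each stored description with an ID and asking the model to name the best match. The sketch covers only the two deterministic halves: building the prompt and validating the reply (the model call itself would look like any other Chat Completions request). The helper names, prompt wording, and the `NONE` reply convention are hypothetical choices, not necessarily what ExploraVist used.

```javascript
// Minimal sketch of prompt-based photo search: rather than comparing embeddings,
// list each stored description with an ID and ask a chat model to pick one.
// Assumptions: helper names, prompt wording, and the "NONE" convention are hypothetical.

function buildSearchPrompt(query, photos) {
  const catalog = photos.map((p) => `${p.id}: ${p.description}`).join("\n");
  return [
    "You match a user's request to one photo from a list of photo descriptions.",
    "Reply with only the ID of the best-matching photo, or NONE if nothing matches.",
    "",
    "Photos:",
    catalog,
    "",
    `Request: ${query}`,
  ].join("\n");
}

// Validate the model's reply so an invented ID is never treated as a real photo.
function parseMatch(reply, photos) {
  // Strip surrounding whitespace, quotes, and a trailing period the model may add.
  const candidate = reply.trim().replace(/^["']+|["'.]+$/g, "");
  if (candidate.toUpperCase() === "NONE") return null;
  return photos.find((p) => p.id === candidate) ?? null;
}

// Hypothetical stored descriptions.
const photos = [
  { id: "photo-1", description: "Two friends eating pizza at a crowded restaurant." },
  { id: "photo-2", description: "A smiling selfie in front of a brick library entrance." },
];

console.log(buildSearchPrompt("Show me the selfie in front of the library", photos));
console.log(parseMatch('"photo-2".', photos)?.id); // → photo-2
console.log(parseMatch("photo-9", photos)); // → null
```

Checking the reply against the known IDs matters here: a language model can return text that is not one of the listed photos, and the app should fall back gracefully rather than read out a nonexistent match.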

Challenges

- Time Constraints: Building a complete prototype and testing it thoroughly within 24 hours was intense, so we focused on the essential features that showcase the app’s potential.

- Technical Hurdles: Tuning AI-generated descriptions for real-time feedback required careful prompt engineering and optimization. Setting up secure, efficient Firebase interactions also proved complex.

- Accessibility Complexity: Designing an interface that truly serves visually impaired users raised additional UX considerations (e.g., voice control and navigation cues) that we want to expand on after the hackathon.
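One way to keep cloud-stored descriptions private is to encrypt them on the device before upload and store only a pointer to the image, which never leaves the phone. The sketch below uses AES-256-GCM, an authenticated cipher, to produce such a record. It is an assumed scheme for illustration: the write-up does not say which cipher or key management ExploraVist uses, the `encryptDescription`/`decryptDescription` helpers and the file URI are hypothetical, and Node's built-in `crypto` module is used only to keep the example runnable (a React Native app would need an equivalent crypto library).

```javascript
// Minimal sketch (assumed scheme, not necessarily ExploraVist's): encrypt a photo
// description with AES-256-GCM before it is written to a cloud database, keeping
// only a reference to the image file that stays on the device.
const crypto = require("crypto");

// Encrypt a description and bundle it with the local image URI.
function encryptDescription(localUri, description, key) {
  const iv = crypto.randomBytes(12); // 96-bit nonce, the recommended size for GCM
  const cipher = crypto.createCipheriv("aes-256-gcm", key, iv);
  const ciphertext = Buffer.concat([cipher.update(description, "utf8"), cipher.final()]);
  return {
    localUri, // the image itself is never uploaded
    iv: iv.toString("base64"),
    tag: cipher.getAuthTag().toString("base64"),
    ciphertext: ciphertext.toString("base64"),
  };
}

// Decrypt a stored record; final() throws if the ciphertext or tag was altered.
function decryptDescription(record, key) {
  const decipher = crypto.createDecipheriv("aes-256-gcm", key, Buffer.from(record.iv, "base64"));
  decipher.setAuthTag(Buffer.from(record.tag, "base64"));
  return Buffer.concat([
    decipher.update(Buffer.from(record.ciphertext, "base64")),
    decipher.final(),
  ]).toString("utf8");
}

// Hypothetical per-user key; a real app would keep it in secure on-device storage.
const key = crypto.randomBytes(32);
const record = encryptDescription(
  "file:///photos/IMG_0042.jpg", // hypothetical local path
  "A selfie in front of the library.",
  key
);
console.log(decryptDescription(record, key)); // → A selfie in front of the library.
```

Because GCM authenticates the data, a record that has been modified in the database fails to decrypt instead of silently yielding altered text.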

By centering on usability, privacy, and scalability, we believe ExploraVist can become a pivotal tool for visually impaired users to confidently capture and share life’s moments.

Built With

- firebase

- javascript

- jsx

- openai

- react-native
