Inspiration

Memory loss and difficulty recognizing faces are frustrating and painful not only for the people who experience them, but also for the loved ones left holding onto memories that are no longer shared. These conditions create a silent barrier that slowly erases shared history. We designed this project to break that barrier with a simple, helpful, and human-centered approach. By supporting the millions of people who face these challenges every day, we aim to protect their connection to the faces and relationships that matter most to them.

What it does

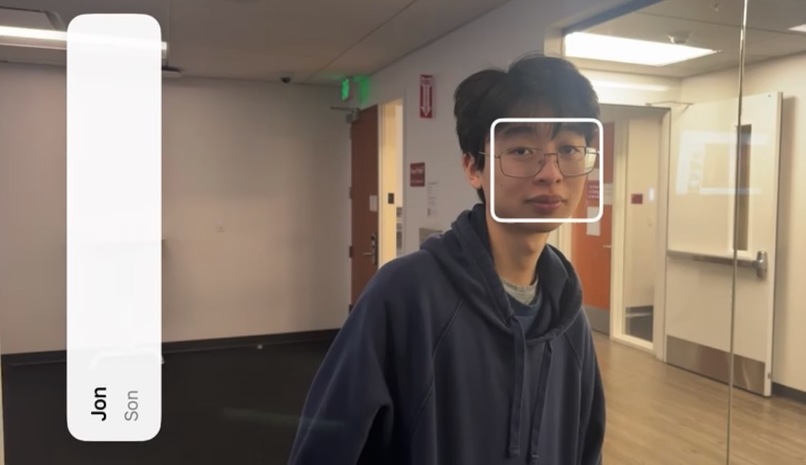

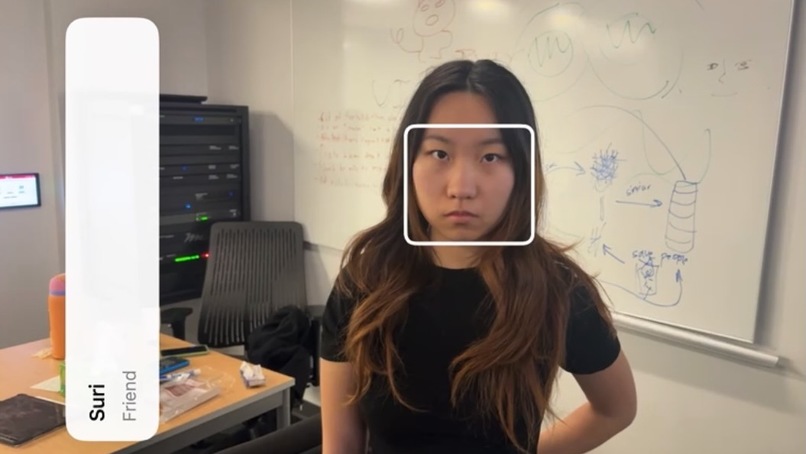

Glimpse identifies faces and recalls memories for people who may struggle to do so themselves. Users can scan a face with the phone's camera and save it to their facial records. The interface is minimal and accessible, with the main screen dedicated primarily to the camera. Glimpse is a discreet app that lets users navigate social moments with confidence.

How we built it

Frontend: Swift via Xcode

Backend: MobileFaceNet, TensorFlow

Challenges we ran into

We ran into many difficulties because half of our team had Macs while the other half only had Windows machines. Our CV team was on Windows, and when they tried to access the Expo app to integrate the ML models several hours into the hackathon, they hit incompatibilities, security errors, and odd dependency issues caused by this mismatch. We therefore pivoted to Xcode midway through the hackathon, which introduced a new set of difficulties since Swift wasn't our primary language. To stay efficient, the Windows half of the team researched the best ways to implement the app in Xcode, along with different facial detection and embedding models, while the Mac half took the code we wrote and tested it on their machines.

We also had difficulty recognizing faces because of our limited GPU, storage, and memory capacity. We quickly found that we had to choose between high accuracy and high functionality. We prioritized the latter, since even a very accurate model is only as useful as the app that presents its results. Our researchers therefore found small models that could maintain accuracy without taking up too much storage or power.
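As a rough illustration of how a compact embedding model such as MobileFaceNet is commonly used for recognition, the Swift sketch below compares a new face embedding against saved ones with cosine similarity. It is a sketch under stated assumptions, not the app's actual code: the `FaceRecord` type, the `bestMatch` function, and the 0.6 threshold are all hypothetical, and the embeddings are assumed to come from the model as plain `[Float]` vectors.

```swift
import Foundation

/// Hypothetical saved record: a name plus the embedding vector produced by the face model.
struct FaceRecord {
    let name: String
    let embedding: [Float]
}

/// Cosine similarity between two equal-length vectors.
/// Returns 0 when the lengths differ or either vector has zero length or zero norm.
func cosineSimilarity(_ a: [Float], _ b: [Float]) -> Float {
    guard a.count == b.count, !a.isEmpty else { return 0 }
    var dot: Float = 0
    var normA: Float = 0
    var normB: Float = 0
    for i in 0..<a.count {
        dot += a[i] * b[i]
        normA += a[i] * a[i]
        normB += b[i] * b[i]
    }
    let denominator = normA.squareRoot() * normB.squareRoot()
    return denominator > 0 ? dot / denominator : 0
}

/// Returns the saved record most similar to the query embedding,
/// or nil when no record reaches the threshold (0.6 is an assumed value, not a tuned one).
func bestMatch(for query: [Float], in records: [FaceRecord], threshold: Float = 0.6) -> FaceRecord? {
    var best: (record: FaceRecord, score: Float)? = nil
    for record in records {
        let score = cosineSimilarity(query, record.embedding)
        if score >= threshold, score > (best?.score ?? -Float.infinity) {
            best = (record: record, score: score)
        }
    }
    return best?.record
}
```

Because matching is a linear scan over short vectors, the cost on the phone stays small for a personal set of saved faces. The threshold would need tuning against real embeddings before any use.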

Accomplishments that we're proud of

We're very proud of the progress we made despite the roadblocks we encountered. We are especially proud that we implemented all of our core features and made the app as efficient as we could. Most of all, we are proud to know that our app can make a difference in the lives of many people across the world, helping our elderly population and those struggling with memory loss reconnect with their loved ones.

What we learned

We learned that development requires many compromises, and that it's up to the developer to make those decisions as well as they can. It may be difficult at first, especially if you feel as strongly about your project and cause as we did, but taking some action to make a difference will always have a greater impact than doing nothing at all.

What's next for EyeR

In the future, we want to add a tab where caregivers can upload images and descriptions of their loved ones themselves. We also want to bring this technology to real glasses so that it can be integrated more seamlessly into our users' everyday lives. We have many more updates in mind, and we will keep working on them as we learn more about the technologies available to us.

Thank you for reading about Glimpse, and together, let's make a difference.

Built With

- computer-vision

- facial-detection

- react

- typescript

- xcode
