Inspiration

Creative teams increasingly rely on AI for image generation, but most tools still behave like black boxes—small prompt changes cause unpredictable results, making them hard to use in professional, production environments. At the same time, developers and artists need workflows that are controllable, reproducible, and scalable, not just visually impressive.

We were inspired by Bria FIBO’s core idea: JSON-native image generation. Instead of treating prompts as unstructured text, FIBO enables structured, camera-level control over visual attributes. Our goal was to fully embrace this philosophy and build a system that turns creative intent into deterministic, production-ready visual pipelines—usable by both developers and artists.

What it does

FIBO Studio is a JSON-native visual generation platform built on Bria FIBO.

It converts natural language or images into structured JSON prompts using FIBO’s LLM translator, then uses that JSON as the single source of truth for image generation. Users can precisely control camera angle, lens, lighting, color palette, and other visual attributes, and regenerate images deterministically using seeds.

FIBO Studio supports three professional workflows:

Generate: Natural language → structured JSON → image

Refine: Modify specific JSON fields (e.g., lighting or camera angle) without breaking the overall composition

Inspire: Image → structured JSON → controlled variations

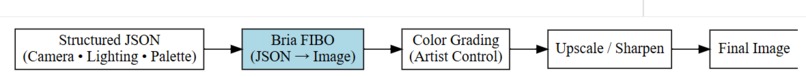

The same JSON prompts can also be exported into a ComfyUI-style node graph, allowing artists to integrate FIBO into existing node-based workflows for post-processing such as color grading and upscaling.

How we built it

We built FIBO Studio as a modular, production-oriented pipeline centered around JSON.

LLM Translation: We use Bria’s FIBO VLM as the translator that turns natural language prompts, images, or refinement instructions into structured JSON capturing camera, lighting, color, and composition attributes.

JSON as the Control Plane: The generated JSON is exposed directly to the user and treated as the authoritative representation of intent. Users can edit or refine individual fields while preserving all other attributes, enabling predictable and controllable image generation.
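As an illustration of field-level editing, here is a minimal sketch in plain Python. The prompt fields (`camera.angle`, `lens_mm`, and so on) and the `set_field` helper are hypothetical and are not FIBO's actual schema or API; the point is only that one dotted path changes while every other attribute is carried over untouched.

```python
import copy

# Hypothetical structured prompt. Field names are illustrative, not FIBO's schema.
prompt = {
    "subject": "ceramic coffee mug on a wooden table",
    "camera": {"angle": "eye-level", "lens_mm": 50},
    "lighting": {"type": "softbox", "direction": "left"},
    "color_palette": ["warm beige", "walnut brown"],
}


def set_field(source: dict, path: str, value) -> dict:
    """Return a copy of `source` with the existing dotted `path` set to `value`."""
    updated = copy.deepcopy(source)  # never mutate the caller's prompt
    node = updated
    *parents, leaf = path.split(".")
    for key in parents:
        node = node[key]  # KeyError here means the path is not in the prompt
    if leaf not in node:
        raise KeyError(f"unknown field: {path}")
    node[leaf] = value
    return updated


# Change only the camera angle; lens, lighting, and palette stay as they were.
refined = set_field(prompt, "camera.angle", "low-angle")
```

Returning a copy rather than editing in place keeps the previous prompt available for side-by-side comparison.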

Deterministic Image Generation: Images are generated using the BriaFiboPipeline, with structured JSON prompts, explicit parameters, and seeded execution to ensure reproducibility.
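One way to make "same JSON, same parameters, same seed" checkable is to record each run as a canonical specification and derive an identifier from it. The sketch below does not call BriaFiboPipeline. The `RunSpec` class is hypothetical, and the parameter names `num_inference_steps` and `guidance_scale` are assumptions borrowed from common diffusion pipelines rather than confirmed FIBO settings.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RunSpec:
    """Everything needed to repeat one generation (hypothetical structure)."""

    prompt: dict
    seed: int
    num_inference_steps: int = 50  # assumed parameter name and default
    guidance_scale: float = 5.0    # assumed parameter name and default

    def canonical_json(self) -> str:
        # Sorted keys and fixed separators make the text independent of key order.
        return json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))

    def run_id(self) -> str:
        digest = hashlib.sha256(self.canonical_json().encode("utf-8"))
        return digest.hexdigest()[:16]


a = RunSpec(prompt={"camera": {"angle": "low-angle"}, "subject": "mug"}, seed=42)
b = RunSpec(prompt={"subject": "mug", "camera": {"angle": "low-angle"}}, seed=42)
c = RunSpec(prompt=a.prompt, seed=43)

print(a.run_id() == b.run_id())  # same content in a different key order
print(a.run_id() == c.run_id())  # a different seed is a different run
```

Storing the identifier next to each output image makes it straightforward to tell whether two images were meant to come from the same specification.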

Professional User Interface: We built an interactive Gradio-based studio that combines natural language input, camera-style controls, live JSON visualization, and side-by-side refinement workflows.

Artist Workflow Integration: To demonstrate scalability and real-world integration, we designed a ComfyUI-inspired node graph where the same JSON prompts feed into a FIBO generation node followed by artist-oriented post-processing steps.
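The node graph idea can be sketched as a plain dictionary export. The layout below loosely follows the ComfyUI API-style workflow JSON, where each node id maps to a `class_type` and `inputs`, and a link is written as `[source_node_id, output_index]`. The node class names (`FiboGenerate`, `ColorGrade`, `Upscale`) and their input names are hypothetical placeholders, not real ComfyUI nodes.

```python
import json


def export_node_graph(prompt: dict, seed: int) -> dict:
    """Build a ComfyUI-style graph: generation, then color grading, then upscaling."""
    return {
        "1": {
            "class_type": "FiboGenerate",  # hypothetical generation node
            "inputs": {
                "json_prompt": json.dumps(prompt, sort_keys=True),
                "seed": seed,
            },
        },
        "2": {
            "class_type": "ColorGrade",  # hypothetical post-processing node
            "inputs": {"image": ["1", 0], "preset": "neutral"},
        },
        "3": {
            "class_type": "Upscale",  # hypothetical post-processing node
            "inputs": {"image": ["2", 0], "scale": 2},
        },
    }


prompt = {"subject": "mug", "camera": {"angle": "low-angle"}}
graph = export_node_graph(prompt, seed=42)
print(json.dumps(graph, indent=2))
```

Because the structured prompt travels as a single JSON string input, the post-processing nodes can change without touching the prompt itself.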

The result is a production-ready, automated visual generation pipeline that highlights the full power of FIBO’s JSON-native architecture.

Challenges we ran into

One of the main challenges was moving away from traditional free-text prompting and fully committing to a JSON-native workflow. While FIBO is designed for structured prompts, most existing AI image workflows and UI patterns assume unstructured text. We had to carefully design our pipeline so that JSON—not text—became the single source of truth at every stage.

Another challenge was ensuring controllability without prompt drift. When refining images, it is easy for LLM-based systems to unintentionally modify unrelated visual attributes. We addressed this by explicitly preserving unchanged JSON fields during refinement, allowing users to modify one parameter—such as camera angle or lighting—without affecting composition, color, or subject.
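A minimal sketch of such a guard, under stated assumptions: the translator returns a full proposed JSON, and the caller names which dotted paths the refinement is allowed to touch. Any other difference is treated as drift and reverted to the original value. The function names and prompt fields are hypothetical, and the sketch assumes keys contain no dots and that a field does not switch between a leaf value and a nested object.

```python
import copy

_MISSING = object()  # marks a path that exists on one side only


def flatten(data: dict, prefix: str = "") -> dict:
    """Map dotted paths to leaf values, e.g. {"camera.angle": "low-angle"}."""
    flat = {}
    for key, value in data.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


def changed_paths(before: dict, after: dict) -> list:
    a, b = flatten(before), flatten(after)
    return sorted(p for p in a.keys() | b.keys()
                  if a.get(p, _MISSING) != b.get(p, _MISSING))


def apply_refinement(original: dict, proposed: dict, allowed: list):
    """Accept proposed changes under `allowed` paths only; report the rest."""
    result = copy.deepcopy(original)
    proposed_flat = flatten(proposed)
    rejected = []
    for path in changed_paths(original, proposed):
        permitted = any(path == a or path.startswith(a + ".") for a in allowed)
        if permitted and path in proposed_flat:
            node = result
            *parents, leaf = path.split(".")
            for key in parents:
                node = node.setdefault(key, {})
            node[leaf] = copy.deepcopy(proposed_flat[path])
        else:
            rejected.append(path)  # drift: the original value is kept
    return result, rejected


original = {"subject": "mug",
            "camera": {"angle": "eye-level", "lens_mm": 50},
            "lighting": {"type": "softbox"}}
# Hypothetical translator output that also altered the lens and the lighting.
proposed = {"subject": "mug",
            "camera": {"angle": "low-angle", "lens_mm": 35},
            "lighting": {"type": "hard flash"}}

result, rejected = apply_refinement(original, proposed, allowed=["camera.angle"])
print(result["camera"], rejected)
```

Reporting the rejected paths, rather than dropping them silently, lets the interface show the user what the translator tried to change.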

Integrating LLM translation, deterministic image generation, and a real-time user interface also required careful coordination. We needed the system to feel responsive while still exposing the full structured JSON to users, which meant balancing usability with transparency rather than hiding complexity.

We also encountered practical challenges related to model access, environment setup, and compatibility when working with gated Hugging Face repositories and GPU-based execution in Google Colab. Ensuring the pipeline was reproducible and stable across sessions required explicit dependency control and deterministic execution settings.

Finally, designing an artist-friendly workflow that complements a developer-focused JSON pipeline was non-trivial. We wanted to demonstrate how the same structured prompts could plug into a ComfyUI-style node graph without fragmenting the workflow, reinforcing the idea that JSON prompts can bridge developer and creative tooling.

Accomplishments that we're proud of

Built a fully JSON-native visual generation pipeline: We designed FIBO Studio so that structured JSON prompts, not free text, are the single source of truth. This enabled deterministic, controllable, and reproducible image generation aligned with FIBO’s core architecture.

Successfully leveraged FIBO’s LLM translator across multiple workflows: We implemented natural language → JSON, image → JSON, and JSON-preserving refinement flows, demonstrating how LLM translation can power automated, production-ready visual pipelines without prompt drift.

Delivered camera-level controllability with predictable outcomes: Users can explicitly control camera angle, lens, lighting, color palette, and other attributes, and reliably regenerate images using seeds. Changing one parameter results in one clear visual change, which is the controllability we set out to achieve.

Created a professional, end-to-end creative tool, not just a demo: Our Gradio-based FIBO Studio combines generation, refinement, inspiration, live JSON visualization, and reproducibility controls into a cohesive, production-oriented user experience.

Bridged developer and artist workflows: We demonstrated how the same structured JSON prompts can be exported into a ComfyUI-style node graph, enabling artists to integrate FIBO into existing node-based pipelines for post-processing and creative iteration.

Demonstrated scalability beyond a single use case: While showcased with product imagery, the pipeline is domain-agnostic and extensible to scientific visualization, enterprise design systems, and other professional visual workflows.

What we learned

Building FIBO Studio reinforced the importance of structured, JSON-native workflows for professional AI image generation. We learned that treating JSON as the primary interface—rather than as an internal implementation detail—dramatically improves controllability, reproducibility, and scalability.

We also learned that LLM-based translation is most effective when used as a bridge, not a black box. By constraining the LLM to produce and refine structured JSON, we were able to preserve intent across iterations and avoid the prompt drift that commonly affects free-text workflows.

Another key learning was that controllability requires explicit design, not just better prompts. Exposing camera-level parameters and enforcing deterministic execution with seeds allowed us to deliver predictable results that align with how creative professionals actually work.

From a systems perspective, we learned the value of separating intent, control, and execution. Translating intent into JSON, refining that JSON independently, and then executing it through FIBO made the pipeline modular, debuggable, and extensible to new interfaces such as node-based artist workflows.

Finally, we learned that developer and artist workflows do not need to be siloed. A shared, structured representation of intent enables collaboration across tools and roles, opening the door to production-ready creative pipelines rather than isolated demos.

What's next for FIBO Studio Alpha

FIBO Studio Alpha demonstrates the core value of JSON-native, controllable visual generation. Our next steps focus on turning this foundation into a fully production-ready creative platform.

Expanded JSON schema and validation: We plan to extend the structured prompt schema to cover additional camera, composition, and lighting attributes, along with schema validation and presets to ensure consistency across teams and projects.
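As a rough idea of what such validation could look like, the sketch below checks a prompt against a small hand-written table of paths, types, and allowed values. The schema entries and field names are hypothetical and do not describe FIBO's real schema. A production version would more likely use a standard JSON Schema validator.

```python
_MISSING = object()

# Hypothetical schema: dotted path -> (expected type, allowed values or None).
SCHEMA = {
    "subject": (str, None),
    "camera.angle": (str, {"eye-level", "low-angle", "high-angle", "top-down"}),
    "camera.lens_mm": (int, None),
    "lighting.type": (str, None),
}


def get_path(prompt: dict, path: str):
    """Return the value at a dotted path, or _MISSING if any key is absent."""
    node = prompt
    for key in path.split("."):
        if not isinstance(node, dict) or key not in node:
            return _MISSING
        node = node[key]
    return node


def validate(prompt: dict) -> list:
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    for path, (expected_type, allowed) in SCHEMA.items():
        value = get_path(prompt, path)
        if value is _MISSING:
            errors.append(f"{path}: missing")
        elif not isinstance(value, expected_type):
            errors.append(f"{path}: expected {expected_type.__name__}, "
                          f"got {type(value).__name__}")
        elif allowed is not None and value not in allowed:
            errors.append(f"{path}: {value!r} is not one of {sorted(allowed)}")
    return errors


good = {"subject": "mug",
        "camera": {"angle": "low-angle", "lens_mm": 50},
        "lighting": {"type": "softbox"}}
bad = {"subject": "mug", "camera": {"angle": "sideways", "lens_mm": "50"}}

print(validate(good))
print(validate(bad))
```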

Agent-assisted creative workflows: Future versions will introduce lightweight agents, such as a Creative Director or Camera Assistant, that operate directly on JSON prompts, suggesting refinements while preserving deterministic control.

Deeper ComfyUI and creative tool integration: We aim to ship official ComfyUI nodes and example workflows so artists can seamlessly plug FIBO Studio prompts into node-based pipelines for post-processing, animation, and batch production.

Batch and campaign-scale generation: FIBO Studio will support batch execution and parameter sweeps driven entirely by JSON, enabling large-scale visual campaigns with consistent style and reproducibility.
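A JSON-driven parameter sweep could be as simple as taking the Cartesian product of the values listed for each field. This is a sketch of that idea only: the `sweep` helper, the job layout, and the field names are hypothetical, and each job would still need to be handed to the generation pipeline.

```python
import copy
import itertools


def set_path(prompt: dict, path: str, value) -> None:
    """Set a dotted path in place, creating intermediate objects if needed."""
    node = prompt
    *parents, leaf = path.split(".")
    for key in parents:
        node = node.setdefault(key, {})
    node[leaf] = value


def sweep(base: dict, axes: dict, seed: int):
    """Yield one job per combination of the values listed in `axes`."""
    paths = list(axes)
    for combo in itertools.product(*(axes[p] for p in paths)):
        variant = copy.deepcopy(base)  # the base prompt is never modified
        for path, value in zip(paths, combo):
            set_path(variant, path, value)
        yield {"prompt": variant, "seed": seed}


base = {"subject": "mug",
        "camera": {"angle": "eye-level"},
        "lighting": {"type": "softbox"}}
axes = {
    "camera.angle": ["eye-level", "low-angle"],
    "lighting.type": ["softbox", "window light", "rim light"],
}

jobs = list(sweep(base, axes, seed=42))
print(len(jobs))  # 2 angles x 3 lighting setups
```

Keeping the seed fixed across the sweep means the listed fields are the only intended source of variation between jobs.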

Collaboration and versioning: We plan to add JSON versioning, diffing, and history tracking so teams can review, audit, and reuse visual configurations across products and releases.

Enterprise and scientific use cases: Beyond creative workflows, we will expand support for domains like scientific visualization and enterprise design systems, where reproducibility and structured control are critical.
