Inspiration

Many companies within the EdTech space are killing thinking. They bypass the struggle and hand you the answer—no reasoning, no retention, skipping the part where you actually learn.

We offer a different perspective: giving just enough information to push you in the right direction, allowing you to formulate thoughts you actually understand.

What it does

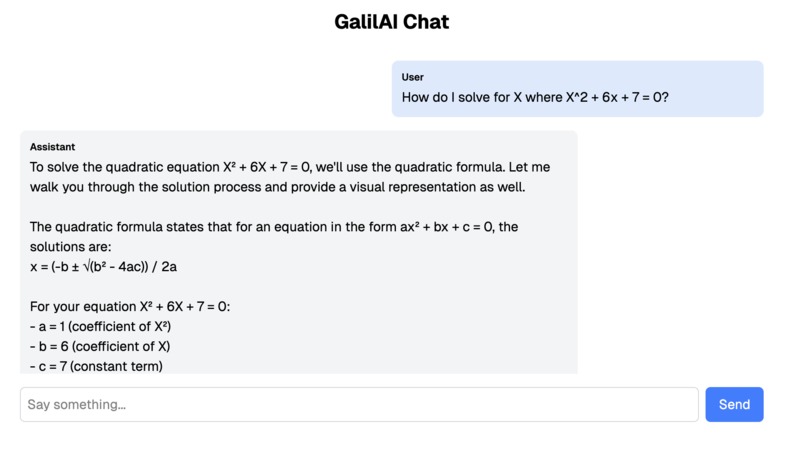

GalilAIo detects a student’s assignment and provides constructive feedback to guide them toward a solution with limited intervention.

It offers:

- Visual cues via AR displayed on the XREAL ONE glasses

- Audio feedback for an immersive learning experience

By analyzing the student’s work in real time, GalilAIo delivers contextual hints, ensuring personalized support without overwhelming the student.

How we built it

- OCR & Computer Vision

  - Detects a student’s progress on their assignment

  - Uses AprilTag fiducial markers to establish a coordinate system

  - Performs perspective correction for accurate text recognition

- AI Tutoring Interface

  - Built on Claude for real-time tutoring

  - Interaction through text and voice

  - WebSocket support + ElevenLabs text-to-speech for smooth audio feedback

  - Real-time camera feed for contextual assistance

- Voice Integration

  - Whisper AI handles speech-to-text for natural conversations

  - System intelligently switches between speech-to-text and text-to-speech
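The switching between speech-to-text and text-to-speech can be pictured as a small turn-taking state machine. The sketch below is only an illustration under assumptions: the mode names, the event names, and the `VoiceLoop` class are hypothetical and are not taken from the project's code.

```python
from enum import Enum, auto


class Mode(Enum):
    LISTENING = auto()  # microphone audio is sent to speech-to-text
    THINKING = auto()   # the transcript is with the tutor model
    SPEAKING = auto()   # text-to-speech audio is playing


# Allowed transitions, keyed by (current mode, event name).
# Event names are hypothetical placeholders.
TRANSITIONS = {
    (Mode.LISTENING, "utterance_end"): Mode.THINKING,
    (Mode.THINKING, "reply_ready"): Mode.SPEAKING,
    (Mode.SPEAKING, "playback_done"): Mode.LISTENING,
    (Mode.SPEAKING, "student_interrupts"): Mode.LISTENING,  # barge-in
}


class VoiceLoop:
    def __init__(self) -> None:
        self.mode = Mode.LISTENING

    def handle(self, event: str) -> Mode:
        """Apply an event; events that do not fit the current mode are ignored."""
        self.mode = TRANSITIONS.get((self.mode, event), self.mode)
        return self.mode

    @property
    def mic_open(self) -> bool:
        # Only transcribe while listening, so the tutor never hears its own voice.
        return self.mode is Mode.LISTENING
```

Keeping the transitions in one table makes it easy to see which events are ignored in which mode, for example a stray `playback_done` while the model is still thinking.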

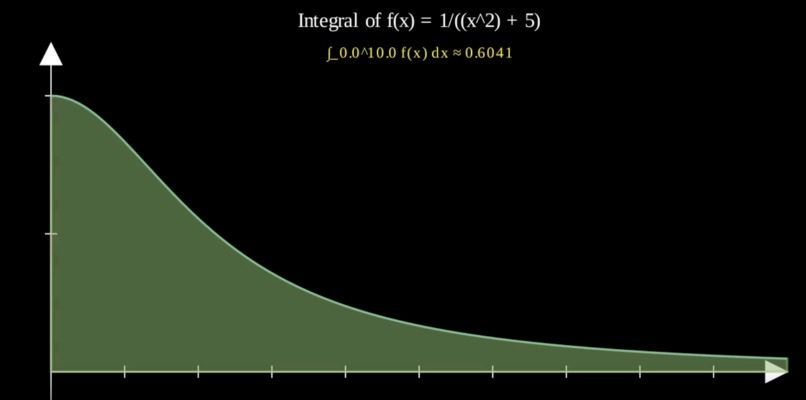

- Math Visualization

  - Manim (Math Animation library) generates high-quality animations

  - Helps students visualize concepts like polynomials and trigonometry
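As a rough illustration of how a polynomial could be turned into a Manim animation, the sketch below generates the source of a small scene from a list of coefficients. It assumes Manim Community (`Scene`, `Axes`, `Axes.plot`, `Create`); the template, the scene name `PolynomialScene`, and both helper functions are hypothetical and not the project's actual pipeline.

```python
import textwrap

# Source template for a Manim Community scene; {expression} is filled in below.
SCENE_TEMPLATE = textwrap.dedent('''\
    from manim import Axes, BLUE, Create, Scene


    class PolynomialScene(Scene):
        def construct(self):
            axes = Axes(x_range=[-4, 4, 1], y_range=[-10, 10, 2])
            graph = axes.plot(lambda x: {expression}, color=BLUE)
            self.play(Create(axes))
            self.play(Create(graph))
            self.wait()
''')


def polynomial_expression(coefficients: list[float]) -> str:
    """Turn [a_n, ..., a_1, a_0] into a Python expression in x (Horner form)."""
    if not coefficients:
        raise ValueError("need at least one coefficient")
    expression = repr(float(coefficients[0]))
    for coefficient in coefficients[1:]:
        expression = f"({expression}) * x + {float(coefficient)!r}"
    return expression


def render_scene_source(coefficients: list[float]) -> str:
    """Return scene source code for the polynomial with these coefficients."""
    source = SCENE_TEMPLATE.format(expression=polynomial_expression(coefficients))
    compile(source, "<generated scene>", "exec")  # fail early on syntax errors
    return source
```

Saved to a file such as `scene.py`, the generated source could then be rendered with the Manim CLI, for example `manim -ql scene.py PolynomialScene`.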

Together, computer vision monitors progress while AI provides personalized support through text, voice, and visuals.
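To make the perspective-correction step in the computer vision pipeline more concrete, here is a minimal, dependency-free sketch. It assumes the four AprilTag positions are already known in pixel coordinates and computes the homography that maps them onto an upright page rectangle. The corner values and function names are hypothetical; with OpenCV the same step is usually done with `cv2.getPerspectiveTransform` and `cv2.warpPerspective`.

```python
Point = tuple[float, float]


def solve_linear(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    rows = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(rows[r][col]))
        if abs(rows[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        rows[col], rows[pivot] = rows[pivot], rows[col]
        for r in range(n):
            if r != col:
                factor = rows[r][col] / rows[col][col]
                rows[r] = [v - factor * p for v, p in zip(rows[r], rows[col])]
    return [rows[i][n] / rows[i][i] for i in range(n)]


def homography(src: list[Point], dst: list[Point]) -> list[list[float]]:
    """3x3 matrix mapping four src points onto four dst points (h33 fixed to 1)."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def warp_point(h: list[list[float]], x: float, y: float) -> Point:
    """Apply the homography to one pixel coordinate."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return (
        (h[0][0] * x + h[0][1] * y + h[0][2]) / w,
        (h[1][0] * x + h[1][1] * y + h[1][2]) / w,
    )


# Hypothetical tag positions in the camera frame, clockwise from top-left,
# mapped onto an 850 x 1100 upright page.
tags_in_frame = [(120.0, 80.0), (520.0, 110.0), (560.0, 420.0), (90.0, 390.0)]
page_corners = [(0.0, 0.0), (850.0, 0.0), (850.0, 1100.0), (0.0, 1100.0)]
H = homography(tags_in_frame, page_corners)
```

Once the page is flattened this way, handwriting lands at stable page coordinates regardless of camera angle, which is what makes the downstream text recognition more reliable.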

Challenges we ran into

Stack Changes

- The stack connecting the LLM to the person behind the screen changed several times, both to accommodate differing hardware needs and to keep the system simple, modular, and extensible.

- As a result, we had to engineer a complex stack that combines Whisper and ElevenLabs over WebSockets, along with a series of tweaks to get several different models to cooperate.

- This was necessary because existing solutions act as agents for simple tasks, such as booking an appointment. For extended requirements with larger models, we had to build our own.
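One common piece of glue in a stack like this is splitting streamed model output into sentences, so that text-to-speech can start on the first sentence while later ones are still being generated. The sketch below shows that idea only; it is an assumption about how such a stack might work, not a description of the project's code, and `sentences_from_stream` is a hypothetical helper.

```python
import re
from typing import Iterable, Iterator

# Split after ., ! or ? when followed by whitespace.
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def sentences_from_stream(chunks: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences as soon as they appear in a stream of text chunks."""
    buffer = ""
    for chunk in chunks:
        buffer += chunk
        parts = SENTENCE_END.split(buffer)
        # Everything except the last part is a finished sentence.
        for sentence in parts[:-1]:
            if sentence:
                yield sentence
        buffer = parts[-1]
    # Flush whatever is left when the stream ends.
    if buffer.strip():
        yield buffer.strip()
```

The splitting rule is deliberately naive: abbreviations such as "e.g." followed by a space would be treated as sentence ends, which a real system would need to handle.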

Current LLM Limitations

- Most SDKs do not support images as a response to a tool call, and the few that do have an incredibly limited scope. → This led us to use Claude as our main backend model, which does support them.
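For reference, this is roughly what an image returned from a tool call looks like in the shape the Anthropic Messages API accepts, as far as we understand it: a `tool_result` block whose content is a list of text and image blocks. The helper name, the caption, and the tool-use ID below are hypothetical.

```python
import base64


def image_tool_result(tool_use_id: str, png_bytes: bytes, caption: str) -> dict:
    """Build a user-turn message that answers a tool call with an image."""
    return {
        "role": "user",
        "content": [
            {
                "type": "tool_result",
                "tool_use_id": tool_use_id,  # must match the model's tool_use block
                "content": [
                    {"type": "text", "text": caption},
                    {
                        "type": "image",
                        "source": {
                            "type": "base64",
                            "media_type": "image/png",
                            "data": base64.b64encode(png_bytes).decode("ascii"),
                        },
                    },
                ],
            }
        ],
    }
```

A message built this way would be appended to the conversation after the assistant turn that requested the tool, for example a hypothetical "capture the current camera frame" tool.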

LLMs, Images, and OCR

- OCR is not very good at math, so we need a model that understands what the user is attempting to do.

- LLMs are not very good at OCR, so the more clarity we can provide, the better.

- LLMs and OCR do not go hand in hand, especially in math. Digital math notation is written in LaTeX, which is fundamentally incompatible with pretty much any OCR model on the market, including Mistral's newest offerings.

- Laptop cameras are not very good. We compensated with extensive image processing, detailed in the "vision" folder within the monorepo, which includes several attempts at gamma, contrast, and color correction, as well as the solution we settled on.
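As an example of the kind of correction mentioned above, gamma correction can be expressed as a 256-entry lookup table applied to every 8-bit pixel value. This is a generic sketch of the technique and not the solution in the "vision" folder; the function names and the gamma value are assumptions. With OpenCV, the same table can be applied to a whole image with `cv2.LUT`.

```python
def gamma_lut(gamma: float) -> list[int]:
    """Lookup table for 8-bit gamma correction; gamma > 1 brightens midtones."""
    if gamma <= 0:
        raise ValueError("gamma must be positive")
    inverse = 1.0 / gamma
    return [round(255 * (value / 255) ** inverse) for value in range(256)]


def apply_lut(pixels: list[int], lut: list[int]) -> list[int]:
    """Map every 8-bit pixel value through the table."""
    return [lut[pixel] for pixel in pixels]


# A dark row of grayscale pixels, brightened with a hypothetical gamma of 2.0.
dark_row = [0, 16, 64, 128, 255]
brightened = apply_lut(dark_row, gamma_lut(2.0))
```

Black and white stay fixed while dim midtones are lifted, which tends to help with faint pencil marks captured by a low-quality camera.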

Technical Limitations

- LLMs are still technically limited.

- Although this may improve in the future, they still struggle with many basic tasks.

- As a result, we had to build a lot of scaffolding around the AI so that it could produce the output needed for a successful tutoring session.

Accomplishments that we're proud of

- Built a strong, high-energy team grounded in good morals.

- Ran the input processing backend with OpenCV and FastAPI.

- Implemented computer vision with AprilTags.

- Experimented with a microservice architecture, chaining together LLM → WebSockets → LLM → Manim for modular workflows.

What we learned

- Learned the value of moving quickly to maximize progress.

- Embraced a “break things often” mindset as part of rapid iteration.

- Understood the need to pivot repeatedly to adapt to changing challenges and insights.

What's next for GalilAIo

- Streamline the product: smaller form factor, truly wireless

- Expand compatibility: support AR devices beyond XREAL ONE

- Premium features: adjustable LLM responses tailored to a student’s prior knowledge

- Quicker response times: add models that produce faster results, such as Mixtral 8x7B, giving the user more control over the range of results they get.

Built With

- built-with-pytorch

- claude

- cuda

- elevenlabs

- fastapi

- manim

- nextjs

- openai-whisper

- opencv

- trpc

- vercel-ai-sdk

- websockets
