Inspiration

We wanted an accurate way to track hand movements for sign language recognition. Many existing tools rely on OpenCV, but their tracking is not very precise. VR offered a better option: with the Meta Quest SDK, we could capture hand motion more accurately.

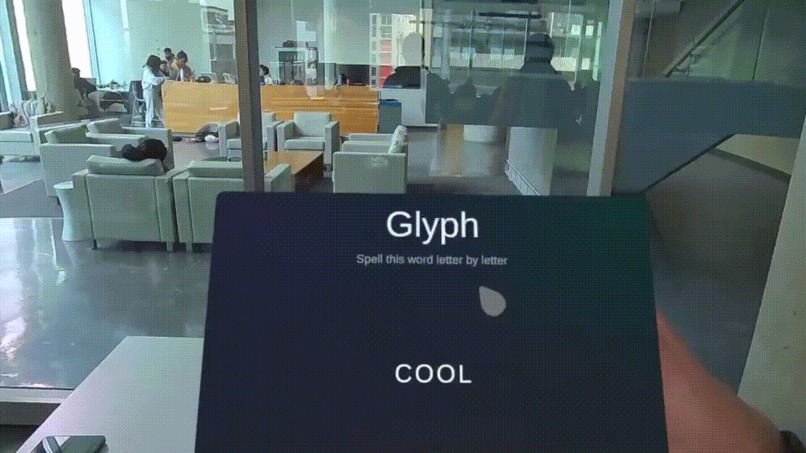

What it does

Glyph is a VR game that teaches American Sign Language (ASL). It tracks hand movements in real time, sending hand models and gestures to an AI model for recognition. The AI processes the gesture and returns feedback to the user, helping them learn sign language interactively.

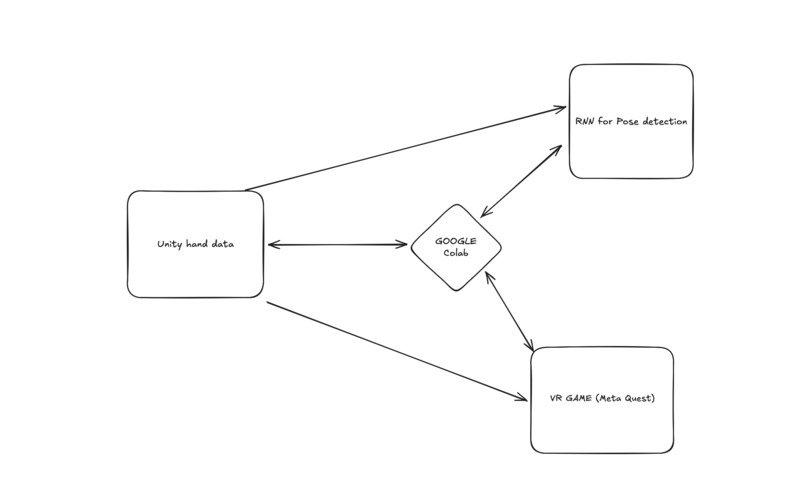

How we built it

We developed Glyph using Unity, TensorFlow, Meta Quest 3, OVR Hand Skeleton, and Flask. The AI model, a Recurrent Neural Network (RNN) built in TensorFlow, recognizes hand poses. Unity and Flask exchange data over secure WebSockets (WSS rather than plain WS).
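As a rough illustration of the server side of that exchange, the sketch below turns one recorded gesture, received as a JSON message, into a fixed-length sequence of feature vectors of the kind an RNN expects. The message layout (`frames`, `joints`, `[x, y, z]` triples) and the `frames_to_sequence` helper are assumptions made for this example, not Glyph's actual wire format.

```python
import json


def frames_to_sequence(message: str, seq_len: int) -> list[list[float]]:
    """Flatten a JSON gesture recording into seq_len feature vectors.

    Hypothetical message layout (an assumption, not Glyph's real format):
    {"frames": [{"joints": [[x, y, z], ...]}, ...]}
    """
    payload = json.loads(message)
    frames = payload["frames"]
    if not frames:
        raise ValueError("recording contains no frames")

    # One flat feature vector per frame: x, y, z of every joint in order.
    sequence = [
        [float(coord) for joint in frame["joints"] for coord in joint]
        for frame in frames
    ]

    width = len(sequence[0])
    if any(len(features) != width for features in sequence):
        raise ValueError("frames have inconsistent joint counts")

    # Truncate long recordings and zero-pad short ones so every
    # gesture has the same number of time steps.
    sequence = sequence[:seq_len]
    sequence += [[0.0] * width for _ in range(seq_len - len(sequence))]
    return sequence
```

Padding with zeros keeps the example short; a real pipeline might instead resample the recording or mask the padded steps in the model.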

Challenges we ran into

Learning VR development and extracting the hand skeleton data were major hurdles. Another challenge was setting up communication between Unity and Flask, as Unity required WSS (WebSocket Secure) instead of WS.

Accomplishments that we're proud of

We successfully implemented data augmentation using NumPy to expand our training dataset. Additionally, we built a recording system to capture hand inputs and send them to Flask for AI processing.
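The write-up does not say which transforms we applied, so the following is only a minimal sketch of what NumPy-based augmentation of hand-skeleton recordings could look like. It assumes each recording is an array of shape `(frames, joints, 3)`; the random scale, the rotation about the vertical axis, the Gaussian jitter, and all parameter values are illustrative choices, and `augment_sequence` and `expand_dataset` are hypothetical names.

```python
import numpy as np


def augment_sequence(seq, rng, noise_std=0.005,
                     scale_range=(0.9, 1.1), max_angle_deg=10.0):
    """Return a randomly perturbed copy of one recording.

    seq has shape (frames, joints, 3). All parameters are illustrative.
    """
    seq = np.asarray(seq, dtype=np.float64)

    # Uniform scale, as if the hand were slightly larger or smaller.
    scale = rng.uniform(*scale_range)

    # Small rotation about the vertical (y) axis.
    angle = np.deg2rad(rng.uniform(-max_angle_deg, max_angle_deg))
    c, s = np.cos(angle), np.sin(angle)
    rot = np.array([[c, 0.0, s],
                    [0.0, 1.0, 0.0],
                    [-s, 0.0, c]])

    # Per-coordinate Gaussian jitter to mimic tracking noise.
    noise = rng.normal(0.0, noise_std, size=seq.shape)

    return (seq * scale) @ rot.T + noise


def expand_dataset(sequences, labels, copies, seed=0):
    """Keep the originals and add `copies` augmented variants of each.

    Assumes every recording has the same shape.
    """
    rng = np.random.default_rng(seed)
    out_x, out_y = list(sequences), list(labels)
    for seq, label in zip(sequences, labels):
        for _ in range(copies):
            out_x.append(augment_sequence(seq, rng))
            out_y.append(label)
    return np.stack(out_x), np.array(out_y)
```

The same scale and rotation are applied to every frame of a recording so that the gesture's motion stays coherent; only the jitter varies from frame to frame.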

What we learned

We found Unity integration frustrating, and it made for a difficult development environment. Still, we gained valuable experience in both AI model development and Unity VR integration along the way.

What's next for Glyph

We plan to improve AI accuracy and expand the number of supported ASL signs to enhance the learning experience.
