Inspiration

H2Optimize: AI That Doesn't Cost the Earth

Every AI query has a hidden price tag. Server farms gulp electricity, cooling systems drain water reserves, and carbon footprints expand with each conversation.

Most people don't realize their casual ChatGPT sessions contribute to environmental strain. But here's the reality: inefficient AI usage is quietly becoming one of tech's fastest-growing sustainability challenges.

H2Optimize changes the equation. Our platform automatically streamlines your AI interactions: it compresses prompts, eliminates redundant processing, and reroutes requests to data centers in colder climates, where cooling typically takes less energy and water. The result? You get the same quality responses while consuming fewer resources per query.
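The writeup doesn't spell out what eliminating redundant processing involves. One plausible reading is caching: if a prompt has already been answered, reuse the stored answer instead of calling the model again. The minimal sketch below assumes that reading; `PromptCache`, `normalize` and the `call_model` callable are hypothetical names, not H2Optimize's actual code.

```python
from typing import Callable, Dict


def normalize(prompt: str) -> str:
    """Lowercase and collapse whitespace so trivially different prompts share a key."""
    return " ".join(prompt.lower().split())


class PromptCache:
    """Hypothetical cache: repeated prompts are answered without a new model call."""

    def __init__(self, call_model: Callable[[str], str]) -> None:
        self._call_model = call_model      # stand-in for the real model/API call
        self._answers: Dict[str, str] = {}
        self.model_calls = 0               # how many requests actually hit the model

    def ask(self, prompt: str) -> str:
        key = normalize(prompt)
        if key not in self._answers:
            # Only a cache miss costs a round-trip to the model.
            self.model_calls += 1
            self._answers[key] = self._call_model(prompt)
        return self._answers[key]


if __name__ == "__main__":
    cache = PromptCache(lambda p: f"answer to: {p}")
    cache.ask("What is H2O?")
    cache.ask("  what is   h2o?")
    print(cache.model_calls)  # 1
```

Lowercasing is a deliberately blunt normalization; a real system would need to decide which differences between prompts actually matter before treating two of them as the same request.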

It's intelligent AI usage without the guilt. No behavior changes required. No complicated settings. Just cleaner, greener conversations that prove sustainability and cutting-edge technology can coexist.

Smart prompting shouldn't come at the planet's expense.

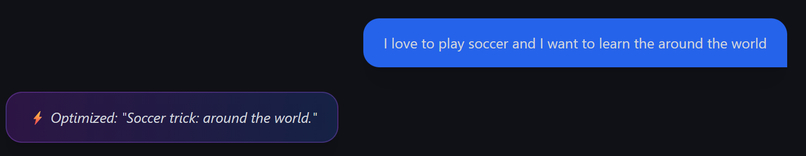

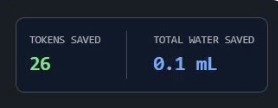

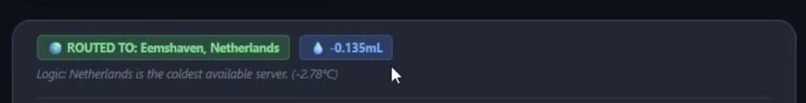

What it does

Optimizes your prompts to minimize token and water use, then reroutes them to the data center with the coldest weather.
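The writeup doesn't say exactly how prompts are compressed (the project mentions preprocessing models, which suggests a model does the rewriting). As a simplified, rule-based stand-in, the sketch below drops a few filler phrases and repeated sentences before a prompt is sent. The filler list is an assumption, and `rough_token_count` counts words rather than running a real tokenizer.

```python
import re

# Hypothetical filler phrases that rarely change what the model is asked to do.
FILLER_PATTERNS = [
    r"\bplease\b",
    r"\bcould you\b",
    r"\bcan you\b",
    r"\bi was wondering if\b",
    r"\bkindly\b",
]


def compress_prompt(prompt: str) -> str:
    """Drop filler phrases, repeated sentences and extra whitespace."""
    text = prompt
    for pattern in FILLER_PATTERNS:
        text = re.sub(pattern, "", text, flags=re.IGNORECASE)
    # Split after sentence-ending punctuation; keep the first copy of each sentence.
    seen = set()
    kept = []
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        sentence = " ".join(sentence.split())
        key = sentence.lower()
        if sentence and key not in seen:
            seen.add(key)
            kept.append(sentence)
    return " ".join(kept)


def rough_token_count(text: str) -> int:
    """Crude proxy: whitespace-separated words, not a real tokenizer."""
    return len(text.split())
```

A rule list like this is cheap to run but brittle; a model-based rewriter can catch redundancy that fixed patterns miss, at the cost of running a model on every prompt.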

How we built it

Backend:

- Python

- Flask

- Gemini API

Frontend:

- TypeScript

- React

- Vite

- Tailwind CSS

- Llama
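The list above names the pieces but not how a request flows through them. Assuming the flow is local preprocessing, then data-center selection, then a single cloud call, the path can be sketched as below. In the real app the local step would presumably be the Llama model and the cloud call the Gemini API; here every stage is a plain Python callable (all names hypothetical) so the sketch runs without either.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PipelineResult:
    prompt_sent: str   # what actually went to the cloud model
    region: str        # which data center was chosen
    answer: str


def handle_prompt(
    prompt: str,
    local_rewrite: Callable[[str], str],      # hypothetical local-model step
    choose_region: Callable[[], str],         # hypothetical routing step
    cloud_call: Callable[[str, str], str],    # hypothetical cloud API step
) -> PipelineResult:
    """Hypothetical request path: local preprocessing, then one cloud call."""
    rewritten = local_rewrite(prompt)
    # Keep the local rewrite only if it is non-empty and actually shorter;
    # otherwise send the user's original prompt unchanged.
    to_send = rewritten if 0 < len(rewritten) < len(prompt) else prompt
    region = choose_region()
    answer = cloud_call(to_send, region)
    return PipelineResult(prompt_sent=to_send, region=region, answer=answer)
```

Falling back to the original prompt when the rewrite isn't shorter keeps the local step from ever making a request more expensive.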

Challenges we ran into

- Figuring out how to reroute prompts to data centers in colder climates
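The writeup doesn't describe how the rerouting was eventually solved. At its simplest, the selection step is a minimum over current temperatures. The sketch below assumes readings have already been fetched from some weather source; `DataCenter`, `coldest_data_center` and the region names are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class DataCenter:
    name: str                        # hypothetical region identifier
    temperature_c: Optional[float]   # latest outdoor reading; None if unavailable


def coldest_data_center(centers: Iterable[DataCenter], default: str) -> str:
    """Return the name of the coldest center with a known temperature.

    Falls back to `default` when no center has a usable reading.
    """
    known = [c for c in centers if c.temperature_c is not None]
    if not known:
        return default
    return min(known, key=lambda c: c.temperature_c).name
```

Skipping centers with no reading, rather than treating them as cold, avoids routing traffic on missing data. A production router would likely also weigh latency and availability, which this sketch ignores.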

Accomplishments that we're proud of

- Building something that actively tackles a real environmental problem facing our planet

What we learned

- Learned how AI efficiency extends beyond latency and accuracy to include energy and water usage at data-center scale

- Gained experience designing a hybrid AI architecture combining a local LLM with cloud APIs

- Discovered how prompt optimization and token reduction can meaningfully reduce compute when scaled to billions of requests

- Learned to work with real-world constraints (uncertain metrics, variability across data centers, sustainability trade-offs)

- Improved our ability to justify technical choices with data rather than assumptions

- Practiced communicating complex AI sustainability concepts clearly to both technical and non-technical audiences

- Realized the importance of honest framing and caveats when working with emerging or imperfect metrics
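The scaling argument is plain arithmetic: tokens saved per request times the number of requests, converted to energy with a per-token estimate and to cooling water with a Water Usage Effectiveness (WUE) figure in liters per kWh. The sketch below leaves both conversion factors as inputs, since the writeup gives no measured values; the function names are hypothetical.

```python
def tokens_saved(requests: int, tokens_before: float, tokens_after: float) -> float:
    """Total tokens avoided across `requests` calls (never negative)."""
    if requests < 0:
        raise ValueError("requests must be non-negative")
    return requests * max(tokens_before - tokens_after, 0.0)


def energy_saved_wh(total_tokens_saved: float, wh_per_token: float) -> float:
    """Convert avoided tokens to watt-hours using a caller-supplied estimate.

    `wh_per_token` depends on the model, hardware and data center, so no
    default is given here.
    """
    return total_tokens_saved * wh_per_token


def water_saved_liters(energy_wh: float, wue_l_per_kwh: float) -> float:
    """Estimate cooling water avoided from energy saved and a WUE figure (L/kWh)."""
    return energy_wh / 1000.0 * wue_l_per_kwh
```

For example, trimming a request from 120 to 70 tokens across 1,000,000,000 requests avoids 50,000,000,000 tokens of processing; how many watt-hours and liters that represents depends entirely on the factors you plug in.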

What's next for H2Optimize

We want to upgrade our browser-cached preprocessing models.

Built With

- flask

- gemini-api

- llama

- python

- react

- tailwind-css

- typescript

- vite
