Inspiration

As the pandemic begins to subside, stores have begun to reopen, but many people have grown comfortable with having technology at their fingertips while shopping. This raises the question: how can we use technology to create an immersive in-store experience?

Another important issue for both retailers and consumers is miscommunication. How big is this couch, really? Will this chair fit under my desk? Does my backpack have enough space to hold this? Is this ethically sourced? Is there anything else just like this?

InvestigatAR aims to bridge the gap between technology and the in-store experience, and to reduce this miscommunication, through a mobile application powered by machine learning, AR, and crowdsourcing. InvestigatAR lets customers analyze products and make informed decisions using our AR features, reviews, sustainability scores, and more!

InvestigatAR has three guiding principles:

- Sensibility

- Sustainability

- Scalability

What it does

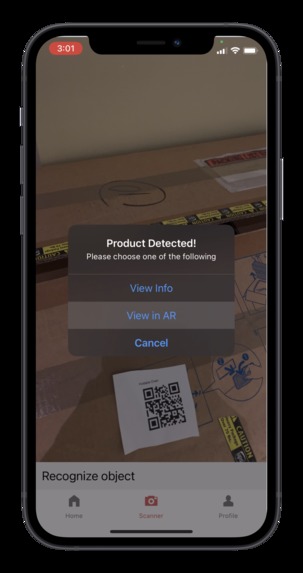

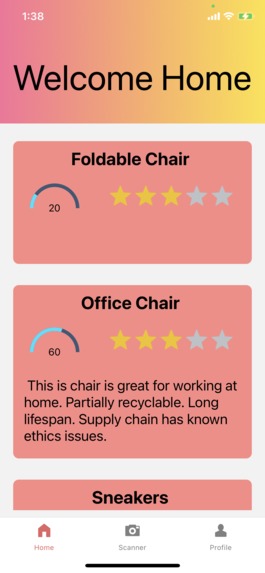

By scanning QR codes in our app, users can see a full-scale AR model of the assembled product right at their fingertips. Through our Spring Boot server and NCR's Catalog API, the app shows the product's name, reviews, description, pricing, and other critical information. Don’t know what a product is? Scan it. Using image recognition and object detection models, we can show you the closest matching products AND tell you whether the product is sustainable for the environment, comes from ethical sources, and has good reviews.
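The writeup doesn't spell out how recognized labels are matched to catalog products. Here is a minimal sketch, assuming the recognizer returns label/confidence pairs and each catalog item carries a set of tags (the `CatalogItem` type and `closest_matches` function are hypothetical, not part of NCR's API). It scores each item by the summed confidence of the labels it shares, then keeps the top matches.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogItem:
    # Hypothetical catalog record; real fields would come from the catalog service.
    item_id: str
    name: str
    tags: set[str] = field(default_factory=set)


def closest_matches(predictions: dict[str, float],
                    catalog: list[CatalogItem],
                    top_k: int = 3) -> list[tuple[CatalogItem, float]]:
    """Rank catalog items by the summed confidence of predicted labels they share."""
    scored = []
    for item in catalog:
        # Sum confidences for every predicted label that appears in the item's tags.
        score = sum(conf for label, conf in predictions.items() if label in item.tags)
        if score > 0:
            scored.append((item, score))
    # Highest score first; ties broken by name for a deterministic order.
    scored.sort(key=lambda pair: (-pair[1], pair[0].name))
    return scored[:top_k]


if __name__ == "__main__":
    catalog = [
        CatalogItem("1", "Denim Jacket", {"jacket", "denim", "clothing"}),
        CatalogItem("2", "Rain Coat", {"jacket", "waterproof", "clothing"}),
        CatalogItem("3", "Desk Chair", {"chair", "furniture"}),
    ]
    predictions = {"jacket": 0.9, "denim": 0.7, "clothing": 0.95}
    for item, score in closest_matches(predictions, catalog):
        print(item.name, round(score, 2))
```

Tag overlap is just the simplest option. An embedding-similarity search would be a natural upgrade if the catalog grew large.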

How we built it

We'll start from the cloud and work our way down.

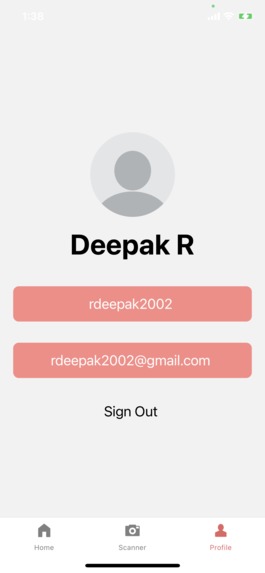

The backend is built with Spring Boot, Spring Security, MongoDB, JSON Web Tokens (JWT) for authentication, and Unirest for Java to communicate with NCR’s Catalog API, all deployed to Heroku. In plain terms, the server sends and collects the information our cross-platform app needs to display everything on screen: user profiles, reviews, AR models, machine-learning-powered analysis, and more.
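In the real server, Spring Security handles tokens in Java. The Python sketch below is only an illustration, not the team's code. It shows what an HS256 JSON Web Token is: a base64url-encoded header and claims joined by a dot, then signed with HMAC-SHA256. The secret and claim names are placeholders, and a production service should rely on a vetted JWT library rather than hand-rolled code like this.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_encode(data: bytes) -> str:
    # JWTs use unpadded base64url encoding.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(segment: str) -> bytes:
    # Restore the padding that encoding stripped.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_jwt(claims: dict, secret: bytes) -> str:
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url_encode(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url_encode(signature)}"


def verify_jwt(token: str, secret: bytes) -> dict:
    """Return the claims if the signature and expiry check out, else raise ValueError."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
    except ValueError:
        raise ValueError("malformed token") from None
    header = json.loads(_b64url_decode(header_b64))
    if header.get("alg") != "HS256":
        # Reject unexpected algorithms (such as "none") instead of trusting the header.
        raise ValueError("unexpected algorithm")
    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("bad signature")
    claims = json.loads(_b64url_decode(payload_b64))
    if "exp" in claims and claims["exp"] < time.time():
        raise ValueError("token expired")
    return claims


if __name__ == "__main__":
    secret = b"demo-secret"  # placeholder; load from configuration in practice
    token = sign_jwt({"sub": "alice", "exp": int(time.time()) + 3600}, secret)
    print(verify_jwt(token, secret)["sub"])
```

Because the server only needs the shared secret to verify a token, any backend instance can authenticate a request without a session lookup.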

The frontend is built with TypeScript, React Native, Redux, Google ML, and VIRO AR. First, we authenticate users against our database and let them register if they do not have an account. Users can then scan QR codes or objects to see the AR models and reviews associated with each product, and they can view a history of everything they’ve scanned. Because it is built with React Native, the app runs on both Android and iOS.
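Here is a rough sketch of the register-or-log-in flow. `UserStore` is a hypothetical in-memory stand-in, written in Python rather than Spring Security, and the iteration count is an assumption. The point it illustrates is that the database stores a per-user salt plus a PBKDF2-SHA256 hash, never the plain password.

```python
import hashlib
import hmac
import os


class UserStore:
    """Hypothetical in-memory stand-in for the user collection in the database."""

    ITERATIONS = 200_000  # assumed work factor; tune for your hardware

    def __init__(self) -> None:
        # username -> (salt, password hash)
        self._users: dict[str, tuple[bytes, bytes]] = {}

    def _hash(self, password: str, salt: bytes) -> bytes:
        return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, self.ITERATIONS)

    def register(self, username: str, password: str) -> None:
        if username in self._users:
            raise ValueError("username already taken")
        salt = os.urandom(16)  # fresh random salt per user
        self._users[username] = (salt, self._hash(password, salt))

    def authenticate(self, username: str, password: str) -> bool:
        record = self._users.get(username)
        if record is None:
            return False  # unknown user: the app can offer registration instead
        salt, stored = record
        # Recompute the hash with the stored salt and compare in constant time.
        return hmac.compare_digest(stored, self._hash(password, salt))


if __name__ == "__main__":
    store = UserStore()
    if not store.authenticate("alice", "hunter2"):
        store.register("alice", "hunter2")
    print(store.authenticate("alice", "hunter2"))
```

After a successful `authenticate`, the server would issue a token like the JWT sketched for the backend, and the app would attach it to later requests.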

Challenges we ran into

Two big things: incorporating sponsor APIs and getting object detection into the mobile app. Integrating the NCR API took a lot of trial and error and calls with NCR engineers before it finally became a core part of our server. From the ashes rose the phoenix (or just a small bird) of the server side. Cataloging data adds a huge benefit to our application: retailers can update item inventory, descriptions, names, and more.

We initially used built-in React Native libraries to serve object detection predictions. Unfortunately, our application could neither object nor detect. After spending many hours (i.e., more time than should ever be spent) trying to fix TensorFlow Lite dependencies for React Native, we did what any sane team would do and rewrote the entire codebase to use TensorFlow.js instead. And guess what? That didn't work either.

So instead of running TensorFlow, we switched to calling Clarifai, an API that hosts a pretrained ML model for image classification. Now we are able to recognize clothing AND display AR models of what we have in our catalog. Pretty neat. We then wanted to improve this feature by reducing our dependence on third-party APIs, so we built our own Flask API that serves predictions from a state-of-the-art PyTorch YOLO object detection model. After a bit of debugging, we successfully integrated this endpoint into our platform.
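A YOLO-style detector typically emits many overlapping candidate boxes. A prediction endpoint usually filters them with non-maximum suppression (NMS) before returning results. The writeup doesn't describe the Flask service's post-processing, and common YOLO implementations already include NMS, so this pure-Python sketch only illustrates the technique. The `Detection` type and both thresholds are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    # Box corners in pixels: (x1, y1) top-left, (x2, y2) bottom-right.
    x1: float
    y1: float
    x2: float
    y2: float
    score: float
    label: str


def iou(a: Detection, b: Detection) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a.x2, b.x2) - max(a.x1, b.x1))
    inter_h = max(0.0, min(a.y2, b.y2) - max(a.y1, b.y1))
    inter = inter_w * inter_h
    area_a = (a.x2 - a.x1) * (a.y2 - a.y1)
    area_b = (b.x2 - b.x1) * (b.y2 - b.y1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(dets: list[Detection],
                        iou_threshold: float = 0.5,
                        score_threshold: float = 0.25) -> list[Detection]:
    """Greedy per-class NMS: keep the best box, drop same-label boxes that overlap it."""
    # Discard low-confidence boxes, then visit the rest from most to least confident.
    candidates = sorted((d for d in dets if d.score >= score_threshold),
                        key=lambda d: d.score, reverse=True)
    kept: list[Detection] = []
    for det in candidates:
        # Keep a box unless an already-kept box with the same label overlaps it too much.
        if all(k.label != det.label or iou(k, det) < iou_threshold for k in kept):
            kept.append(det)
    return kept
```

Running NMS per class means a shirt box never suppresses an overlapping bag box, which matters when a shopper holds several items in one frame.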

Accomplishments that we're proud of

Augmented reality and our server. Getting our app to display a variety of AR models, with a server backing all of those requests, was extremely cool and took the vast majority of our time.

We now have a variety of AR objects and sustainability scores! The sustainability scores were key to our design: we want users to shop for alternatives that help the environment, and that is much easier when they can see exactly what those alternatives are.
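One way sustainability scores could surface alternatives is shown below. This is a hypothetical Python sketch: the `Product` fields and the 0-100 score scale are assumptions, since the writeup doesn't say how scores are stored. It suggests same-category products that score higher than the scanned one, most sustainable first.

```python
from dataclasses import dataclass


@dataclass
class Product:
    # Hypothetical record; the source does not specify how scores are stored.
    name: str
    category: str
    sustainability: float  # assumed 0-100 scale, higher is better
    rating: float          # assumed average review rating, 0-5


def greener_alternatives(scanned: Product, catalog: list[Product],
                         limit: int = 3) -> list[Product]:
    """Same-category products that score higher on sustainability than the scanned one."""
    better = [p for p in catalog
              if p.category == scanned.category
              and p.name != scanned.name
              and p.sustainability > scanned.sustainability]
    # Most sustainable first; review rating breaks ties.
    better.sort(key=lambda p: (p.sustainability, p.rating), reverse=True)
    return better[:limit]


if __name__ == "__main__":
    scanned = Product("Fast Tee", "shirt", 40, 4.0)
    catalog = [
        scanned,
        Product("Organic Tee", "shirt", 85, 4.5),
        Product("Recycled Tee", "shirt", 60, 3.9),
        Product("Bamboo Chair", "chair", 95, 4.9),
    ]
    print([p.name for p in greener_alternatives(scanned, catalog)])
```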

As a side note, our UI also took a lot of time, and most people won’t notice (which is the point). Shoutout to Vivek for (mostly) single-handedly designing it.

What we learned

Two of our team members had no experience with any of our tech stack before this hackathon. By the end, they had become crucial contributors, learning React Native, Spring Boot, and UI design.

Our other two team members learned the importance of friendship. On a more serious note, they learned how to make object recognition on mobile devices user-friendly, connect it to our server, and display the results, all in the span of 6 hours.

What's next for InvestigatAR

Next, we want to create better classification models, including user input on how accurate the rankings are, and to add many more AR models covering all different brands, types, and shapes of every object imaginable. We are also looking into web scraping HowGood, the world's largest sustainability database. Product reviews could also be labeled with sentiment analysis so users can filter by positive or negative reactions. Oh, and we'll probably sleep for a week first.
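Sentiment labeling is still future work. As a rough illustration of the label-then-filter idea only, here is a toy lexicon-based classifier in Python. The word lists are made up for the example, and a real version would more likely use a trained sentiment model.

```python
import re

# Toy word lists for illustration only; a real system would use a trained model.
POSITIVE = {"great", "love", "comfortable", "sturdy", "excellent", "perfect"}
NEGATIVE = {"broke", "cheap", "flimsy", "terrible", "uncomfortable", "returned"}


def sentiment(review: str) -> str:
    """Label a review 'positive', 'negative', or 'neutral' by counting lexicon hits."""
    words = re.findall(r"[a-z']+", review.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def filter_reviews(reviews: list[str], wanted: str) -> list[str]:
    """Keep only the reviews whose label matches `wanted`."""
    return [r for r in reviews if sentiment(r) == wanted]


if __name__ == "__main__":
    reviews = [
        "Love this chair, very comfortable!",
        "Arm broke after a week. Terrible.",
        "It is a chair.",
    ]
    print(filter_reviews(reviews, "negative"))
```

Matching whole words (not substrings) keeps "uncomfortable" from counting as a hit for "comfortable".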

Built With

- augmented-reality

- clarifai

- flask

- google-ml

- heroku

- javascript

- jwt

- mongodb

- ncr-catalog-api

- python

- pytorch

- react-native

- redux

- restapi

- spring-boot

- tensorflow

- typescript

- viro
