Inspiration

Networking is essential at professional events. At large gatherings, where there are hundreds of people to meet in a matter of a few hours, it can be difficult to find the person you'd collaborate or connect with best. Many of us have experienced this personally: we've gone to networking events and never met the co-founder or expert we were looking for.

KayEcho.AI is our solution to this exact issue. Our full-stack web-app helps you network more effectively by helping you prepare in advance for large events!

What it does

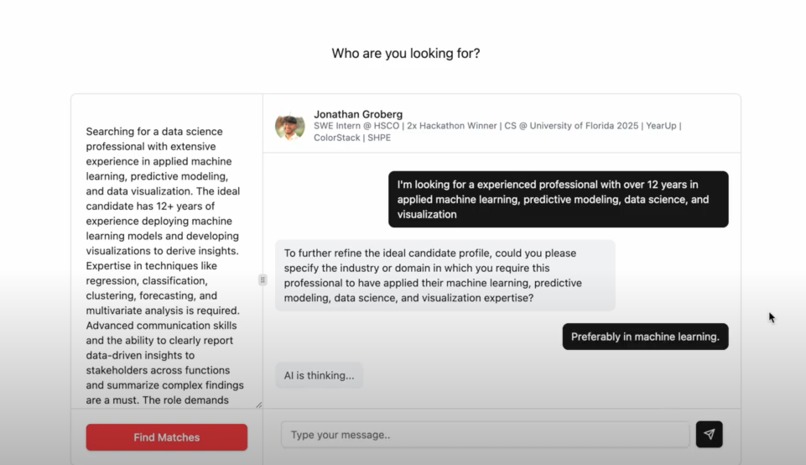

Once you arrive on KayEcho.AI's landing page, you paste in the link to your LinkedIn profile to help the app learn more about you. Then, you speak with our Anthropic API-powered chatbot to figure out the type of person you're hoping to meet at the professional event. The chatbot repeatedly asks you questions to refine the description of the ideal profile that you're looking for. You can also edit the working description of the ideal profile manually if that's more convenient.

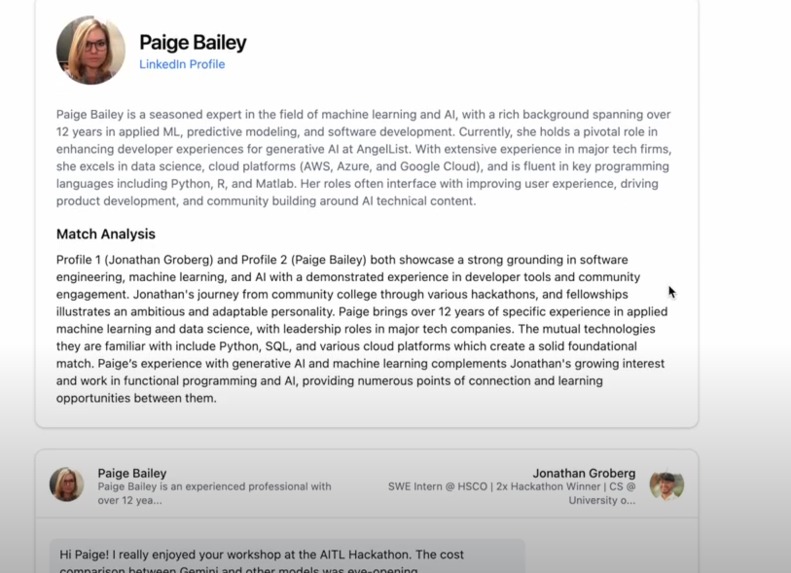

Once you've figured out the type of person you're hoping to meet, you can press "Find Matches". The LLM then uses retrieval-augmented generation (RAG) over the LinkedIn profiles of all the event attendees to find the best match for your description. Next, it generates a hypothetical conversation between you and your match using data scraped from both of your LinkedIn profiles, ultimately giving you a list of ice-breakers you can use when you meet that person in real life, or an ice-breaker email. You can also just read over the conversation to get a better idea of what your interaction with the person will be like when you meet them at the event.

How we built it

Our front end was built with React, Tailwind, Astro, and Shadcn. We chose a minimalist design so that the user has an easy-going experience as they progress through the app. We designed the front end as a single-page application to create an engaging user experience, and deployed it to Vercel.

Our back end was built in multiple steps. The first step involved scraping profiles from the hackathon using LinkedIn’s API and extracting essential keywords and relevant professional insights to build a structured dataset. We stored this dataset in MongoDB Atlas and vectorized each profile for Atlas Vector Search, enabling efficient similarity-based retrieval for downstream tasks, especially our Retrieval-Augmented Generation (RAG) pipeline.
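As a rough illustration of the similarity-based retrieval step, here is a minimal sketch, not our actual code. It builds an Atlas `$vectorSearch` aggregation pipeline and, for testing without a cluster, ranks profiles in memory by cosine similarity. The index name `profile_index`, the field names `embedding` and `name`, and the helper names are all assumptions made for this example.

```python
import math


def build_vector_search_pipeline(query_vector, limit=3, num_candidates=100,
                                 index="profile_index", path="embedding"):
    """Build an Atlas $vectorSearch pipeline.

    The index and path names are hypothetical placeholders.
    """
    return [
        {
            "$vectorSearch": {
                "index": index,
                "path": path,
                "queryVector": list(query_vector),
                "numCandidates": num_candidates,
                "limit": limit,
            }
        },
        # Keep the profile name and the similarity score attached to each hit.
        {"$project": {"_id": 0, "name": 1,
                      "score": {"$meta": "vectorSearchScore"}}},
    ]


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k_local(query_vector, profiles, k=3):
    """In-memory stand-in for the same ranking, for use without a database."""
    ranked = sorted(
        profiles,
        key=lambda p: cosine_similarity(query_vector, p["embedding"]),
        reverse=True,
    )
    return ranked[:k]
```

With a real cluster, the pipeline would be passed to the collection's `aggregate` method. The local function is only there so the ranking logic can be checked offline.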

We then introduced a chat agent, powered by Anthropic’s Claude API and hosted on GCP, to interactively help users craft well-structured, specific prompts describing the profile they are looking for. This iterative refinement with the agent ensures that prompts reach the level of specificity required for precise RAG-based matching. With profiles now accessible via MongoDB Atlas Vector Search, we incorporated an evaluator to verify alignment between the user-provided data and the RAG output. The evaluator iteratively refines prompts until it reaches maximum similarity, ensuring the retrieved profiles closely match the user's objectives.
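The evaluator loop can be pictured roughly as follows. This is a simplified sketch under stated assumptions, not our production code: `retrieve`, `score`, and `refine` are hypothetical callables standing in for the vector search, the similarity check, and the Claude-backed prompt rewrite. The `threshold` and `max_rounds` stopping rules are also assumptions, added so the loop always terminates.

```python
from typing import Callable, List, Tuple


def refine_until_aligned(
    prompt: str,
    retrieve: Callable[[str], List[str]],
    score: Callable[[str, List[str]], float],
    refine: Callable[[str, List[str]], str],
    threshold: float = 0.8,
    max_rounds: int = 5,
) -> Tuple[str, List[str], float]:
    """Retrieve, score, and refine until the score clears the threshold
    or the round budget runs out. Returns the best (prompt, profiles, score)."""
    best: Tuple[str, List[str], float] = (prompt, [], float("-inf"))
    for _ in range(max_rounds):
        profiles = retrieve(prompt)
        current = score(prompt, profiles)
        # Remember the best-scoring round in case later rounds get worse.
        if current > best[2]:
            best = (prompt, profiles, current)
        if current >= threshold:
            break
        # Ask the (hypothetical) agent for a more specific prompt.
        prompt = refine(prompt, profiles)
    return best
```

Capping the number of rounds also bounds how many LLM calls, and therefore tokens, a single match can consume.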

Once we identified the top three candidate profiles, we initiated simulation rounds, leveraging recent posts as focal points. Using the Anthropic-powered agent, we generated a simulation script that maximized relevant insights from conversational exchanges. After each simulation, we applied self-reflection techniques to analyze mutual perspectives and compatibility based on shared interests and experiences. This simulation was repeated across profiles to identify the best match.
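To make the simulation rounds concrete, here is a small sketch of the control flow only. The `simulate` and `reflect` callables are hypothetical stand-ins for the Claude-generated conversation script and the self-reflection scoring step, and the `SimulationResult` type and the numeric compatibility score are assumptions for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SimulationResult:
    candidate: str        # name of the candidate profile
    transcript: str       # simulated conversation text
    compatibility: float  # score produced by the reflection step


def pick_best_match(
    user: Dict,
    candidates: List[Dict],
    simulate: Callable[[Dict, Dict], str],
    reflect: Callable[[str], float],
) -> SimulationResult:
    """Run one simulated conversation per candidate and keep the most compatible."""
    if not candidates:
        raise ValueError("at least one candidate profile is required")
    results = []
    for candidate in candidates:
        transcript = simulate(user, candidate)
        results.append(
            SimulationResult(candidate["name"], transcript, reflect(transcript))
        )
    return max(results, key=lambda r: r.compatibility)
```

In this sketch each candidate gets exactly one simulation. Running several per candidate and averaging the scores would be a natural extension.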

Upon determining the optimal profile match, we used the LLM again to extract actionable insights and generate tailored communication strategies, such as an introductory email and a conversational icebreaker.

Challenges we ran into

Prompt engineering was by far the hardest part of this project. We used an LLM to process user input, generate the ideal user profile, match the user to an ideal connection, generate a genuine sample conversation, and lastly come up with ice-breakers, so we had to do a lot of prompt engineering to ensure that the responses from the API were concise and relevant. It took a lot of trial and error to get the responses we wanted from the LLM while keeping token usage sustainable.

Accomplishments that we're proud of

We're really happy that we were able to use the Anthropic API to its fullest extent. For example, instead of just generating an ideal profile based on one round of user input, we developed a system that repeatedly asks the user to add more details so that the ideal profile gets iteratively more relevant and useful.

We're also proud to have split up the work effectively. Given that our product consists of many stages, each of the four of us worked on a specific part, and we later worked together to integrate everything. Before starting any of the coding work, we spent a lot of time discussing the architecture of our application and our approach to LLM prompting to ensure that all parts of the app would fit well together.

What we learned

Through this project, we learned how many different ways an LLM can be applied within a single app. Prior to this, we had seen LLMs used once or twice within an app, as a supplemental feature or for just one step. The process of developing KayEcho.AI, where every step is powered by Claude through careful and selective prompts, taught us that AI can help complete a large assortment of tasks. In particular, an LLM can enhance the user experience by doing everything through a comfortable conversation.

What's next for KayEcho.AI

The next step for us is partnering with large event organizers and gathering a larger dataset of profiles to feed into our RAG pipeline so that we can most effectively enhance people's networking experience at what could otherwise be overwhelming, unproductive events.
