Inspiration

Bocchi

What it does

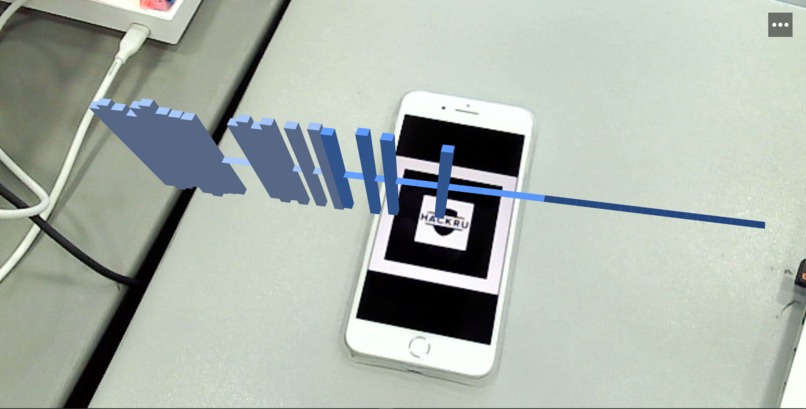

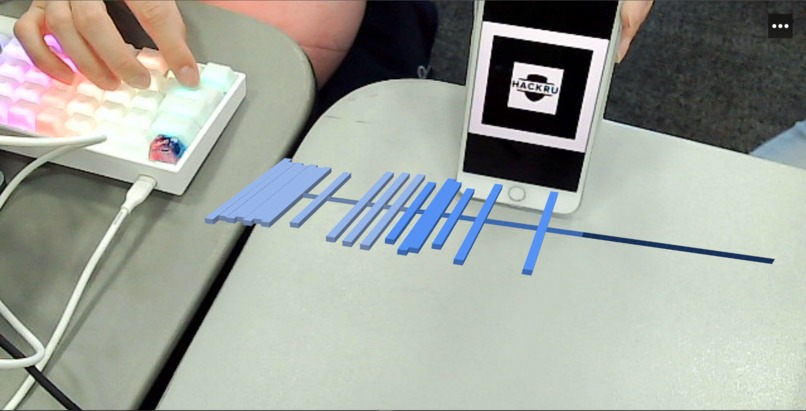

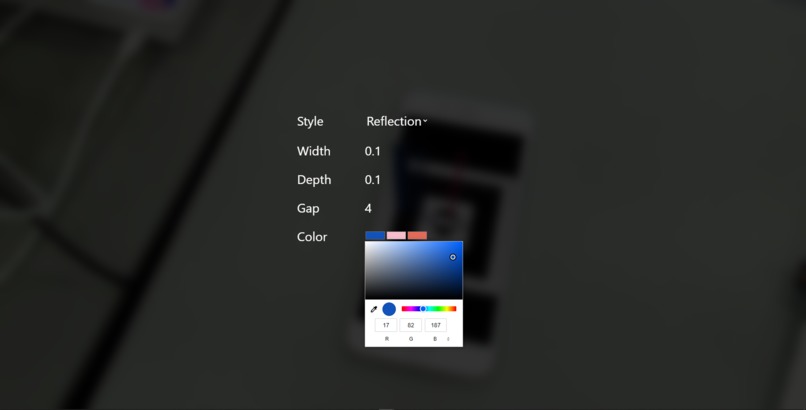

This application is designed to take audio data from a user's microphone and visualize the different audio levels in a customizable AR experience on the web.

How we built it

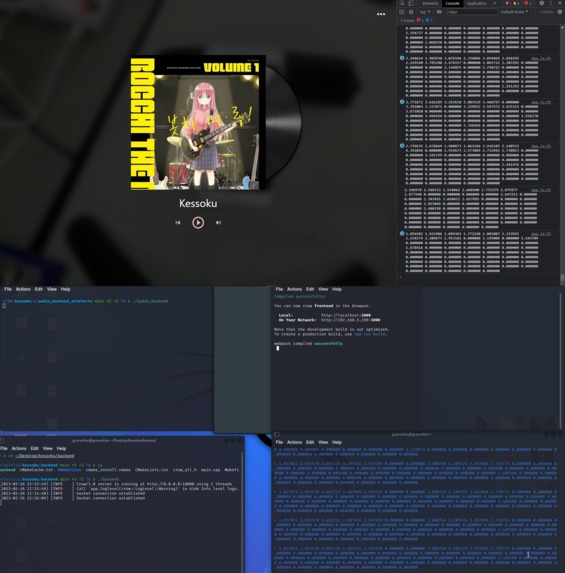

Frontend: React.js, TailwindCSS, AR.js/A-Frame

Backend: C++, Crow, JUCE

Misc: GitHub, Figma

This project takes the user's microphone audio and webcam feed and displays an AR audio visualization on a surface. The work was therefore split between two teams:

- frontend team to set up AR.js

- backend team to handle parsing audio data

The frontend displays the webcam feed and searches for our marker pattern, which anchors the AR.js object. We then worked out several equations to position objects and move them around in the 3D AR space.

The backend uses C++ to capture audio data efficiently and passes it through low-level pipes between the audio server and the web server. The web server then sends the audio data to the frontend over WebSockets.
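To make the pipe step concrete, here is a minimal sketch of sending one frame of audio levels through a POSIX pipe. It is not our actual server code: the frame layout (a 32-bit count followed by raw floats) and the names `sendLevels`, `receiveLevels`, and `roundTripThroughPipe` are assumptions made up for this illustration, and it only builds on POSIX systems such as Linux or macOS.

```cpp
#include <unistd.h>   // pipe, read, write, close (POSIX only)

#include <cstddef>
#include <cstdint>
#include <stdexcept>
#include <vector>

namespace {

// Keep writing until the whole buffer has gone into the pipe.
void writeAll(int fd, const void* data, std::size_t size) {
    const char* p = static_cast<const char*>(data);
    while (size > 0) {
        const ssize_t n = ::write(fd, p, size);
        if (n < 0) throw std::runtime_error("write failed");
        p += n;
        size -= static_cast<std::size_t>(n);
    }
}

// Keep reading until the requested number of bytes has arrived.
void readAll(int fd, void* data, std::size_t size) {
    char* p = static_cast<char*>(data);
    while (size > 0) {
        const ssize_t n = ::read(fd, p, size);
        if (n <= 0) throw std::runtime_error("read failed or pipe closed");
        p += n;
        size -= static_cast<std::size_t>(n);
    }
}

}  // namespace

// Hypothetical frame layout: a uint32_t count, then that many floats.
void sendLevels(int fd, const std::vector<float>& levels) {
    const std::uint32_t count = static_cast<std::uint32_t>(levels.size());
    writeAll(fd, &count, sizeof(count));
    writeAll(fd, levels.data(), count * sizeof(float));
}

std::vector<float> receiveLevels(int fd) {
    std::uint32_t count = 0;
    readAll(fd, &count, sizeof(count));
    std::vector<float> levels(count);
    readAll(fd, levels.data(), count * sizeof(float));
    return levels;
}

// Demo helper: write a small frame into a pipe and read it back in the
// same process. A real setup would have the audio server on the write
// end and the web server on the read end.
std::vector<float> roundTripThroughPipe(const std::vector<float>& levels) {
    int fds[2];
    if (::pipe(fds) != 0) throw std::runtime_error("pipe failed");
    sendLevels(fds[1], levels);
    ::close(fds[1]);
    std::vector<float> result = receiveLevels(fds[0]);
    ::close(fds[0]);
    return result;
}
```

Calling `roundTripThroughPipe({0.1f, 0.5f, 0.9f})` hands back the same three values. The single-process round trip only works for frames that fit in the kernel's pipe buffer; with larger frames the writer would block until a separate reader drains the pipe.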

Challenges we ran into

We wanted a low-level programming language for our backend to keep latency with the frontend low, so we chose Crow, a C++ backend framework. The biggest issue was not the framework itself but setting up the dependencies it needs. Many modern C++ applications are built on Boost. After hours of trying to compile, we could not get Boost working on Windows, though we did get it working on Linux. We also ran into some challenges on macOS, since one of our teammates used a Mac.

Another challenge was the conceptual understanding of sound waves and DSP. None of us are audio engineers, so we had to research how sound can be analyzed as a sum of sine waves and how its frequencies can be calculated with a fast Fourier transform (FFT).
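As an illustration of the idea, and not the routine our backend actually ran, here is a small textbook radix-2 Cooley-Tukey FFT that finds the strongest frequency in a block of samples. The names `fft`, `makeSine`, and `dominantFrequency` are made up for this sketch, and it assumes the number of samples is a power of two.

```cpp
#include <cmath>
#include <complex>
#include <cstddef>
#include <vector>

using Complex = std::complex<double>;

// Recursive radix-2 Cooley-Tukey FFT, computed in place.
// Assumes a.size() is a power of two.
void fft(std::vector<Complex>& a) {
    const std::size_t n = a.size();
    if (n <= 1) return;

    // Split into even- and odd-indexed samples and transform each half.
    std::vector<Complex> even(n / 2), odd(n / 2);
    for (std::size_t i = 0; i < n / 2; ++i) {
        even[i] = a[2 * i];
        odd[i] = a[2 * i + 1];
    }
    fft(even);
    fft(odd);

    // Combine the halves using the twiddle factors e^(-2*pi*i*k/n).
    const double pi = std::acos(-1.0);
    for (std::size_t k = 0; k < n / 2; ++k) {
        const Complex t = std::polar(1.0, -2.0 * pi * k / n) * odd[k];
        a[k] = even[k] + t;
        a[k + n / 2] = even[k] - t;
    }
}

// Generate `count` samples of a pure sine wave for testing.
std::vector<double> makeSine(double frequencyHz, double sampleRate,
                             std::size_t count) {
    const double pi = std::acos(-1.0);
    std::vector<double> samples(count);
    for (std::size_t i = 0; i < count; ++i) {
        samples[i] = std::sin(2.0 * pi * frequencyHz * i / sampleRate);
    }
    return samples;
}

// Return the frequency (Hz) of the strongest bin, ignoring the DC bin.
// Only bins below n/2 are checked; the upper half mirrors the lower
// half for real-valued input.
double dominantFrequency(const std::vector<double>& samples,
                         double sampleRate) {
    const std::size_t n = samples.size();
    if (n < 4) return 0.0;  // too few samples to say anything useful

    std::vector<Complex> spectrum(samples.begin(), samples.end());
    fft(spectrum);

    std::size_t best = 1;
    for (std::size_t k = 1; k < n / 2; ++k) {
        if (std::abs(spectrum[k]) > std::abs(spectrum[best])) best = k;
    }
    return best * sampleRate / n;
}
```

For example, `dominantFrequency(makeSine(64.0, 1024.0, 1024), 1024.0)` picks out the 64 Hz bin. Each bin `k` corresponds to the frequency `k * sampleRate / n`, so a visualizer can group neighbouring bins into bands and drive one visual element per band.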

Poorly maintained and documented AR libraries made it much harder for the frontend team to translate simple HTML into React for AR. We spent a lot of time trying different versions and attribute placements, and ended up piecing together a working solution from multiple GitHub issues.

Accomplishments that we're proud of

We were able to connect the frontend and backend smoothly using WebSockets. Although we were worried about potential WebSocket lag, we ended up with a service that runs efficiently on both the frontend and the backend.

What we learned

The frontend team took a leap of faith into AR with AR.js. The new environment was challenging and unforgiving to work in.

On the backend team, we got a thorough refresher on C++ and on how modern C++ applications are compiled and integrated into developer environments. In particular, we put into practice low-level concepts we learned in Systems Development, such as pipes. It was also enlightening to see that C++ can offer an easy-to-use, intuitive HTTP and WebSocket server, since all of the team members come from full-stack JavaScript backgrounds.

What's next for Kessoku

With more time, we would love to let users upload their own music to visualize, and to take on more ambitious features such as Spotify integration.

Built With

- aframe

- ar.js

- c++

- crow

- juce

- react.js

- websockets
