Inspiration

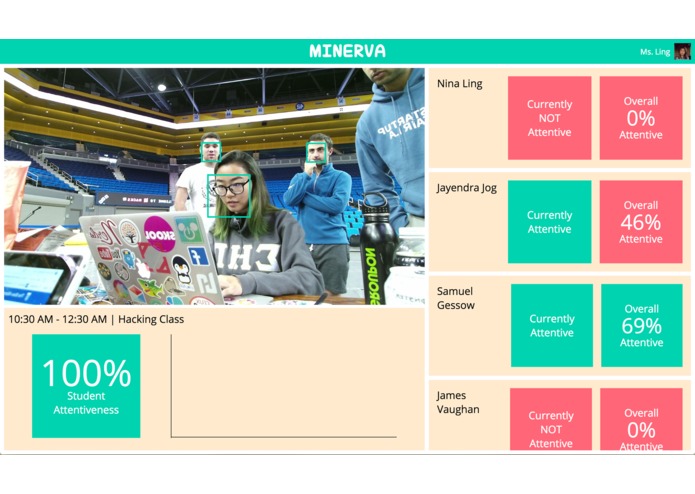

In the 2015-16 school year, roughly 1 in 4 high school seniors in the Los Angeles school district didn’t graduate. [1] It’s not so much that they failed; the system failed them. It’s not the teachers’ fault either: with federal funding decreasing and class sizes increasing, teachers are working harder than ever and simply can’t devote enough attention to each individual student. High-achieving students are often bored in class, and other students don’t get the personalized care they need to succeed. Without enough time to engage each student individually, teachers can unknowingly overlook the problems their students are facing. That’s where Minerva comes in: targeted machine learning, facial recognition, and sentiment analysis give teachers insight into the needs of each student, even in the face of increasing class sizes and decreasing budgets.

Technical Details

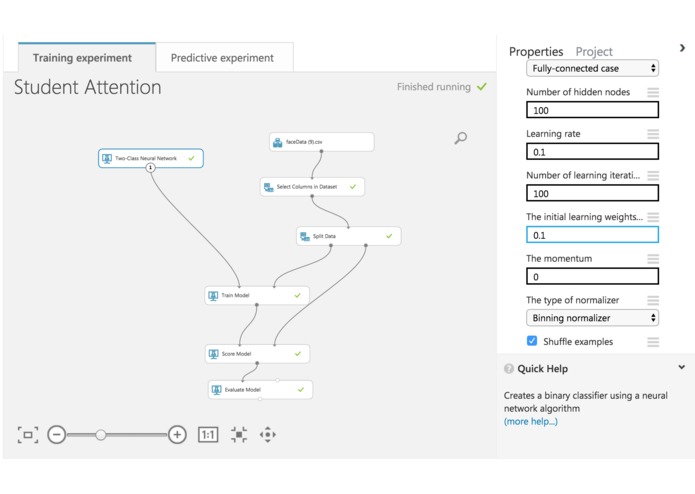

ML Setup:

- Microsoft Bing Image Search API was used to automate collecting and classifying the images used as training data

- Microsoft Cognitive Services Face API took each image and generated the numeric values for it (i.e., the facial attributes and the emotions)

- Microsoft Azure Machine Learning Workspace was used to train a Two-Class Binning Normalizer Neural Network, using the Cognitive Services Face API output as the training data

Training Data: roll, yaw, pitch, anger, contempt, disgust, fear, happiness, neutral, sadness, surprise <=> label
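As a minimal sketch of how one training row could be assembled, the snippet below flattens a single detected face into the eleven values listed above plus a label. It assumes the face is a dictionary with a `faceAttributes` object holding `headPose` and `emotion`; the function names, the example values, and the meaning of the label (1 for engaged, 0 otherwise) are hypothetical and not taken from the project.

```python
from typing import List

# Feature order follows the "Training Data" line above.
HEAD_POSE_KEYS = ["roll", "yaw", "pitch"]
EMOTION_KEYS = ["anger", "contempt", "disgust", "fear",
                "happiness", "neutral", "sadness", "surprise"]


def face_to_features(face: dict) -> List[float]:
    """Flatten one detected face into the 11-value feature list."""
    attrs = face["faceAttributes"]  # assumed response shape
    head_pose = attrs["headPose"]
    emotion = attrs["emotion"]
    return ([float(head_pose[k]) for k in HEAD_POSE_KEYS]
            + [float(emotion[k]) for k in EMOTION_KEYS])


def to_training_row(face: dict, label: int) -> List[float]:
    """Append the class label (assumed 1 = engaged, 0 = not) to the features."""
    return face_to_features(face) + [float(label)]


# Made-up example values, for illustration only.
example_face = {
    "faceAttributes": {
        "headPose": {"roll": 2.1, "yaw": -14.5, "pitch": 0.0},
        "emotion": {"anger": 0.0, "contempt": 0.0, "disgust": 0.0,
                    "fear": 0.0, "happiness": 0.85, "neutral": 0.15,
                    "sadness": 0.0, "surprise": 0.0},
    }
}

row = to_training_row(example_face, label=1)
```

Keeping the key order in two constants means the same ordering can be reused at prediction time, so the model always sees the columns in the order it was trained on.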

Application Workflow:

- Microsoft Kinect is used to take a photo of the classroom every 3 seconds; each photo is sent to Microsoft Azure Storage

- Microsoft Azure Storage keeps each photo for 3 seconds (during which the React front end and the Azure function can access it) and then deletes it, which protects privacy and makes room for the next photo

- Microsoft Azure Functions gets the photo URL from Microsoft Azure Storage

- Microsoft Cognitive Services Face API is used to do sentiment analysis, returning the Facial Attributes (roll, yaw, pitch) and Emotions (anger, contempt, disgust, fear, happiness, neutral, sadness, surprise); these values are then passed to Microsoft Azure Machine Learning Workspace to get the classification and classification probability

- Microsoft Cognitive Services Face API’s Find Similar feature is used to associate each user’s face with their classification information

- React.js is used on the front end to dynamically display all of the information
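The per-photo part of this workflow can be sketched roughly as follows. This is an illustration under stated assumptions, not the project's actual Azure function: `process_photo` and the three callables it takes (`detect_faces`, `classify`, `find_student`) are hypothetical stand-ins for the Face API call, the trained model endpoint, and the Find Similar lookup, and the stub implementations return made-up values.

```python
from typing import Callable, Iterable, List, Tuple

HEAD_POSE_KEYS = ["roll", "yaw", "pitch"]
EMOTION_KEYS = ["anger", "contempt", "disgust", "fear",
                "happiness", "neutral", "sadness", "surprise"]


def face_to_features(face: dict) -> List[float]:
    """Flatten head pose and emotion scores into one feature list."""
    attrs = face["faceAttributes"]  # assumed response shape
    return ([float(attrs["headPose"][k]) for k in HEAD_POSE_KEYS]
            + [float(attrs["emotion"][k]) for k in EMOTION_KEYS])


def process_photo(
    photo_url: str,
    detect_faces: Callable[[str], Iterable[dict]],
    classify: Callable[[List[float]], Tuple[int, float]],
    find_student: Callable[[str], str],
) -> List[dict]:
    """Run one classroom photo through detect -> classify -> identify."""
    results = []
    for face in detect_faces(photo_url):
        label, probability = classify(face_to_features(face))
        results.append({
            "student": find_student(face["faceId"]),
            "label": label,
            "probability": probability,
        })
    return results


# --- Hypothetical stubs so the sketch runs without any cloud service ---
def fake_detect(photo_url: str) -> List[dict]:
    return [{
        "faceId": "face-1",
        "faceAttributes": {
            "headPose": {"roll": 0.0, "yaw": 5.0, "pitch": 0.0},
            "emotion": {"anger": 0.0, "contempt": 0.0, "disgust": 0.0,
                        "fear": 0.0, "happiness": 0.9, "neutral": 0.1,
                        "sadness": 0.0, "surprise": 0.0},
        },
    }]


def fake_classify(features: List[float]) -> Tuple[int, float]:
    # Placeholder rule, NOT the trained network: threshold on happiness.
    happiness = features[7]
    return (1 if happiness >= 0.5 else 0, happiness)


def fake_find_student(face_id: str) -> str:
    return {"face-1": "student-A"}.get(face_id, "unknown")


rows = process_photo("https://example.invalid/classroom.jpg",
                     fake_detect, fake_classify, fake_find_student)
```

Passing the three steps in as callables keeps the flow testable offline; in a real deployment each stub would be replaced by a call to the corresponding service, and `rows` is the kind of per-student record the front end could display.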

Takeaways

- Get better training data: pitch, anger, contempt, disgust, fear, sadness, surprise were almost always 0

- Kinect is not worth using at a Hackathon unless it’s integral to the application
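The first takeaway could be caught before training with a quick check of how often each feature is zero. The snippet below is a minimal sketch of such a check; the function names, the 0.95 threshold, and the two sample rows are made up for illustration and are not the project's data.

```python
from typing import Dict, List, Sequence

FEATURES = ["roll", "yaw", "pitch", "anger", "contempt", "disgust",
            "fear", "happiness", "neutral", "sadness", "surprise"]


def zero_fraction(rows: Sequence[Sequence[float]],
                  tol: float = 1e-6) -> Dict[str, float]:
    """Return, per feature, the fraction of rows whose value is (near) zero."""
    if not rows:
        raise ValueError("need at least one row")
    counts = [0] * len(FEATURES)
    for row in rows:
        for i, value in enumerate(row[:len(FEATURES)]):
            if abs(value) <= tol:
                counts[i] += 1
    return {name: counts[i] / len(rows) for i, name in enumerate(FEATURES)}


def mostly_zero(rows: Sequence[Sequence[float]],
                threshold: float = 0.95) -> List[str]:
    """List features that are zero in at least `threshold` of the rows."""
    return [name for name, frac in zero_fraction(rows).items()
            if frac >= threshold]


# Made-up sample rows in FEATURES order, for illustration only.
sample_rows = [
    [1.0, -2.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.9, 0.1, 0.0, 0.0],
    [0.5, 3.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.2, 0.8, 0.0, 0.0],
]

flagged = mostly_zero(sample_rows)
```

Features that come back flagged carry little signal for the classifier, which is a hint to gather more varied images or drop those columns.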

Future Goals

- Incorporate an audio element to:

  - ensure students are engaged when the instructor is speaking

  - transcribe the teacher’s speech to highlight particularly engaging and boring parts of the lecture

- Apply this to public speaking

- Apply this to online classes as well

- Use Kinect’s other sensors to better judge engagement

Citations

[1] http://www.latimes.com/local/education/la-me-edu-grad-rate-20160809-snap-story.html

Built With

- microsoft-azure-machine-learning-workspace

- microsoft-azure-storage

- microsoft-bing-image-search-api

- microsoft-cognitive-services-face-api

- microsoft-kinect

- processing

- react.js
