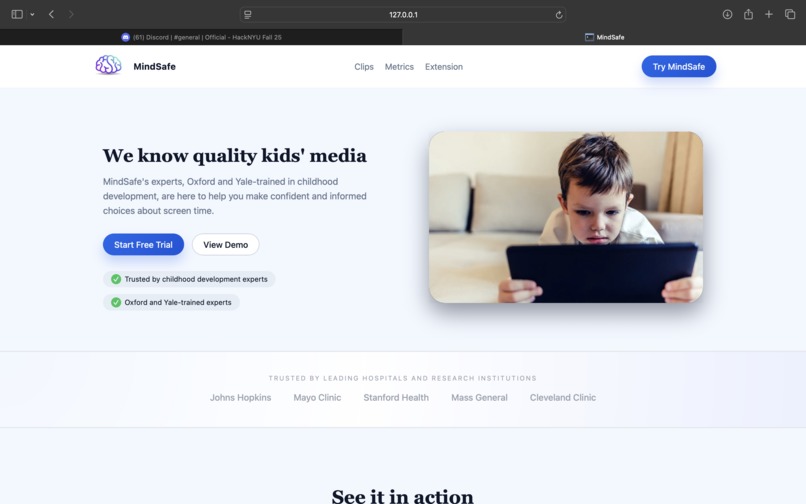

MindSafe: Progress Overview

Inspiration

Every parent we talked to had the same story with different names.

One child is calm after a slow, story-based show but wired and melting down after ten minutes of a hyper-cut YouTube cartoon. Another can quote entire episodes but can’t handle waiting in line or losing a game. Parents aren’t really asking “How many minutes?” anymore. They’re asking:

“What is this doing to my kid’s brain?”

Age ratings (TV-Y, PG) tell you if there’s swearing or gore, not whether the pacing, language, and emotional tone are building self-regulation or quietly eroding it. Algorithms optimize for watch time, not development.

So we asked:

What if kids’ videos had a brain health label, like a nutrition label, but for the developing brain?

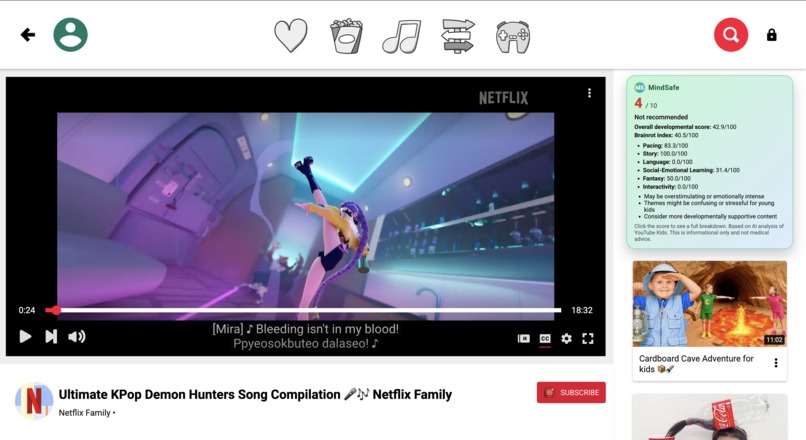

MindSafe is that label.

What MindSafe Does

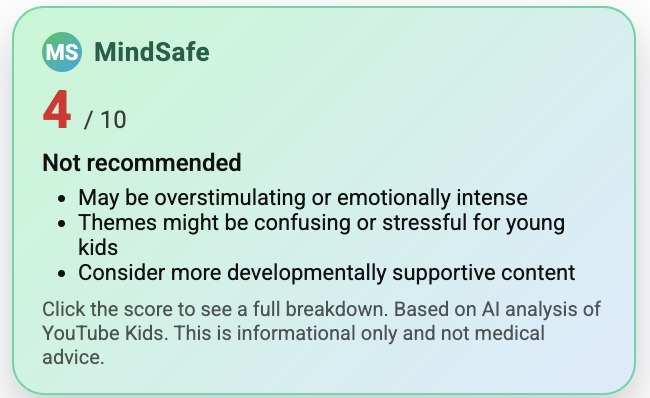

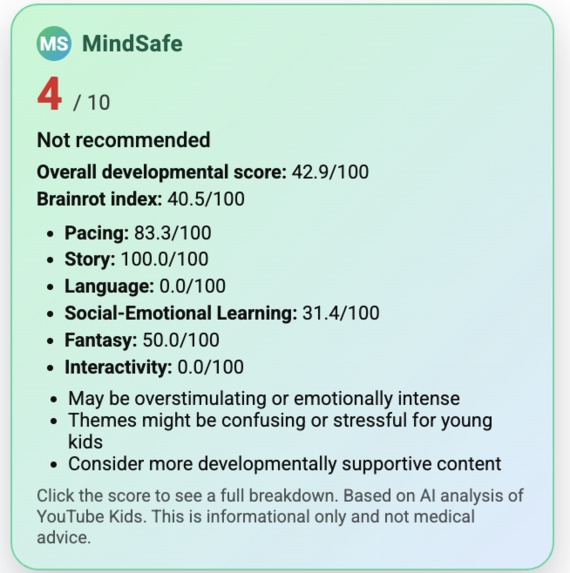

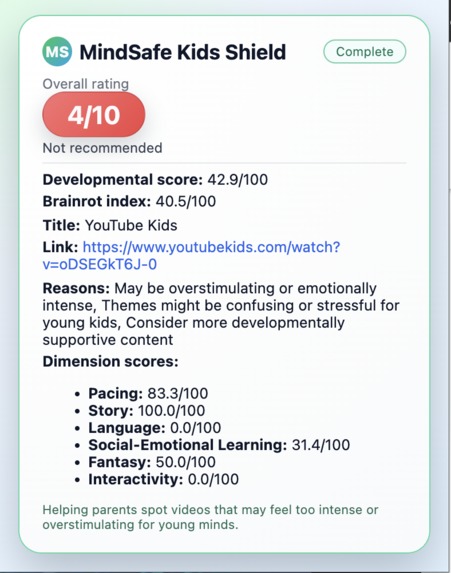

MindSafe is an AI engine that evaluates children’s videos and gives two age-aware scores:

- Development Score (0–100) – how much the video supports language, empathy, self-regulation, and real understanding for this specific age.

- Brainrot Index (0–100) – how overstimulating, chaotic, shallow, or behavior-warping it is for this age.

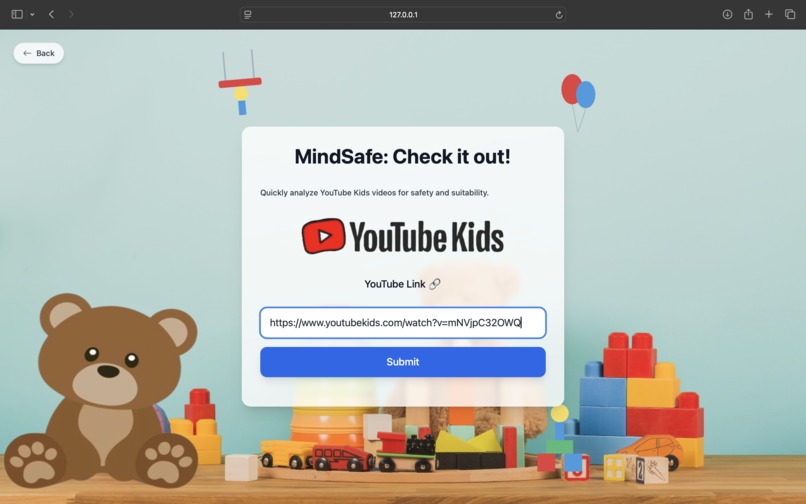

Given a YouTube URL and a child’s age, MindSafe:

- Extracts a time-aligned transcript, audio features, and the visual rhythm of the video.

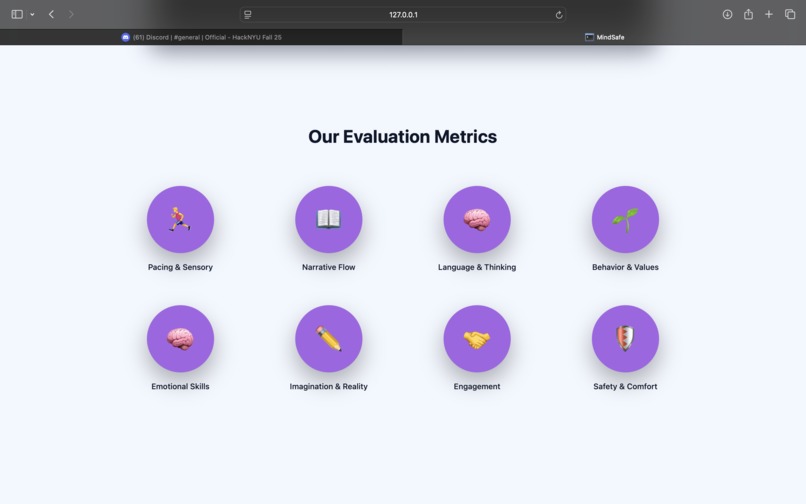

- Computes developmental metrics across:

  - Pacing & Sensory Load – cuts per minute, loudness, sound effects.

  - Narrative Coherence – smooth story flow vs random jumps.

  - Language & Cognition – vocabulary richness, sentence complexity, questions, emotion and mental-state words.

  - Prosocial vs Aggressive Modeling.

  - Social–Emotional Learning (SEL) – explicit coping strategies (“take a deep breath,” “use your words”).

  - Fantasy vs Realism.

  - Interactivity / Direct Address – characters talking with the child.

For each age band (0–2, 2–3, 3–5, 5–8), we define research-informed “healthy ranges” for these metrics and map them into dimension scores (Pacing, Story, Language, SEL, Fantasy, Interactivity). From there we derive:

- A Development Score (weighted sum of helpful dimensions).

- A Brainrot Index (weighted sum of risk: overstimulation, aggression, chaotic fantasy, etc.).
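As a minimal sketch of the age-band step, the lookup below maps a child's age to one of the four bands. The function name `age_band` and the choice to treat each band as half-open (so a child who is exactly 2 falls into 2–3) are assumptions made for illustration; the bands share their boundary ages and the write-up does not say how ties are resolved.

```python
from bisect import bisect_right

# Upper bounds (in years) of the four bands named above. Treating each band as
# half-open, [low, high), is an assumption made for this sketch.
BAND_UPPER_BOUNDS = [2, 3, 5, 8]
BAND_LABELS = ["0-2", "2-3", "3-5", "5-8"]


def age_band(age_years: float) -> str:
    """Return the age-band label for a child's age in years."""
    if age_years < 0 or age_years > 8:
        raise ValueError("age outside the supported 0-8 range")
    index = bisect_right(BAND_UPPER_BOUNDS, age_years)
    # An 8-year-old sits on the top edge, so clamp into the last band.
    return BAND_LABELS[min(index, len(BAND_LABELS) - 1)]
```

The healthy ranges and weights for a video would then be looked up by this label.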

Instead of “It’s 10 minutes and it’s PG,” you get:

“Development 82 / Brainrot 15 – gentle pacing, rich language, strong emotion coaching; recommended for ages 3–5.”

How We Built It (Tech & Scoring)

From YouTube link to structured content

MindSafe is a full pipeline that goes from YouTube link → scores + explanation.

- A data extraction layer downloads the video, separates audio and video, and turns the audio into a time-aligned transcript using Whisper, a speech-recognition model that runs locally.

- An evaluation engine + Flask API takes that transcript and audio, computes metrics, and returns scores and explanations. We cache results so repeated evaluations are instant.

Processing is chunked so we can handle episodes up to 20–30 minutes without huge latency or external ASR costs.
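The caching and chunking ideas above can be sketched with the standard library alone. Everything named here (`cache_key`, `evaluate_cached`, `chunk_spans`, the JSON-file cache, and the 30-second default chunk length) is hypothetical and chosen for illustration; the write-up does not describe the actual cache backend or chunk size.

```python
import hashlib
import json
from pathlib import Path
from typing import Callable


def cache_key(video_url: str, age_years: float) -> str:
    """Stable key for one (video, age) evaluation request."""
    raw = f"{video_url.strip()}|{age_years}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


def evaluate_cached(
    video_url: str,
    age_years: float,
    evaluate: Callable[[str, float], dict],
    cache_dir: Path,
) -> dict:
    """Return a stored result if one exists; otherwise evaluate and store it."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    path = cache_dir / f"{cache_key(video_url, age_years)}.json"
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    result = evaluate(video_url, age_years)
    path.write_text(json.dumps(result), encoding="utf-8")
    return result


def chunk_spans(duration_s: float, chunk_s: float = 30.0) -> list[tuple[float, float]]:
    """Split [0, duration_s] into consecutive (start, end) spans in seconds."""
    if chunk_s <= 0:
        raise ValueError("chunk_s must be positive")
    spans = []
    start = 0.0
    while start < duration_s:
        end = min(start + chunk_s, duration_s)
        spans.append((start, end))
        start = end
    return spans
```

Keying the cache on both the URL and the age matters because the same video can score differently for different ages.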

Semantic understanding with AI

On top of the raw transcript, we add a semantic layer:

In a “rich” mode, we use a large language model (Gemini 2.5 via OpenRouter) as a semantic annotator to:

- Clean the transcript into clearer dialogue,

- Understand what characters are doing and feeling,

- Label segments for prosocial vs aggressive acts, fantasy level, SEL strategies, direct address, and fear/intensity.

For narrative coherence, we use a sentence-embedding model to measure how similar neighboring segments are and how often the story “teleports” to something unrelated.
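A minimal sketch of that coherence measure, assuming each transcript segment has already been turned into an embedding vector by some sentence-embedding model (the model itself is not shown). The function names and the `jump_threshold` value of 0.3 are hypothetical; the write-up does not state the threshold used.

```python
import math
from typing import Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def coherence_stats(
    embeddings: Sequence[Sequence[float]], jump_threshold: float = 0.3
) -> dict:
    """Mean similarity of neighboring segments and the share of abrupt topic jumps."""
    sims = [cosine(a, b) for a, b in zip(embeddings, embeddings[1:])]
    if not sims:
        # Fewer than two segments: nothing to compare, so report no jumps.
        return {"mean_similarity": 1.0, "jump_rate": 0.0}
    jumps = sum(1 for s in sims if s < jump_threshold)
    return {
        "mean_similarity": sum(sims) / len(sims),
        "jump_rate": jumps / len(sims),
    }
```

A high `jump_rate` corresponds to a story that keeps "teleporting" between unrelated segments.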

In the deployed FAST mode, we turn off LLM calls and rely on robust keyword- and pattern-based heuristics for prosocial/SEL/fantasy signals. This keeps the API cheap and predictable while still distinguishing “gentle prosocial” from “chaotic aggressive” content.
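The FAST-mode idea can be illustrated with a small pattern matcher. The cue lists below are hypothetical examples, not MindSafe's actual keyword sets, and returning a neutral 0.5 ratio when no cues are found is likewise an assumption.

```python
import re

# Hypothetical cue lists for illustration only.
SEL_PATTERNS = [r"\btake a deep breath\b", r"\buse your words\b", r"\bcount to (?:three|ten)\b"]
PROSOCIAL_PATTERNS = [r"\bthank you\b", r"\bsorry\b", r"\bcan i help\b"]
AGGRESSIVE_PATTERNS = [r"\bshut up\b", r"\bi hate you\b", r"\bstupid\b"]


def count_matches(text: str, patterns: list[str]) -> int:
    """Total case-insensitive matches of all patterns in the text."""
    return sum(len(re.findall(p, text, flags=re.IGNORECASE)) for p in patterns)


def fast_signals(transcript: str) -> dict:
    """Keyword-based counts of SEL, prosocial, and aggressive cues."""
    prosocial = count_matches(transcript, PROSOCIAL_PATTERNS)
    aggressive = count_matches(transcript, AGGRESSIVE_PATTERNS)
    total = prosocial + aggressive
    return {
        "sel_strategies": count_matches(transcript, SEL_PATTERNS),
        "prosocial": prosocial,
        "aggressive": aggressive,
        # With no cues at all, fall back to a neutral 0.5 (an assumption).
        "prosocial_ratio": prosocial / total if total else 0.5,
    }
```

Pattern matching of this kind misses sarcasm and context, which is the trade-off for predictable cost.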

Low-level metrics: pacing, audio, language

We combine AI with classical signal processing and simple NLP:

Pacing & audio

We estimate cuts per minute, average shot length, mean loudness, dynamic range, constant music, and bursts of sound effects using standard Python tools (e.g., librosa). This captures how “hyper” or calm a show feels.

Language & emotion

Over the transcript, we compute:

- vocabulary diversity and average sentence length,

- how often characters ask questions (especially “why/how”),

- how often they use emotion words (“sad,” “excited”) and mental-state words (“think,” “know”),

- how much vocabulary goes beyond a simple Tier-1 word list.

These are transparent, explainable features that we can show to parents and researchers.
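A few of the pacing, audio, and language features above can be sketched in plain Python. This is an illustration under stated assumptions, not the project's code: the project mentions librosa for audio analysis, whereas this sketch uses only the standard library, takes cut timestamps and audio samples as already-extracted inputs, and uses tiny hypothetical word lists.

```python
import math
import re

# Tiny hypothetical word lists; a real system would use much larger ones.
EMOTION_WORDS = {"sad", "happy", "angry", "scared", "excited"}
MENTAL_STATE_WORDS = {"think", "know", "remember", "wonder", "believe"}


def cuts_per_minute(cut_times_s: list[float], duration_s: float) -> float:
    """Number of detected shot cuts per minute of video."""
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")
    return len(cut_times_s) / (duration_s / 60.0)


def rms_dbfs(samples: list[float]) -> float:
    """RMS level in dB relative to full scale, for samples in [-1.0, 1.0]."""
    if not samples:
        return float("-inf")
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20.0 * math.log10(rms) if rms > 0 else float("-inf")


def language_metrics(transcript: str) -> dict:
    """Simple, explainable language features computed over a transcript."""
    sentences = [s for s in re.split(r"[.!?]+", transcript) if s.strip()]
    words = re.findall(r"[a-z'’]+", transcript.lower())
    if not words or not sentences:
        return {}
    return {
        "type_token_ratio": len(set(words)) / len(words),  # vocabulary diversity
        "avg_sentence_length": len(words) / len(sentences),
        "questions_per_sentence": transcript.count("?") / len(sentences),
        "emotion_word_rate": sum(w in EMOTION_WORDS for w in words) / len(words),
        "mental_state_word_rate": sum(w in MENTAL_STATE_WORDS for w in words) / len(words),
    }
```

Because each feature is a plain ratio or count, it can be shown next to the final score as part of the explanation.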

From metrics to scores

Instead of training a black-box classifier, we implemented a rule-based scoring framework:

- We define age bands and, for each, what “good” looks like for each metric: very calm pacing and almost no fantasy for toddlers; richer language and explicit SEL coaching for preschoolers; more complex narratives for early elementary kids, with limited violence and chaos.

- Every raw metric is turned into a 0–1 “developmental fit” for that age:

  - Some are better low (aggression, extreme pacing),

  - Some are better high (prosocial ratio, SEL strategies),

  - Some are best in the middle (language complexity).

- Metrics are grouped into six dimensions: Pacing, Story, Language, SEL, Fantasy, Interactivity.

- For each age band, we:

  - Combine dimension scores into a Development Score (0–100), emphasizing what matters most for that stage.

  - Invert the risky aspects into a Brainrot Index (0–100), where overstimulation, aggression, and chaotic fantasy count most.

Finally, MindSafe generates a short explanation and recommendation label so the scores are easy to understand and act on.
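The scoring steps in this section can be sketched as three "fit" curves plus a weighted sum. The piecewise-linear shapes, the function names, and every threshold and weight in the usage note are hypothetical, chosen only to show the structure; the write-up does not publish the actual ranges or weights.

```python
def fit_low(value: float, ok: float, bad: float) -> float:
    """Lower is better: 1.0 at or below `ok`, falling linearly to 0.0 at `bad`."""
    if value <= ok:
        return 1.0
    if value >= bad:
        return 0.0
    return (bad - value) / (bad - ok)


def fit_high(value: float, bad: float, ok: float) -> float:
    """Higher is better: 0.0 at or below `bad`, rising linearly to 1.0 at `ok`."""
    if value >= ok:
        return 1.0
    if value <= bad:
        return 0.0
    return (value - bad) / (ok - bad)


def fit_mid(value: float, low: float, high: float, tolerance: float) -> float:
    """Best in the middle: 1.0 inside [low, high], fading to 0.0 `tolerance` outside."""
    if low <= value <= high:
        return 1.0
    distance = low - value if value < low else value - high
    return max(0.0, 1.0 - distance / tolerance)


def weighted_score(fits: dict, weights: dict) -> float:
    """Weighted average of 0-1 fits, scaled to 0-100."""
    total = sum(weights.values())
    return 100.0 * sum(weights[k] * fits[k] for k in weights) / total


def brainrot_index(fits: dict, risk_weights: dict) -> float:
    """Invert the fits of the risky dimensions (risk = 1 - fit) and weight them."""
    risks = {k: 1.0 - fits[k] for k in risk_weights}
    return weighted_score(risks, risk_weights)
```

For example, with made-up values for one age band, `fits = {"pacing": fit_low(12, ok=10, bad=30), "sel": fit_high(3, bad=0, ok=4), "language": fit_mid(7, low=5, high=9, tolerance=4)}` could be passed to `weighted_score(fits, {"pacing": 1, "sel": 1, "language": 2})` for a Development Score and to `brainrot_index(fits, {"pacing": 1})` for a Brainrot Index. Per-age behavior comes from swapping in a different set of thresholds and weights for each band.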

What We Learned

“Screen time” is the wrong unit.

Two 10-minute videos with the same age rating can have completely different effects. Parents already feel this; MindSafe gives language and structure to that intuition.

Research is easy to read, hard to encode.

Turning ideas like “fast-paced fantastical cartoons” or “prosocial educational TV” into measurable, age-specific metrics forced us to think like both engineers and developmental scientists.

LLMs are powerful evaluators.

Using a large language model as a semantic labeler for prosocial behavior, SEL strategies, fantasy, and narrative coherence felt like working with an always-on expert annotator, not just a chatbot.

What We’re Proud Of

- A principled, interpretable score, not a vibe-based rating.

- A multi-modal understanding (video rhythm, audio intensity, language, social-emotional cues) distilled into two numbers and a clear explanation.

- Reframing the conversation from “less screen time” to “better screen time”:

If my child is going to watch something, can I at least make sure it’s good for their brain?

What’s Next for MindSafe

- Validate our scores with real families and behavioral outcomes (mood, bedtime, tantrums).

- Collaborate with child-development labs to test MindSafe against standardized language, executive function, and SEL measures.

- Move from age bands to personalized child profiles.

- Ship MindSafe as an API and dashboard so streaming platforms and parental apps can use Development Score and Brainrot Index as first-class signals in kids’ feeds.

Bibliography

A. Executive Function, Attention, Overstimulation

Lillard, A. S., & Peterson, J. (2011). The immediate impact of different types of television on young children's executive function. Pediatrics, 128(4), 644–649.

Lillard, A. S., Li, H., & Boguszewski, K. (2015). Television and children's executive function. In Advances in Child Development and Behavior (Vol. 48, pp. 219–248).

Christakis, D. A., Zimmerman, F. J., DiGiuseppe, D. L., & McCarty, C. A. (2004). Early television exposure and subsequent attentional problems in children. Pediatrics, 113(4), 708–713.

Yang, X., et al. (2017). The relations between television exposure and executive function in preschool children. Frontiers in Psychology, 8, 1833.

B. Infant / Toddler Language & “Baby Videos”

Richert, R. A., Robb, M. B., Fender, J. G., & Wartella, E. (2010). Word learning from baby videos. Archives of Pediatrics & Adolescent Medicine, 164(5), 432–437.

DeLoache, J. S., Chiong, C., et al. (2010). Do babies learn from baby media? Psychological Science, 21(11), 1570–1574.

Zimmerman, F. J., Christakis, D. A., & Meltzoff, A. N. (2007). Associations between media viewing and language development in children under age 2 years. Journal of Pediatrics, 151(4), 364–368. (Often cited in later reanalyses of “Baby Einstein” data.)

Madigan, S., et al. (2020). Associations between screen use and child language skills: A systematic review and meta-analysis. JAMA Pediatrics, 174(7), 665–675.

C. Educational TV (Sesame Street, Blue’s Clues, etc.)

Kearney, M. S., & Levine, P. B. (2015). Early Childhood Education by MOOC: Lessons from Sesame Street. NBER Working Paper 21229; later published in American Economic Journal: Applied Economics. (Finds that Sesame Street improved school readiness.)

Crawley, A. M., Anderson, D. R., et al. (1999). Effects of repeated exposures to a single episode of the television program Blue's Clues on the viewing behaviors and comprehension of preschool children. Journal of Educational Psychology, 91(4), 630–637.

Richert, R. A., Robb, M. B., & Smith, E. I. (2011). Media as social partners: The social nature of young children’s learning from screen media. Child Development, 82(1), 82–95.

D. Social–Emotional Learning (Daniel Tiger, background TV)

Rasmussen, E. E., et al. (2016). Relation between active mediation, exposure to Daniel Tiger’s Neighborhood, and U.S. preschoolers' social and emotional development. Journal of Children and Media, 10(4), 443–461.

Kirkorian, H. L., Pempek, T. A., Murphy, L. A., Schmidt, M. E., & Anderson, D. R. (2009). The impact of background television on parent–child interaction. Child Development, 80(5), 1350–1359.

E. Big-picture / Guidelines & Reviews

Council on Communications and Media, American Academy of Pediatrics. (2016). Media and Young Minds. Pediatrics, 138(5), e20162591. (Policy statement on media for 0–5-year-olds.)

Bavelier, D., Green, C. S., & Dye, M. W. G. (2010). Children, Wired: For Better and for Worse. Neuron, 67(5), 692–701. (Broad review of how digital media affects attention and cognition.)

Discord handles:

- Kush Dudhia – kush162003

- Sanchu – sanchu_27798

- The MC – matei03847

- Tyler – imbeantyler

Built With

- css

- flask

- html

- javascript

- openrouter

- python
