Inspiration

The team was inspired by a story Mikul’s dad told about the recent flood in Chennai. His dad’s cousin was affected by the heavy rainfall and flooding, which also killed 20 people and damaged property.

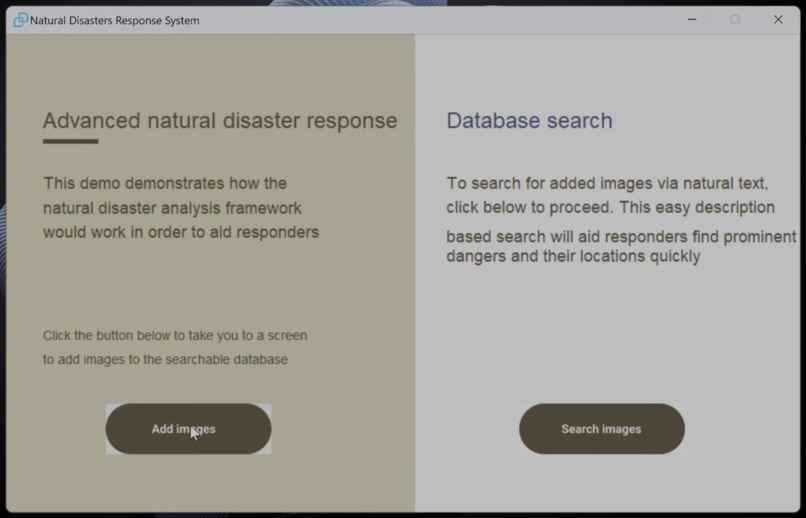

What it does

The app lets first responders, government officials, and relief teams upload images of natural disaster scenes to a vector database. Each upload is automatically tagged with the coordinates and timestamp of the image capture, taken from the current location and time. Users can then submit a natural language query and receive information about the image that best matches it, such as the capture coordinates and a description of the image, to help accelerate disaster response. The goal is to run this on drones so that responders know where to look for people instead of losing time searching aimlessly.
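To make the upload step concrete, here is a minimal sketch of what one stored record might look like. It is not the project's actual code: `CaptureRecord`, `make_record`, and the `get_location` callback are hypothetical names, and the coordinates in the usage line are placeholder values for central Chennai.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Tuple


@dataclass
class CaptureRecord:
    """One uploaded image plus its auto-populated metadata (hypothetical schema)."""
    image_path: str
    latitude: float
    longitude: float
    # Timestamp is filled in automatically at capture time, in UTC.
    captured_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )
    # Text description, to be filled in later by the image analysis step.
    description: str = ""


def make_record(
    image_path: str,
    get_location: Callable[[], Tuple[float, float]],
) -> CaptureRecord:
    """Build a record, reading coordinates from a location provider.

    `get_location` is an assumed stand-in for whatever GPS source the
    device exposes; it returns (latitude, longitude).
    """
    latitude, longitude = get_location()
    return CaptureRecord(image_path, latitude, longitude)


# Usage with a fake location provider (placeholder coordinates).
record = make_record("flood_scene.jpg", lambda: (13.0827, 80.2707))
```

Passing the location source in as a callback keeps the sketch testable without real GPS hardware.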

How we built it

The GUI was designed in Figma and then coded in Python. The core of the project is image analysis, so we selected GPT4-V for its ability to analyze images and generate text. The generated text is what we store in the database. Each image also comes with its live GPS location to make the results more useful. For the vector database, we used the mlx-rag framework to build a model of the stored image information. We then use LLMs to query the database and retrieve information about the images.
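The retrieval idea can be sketched without any external services. The example below is only an illustration under stated assumptions: it swaps in a toy bag-of-words vector and cosine similarity in place of the learned embeddings a framework like mlx-rag would provide, and the function names, descriptions, and coordinates are all made up for the example.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real system would use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def best_match(query, records):
    """Return the record whose description is most similar to the query."""
    query_vec = embed(query)
    return max(records, key=lambda r: cosine(query_vec, embed(r["description"])))


# Hypothetical records: descriptions as an image model might write them,
# paired with the coordinates captured at upload time.
RECORDS = [
    {"description": "Two people standing on the roof of a flooded house",
     "lat": 13.05, "lon": 80.25},
    {"description": "Fallen tree blocking a road with no people visible",
     "lat": 13.10, "lon": 80.29},
]

match = best_match("people stranded on a roof", RECORDS)
print(match["lat"], match["lon"], match["description"])
```

Word overlap is a crude proxy for meaning; learned embeddings would also match queries that use different wording from the stored description.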

Challenges we ran into

We ran into issues using the GPT4-V API, and we spent some time on prompt engineering to get the best results. We also had trouble getting the mlx-rag vector database working on our machines and creating a custom database, so we worked around this by using a PDF document as our database.

Accomplishments that we're proud of

We are most proud of how we leverage the strengths of AI to address a pressing issue. It can be difficult for humans to spot people or identify specific objects during a natural disaster. Our app takes advantage of AI's ability to thoroughly analyze images and pick out information that humans might miss. We were also able to use different AI models for different purposes.

What we learned

We learned how to make a GUI in Figma and export it to Python. We also learned how to use vector databases to retrieve information from files, and how AI can make that retrieval feel more natural.

What's next for MSSLAL

We want to add an image classification system ahead of the image description model to filter out unneeded images. We also hope to implement a custom database that stores information about the images in a more organized way than a PDF text file does.
