Title

ChatGPT Could Not Come Up With A Good Project Title

Who

Anand Advani (aaadvani), Mary Regan (mregan6)

Introduction

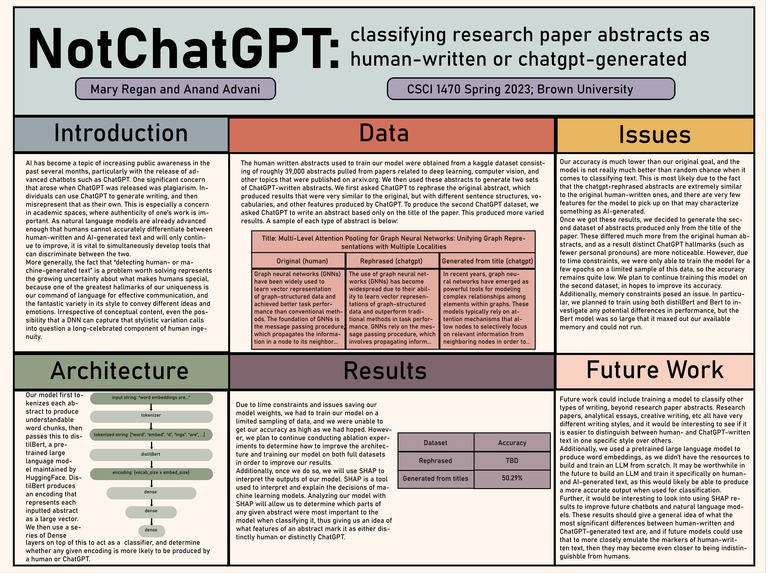

We are attempting to build a supervised classifier to distinguish between text authored by humans and text authored by ChatGPT, based on the preprint “ChatGPT or Human? Detect and Explain”. The paper consists of three experiments: 1) fine-tuning a modification of BERT (a large pre-trained transformer model) for the classification task, 2) qualitatively interpreting the kinds of words that model focuses on, and thus what the main differences between human and ChatGPT writing are, and 3) evaluating a simple classifier that assumes GPT-2 will produce output closer to ChatGPT’s than to human writing. The paper uses data from restaurant reviews, and asks ChatGPT to write its own restaurant reviews, but we believe that academic writing is more relevant to issues of plagiarism and poses a more difficult challenge. For example, many restaurant reviews contain uncapitalized sentences, nonstandard punctuation, and an abundance of emojis and misspellings, which are proxy variables that a model might home in on instead of capturing the more fundamental differences in vocabulary and style between human and ChatGPT writing. We will focus on the first experiment, and build our own transformer network instead of fine-tuning a pre-trained large language model.

Related Work

The paper “Comparing Abstractive Summaries Generated by ChatGPT to Real Summaries Through Blinded Reviewers and Text Classification Algorithms” investigated the ability of a model based on DistilBERT from HuggingFace to differentiate between summaries of news articles generated by ChatGPT and summaries written by humans. The authors found that the model could classify summaries with 90% accuracy, compared to human reviewers’ accuracy of 49%. This further demonstrates the ability of large language models to distinguish between ChatGPT-generated and human-generated text in the context of news articles, which represent a distinct, formal style of writing.

The paper “ChatGPT or Human? Detect and Explain” doesn’t have a public implementation, but there are several public tools that classify text as human-written or ChatGPT-written: DetectGPT, GPTZero.

There are also pre-trained large language models that are not built to classify ChatGPT and human text, but could be fine-tuned to do so: DistilBERT

Additionally, SHAP is publicly implemented. Rather than reimplementing, we would plan to utilize this implementation to evaluate our model after it is built and trained, and qualitatively determine what may explain the decisions our model made for various sample inputs.

Data

For human-generated abstracts, we will use this Kaggle dataset, which contains roughly 38,000 abstracts pulled from arxiv.org. The abstracts are from the most recently uploaded papers that match a selected list of query terms related to deep learning, computer vision, and other technical topics (see here for the complete data collection method).

For ChatGPT-generated abstracts, we plan to prompt ChatGPT to rephrase each of the human-written abstracts in our dataset. If we assume each prompt and each response is roughly 300 tokens (about 600 tokens per abstract), and ChatGPT costs $0.002 per 1K tokens, then doing this for 38,000 abstracts will cost roughly $45.
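As a sanity check on this estimate, the arithmetic can be sketched as follows. This reads the assumption above as roughly 300 tokens for the prompt plus roughly 300 for the response; the token counts are our guesses, not measured values, and `estimate_cost` is a helper name introduced here for illustration.

```python
# Back-of-the-envelope API cost using the figures assumed above.
NUM_ABSTRACTS = 38_000
TOKENS_PER_PROMPT = 300      # assumed average prompt length (a guess)
TOKENS_PER_RESPONSE = 300    # assumed average response length (a guess)
PRICE_PER_1K_TOKENS = 0.002  # USD per 1K tokens, the rate quoted above


def estimate_cost(num_abstracts, prompt_tokens, response_tokens, price_per_1k):
    """Return the estimated total cost in USD for rephrasing every abstract."""
    total_tokens = num_abstracts * (prompt_tokens + response_tokens)
    return total_tokens / 1000 * price_per_1k


cost = estimate_cost(
    NUM_ABSTRACTS, TOKENS_PER_PROMPT, TOKENS_PER_RESPONSE, PRICE_PER_1K_TOKENS
)
print(f"Estimated cost: ${cost:.2f}")
```

Under these assumptions the total is about 22.8 million tokens, which is where the figure of roughly $45 comes from.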

Both datasets will be fairly large, consisting of about 38,000 abstracts at roughly 200-300 words each. Preprocessing will involve tokenizing the inputs, filtering out abstracts that are too long, and labeling each abstract as human-written or ChatGPT-written.
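A minimal sketch of these three preprocessing steps is below. The whitespace tokenizer is a stand-in for whatever tokenizer we end up using, and the `MAX_TOKENS` cutoff, the label values, and the function names are all hypothetical choices made for illustration.

```python
HUMAN, CHATGPT = 0, 1  # hypothetical label encoding
MAX_TOKENS = 300       # hypothetical length cutoff; to be tuned on the real data


def tokenize(text):
    """Placeholder tokenizer: lowercase and split on whitespace."""
    return text.lower().split()


def build_examples(human_abstracts, chatgpt_abstracts, max_tokens=MAX_TOKENS):
    """Tokenize, drop over-long abstracts, and attach a class label to each."""
    examples = []
    for label, abstracts in ((HUMAN, human_abstracts), (CHATGPT, chatgpt_abstracts)):
        for text in abstracts:
            tokens = tokenize(text)
            if len(tokens) <= max_tokens:  # filter out abstracts that are too long
                examples.append((tokens, label))
    return examples


examples = build_examples(
    ["We propose a new model for image segmentation."],
    ["This paper presents a novel model.", "word " * 500],  # second one is too long
)
print(len(examples))
```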

Methodology

We will be using a transformer network of exactly the kind described in class, except that instead of the last layer being a softmax over the vocabulary to predict the next word, it will be a softmax with two outputs representing the probability of each class (human- or machine-generated). We will train and test the model using the labeled dataset of human-written and ChatGPT-rephrased paper abstracts. The most difficult part of this project may be constructing the model architecture, because the paper’s authors have not published their code and used PyTorch rather than TensorFlow, or it may be training the model efficiently, because ~76,000 abstracts (38,000 from each source) is a lot of data.
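To illustrate the change to the final layer, here is a minimal pure-Python sketch of a two-output softmax head. It assumes the transformer has already produced one vector per token; mean-pooling those vectors before the linear layer is our assumption (other pooling choices are possible), and the weights shown are made-up numbers. The actual model would be written with TensorFlow layers rather than Python lists.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(encoder_outputs, weights, bias):
    """Map per-token encoder vectors to [P(human), P(ChatGPT)].

    encoder_outputs: list of d-dimensional token vectors (hypothetical input)
    weights: 2 x d matrix, bias: length-2 vector (the new classification head)
    """
    d = len(encoder_outputs[0])
    n = len(encoder_outputs)
    # Assumption: average the token vectors into one sequence vector.
    pooled = [sum(tok[i] for tok in encoder_outputs) / n for i in range(d)]
    logits = [sum(w * x for w, x in zip(row, pooled)) + b
              for row, b in zip(weights, bias)]
    return softmax(logits)


# Toy example with two tokens and d = 2; all values are invented.
probs = classify([[1.0, 0.0], [3.0, 2.0]], [[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0])
print(probs)
```

The only difference from a next-word model is the output size: the head produces two probabilities that sum to one instead of a distribution over the whole vocabulary.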

If this is not enough data to extract meaningful patterns about the differences in style, there are a couple of alternative architecture options. Instead of predicting one of two classes, we could train two transformer models to predict text as usual, one on ChatGPT text and one on human text, which would give each model much more information during training. This may seem equivalent to attempting to rewrite GPT, but we do not need the models to be large enough to capture the semantic structure of English, just large enough to capture the syntactic and stylistic differences between ChatGPT and human text. To test this architecture, we would pass a sample of text through both networks and classify the text according to whichever network produced the lower perplexity score.
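The decision rule for this two-model alternative can be sketched as follows. It assumes each language model can report a natural-log probability for every token of the input, and it uses the standard definition of perplexity as the exponential of the average negative log-probability. The log-probabilities in the example are invented, not model output.

```python
import math


def perplexity(token_log_probs):
    """Perplexity = exp of the mean negative log-probability per token."""
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


def classify_by_perplexity(human_lm_log_probs, chatgpt_lm_log_probs):
    """Assign the text to whichever model finds it less surprising."""
    ppl_human = perplexity(human_lm_log_probs)
    ppl_chatgpt = perplexity(chatgpt_lm_log_probs)
    return "human" if ppl_human < ppl_chatgpt else "chatgpt"


# Invented per-token log-probabilities for one two-token sample.
human_lp = [math.log(0.5), math.log(0.25)]   # the human-text model finds it likely
chatgpt_lp = [math.log(0.1), math.log(0.1)]  # the ChatGPT-text model does not
print(classify_by_perplexity(human_lp, chatgpt_lp))
```

Ties go to the ChatGPT class here, which is an arbitrary choice.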

The paper presents another alternative classifier: We could try a normal transformer model trained on either just the human-generated text or just the machine-generated text, and train a single parameter representing a threshold perplexity score. For a given input text sample, if the model’s perplexity score were above the threshold, the text sample would then probably not be of the class the model was trained on, and if the perplexity were below the threshold, the text sample would probably be of the same class the model was trained on.
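One simple way to fit that single threshold is sketched below. Rather than training it by gradient descent, this sketch tries every observed perplexity as a candidate and keeps the one with the best training accuracy; that search is our substitution, not necessarily what the paper does. Treating a perplexity exactly equal to the threshold as in-class is also an assumption, and the numbers are invented.

```python
def predict_in_class(ppl, threshold):
    """True if the sample looks like the class the language model was trained on."""
    return ppl <= threshold


def fit_threshold(perplexities, in_class):
    """Pick the candidate threshold with the highest training accuracy.

    perplexities: perplexity of each training sample under the language model
    in_class: True where the sample belongs to the model's training class
    """
    best_threshold, best_correct = None, -1
    for candidate in sorted(set(perplexities)):
        correct = sum(
            predict_in_class(p, candidate) == y
            for p, y in zip(perplexities, in_class)
        )
        if correct > best_correct:
            best_threshold, best_correct = candidate, correct
    return best_threshold


# Invented training perplexities: low for in-class text, high otherwise.
threshold = fit_threshold([12.0, 15.0, 40.0, 55.0], [True, True, False, False])
print(threshold, predict_in_class(20.0, threshold))
```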

In addition, we will try to implement the same GPT-2 perplexity classifier as in the paper. It works like the previous idea, but uses GPT-2 instead of our own model, on the assumption that GPT-2 will predict subsequent words that are more similar to ChatGPT output than to human writing.

Metrics

Our first experiment is to see if our transformer-based model can accurately distinguish between human-written abstracts and the rephrased abstracts from ChatGPT. Once this model is trained, we will utilize SHAP to attempt to explain the model’s decisions. We’ll look at which words were most influential in classifying abstracts, and attempt to qualitatively determine what the model seems to rely on to produce its classifications. Finally, if we have extra time, we will experiment with using a perplexity-based model that utilizes GPT-2 to determine if abstracts can be accurately classified based on perplexity alone (similar to that implemented in “ChatGPT or Human? Detect and Explain”).

Since our project is a classifier, accuracy will be based on whether the model classifies inputs correctly or not. Our base accuracy goal is better than chance, i.e. above 50% on our balanced dataset. Our target goal is 60%, and our stretch goal is to beat the perplexity-based model implemented in “ChatGPT or Human? Detect and Explain”, which reached 69%. Notably, it may be more difficult to achieve these benchmarks with the data we are using, since academic text tends to be much closer in style (and grammar, punctuation, capitalization, etc.) to ChatGPT output than restaurant reviews are.
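A minimal sketch of how we would score a run against these goals is below. The three thresholds are the ones stated above; treating each goal as met only when accuracy strictly exceeds it is our assumption, and the predictions and labels are invented.

```python
# Accuracy thresholds from the goals above.
GOALS = {"base": 0.50, "target": 0.60, "stretch": 0.69}


def accuracy(predictions, labels):
    """Fraction of samples whose predicted class matches the true class."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def goals_met(acc):
    """Names of the goals that this accuracy strictly exceeds (an assumption)."""
    return [name for name, bar in GOALS.items() if acc > bar]


# Invented predictions and labels (0 = human, 1 = ChatGPT).
acc = accuracy([1, 0, 1, 1], [1, 0, 0, 1])
print(acc, goals_met(acc))
```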

Ethics

What broader societal issues are relevant to your chosen problem space?

One of the biggest issues relevant to this problem space is plagiarism. This is one of the most significant concerns with ChatGPT, as individuals can use it to generate writing that they then misrepresent as their own. This is especially a concern in academic spaces, where authenticity of one’s work is important. As chatbots and language generative models continue to advance, it will be more and more difficult to distinguish between AI-generated text and human-generated text. This makes it more important to have models that are able to differentiate between the two, as humans are already generally unable to do so. This is one reason deep learning is a good approach to this problem — as deep learning models advance in their ability to replicate human text, we can simultaneously advance models’ ability to distinguish between human and AI-generated text.

More generally, the fact that “detecting human- or machine-generated text” is a problem worth solving represents the growing uncertainty about what makes humans special, because one of the greatest hallmarks of our uniqueness is our command of language for effective communication, and the fantastic variety in its style to convey different ideas and emotions. Irrespective of conceptual content, even the possibility that a DNN can capture that stylistic variation calls into question a long-celebrated component of human ingenuity.

Why is Deep Learning a good approach to this problem?

Language is composed of very deep and complex patterns; even syntactic differences are difficult or impossible to capture with traditional deterministic representations or simpler models.

Division of labor

Since our group is only two people, it will be easier to work together more closely on each part of the project. As such, we have listed the components of the project below instead of assigning them right now (who works on each part may change in the future):

- Preprocessing (including getting ChatGPT output)

- Main model architecture construction

- Main model training and tuning (alternative models will be attempted if the main transformer classifier fails)

- GPT-2 perplexity classifier

- Check-in #3 Writeup

- Final Writeup

Built With

- python

- tensorflow
