Inspiration

The inspiration for Notescope came from the challenges students face in taking notes efficiently during classes and lectures. In fast-paced environments, it's often difficult to capture everything while staying engaged in the discussion. We wanted to create a tool that makes the note-taking process seamless, reduces manual effort, and enhances learning. The integration of speech-to-text technology along with AI-powered summarization and question-answering features makes this possible. Our aim was to provide students with a smart assistant that not only transcribes lectures but also offers valuable insights and context-based assistance, making studying and revision more effective.

What it does

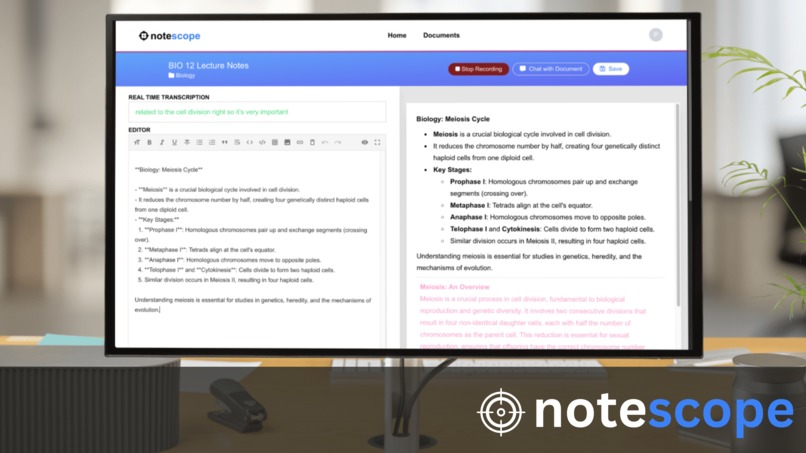

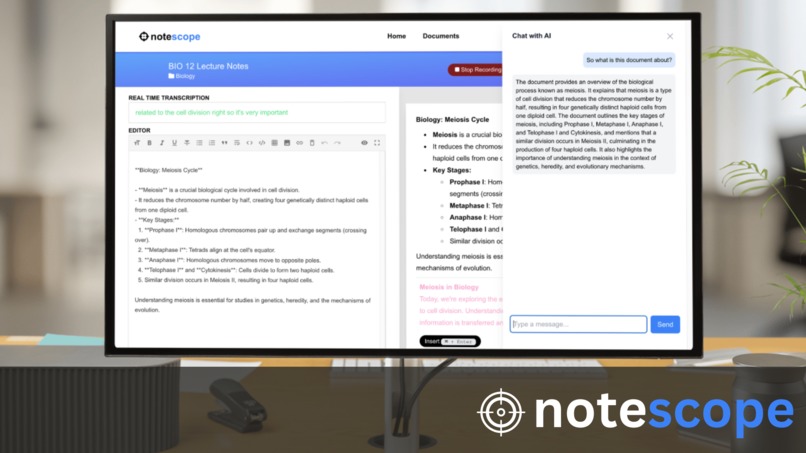

Notescope is an AI-powered text editor that uses your microphone to capture lecture content, converting it from speech to text in real time. Beyond simple transcription, it includes an integrated chatbot that summarizes the lecture notes and answers questions based on the captured content. Students can quickly review key points, ask follow-up questions about the notes, and better understand the material, both in class and afterward.

How we built it

We built Notescope with the following technologies:

OpenAI API: We leveraged OpenAI’s powerful natural language models for summarization and the Q&A chatbot feature, providing students with concise summaries and contextual answers from their notes.

Next.js: We used Next.js to build server-side rendered pages and to manage the API routes that the app's backend exposes.

Node.js: We used Node.js for the server-side logic, handling requests and integrating with the speech-to-text service and OpenAI API.

Tailwind CSS: Tailwind CSS was utilized for rapid UI design and customization, ensuring that the application looks clean and professional with minimal effort.

Firebase: Firebase provided us with real-time data storage and synchronization, allowing users to seamlessly save, retrieve, and access their notes across multiple devices.

MongoDB: MongoDB was used for storing user information, transcripts, and summaries, giving us flexibility and scalability for managing data.

This combination of technologies allows Notescope to efficiently handle real-time speech transcription, AI-powered summarization, and question answering, while providing a smooth and responsive user experience.
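As an illustration of how the summarization and Q&A features can share one code path, here is a minimal sketch of assembling a request from the captured notes. The `ChatMessage` shape mirrors the role/content message format used by OpenAI's Chat Completions API. The function name `buildNoteMessages`, the prompt wording, and the character cap are assumptions made for illustration, not Notescope's actual code.

```typescript
// Hypothetical helper: names, prompt text, and limits are illustrative assumptions.
type ChatMessage = { role: "system" | "user"; content: string };

// Assumed cap so a long lecture transcript does not produce an oversized request.
const MAX_NOTE_CHARS = 12000;

// Build the messages for a summary (no question) or a Q&A request (with question).
function buildNoteMessages(notes: string, question?: string): ChatMessage[] {
  const trimmed = notes.trim();
  // If the notes exceed the cap, keep the most recent part of the transcript.
  const context =
    trimmed.length > MAX_NOTE_CHARS ? trimmed.slice(-MAX_NOTE_CHARS) : trimmed;

  const system: ChatMessage = {
    role: "system",
    content:
      "You are a study assistant. Use only the lecture notes provided. " +
      "If the notes do not contain the answer, say so.",
  };

  const q = (question ?? "").trim();
  const task =
    q.length > 0
      ? `Answer this question using the notes: ${q}`
      : "Summarize the key points of these notes as a short bulleted list.";

  const user: ChatMessage = {
    role: "user",
    content: `${task}\n\nLecture notes:\n${context}`,
  };

  return [system, user];
}
```

The returned array could be sent as the `messages` field of a chat completion request from a Next.js API route, which keeps the API key on the server rather than in the browser.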

Challenges we ran into

Some of the challenges we faced while building Notescope include:

Accurate Speech-to-Text Conversion: Ensuring high accuracy in converting speech to text was challenging, especially in noisy environments or with different accents.

Efficient Summarization: Building an AI model that could generate meaningful summaries of lecture notes without losing key information required multiple iterations and fine-tuning.

Contextual Understanding: Developing a chatbot that could understand context from the notes and answer questions accordingly was complex, requiring advanced NLP techniques.

Real-time Performance: Integrating real-time transcription, summarization, and response generation while keeping the app responsive was a technical hurdle.
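One common way to approach the real-time performance challenge above is to avoid calling the summarizer on every transcribed phrase and instead batch finalized transcript segments. The sketch below is an assumption about how that could look, not a description of Notescope's implementation; the class name `TranscriptBatcher` and the word threshold are hypothetical.

```typescript
// Hypothetical sketch: batches finalized transcript segments so that an
// expensive step (such as summarization) runs once per batch, not per phrase.
class TranscriptBatcher {
  private pending: string[] = [];
  private pendingWords = 0;
  private readonly minWords: number;
  private readonly onBatch: (text: string) => void;

  constructor(minWords: number, onBatch: (text: string) => void) {
    this.minWords = minWords;
    this.onBatch = onBatch;
  }

  // Add one finalized segment; emit a batch once enough words have accumulated.
  push(segment: string): void {
    const text = segment.trim();
    if (text.length === 0) return; // ignore empty or whitespace-only segments
    this.pending.push(text);
    this.pendingWords += text.split(/\s+/).length;
    if (this.pendingWords >= this.minWords) this.flush();
  }

  // Emit whatever is buffered, for example when the recording stops.
  flush(): void {
    if (this.pending.length === 0) return;
    const batch = this.pending.join(" ");
    this.pending = [];
    this.pendingWords = 0;
    this.onBatch(batch);
  }
}
```

A larger threshold means fewer summarization requests at the cost of summaries that lag further behind the live transcript; calling `flush()` when the recording stops ensures the final partial batch is not lost.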

Accomplishments that we're proud of

Seamless Real-time Transcription: Achieving accurate and efficient transcription of speech in real time was a major achievement, especially when combined with the summarization and Q&A features.

AI-powered Summarization: The ability of our AI models to break down lengthy lecture notes into concise summaries provides significant value to students.

User-friendly Interface: Creating a smooth and intuitive user interface where students can easily interact with the app during and after lectures was a key accomplishment.

What we learned

Throughout the development process, we learned:

NLP Techniques: We deepened our understanding of natural language processing and how to train models for summarization and question-answering tasks.

Real-time System Design: Building applications that handle real-time data, such as speech input and live transcription, requires careful consideration of system architecture and performance.

User-Centric Design: We learned the importance of building features that directly address the needs of the target users—students in this case—and ensuring the tool fits seamlessly into their learning experience.

What's next for Notescope

In the future, we aim to expand Notescope with additional features such as:

Multi-language Support: Enabling transcription and summarization in multiple languages to make it accessible to a global audience.

Advanced AI Capabilities: Integrating deeper AI functionalities, such as personalized study recommendations based on notes and automatic generation of quizzes or flashcards.

Mobile App: Developing a mobile version of Notescope to provide flexibility and accessibility for students who prefer taking notes on their smartphones or tablets.

Offline Mode: Adding offline support so users can continue taking notes and receive AI-powered assistance without an internet connection.
