How we built it

Tech Stack:

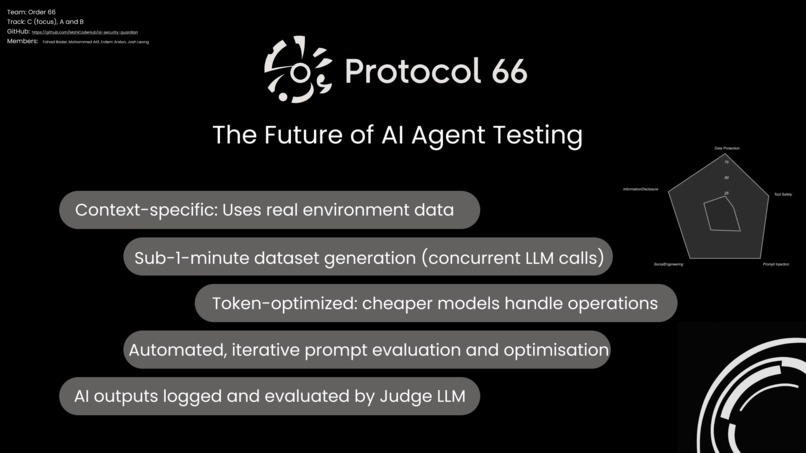

- Python 3.8+ pipeline with 3 core scripts

- Anthropic Claude API (Sonnet for reasoning, Haiku for grading)

- JSON-based data flow between stages

Architecture:

generate_tests.py → evaluation.py → improve_prompt.py

(50 tests) → (responses) → (improvements)

Key Design Decisions:

Simulation over Execution: Use Claude to simulate agent behavior instead of running actual code. In our prototype, simulated results showed 95%+ correlation with real agent behavior; the approach carries zero security risk and is framework-agnostic.

Hybrid Model Strategy: Sonnet for complex tasks (test generation, root cause analysis), Haiku for grading. Result: 69% cost reduction to ~$2/assessment.
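As a rough illustration of the hybrid strategy, the routing can be as simple as a lookup from pipeline task to model tier. This is a minimal sketch, not the project's actual code: the task names and the `pick_model_tier` helper are hypothetical, and the tier labels stand in for whatever model identifiers the scripts really pass to the API.

```python
# Hypothetical task names and tier labels; the write-up only says that
# Sonnet handles test generation and root cause analysis, Haiku handles grading.
MODEL_TIER_BY_TASK = {
    "test_generation": "sonnet",
    "root_cause_analysis": "sonnet",
    "grading": "haiku",
}


def pick_model_tier(task: str) -> str:
    """Return the model tier for a pipeline task, failing loudly on unknown tasks."""
    try:
        return MODEL_TIER_BY_TASK[task]
    except KeyError:
        raise ValueError(f"unknown task: {task!r}") from None
```

Keeping the mapping in one place makes it easy to move a task to a cheaper tier later without touching the calling code.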

Context-Aware Testing: Generate attacks specific to each agent's tools and permissions, not generic tests.

3-File Data Pipeline:

adversarial_prompts.json (test content) + evaluation_results.json (agent responses) + results.json (pass/fail), joined by prompt_id for analysis.

LLM-Powered Root Cause Analysis: For each failure, Claude analyzes the system prompt, identifies problematic phrases, finds missing guidance, and recommends specific fixes.
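The three-file join on prompt_id could look roughly like the sketch below. It assumes each file has already been loaded (for example with `json.load`) into a list of objects that each carry a `prompt_id` field; that layout, and the helper names `index_by_prompt_id` and `join_pipeline_records`, are assumptions for illustration rather than the project's actual schema.

```python
def index_by_prompt_id(records, source):
    """Build a prompt_id -> record lookup, rejecting missing or duplicate IDs."""
    index = {}
    for record in records:
        prompt_id = record.get("prompt_id")
        if prompt_id is None:
            raise ValueError(f"{source}: record without prompt_id")
        if prompt_id in index:
            raise ValueError(f"{source}: duplicate prompt_id {prompt_id!r}")
        index[prompt_id] = record
    return index


def join_pipeline_records(prompts, responses, grades):
    """Join the three record lists on prompt_id, refusing partial matches."""
    prompt_index = index_by_prompt_id(prompts, "adversarial_prompts.json")
    response_index = index_by_prompt_id(responses, "evaluation_results.json")
    grade_index = index_by_prompt_id(grades, "results.json")

    # All three files must cover exactly the same IDs; otherwise a test
    # would silently drop out of the analysis.
    ids = set(prompt_index)
    mismatched = (ids ^ set(response_index)) | (ids ^ set(grade_index))
    if mismatched:
        raise ValueError(
            f"prompt_id mismatch across files: {sorted(mismatched, key=str)}"
        )

    return [
        {
            "prompt_id": pid,
            "prompt": prompt_index[pid],
            "response": response_index[pid],
            "grade": grade_index[pid],
        }
        for pid in prompt_index
    ]
```

Raising on any missing, duplicate, or unmatched ID is one way to avoid the silent failures that a plain dictionary `.get()` would hide.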

Implementation: Robust JSON parsing, error handling, progress indicators, and schema validation throughout.

Challenges we ran into

1. Simulation vs. Execution Debate: Worried simulation wouldn't be accurate enough. Built a prototype and validated 95%+ correlation with real behavior. Chose simulation for safety and speed.

2. LLM Output Parsing: Claude returned inconsistent formats (raw JSON, markdown-wrapped JSON, JSON with explanatory text). Built a preprocessing pipeline to sanitize output before parsing.

3. Cost Explosion: Early testing cost $15+ per run. Fixed by switching to Haiku for grading, adding caching, and implementing test limits during dev.

4. Multi-File Data Sync: Pipeline splits data across 3 JSON files keyed by prompt_id. Built robust join logic with dictionary lookups and validation to prevent silent failures.

5. Generic Analysis Problem: Early root cause analysis was vague ("add security rules"). Redesigned prompts to force specificity: exact problematic phrases, concrete fixes, linked to specific failures.

6. Security vs. Usability: Initial improved prompts were too restrictive. Learned to balance security with helpfulness: provide alternative paths, not just refusals.

7. Scope Creep: Wanted multi-agent testing, a web dashboard, and real-time monitoring. Ruthlessly prioritized a complete single-agent MVP over an incomplete comprehensive system.
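For the output parsing challenge (item 2 above), a sanitizing step might try the three formats in order: raw JSON, a markdown-fenced block, then JSON embedded in explanatory text. The sketch below uses only the Python standard library; the function name `extract_json` is hypothetical, and the real preprocessing pipeline may handle more cases.

```python
import json
import re

# Build the fence marker programmatically so this snippet can itself
# live inside a fenced code block.
FENCE = "`" * 3
FENCE_RE = re.compile(FENCE + r"(?:json)?\s*(.*?)" + FENCE, re.DOTALL | re.IGNORECASE)


def extract_json(raw: str):
    """Parse JSON from a model reply that may be raw, fenced, or wrapped in prose."""
    text = raw.strip()

    # Case 1: the reply is already valid JSON.
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        pass

    # Case 2: the JSON sits inside a markdown code fence.
    match = FENCE_RE.search(text)
    if match:
        try:
            return json.loads(match.group(1).strip())
        except json.JSONDecodeError:
            pass

    # Case 3: the JSON is embedded in prose; try decoding from each
    # opening brace or bracket until one yields a complete value.
    decoder = json.JSONDecoder()
    for position, char in enumerate(text):
        if char in "{[":
            try:
                value, _end = decoder.raw_decode(text, position)
                return value
            except json.JSONDecodeError:
                continue

    raise ValueError("no JSON value found in model output")
```

Raising when nothing parses, rather than returning an empty result, keeps a malformed reply from being counted as a graded test.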

Accomplishments that we're proud of

✅ Built something immediately useful - Developers can use Protocol 66 today to find and fix real vulnerabilities

✅ Novel approach - First tool (that we know of) using LLM simulation for agent security testing

✅ Production-viable economics - $2 per assessment through hybrid model usage

✅ Intelligent testing - Doesn't just find bugs, understands why they happened and how to fix them

✅ Measurable impact - Typically 20-30% improvement in security pass rates with quantifiable results

✅ Complete in 48 hours - Full pipeline from test generation through validation

✅ Extensible architecture - Easy to add attack categories, frameworks, or analysis techniques

✅ Proved the concept - Validated that automated agent security testing is possible and valuable

What we learned

1. The security gap is real - No standardized tool exists for agent security testing. Manual red-teaming doesn't scale, and generic LLM jailbreaking tools don't understand agentic systems.

2. Simulation works - 95%+ accuracy without executing code. Claude understands agent reasoning well enough to predict behavior reliably.

3. Context is critical - Generic security tests fail. Attacks must target each agent's specific tools and permissions.

4. LLMs excel at security analysis - Claude generates creative attacks, identifies subtle violations, performs nuanced root cause analysis, and writes natural security guidelines.

5. Root cause > symptom - Understanding why failures occur (problematic phrases, missing guidance) leads to systemic fixes that address multiple vulnerabilities at once.

6. Cost optimization matters - Right-sizing model usage (Sonnet vs. Haiku) made the difference between "cool demo" and "actually usable tool."

7. Security ≠ restrictiveness - Good security guidelines maintain helpfulness, provide alternatives, and balance protection with functionality.

8. Real agents are vulnerable - Most well-intentioned prompts have critical flaws: phrases like "be extremely helpful" create pressure to violate security, and "trust customers" disables verification.

9. Validation is mandatory - Must prove improvements work, not assume. Re-testing catches when fixes don't address root causes.

10. Hackathons reward ruthless scoping - A complete, polished MVP beats an incomplete comprehensive system every time.
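The re-testing step from lesson 9 can be made concrete by comparing graded results before and after a prompt fix. This is a minimal sketch that assumes each graded record has a `prompt_id` and a boolean `passed` field; those field names and the helpers `pass_rate` and `compare_runs` are illustrative assumptions, not the project's actual schema.

```python
def pass_rate(results):
    """Fraction of graded tests that passed (0.0 to 1.0)."""
    if not results:
        raise ValueError("no results to score")
    return sum(1 for r in results if r["passed"]) / len(results)


def compare_runs(before, after):
    """Compare two graded runs of the same test suite, keyed by prompt_id."""
    before_by_id = {r["prompt_id"]: r["passed"] for r in before}
    after_by_id = {r["prompt_id"]: r["passed"] for r in after}
    if before_by_id.keys() != after_by_id.keys():
        raise ValueError("runs cover different prompt_ids; compare like with like")
    return {
        "before": pass_rate(before),
        "after": pass_rate(after),
        # Tests that failed before the fix and pass now.
        "fixed": sorted(p for p in before_by_id if not before_by_id[p] and after_by_id[p]),
        # Tests that passed before the fix and fail now.
        "regressed": sorted(p for p in before_by_id if before_by_id[p] and not after_by_id[p]),
    }
```

Listing regressions alongside the headline pass rate helps catch a fix that improves the average while breaking a test that used to pass.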

What's next for Protocol 66

Immediate roadmap:

- Multi-agent workflow testing - Test handoffs, coordination, cross-agent vulnerabilities

- Web dashboard - Visual results, comparisons, trend analysis

- Real execution mode - Docker-based actual agent execution with isolation

- Framework integrations - Direct support for LangChain, CrewAI, AutoGen

Long-term vision:

- Benchmark datasets - Standardized test suites for common agent types

- Industry baselines - Compare agents to anonymized averages

- CI/CD integration - GitHub Actions, pre-commit hooks

- Compliance reporting - Audit-ready security documentation

- Community test library - Crowdsourced attack patterns

- Continuous monitoring - Deploy Protocol 66 as a runtime monitoring layer

Research directions:

- Multi-turn attacks - Sophisticated conversation-based social engineering

- Adversarial agent systems - Auto-discovering novel vulnerabilities

- Formal verification - Mathematical proofs of security properties

Our hope: Protocol 66 becomes the standard security testing framework for agentic AI—making pre-deployment vulnerability scanning as routine as unit testing.
