For MLH reviewers: to explore the full site or see a demo, visit the website or watch the showcase video

Inspiration

Many of the cars I see every day are semi-autonomous, and fully autonomous, unattended vehicles are set to become a reality in the coming years. Our team was eager to learn, build, and contribute to this evolving field, especially since all of us are interested in becoming engineers and making an impact.

What it does

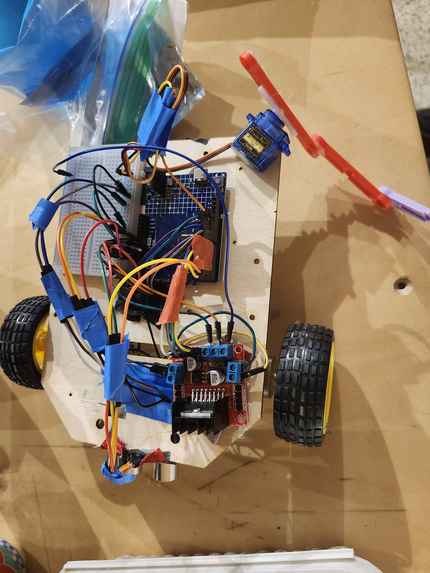

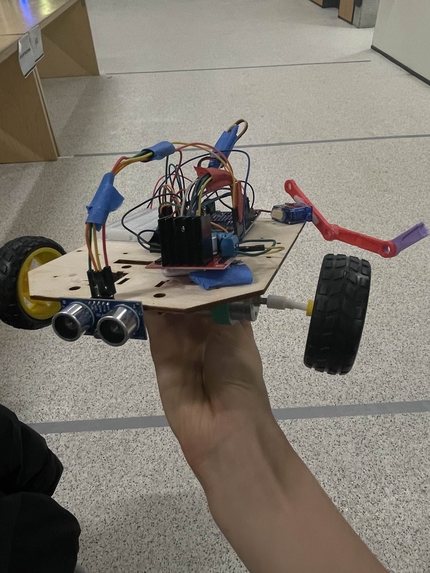

We built both an autonomous car MVP and an interactive website to showcase our prototype. Our car uses an IR sensor and a color sensor to increase line-tracking precision by 67%. On top of that, it includes an ultrasonic sensor to dodge obstacles and the new-generation Arduino R4 to accurately control the servo claw.

- The website uses ElevenLabs for accessibility, with an energetic announcer voice that narrates the unique history of our car, ROG-G67

- We also used the Gemini API to build our "pit stop" assistant, which helps users exploring our car learn more about it

How we built it

- We programmed the RC car in C++ using the Arduino IDE

- We built the website with React, using react-three-fiber for our 3D design
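To give a flavor of the kind of C++ logic that runs on the car, here is a simplified line-following decision in plain C++. It is a sketch under assumptions, not our actual firmware: it imagines two hypothetical digital line sensors (left and right) that each read true when over the line, and the `Steer` enum and `decideSteer` function are names invented for this example.

```cpp
// Possible steering commands for this illustrative example.
enum class Steer { Forward, Left, Right, Stop };

// leftOnLine / rightOnLine are hypothetical inputs: true when that sensor
// currently sees the line.
Steer decideSteer(bool leftOnLine, bool rightOnLine) {
    if (leftOnLine && rightOnLine) {
        return Steer::Forward;  // centered on the line
    }
    if (leftOnLine) {
        return Steer::Left;     // line is under the left sensor only: steer toward it
    }
    if (rightOnLine) {
        return Steer::Right;    // line is under the right sensor only: steer toward it
    }
    return Steer::Stop;         // line lost: stop rather than guess
}
```

In a real sketch, a function like this would be called from `loop()` after reading the sensor pins, with the result mapped to motor outputs.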

Challenges we ran into

- Our car's wheels kept breaking, which required us to perform repeated wheel changes

- We had to DIY the claw mechanism because the provided parts were incompatible

- We constantly ran into bugs in both the Arduino code and the website, so it took many rounds of iteration and calibration before everything worked

Accomplishments that we're proud of

- For many of us, this was our first time building any kind of autonomous vehicle, or any hardware at all

- None of us had much experience with C++, having primarily used Python, so we quickly advanced our coding knowledge

What we learned

- We learned how to use GitHub Copilot to improve our code quality and efficiency

What's next for ROG G67

Implement computer vision for human detection and improve spatial awareness.
