Inspiration

As AI-generated images, videos, and audio become more realistic, they are increasingly used for scams, impersonation, and misinformation. We were inspired by how difficult it has become for everyday users to quickly determine whether a piece of media can be trusted.

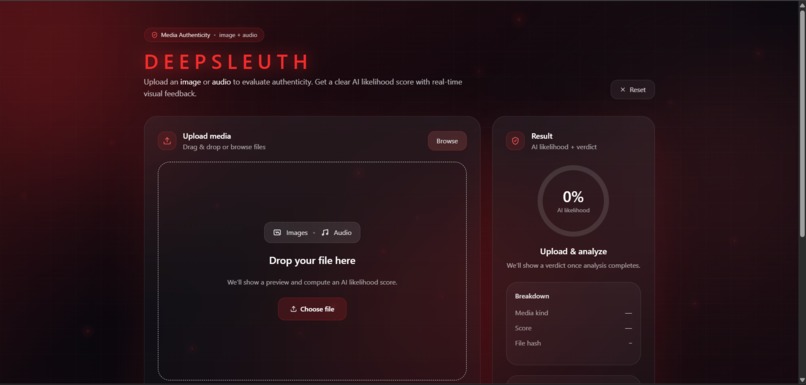

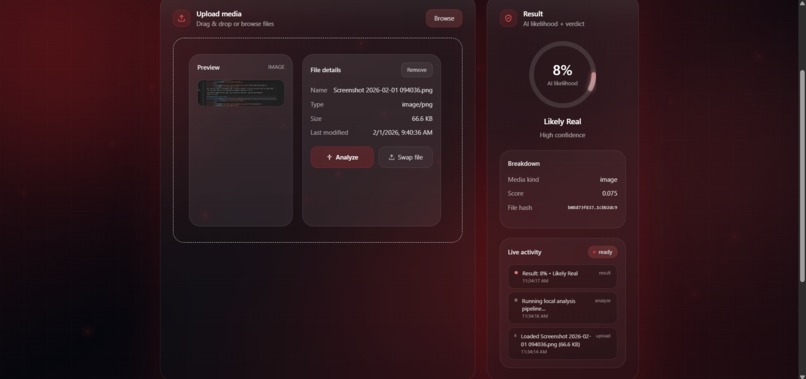

What it does

DeepSleuth analyzes images, videos, and audio to estimate the likelihood that the media was AI-generated. It combines results from multiple detection APIs into a single confidence score and risk assessment.

How we built it

We built a full-stack web application with a lightweight HTML and Tailwind CSS frontend and an Express backend. The backend routes media through the Reality Defender AI content detection API, normalizes the output, and aggregates the results into a score report.
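As a rough illustration of the normalize-and-aggregate step, here is a minimal sketch. It assumes each detection result has already been parsed into a small record carrying a score and the top of its scale. The `RawDetection` and `ScoreReport` shapes, the unweighted mean, and the 0.4 / 0.7 risk cutoffs are all hypothetical; they are not the Reality Defender response schema or DeepSleuth's actual scoring rule.

```typescript
// Hypothetical shape of one parsed detection result (not the real API schema).
interface RawDetection {
  source: string;   // label for the model or check that produced the score
  score: number;    // likelihood of AI generation on the detector's own scale
  scaleMax: number; // top of that scale, e.g. 1 or 100
}

// Hypothetical report returned to the frontend.
interface ScoreReport {
  confidence: number; // aggregate likelihood of AI generation, 0..1
  risk: "low" | "medium" | "high";
  signals: { source: string; normalized: number }[];
}

// Map a score from its native scale onto 0..1, clamping out-of-range values.
function normalize(d: RawDetection): number {
  if (!Number.isFinite(d.score) || !(d.scaleMax > 0)) {
    throw new RangeError(`invalid detection from ${d.source}`);
  }
  return Math.min(1, Math.max(0, d.score / d.scaleMax));
}

// Average the normalized signals and bucket the result into a risk level.
// The 0.4 and 0.7 cutoffs are illustrative assumptions.
function buildReport(detections: RawDetection[]): ScoreReport {
  if (detections.length === 0) {
    throw new Error("at least one detection is required");
  }
  const signals = detections.map((d) => ({
    source: d.source,
    normalized: normalize(d),
  }));
  const total = signals.reduce((sum, s) => sum + s.normalized, 0);
  const confidence = total / signals.length;
  const risk = confidence >= 0.7 ? "high" : confidence >= 0.4 ? "medium" : "low";
  return { confidence, risk, signals };
}
```

A plain mean keeps the sketch easy to follow; a weighted mean would be a natural variation if some signals proved more reliable than others.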

Challenges we ran into

The Reality Defender detection API produced different output formats, confidence scales, and latencies, which made aggregation nontrivial. Maintaining accuracy while avoiding false positives across different media types was also a challenge.
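One way to keep uneven latency from stalling aggregation is to give each detection call a time budget and aggregate only the scores that arrive in time. The sketch below assumes each detection call is wrapped as an async function that returns a score between 0 and 1. The `Detector` type and the `withTimeout` and `collectScores` helpers are hypothetical names introduced for illustration, not part of any real SDK.

```typescript
// Hypothetical wrapper type: takes media bytes, resolves to a 0..1 score.
type Detector = (media: Uint8Array) => Promise<number>;

// Resolve with the task's value, or with `fallback` if it takes longer than `ms`.
function withTimeout<T>(task: Promise<T>, ms: number, fallback: T): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => resolve(fallback), ms);
    task.then(
      (value) => {
        clearTimeout(timer);
        resolve(value);
      },
      (err) => {
        clearTimeout(timer);
        reject(err);
      },
    );
  });
}

// Run all detectors in parallel and keep only the scores that arrive in time.
// A detector that fails or times out contributes nothing instead of blocking.
async function collectScores(
  media: Uint8Array,
  detectors: Detector[],
  ms: number,
): Promise<number[]> {
  const results = await Promise.all(
    detectors.map((detect) => {
      const safe: Promise<number | null> = detect(media).catch(() => null);
      return withTimeout<number | null>(safe, ms, null);
    }),
  );
  return results.filter((r): r is number => r !== null);
}
```

Dropping slow or failed calls trades completeness for responsiveness, so a report built this way should probably show how many signals actually contributed.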

Accomplishments that we're proud of

We successfully integrated the Reality Defender API into a single, cohesive detection pipeline that supports images, videos, and audio. We are also proud of delivering a clean, intuitive interface that communicates risk clearly without overwhelming users.

What we learned

We learned that media authenticity is not binary and that combining multiple detection signals leads to more reliable results. We also gained experience building resilient full-stack systems under tight time constraints.

What's next for DeepSleuth

We plan to improve detection accuracy by analyzing multiple frames or audio segments instead of single samples. Future work includes adding user reporting, confidence explanations, and support for real-time media streams.
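A minimal sketch of what multi-frame analysis could look like: sample evenly spaced frames from a clip, score each one, and summarize the per-frame scores. The function names and the choice to report both a mean and a peak are assumptions made for illustration, not a description of existing DeepSleuth code.

```typescript
// Pick up to `count` evenly spaced frame indices from a clip of `totalFrames` frames.
function sampleFrameIndices(totalFrames: number, count: number): number[] {
  if (totalFrames <= 0 || count <= 0) return [];
  const n = Math.min(count, totalFrames);
  const step = totalFrames / n;
  // Take the midpoint of each of the n equal-width windows.
  return Array.from({ length: n }, (_, i) => Math.floor(step * (i + 0.5)));
}

// Summarize per-frame scores (each 0..1). The mean reflects the clip overall;
// the peak flags a short manipulated segment that the mean would dilute.
function combineFrameScores(scores: number[]): { mean: number; peak: number } {
  if (scores.length === 0) {
    throw new Error("at least one frame score is required");
  }
  const mean = scores.reduce((a, b) => a + b, 0) / scores.length;
  const peak = Math.max(...scores);
  return { mean, peak };
}
```

The same pattern would apply to audio by treating fixed-length segments as the units being sampled.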

Built With

- css

- express.js

- html

- javascript

- lucide

- react

- tailwind

- typescript

- vite
