Inspiration

It all started when our friends asked us a simple question: "How does ChatGPT work?" As we tried to explain it, we realized how difficult it can be to convey something complex to someone with limited experience in a field. As college students, we have all done research ourselves, and we see many of our peers heading into research. From our own experience, papers lean on jargon and technical concepts that make them hard to follow, especially for students who lack the required background.

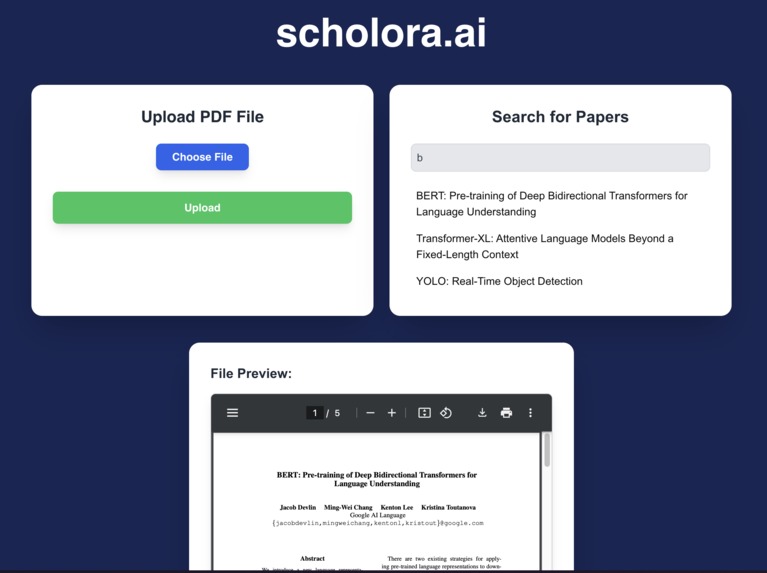

Therefore, we propose scholora.ai, a tool that explains these complex topics as if it were talking to an elementary schooler. Using visual diagrams generated with the Manim library and a voice agent for real-time conversations, it helps students understand research papers in a fun, engaging way.

What it does

First up, we have the Prerequisite Learner. Ever opened a research paper and realized you need to understand five other concepts first? Our Prerequisite Learner has got your back. It provides curated YouTube links that cover the basics you need before diving into the methods of the paper. Think of it as your guided roadmap to make sure you're ready before the real journey begins.

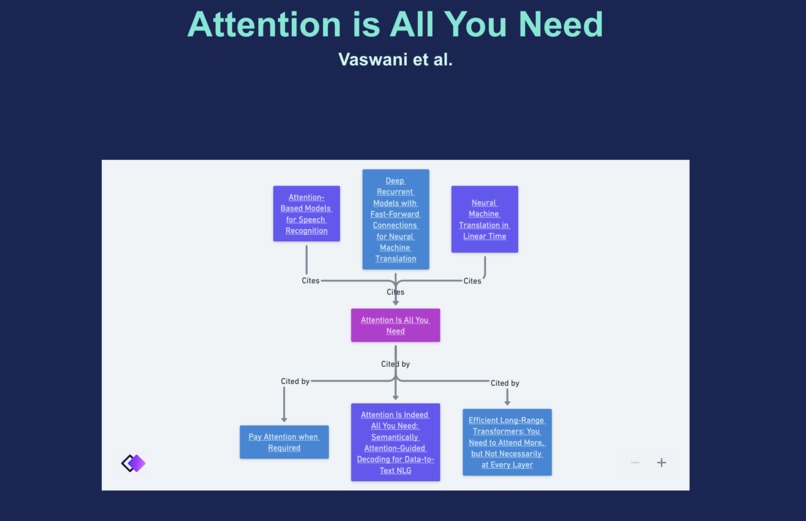

Next, there's the Knowledge Graph. Research papers don’t exist in isolation—they build upon each other in a web of ideas. Our Knowledge Graph shows you which papers influenced the current one, giving you more context and a deeper understanding of how the field evolved. It also suggests papers that reference the one you're reading, opening doors for further exploration.

Understanding complex ideas is much easier when you can visualize them. That’s why we’ve included Manim animations that break down the key concepts. Instead of struggling through dense equations, watch as the theory comes alive in engaging, step-by-step visualizations.

To keep you on track and ensure you're really grasping the content, we've added a Quiz feature. After each section of the paper, you'll be prompted with questions to test your understanding before moving on. It’s like having a friendly checkpoint that makes sure the journey isn’t just about moving forward, but also truly comprehending.

And finally, our Voicebot is here to make learning conversational. Got a question about the paper? Just ask the Voicebot, and it'll give you an easy-to-understand summary. No more struggling with jargon or feeling stuck—the Voicebot turns those confusing sections into a quick, friendly explanation.

How we built it

For the frontend, we used Next.js and React to build a responsive, dynamic user interface. We styled it with Tailwind CSS and assembled the layout with HTML, CSS, and JavaScript so that it is both functional and visually engaging. The goal was a seamless, enjoyable experience for users navigating the platform.

On the backend, things got even more exciting. We integrated Google Gemini's API to handle paper summarization and provide curated YouTube links for prerequisite learning. This helped make sure users could understand the key concepts before diving in too deep.

We used Chroma's API for semantic search. By analyzing papers in similar categories on arXiv, it identifies both the foundational papers that influenced the current one and the subsequent work that was influenced by it. This provides the backbone for our Knowledge Graph feature, allowing users to explore the interconnected world of academic research.
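The split into earlier and later related work can be sketched roughly as follows. This is a minimal illustration, not our production code: the `Paper` class, the `split_related` helper, the similarity threshold, and the use of publication dates to separate the two groups are all assumptions made for the example, and the embeddings are assumed to be precomputed. Similarity plus date is only a proxy for influence, not a citation check.

```python
from dataclasses import dataclass
from datetime import date
from math import sqrt


@dataclass(frozen=True)
class Paper:
    """Hypothetical record for one arXiv paper."""
    title: str
    published: date
    embedding: tuple  # assumed to be a precomputed embedding vector


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def split_related(current, candidates, threshold=0.8):
    """Return (earlier, later) papers whose similarity to `current` meets the threshold.

    Earlier papers are treated as possible influences, later ones as possible
    follow-up work. The threshold value is an assumption for illustration.
    """
    earlier, later = [], []
    for paper in candidates:
        if cosine_similarity(current.embedding, paper.embedding) < threshold:
            continue  # not similar enough to show in the graph
        if paper.published < current.published:
            earlier.append(paper)
        elif paper.published > current.published:
            later.append(paper)
    return earlier, later
```

In a setup like ours, the similarity lookup itself would be delegated to the vector store rather than computed by hand; the pure-Python version above only shows the idea.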

To bring visualizations to life, we used the Hyperbolic API paired with Llama 3.1 to generate Manim code that breaks down key concepts in the paper. This approach allows us to transform theoretical ideas into animations that are easier to grasp and far more engaging.
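One way to picture this step is a pair of small helpers: one that turns a scene description into a prompt, and one that pulls the Manim code back out of the model's reply. The sketch below is an assumption about how such glue code might look; `build_manim_prompt`, `extract_manim_code`, and the prompt wording are hypothetical, and the actual call to the model is left out.

```python
import re

FENCE = "`" * 3  # three backticks, built this way so the example nests cleanly


def build_manim_prompt(scene_description: str) -> str:
    """Build a prompt asking the model for one Manim scene (wording is illustrative)."""
    return (
        "Write a Manim Community scene in Python that illustrates the idea below.\n"
        "Define exactly one class that inherits from Scene and reply with a single "
        "fenced Python code block.\n\n"
        f"Scene description: {scene_description.strip()}"
    )


def extract_manim_code(reply: str) -> str:
    """Return the code from the first fenced block, or the whole reply if unfenced.

    Raises ValueError when the result does not define a Scene subclass, so a
    bad generation can be retried instead of being rendered.
    """
    pattern = re.compile(FENCE + r"(?:python|py)?[ \t]*\n(.*?)" + FENCE, re.DOTALL)
    match = pattern.search(reply)
    code = (match.group(1) if match else reply).strip()
    if not re.search(r"class\s+\w+\s*\(\s*\w*Scene\s*\)", code):
        raise ValueError("reply does not define a Manim Scene subclass")
    return code
```

Checking for a Scene subclass before rendering is a cheap guard: it catches replies that are prose or incomplete code without needing to run Manim first.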

For the interactive voicebot, we used Vapi.ai’s API with Groq's Llama 3.1 405b-reasoning as the backend model and Cartesia for voice synthesis. This lets users get a summary of a section, ask questions, and receive easy-to-understand answers, all through a conversational interface. To give users more control, we also used Flask to wire up simple "start" and "stop" buttons that activate and deactivate the Vapi.ai voicebot.
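The start and stop buttons can be backed by a couple of small Flask routes. The sketch below is a simplified assumption, not our actual server: the route paths and the in-memory `voicebot_state` flag are hypothetical, and the real voice session calls are replaced by flipping a boolean.

```python
from flask import Flask, jsonify

app = Flask(__name__)

# In-memory flag for illustration only; a real server would start or stop
# the actual voice session here instead of flipping a boolean.
voicebot_state = {"active": False}


@app.route("/voicebot/start", methods=["POST"])
def start_voicebot():
    """Handler for the "start" button: mark the voicebot as active."""
    voicebot_state["active"] = True
    return jsonify(voicebot_state)


@app.route("/voicebot/stop", methods=["POST"])
def stop_voicebot():
    """Handler for the "stop" button: mark the voicebot as inactive."""
    voicebot_state["active"] = False
    return jsonify(voicebot_state)


@app.route("/voicebot/status", methods=["GET"])
def voicebot_status():
    """Let the frontend ask whether the voicebot is currently active."""
    return jsonify(voicebot_state)
```

Each button in the frontend would send a POST request to the matching route, and the status route lets the page restore the correct button state after a reload.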

Challenges we ran into

Our main challenge was generating the Manim code, because the generated code had to produce diagrams for a wide variety of concepts. We eventually solved this by using the Hyperbolic API to generate Manim code from a scene description.

Accomplishments that we're proud of

We're proud of integrating the Vapi API, which lets users talk directly with the voice agent. We're also happy that we built a visual knowledge graph that shows users the history of a paper and its related work.

What we learned

We learned a lot about integrating LLMs into full-stack web applications and about styling our web app with Tailwind CSS.

What's next for scholora.ai

We hope to add a slider that adjusts the depth of responses to the user's skill level.
