Inspiration

Accessibility is a crucial part of our society. My late uncle was a mute person with a big heart, and he was the inspiration for this project. We wanted to give mute people a voice and give deaf people a way to communicate effectively with hearing and speaking people. Our goal is to bridge the gap between these groups so that everyone can connect with each other and hold conversations without accessibility barriers.

What it does

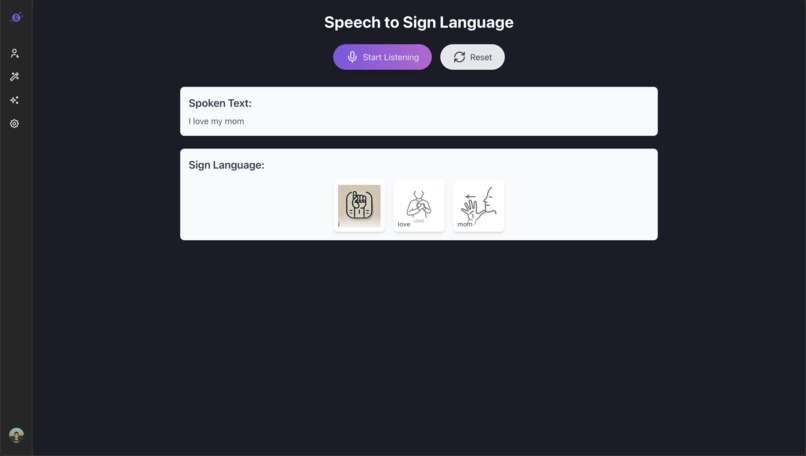

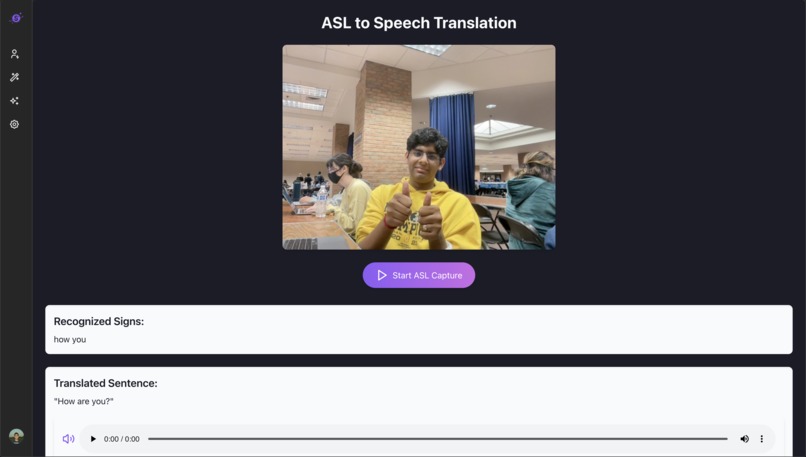

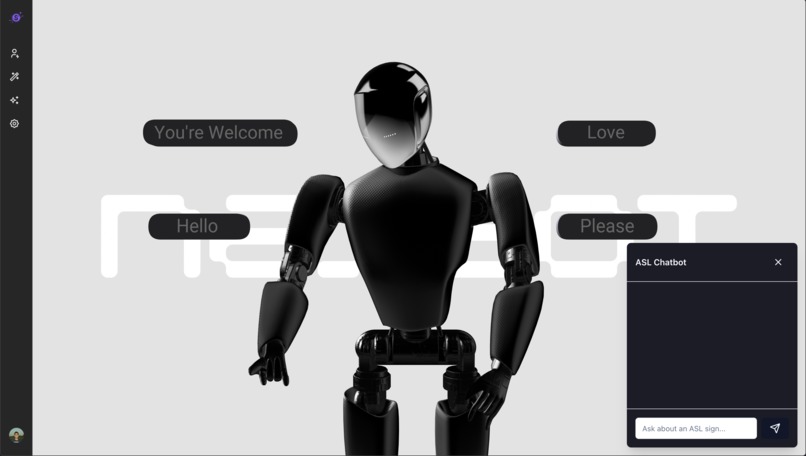

SignVerse bridges the communication gap for the Deaf community by offering bidirectional translation between speech/text and American Sign Language (ASL), along with an AI-powered 3D robot tutor that teaches ASL interactively. Our platform makes ASL accessible, helping users communicate seamlessly and learn efficiently.

How we built it

We used React and Next.js to build the front end, with Shadcn and Aceternity UI for the design. We used Clerk for user authentication, including email verification and Google OAuth sign-in. The dashboard contains an AI tutor, modeled in 3D with Spline and given custom animations, along with a generative ML chatbot that gives advice and guidance on ASL.

For sign-to-speech translation, we created a custom Roboflow workflow to train a model on ASL signs, so that our OpenCV pipeline could recognize signs in real time and translate them. The translated text is then passed through text-to-speech, giving signers a real voice in the conversation.

For the reverse direction, users can speak into the microphone: the speech is transcribed in real time, and the text is converted into ASL through a custom mapping of common English words to hand-sign icons.
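The word-to-icon step can be sketched as a lookup with a fallback. This is a minimal Python sketch, assuming the transcript has already been produced by the speech recognizer. The icon table, the file paths, the `transcript_to_signs` name, the greedy longest-phrase matching, and the fingerspelling fallback for unmapped words are all hypothetical; the writeup only says that common English words map to hand-sign icons.

```python
import re

# Hypothetical phrase table (placeholder entries, not SignVerse's real mapping):
# lowercase word or phrase -> icon file for its sign.
SIGN_ICONS: dict[str, str] = {
    "hello": "icons/hello.png",
    "thank you": "icons/thank_you.png",
    "how are you": "icons/how_are_you.png",
    "my": "icons/my.png",
    "name": "icons/name.png",
}

# Hypothetical fingerspelling fallback: one icon per letter.
LETTER_ICON = "icons/letters/{}.png"

# Longest phrase (in words) we ever need to try matching.
MAX_PHRASE_WORDS = max(len(key.split()) for key in SIGN_ICONS)


def transcript_to_signs(transcript: str) -> list[str]:
    """Map a speech transcript to a sequence of sign icon paths.

    Uses greedy longest-match over SIGN_ICONS so multi-word phrases win
    over single words; unknown words are fingerspelled letter by letter.
    """
    words = re.findall(r"[a-z']+", transcript.lower())
    icons: list[str] = []
    i = 0
    while i < len(words):
        # Try the longest candidate phrase starting at position i first.
        for n in range(min(MAX_PHRASE_WORDS, len(words) - i), 0, -1):
            phrase = " ".join(words[i:i + n])
            if phrase in SIGN_ICONS:
                icons.append(SIGN_ICONS[phrase])
                i += n
                break
        else:
            # No known sign for this word: fingerspell it (apostrophes dropped).
            icons.extend(LETTER_ICON.format(c) for c in words[i] if c.isalpha())
            i += 1
    return icons
```

For example, `transcript_to_signs("Hello, thank you! My name is Ann.")` yields the icons for "hello", "thank you", "my", and "name", followed by fingerspelled letters for "is" and "ann". Matching the longest phrase first keeps "thank you" from being split into two separate lookups.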

Challenges we ran into

Most datasets and APIs online cover only the ASL alphabet (fingerspelling), which is not enough for practical translation, since ASL is mostly signed at the word and phrase level. To address this, we had to find much larger datasets with hundreds of classes for unique words and phrases, which made the classification model much harder to train. Many of these datasets were also outdated, contained broken links, and were hard to process because they consist of videos rather than images. Creating the custom animations for the 3D Spline-based AI tutor was difficult, since it was our first time working with 3D modeling. The journey was tough, but it taught us a lot about software and web development.
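Because the word-level datasets were videos, each clip has to be reduced to a handful of still frames before it can serve as image training data. One common approach is evenly spaced frame sampling, sketched below in Python. The function name and the sampling strategy are assumptions for illustration, not necessarily what we used.

```python
def sample_frame_indices(total_frames: int, num_samples: int) -> list[int]:
    """Pick up to num_samples distinct frame indices spread evenly over a clip.

    The clip is divided into num_samples equal-width segments and the frame
    at the centre of each segment is chosen.
    """
    if total_frames <= 0 or num_samples <= 0:
        return []
    # Never ask for more frames than the clip actually has.
    num_samples = min(num_samples, total_frames)
    segment = total_frames / num_samples
    return [int(segment * k + segment / 2) for k in range(num_samples)]
```

With OpenCV, the frame count can be read from a `cv2.VideoCapture` via `cv2.CAP_PROP_FRAME_COUNT`, and each sampled frame can be fetched by seeking with `cap.set(cv2.CAP_PROP_POS_FRAMES, idx)` and then calling `cap.read()`. Note that some video containers report only an approximate frame count.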

Accomplishments that we're proud of

- To work around the limited datasets, we combined data from multiple Roboflow workspaces using Roboflow's merge option. We then trained a custom YOLOv8 model on the merged data in Google Colab, producing custom weights that we used for real-time ASL translation.

- We created a custom animated 3D model for sign language tutoring using Spline, which taught us a lot about modern 3D web development.

- We also learned some ASL along the way, and we are able to sign various words and phrases, which was super cool.
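Combining datasets from different sources raises one detail worth seeing: each source numbers its classes independently, so the same word can have different class IDs in different datasets. The Python sketch below shows how YOLO-format text labels (one `class cx cy w h` line per bounding box) can be remapped onto a shared class list. The function names and example class lists are hypothetical, and this is not a description of how Roboflow implements its merge.

```python
def build_merged_classes(*name_lists: list[str]) -> list[str]:
    """Union of class names across datasets, keeping first-seen order."""
    merged: list[str] = []
    for names in name_lists:
        for name in names:
            if name not in merged:
                merged.append(name)
    return merged


def remap_label_file(text: str, src_names: list[str], merged: list[str]) -> str:
    """Rewrite YOLO label lines ("class cx cy w h") to use merged class IDs."""
    index = {name: i for i, name in enumerate(merged)}
    out_lines = []
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue  # skip blank lines
        # Translate source ID -> class name -> merged ID; box coords unchanged.
        new_id = index[src_names[int(parts[0])]]
        out_lines.append(" ".join([str(new_id), *parts[1:]]))
    return "\n".join(out_lines)


# Hypothetical example: two datasets that order their classes differently.
dataset_a = ["hello", "thanks", "yes"]
dataset_b = ["yes", "no", "hello"]
merged = build_merged_classes(dataset_a, dataset_b)
print(merged)
print(remap_label_file("0 0.5 0.5 0.2 0.3\n1 0.4 0.4 0.1 0.1", dataset_b, merged))
```

Here `merged` becomes `["hello", "thanks", "yes", "no"]`, so a box labeled `0` ("yes") in the second dataset is rewritten as class `2`, and a box labeled `1` ("no") becomes class `3`. The box coordinates pass through untouched because they are already normalized per image.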

What we learned

We learned a great deal during this project: 3D development, sign language, and building and training computer vision models. We also learned a lot about generative AI and about crafting custom data inputs to make LLMs more robust and efficient.

What's next for SignVerse

Our team plans to continue the project and experiment with other models, such as Google MediaPipe, to improve accuracy and to process video directly rather than classifying individual frames. We also want to add more words and phrases, and possibly expand to other sign languages such as British Sign Language (BSL).
