What it does

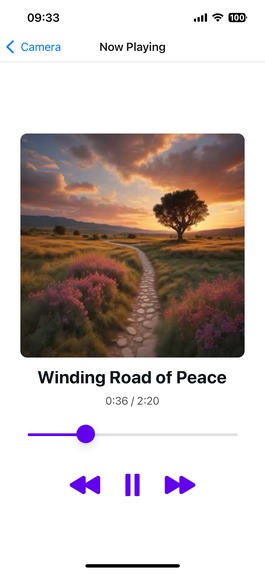

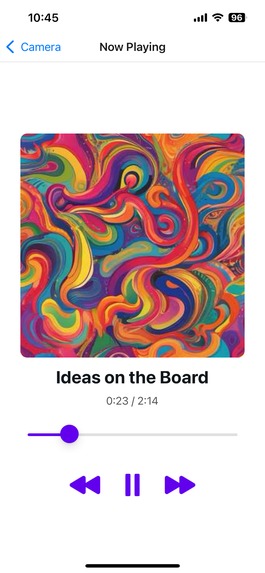

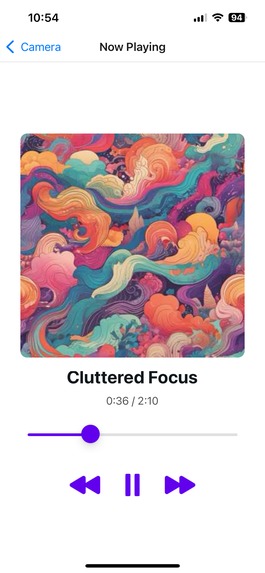

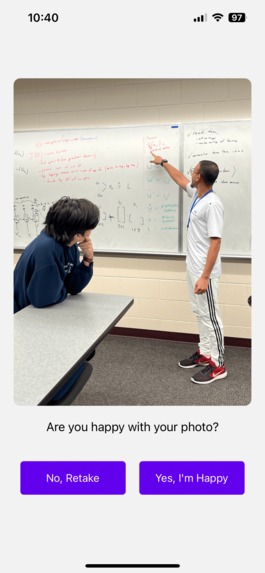

SnapTracks is a mobile app that lets users photograph their surroundings and generates custom music from the image and other details of their environment. The image is analyzed with Google's Vision AI tools. That analysis is combined with other data, such as location, time, and weather, to create an instrumental track that serves as background music for the user's surroundings. The interface is simple: users can start generating a song in as few as three taps.

How we built it

We built the mobile app with Expo, a framework built on React Native, so users can take pictures and listen to music from anywhere. Our backend is a Flask server that relays requests between the front end and the external services we use. We used Gemini 1.0 Pro Vision (gemini-1.0-pro-vision-001) for image analysis, location and weather APIs for additional context, GPT-4o mini to turn those parameters into a prompt for music generation, and Suno-AI-v2 to create songs tailored to the input.
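As a rough illustration of the prompt-building step, here is a minimal sketch of how the backend might combine the image analysis with location, time, and weather before handing the result to the language model. The `SceneContext` class, the `time_of_day` buckets, and the wording of the request are all assumptions made for this example, not our actual code.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class SceneContext:
    """Hypothetical container for the signals the backend gathers."""
    image_description: str  # text returned by the vision model
    city: str               # from a location lookup
    weather: str            # e.g. "light rain"
    taken_at: datetime      # when the photo was taken


def time_of_day(moment: datetime) -> str:
    """Bucket an hour into a coarse label (the cutoffs are arbitrary)."""
    hour = moment.hour
    if 5 <= hour < 12:
        return "morning"
    if 12 <= hour < 17:
        return "afternoon"
    if 17 <= hour < 21:
        return "evening"
    return "night"


def build_prompt_request(ctx: SceneContext) -> str:
    """Assemble the text sent to the model that writes the music prompt."""
    return (
        "Write a short prompt for an instrumental background track.\n"
        f"Scene: {ctx.image_description}\n"
        f"Location: {ctx.city}\n"
        f"Weather: {ctx.weather}\n"
        f"Time of day: {time_of_day(ctx.taken_at)}\n"
        "No vocals or lyrics."
    )
```

Keeping this step as a pure function of the gathered context makes it easy to test without calling any external service.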

Challenges we ran into

One issue was that Google Vision hallucinated, inventing details that were not in the image it was analyzing. We overcame this by experimenting with our prompting and switching to a more powerful vision model. We also had issues with Suno's API. It returns JSON in less than 10 seconds, but the .mp3 link in the payload isn't functional until 90+ seconds after the original API call. We couldn't reduce that delay, but we added robust logging and error handling so that our loading page and the rest of the app keep functioning while waiting for the music.
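The waiting behavior for the delayed .mp3 link can be sketched as a polling loop with a timeout. This is a simplified illustration, not our production code: `wait_until_ready`, its parameters, and the default timeout and interval are hypothetical, and the readiness check is passed in as a callable so the loop does not depend on any particular HTTP client.

```python
import time
from typing import Callable


def wait_until_ready(
    is_ready: Callable[[], bool],
    timeout_s: float = 180.0,
    interval_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Poll is_ready() until it returns True or timeout_s elapses.

    Exceptions raised by is_ready() are treated as "not ready yet" so a
    transient network error does not abort the wait.
    """
    deadline = clock() + timeout_s
    while True:
        try:
            if is_ready():
                return True
        except Exception:
            pass  # not ready; a real app would log the error here
        if clock() >= deadline:
            return False
        sleep(interval_s)
```

In practice, `is_ready` could be a small function that requests the .mp3 link and returns True once the server responds successfully, while the loading page stays up until `wait_until_ready` returns.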

Accomplishments that we're proud of

We are proud that the app works from photo to finished track. The songs sound excellent, and they capture the vibes of the user's environment well. Our UI, although simple, is intuitive and clean.

What we learned

We learned that mobile development presents many unique challenges, especially when integrating other components of the tech stack. A simulator doesn't always replicate how a mobile application behaves on real hardware, so we learned how important it is to test constantly on a physical device to make sure the application works as expected for end users.

What's next for SnapTracks

One feature we would love to implement is the option for users to generate playlists. Generating one song based on the user's current activity is impressive, but it would be even better to produce a whole playlist's worth of songs that fit the vibe the user is looking for, so that the vibe never stops.

Built With

- chatgpt

- expo.io

- flask

- gemini

- python

- suno

- typescript
