Inspiration

In 2023, the San Francisco Chronicle published an article reporting that the average response time for emergency (911) requests in San Francisco is 9 minutes. This got us thinking about the need for a system that can help reduce this time and, in turn, help people in need. As we researched further, we realised that in practice the wait is often much longer than 9 minutes. We saw numerous people complaining about this on social media. One horrifying story we came across was of a man who had an accident in the middle of the freeway and called emergency services but couldn't connect to them for 2 hours.

These stories, and our resolve to help people in need, inspired us to create an AI-based solution that helps minimize emergency response times.

What it does

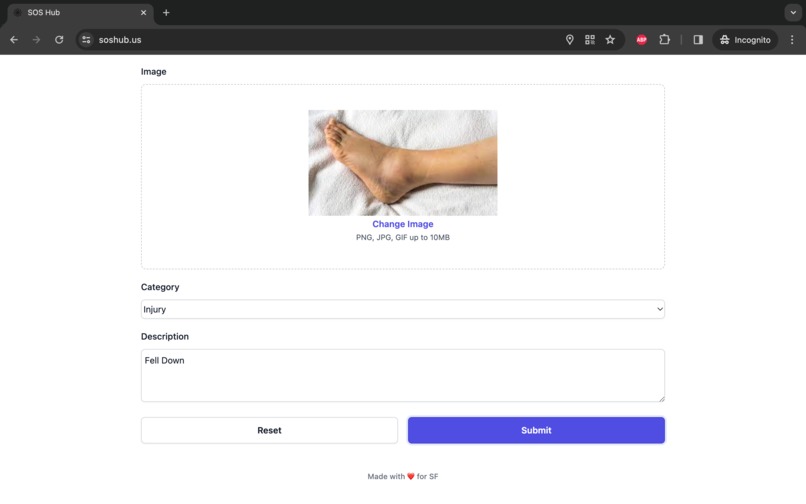

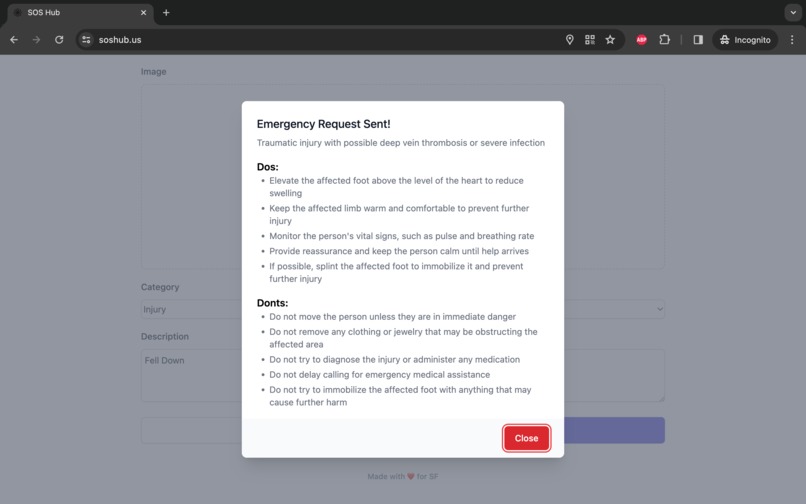

SOS-Hub is an AI-powered emergency response platform that leverages the power of computer vision and natural language processing to assist first responders and emergency services. With SOS-Hub, users can upload images of an emergency situation, and our AI models will analyze the image to identify the type of emergency, assess its severity, and recommend appropriate first responders (e.g., police, firefighters, EMTs, paramedics).

Additionally, SOS-Hub offers a user-friendly interface where individuals can enter their emergency category and description. We also capture their location. Our AI models then compare this information with the analysis of the visual data, checking for consistency and providing tailored recommendations for immediate action. These recommendations include a list of "dos" and "don'ts" for those affected or nearby, empowering them to take appropriate steps while awaiting the arrival of professional assistance.

How we built it

SOS-Hub is a web application built using a combination of technologies. The frontend is developed in React, and the backend is powered by Flask and integrated with our AI models.

For the AI component, we use the OpenAI API and the Fireworks AI platform. The firellava-13b model from Fireworks performs the initial image analysis, producing a detailed description of the emergency situation. This output is then processed by the llama-v2-70b-chat model, which structures the information into a concise JSON format, compares it with the user input, and generates tailored recommendations.
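As a rough illustration of the "structure into JSON" step, the sketch below shows one way a backend could pull a JSON object out of a chat model's reply and validate it before using it. The field names (`category`, `severity`, `responders`), the severity levels, and the `parse_analysis` helper are all hypothetical and are not taken from the SOS-Hub codebase.

```python
import json
from dataclasses import dataclass, field

# Hypothetical schema: these severity levels and field names are assumptions
# made for illustration, not SOS-Hub's actual output format.
ALLOWED_SEVERITIES = {"low", "medium", "high", "critical"}


@dataclass
class EmergencyAnalysis:
    category: str
    severity: str
    responders: list = field(default_factory=list)


def parse_analysis(raw_reply: str) -> EmergencyAnalysis:
    """Parse the span from the first '{' to the last '}' in a model reply."""
    start = raw_reply.find("{")
    end = raw_reply.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model reply")

    data = json.loads(raw_reply[start:end + 1])

    category = str(data.get("category", "")).strip().lower()
    if not category:
        raise ValueError("model reply is missing a category")

    severity = str(data.get("severity", "")).strip().lower()
    if severity not in ALLOWED_SEVERITIES:
        raise ValueError(f"unexpected severity: {severity!r}")

    # Normalise responder names so later comparisons are case-insensitive.
    responders = [str(r).strip().lower() for r in data.get("responders", [])]
    return EmergencyAnalysis(category, severity, responders)
```

Chat models often wrap JSON in extra prose, so slicing out the braces before calling `json.loads` and rejecting unexpected values keeps malformed replies from reaching the recommendation step.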

To maintain data continuity and optimize storage, we incorporated Neurelo into SOS-Hub. Through Neurelo's API, we securely store analysis outcomes and insights in the Neurelo database, which spares us much of the usual database programming and improves developer productivity.

Challenges we ran into

One of the primary challenges we faced was accurately interpreting and analyzing images in real time. Images of emergency situations can be complex and, at times, unique, making it difficult for an AI model to identify and analyze the situation. We overcame this challenge by fine-tuning our models and applying prompt engineering techniques, which helped the models recognize patterns and extract relevant information more accurately.

Another challenge was comparing the AI analysis with user-provided input. In some cases, there may be discrepancies between the AI's interpretation of the image and the user's description of the emergency. We addressed this by implementing a comparison mechanism that flags potential mismatches and provides clear guidance to users.
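A minimal sketch of such a comparison step is shown below, assuming both the user's report and the AI analysis are reduced to a single category string. The `compare_reports` function, the `ComparisonResult` type, and the message wording are hypothetical; the actual SOS-Hub mechanism may compare richer information.

```python
from dataclasses import dataclass


@dataclass
class ComparisonResult:
    consistent: bool
    message: str


def compare_reports(user_category: str, ai_category: str) -> ComparisonResult:
    """Flag a mismatch between the user's category and the AI's category."""
    # Normalise both values so "Fire " and "fire" count as the same category.
    user = user_category.strip().lower()
    ai = ai_category.strip().lower()

    if user == ai:
        return ComparisonResult(
            True, f"Image analysis agrees with the reported category: {user}."
        )
    return ComparisonResult(
        False,
        f"You reported '{user}', but the image looks like '{ai}'. "
        "Please confirm the category.",
    )
```

Returning a message alongside the flag lets the frontend show the user why the request was flagged instead of silently overriding their input.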

Accomplishments that we're proud of

We are immensely proud of creating a user-friendly and intuitive platform that combines the power of AI with human input to provide accurate emergency response recommendations in real time. We were able to integrate multiple AI models and leverage cutting-edge technologies like LLMs to develop a solution that can potentially save lives.

Furthermore, we are proud of the scalability and adaptability of our platform. SOS-Hub can be easily expanded to incorporate additional AI models to handle various types of emergencies.

What we learned

Throughout the development of SOS-Hub, we learned about the intricacies of AI model integration, real-time data processing, and user experience design. We also learned the importance of model fine-tuning to ensure accurate and reliable results, especially in critical emergency situations.

Additionally, we learned about new cloud-based database solutions like Neurelo and how they can be used for seamless data storage in applications with high concurrency and scalability requirements.

What's next for SOS-Hub

Our immediate goal is to further refine our AI models and expand our dataset to encompass a wider range of emergency scenarios. This will ensure broader coverage and improved accuracy. We also want to use voice-to-text technologies and give users the option to send in audio recordings. Finally, we will let users attach videos and not just images.

Currently, we assume that all submitted requests are real emergencies, but in the future we will add fake request detection using reinforcement learning and human flagging.

Built With

- ai

- api

- computer-vision

- fireworks

- flask

- godaddy

- headless-ui

- heroku

- javascript

- llm

- machine-learning

- mongodb

- neurelo

- openai

- prompt-engineering

- python

- react

- rest

- tailwind

- vercel
