Inspiration

We wanted to eliminate the need to find and press a specific button or screen, especially when a device is out of reach or when you're busy with something else. With less physical interaction with their device, users can stay focused on their primary task, whether that's studying, working, or simply enjoying their music without interruptions.

What it does

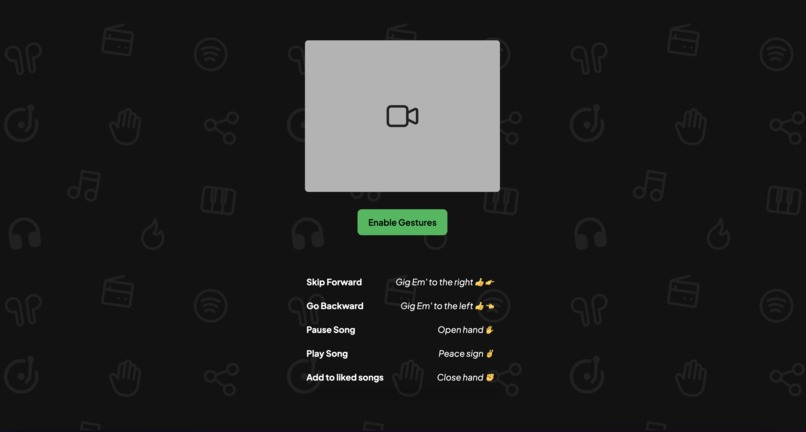

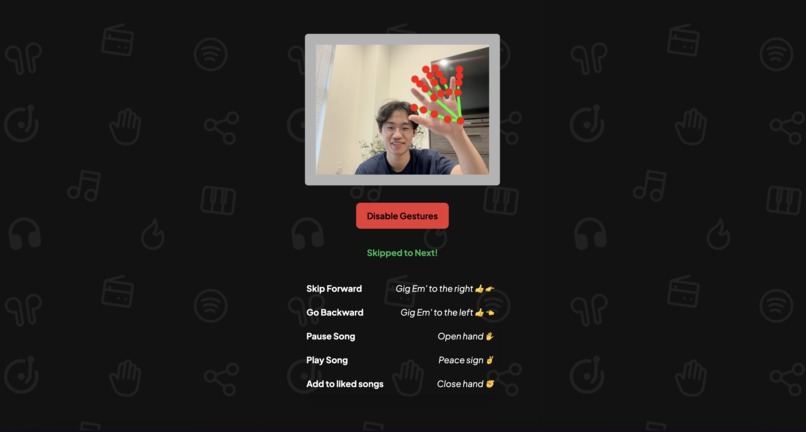

SpotifyAIR offers a more engaging way to interact with music. A user can log in to their Spotify account and enable gestures to play, pause, skip, and save songs.

How we built it

We used MediaPipe's computer vision model to recognize gestures, the Spotify API to control music, and SvelteKit with TypeScript for the web application.
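As a rough illustration of how a recognized gesture could be turned into a Spotify call, here is a minimal sketch. The gesture labels are the ones shipped with MediaPipe's default gesture recognizer, but which gesture triggers which action is an assumption made for this example, not our actual mapping. `requestForGesture` and `sendGesture` are hypothetical helper names.

```typescript
// Hypothetical sketch: map a gesture label to a Spotify Web API request.
type SpotifyRequest = { method: "PUT" | "POST"; url: string };

const API = "https://api.spotify.com/v1";

// The gesture-to-action pairing below is assumed for illustration only.
function requestForGesture(
  gesture: string,
  currentTrackId?: string
): SpotifyRequest | null {
  switch (gesture) {
    case "Open_Palm": // pause playback
      return { method: "PUT", url: `${API}/me/player/pause` };
    case "Closed_Fist": // start or resume playback
      return { method: "PUT", url: `${API}/me/player/play` };
    case "Pointing_Up": // skip to the next track
      return { method: "POST", url: `${API}/me/player/next` };
    case "Thumb_Up": // save the current track to Liked Songs
      return currentTrackId
        ? {
            method: "PUT",
            url: `${API}/me/tracks?ids=${encodeURIComponent(currentTrackId)}`,
          }
        : null;
    default: // unrecognized gesture or "None": do nothing
      return null;
  }
}

// Sends the request with the user's OAuth access token.
// Returns false when the gesture is unmapped or the API call fails.
async function sendGesture(
  gesture: string,
  accessToken: string,
  currentTrackId?: string
): Promise<boolean> {
  const req = requestForGesture(gesture, currentTrackId);
  if (!req) return false;
  const res = await fetch(req.url, {
    method: req.method,
    headers: { Authorization: `Bearer ${accessToken}` },
  });
  return res.ok;
}
```

Keeping the mapping in a pure function separate from the network call makes it easy to test and to swap gestures without touching the request code.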

Challenges we ran into

We had trouble with the math needed to tune the model's gesture recognition, and we also ran into issues authorizing with the Spotify API.
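For anyone hitting similar authorization issues, the first step of Spotify's authorization code flow is redirecting the user to the accounts service with the right scopes. The sketch below only builds that URL. The function name `buildAuthorizeUrl` and the exact scope list are assumptions for this example, and the flow we ended up using may differ.

```typescript
// Hypothetical sketch: build the Spotify authorization URL for the
// authorization code flow. The scope list is an assumption based on the
// features described (playback control and saving tracks).
const AUTHORIZE_ENDPOINT = "https://accounts.spotify.com/authorize";

const SCOPES = [
  "user-modify-playback-state", // play, pause, skip
  "user-read-currently-playing", // find the track to save
  "user-library-modify", // add to Liked Songs
];

function buildAuthorizeUrl(
  clientId: string,
  redirectUri: string,
  state: string
): string {
  const params = new URLSearchParams({
    response_type: "code",
    client_id: clientId,
    redirect_uri: redirectUri, // must match a URI registered for the app
    scope: SCOPES.join(" "), // scopes are space-separated
    state, // random value checked on return to guard against CSRF
  });
  return `${AUTHORIZE_ENDPOINT}?${params.toString()}`;
}
```

A missing scope or a redirect URI that does not match the one registered in the Spotify developer dashboard are both common reasons for authorization to fail.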

Accomplishments that we're proud of

We're proud of the range of features and gestures the app recognizes, from skipping forward to adding a track to Liked Songs. It truly is a new way to interact with your music.

What we learned

We learned how to use computer vision models for the first time and how to integrate them with the Spotify API in a web application. This was a huge learning experience for all of us!

What's next for SpotifyAIR

We plan to train specialized models to improve accuracy and support personalized gesture recognition.

Built With

- mediapipe

- spotify

- svelte

- typescript
