🏋️‍♂️ Inspiration

Our inspiration came from Nathan Wu, one of our team members and an avid gym-goer. Nathan often noticed how intimidating and unsafe it can feel for beginners to learn proper lifting form without guidance. With squat form being one of the most critical and injury-prone movements in strength training, we set out to build a tool that could make gym training more accessible, safer, and smarter using real-time computer vision and feedback.

🔍 What it does

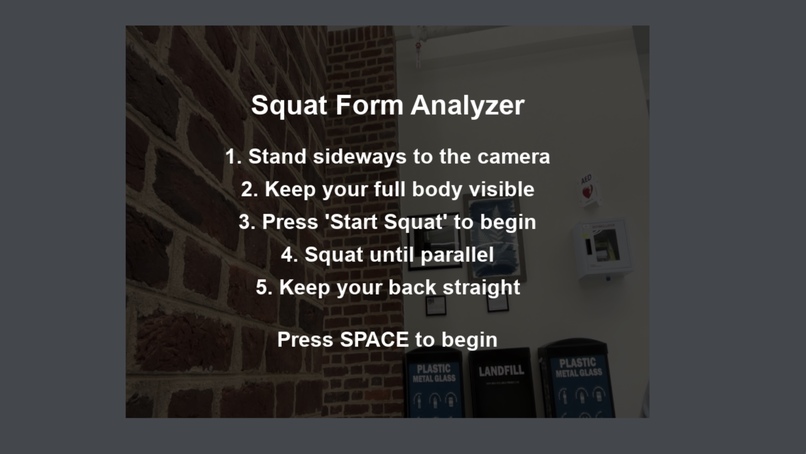

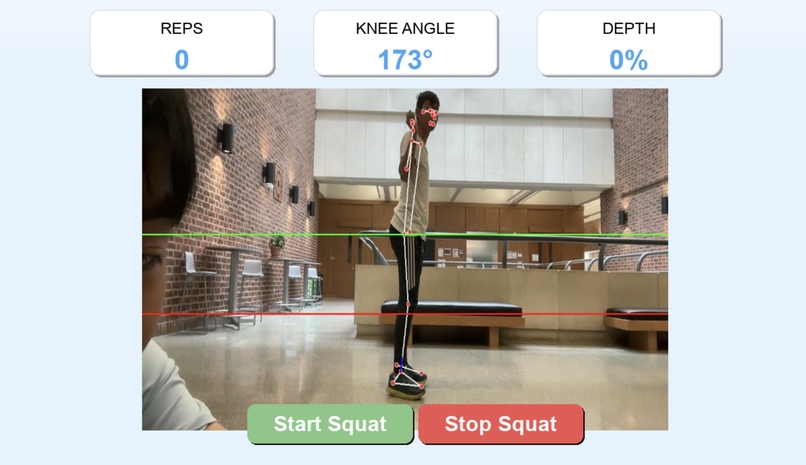

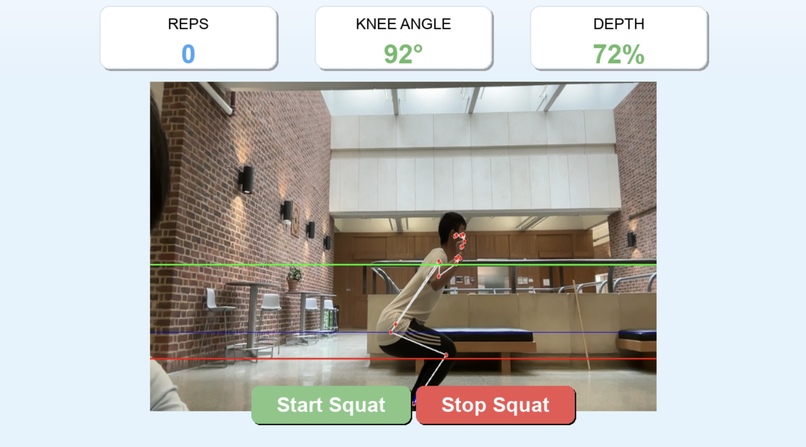

SquatPRo is a real-time squat analysis tool that uses computer vision to help users perfect their form. It detects squat depth, counts reps, tracks movement metrics, and provides instant visual and audio feedback. At the end of each session, users receive a detailed summary of their performance.

🛠️ How we built it

We built the app in Python using:

- OpenCV for video capture and image processing

- MediaPipe for pose estimation and joint tracking

- Pygame for visual overlays and the real-time interface

- NumPy for calculations and metric tracking

We tuned the system to detect key moments in the squat motion, calculate depth, and count reps accurately, all with a webcam and no wearables. We also included setup guidelines to improve accuracy, such as camera height and lighting conditions.
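As a rough illustration of how depth detection and rep counting can work from pose landmarks, the sketch below computes the knee angle from hip, knee, and ankle coordinates and counts a rep each time the angle drops below a bottom threshold and then returns to standing. The names `joint_angle` and `RepCounter` and the 90°/160° thresholds are assumptions made for illustration, not values taken from the project. It uses only the Python standard library to stay self-contained; the same math could be written with NumPy.

```python
import math
from dataclasses import dataclass


def joint_angle(a, b, c):
    """Return the angle at point b (in degrees) formed by the points a-b-c.

    Each point is an (x, y) pair, e.g. hip, knee, and ankle coordinates.
    """
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(*v1)
    n2 = math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("degenerate joint: two points coincide")
    cos_t = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to guard against floating-point drift outside [-1, 1].
    cos_t = max(-1.0, min(1.0, cos_t))
    return math.degrees(math.acos(cos_t))


@dataclass
class RepCounter:
    """Two-state counter: a rep is one descent followed by a full stand."""

    down_angle: float = 90.0   # hypothetical "deep enough" knee angle
    up_angle: float = 160.0    # hypothetical "standing" knee angle
    reps: int = 0
    is_down: bool = False

    def update(self, knee_angle):
        """Feed one knee-angle sample and return the rep count so far."""
        if not self.is_down and knee_angle <= self.down_angle:
            self.is_down = True
        elif self.is_down and knee_angle >= self.up_angle:
            self.is_down = False
            self.reps += 1
        return self.reps
```

Using two separate thresholds instead of one gives the counter hysteresis, so small jitters around a single cutoff do not register as extra reps.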

🚧 Challenges we ran into

- Pose estimation variability: Rapid motion and joint occlusion made it difficult to consistently track hip and knee positions.

- Lighting sensitivity: MediaPipe’s detection suffered in low-light conditions, so we had to recommend setup best practices.

- Camera positioning: We found that consistent form detection relied heavily on the user’s orientation and distance from the camera.
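One common way to reduce this kind of tracking jitter is to smooth each landmark over time and ignore frames where the joint is poorly detected. The sketch below is a minimal example of that idea, assuming each landmark arrives as an `(x, y)` position plus a visibility score between 0 and 1. The class name `LandmarkSmoother` and the `alpha` and `min_visibility` defaults are hypothetical and not taken from the project.

```python
class LandmarkSmoother:
    """Exponential moving average over one landmark's (x, y) position."""

    def __init__(self, alpha=0.4, min_visibility=0.5):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha                    # weight given to the newest sample
        self.min_visibility = min_visibility  # hypothetical confidence cutoff
        self.state = None                     # last smoothed (x, y), if any

    def update(self, x, y, visibility):
        """Feed one sample and return the smoothed (x, y), or None if no
        sufficiently visible sample has been seen yet."""
        if visibility < self.min_visibility:
            # Occluded or uncertain joint: hold the last good estimate.
            return self.state
        if self.state is None:
            self.state = (x, y)
        else:
            a = self.alpha
            sx, sy = self.state
            self.state = (a * x + (1 - a) * sx, a * y + (1 - a) * sy)
        return self.state
```

A lower `alpha` gives steadier positions at the cost of more lag, which matters when feedback has to arrive mid-rep.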

🏆 Accomplishments that we're proud of

- Building a fully functional prototype that accurately tracks squats in real time

- Implementing dynamic form feedback to guide users mid-rep

- Creating a complete session summary dashboard with actionable performance metrics

📚 What we learned

We gained hands-on experience with pose estimation, real-time video processing, and human-computer interaction. We also learned how to design interfaces and systems that provide intuitive, actionable feedback from noisy sensor input.

🔮 What's next for SquatPRo

We plan to:

- Add support for additional exercises like deadlifts and lunges

- Integrate voice-based coaching and AI-powered Q&A

- Improve form grading using machine learning models trained on expert-labeled data

- Launch a mobile-friendly version so users can train anywhere with just their phone
