Inspiration

The Dance Dance Revolution (DDR) competition and our knowledge of hardware inspired us to use the wrnch API to build our own native version of DDR.

What it does

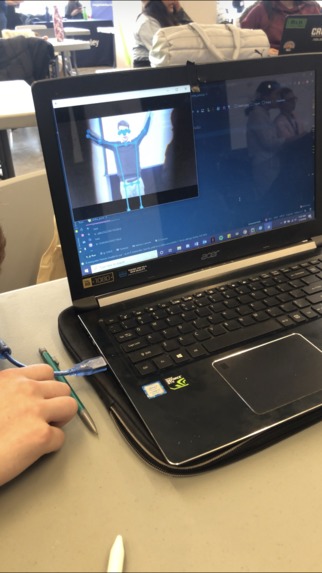

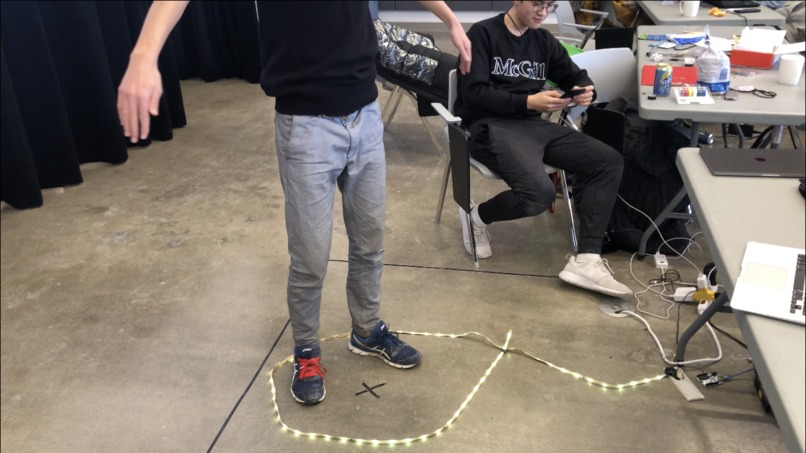

It uses a laptop's webcam to watch dancers and detect when they move into certain positions (like DDR!).

How we built it

We developed a model to detect matching dance motions. It takes the pose data from the real-time wrnchAI Edge SDK and gives each pose a similarity score. The score compares pairs of unit vectors and returns a number in the range [0, 2] reflecting how similar they are. This score then controls the Arduino lights.
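The write-up doesn't say exactly how the [0, 2] score is computed. One formula that produces that range is 1 minus the cosine of the angle between matching unit limb vectors (0 for identical directions, 2 for opposite ones), averaged over the limbs; the Euclidean distance between two unit vectors has the same range. The sketch below assumes the cosine version. The joint names, `LIMBS`, `pose_score`, `MATCH_THRESHOLD`, and `light_command` are hypothetical, and the plain (x, y) joint dictionaries stand in for whatever the wrnchAI Edge SDK actually returns.

```python
import math

# Hypothetical skeleton format: joint name -> (x, y) image coordinate.
# This is an illustrative assumption, not the wrnchAI Edge SDK's real output.
Point = tuple[float, float]

# Hypothetical limb list: each pair is (start joint, end joint).
LIMBS = [
    ("left_shoulder", "left_elbow"),
    ("left_elbow", "left_wrist"),
    ("right_shoulder", "right_elbow"),
    ("right_elbow", "right_wrist"),
]


def unit_vector(a: Point, b: Point) -> Point:
    """Direction from joint a to joint b, scaled to length 1."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    length = math.hypot(dx, dy)
    if length == 0:
        raise ValueError("coincident joints have no direction")
    return (dx / length, dy / length)


def limb_dissimilarity(u: Point, v: Point) -> float:
    """1 - cos(angle between u and v): 0 when aligned, 2 when opposite."""
    dot = u[0] * v[0] + u[1] * v[1]
    # Clamp to guard against tiny floating-point overshoot past +/-1.
    return 1.0 - max(-1.0, min(1.0, dot))


def pose_score(pose: dict[str, Point], target: dict[str, Point]) -> float:
    """Average limb dissimilarity over LIMBS, in [0, 2]; 0 is a perfect match."""
    total = 0.0
    for a, b in LIMBS:
        total += limb_dissimilarity(
            unit_vector(pose[a], pose[b]),
            unit_vector(target[a], target[b]),
        )
    return total / len(LIMBS)


# Hypothetical cut-off below which a pose counts as correct.
MATCH_THRESHOLD = 0.2


def light_command(score: float) -> bytes:
    """Hypothetical one-byte Arduino command: b"1" on a match, b"0" otherwise."""
    return b"1" if score <= MATCH_THRESHOLD else b"0"
```

To drive the lights, the returned byte could be sent to the board over USB serial, for example with pyserial's `serial.Serial(port, 9600).write(light_command(score))`; the port, baud rate, and one-byte protocol are placeholders that the Arduino sketch would have to match.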

Challenges we ran into

Setting up the real-time Edge SDK, working around hardware limitations, and finding a way to piece multiple programming components together.

Accomplishments that we're proud of

Our program can distinguish between a correct and an incorrect pose, and it does not give users of different sizes (arm length, height, etc.) different scores for the same pose; the score is independent of subject size.
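A small check of why comparing unit vectors makes the score independent of body size: dividing each limb vector by its own length keeps only its direction. The two dancers below are hypothetical; they hold the same forearm angle, but one forearm is 1.5 times longer and sits elsewhere in the frame, and both produce the same unit vector (up to floating-point rounding).

```python
import math


def unit_vector(a, b):
    """Direction from joint a to joint b with its length divided out."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    length = math.hypot(dx, dy)
    return (dx / length, dy / length)


# Hypothetical image coordinates (y grows downward): both forearms point
# up and to the right at 45 degrees, but dancer B's forearm is 1.5x longer
# and B stands in a different part of the frame.
dancer_a = {"elbow": (100.0, 200.0), "wrist": (130.0, 170.0)}
dancer_b = {"elbow": (300.0, 250.0), "wrist": (345.0, 205.0)}

dir_a = unit_vector(dancer_a["elbow"], dancer_a["wrist"])
dir_b = unit_vector(dancer_b["elbow"], dancer_b["wrist"])

# Limb length and position drop out, so only the joint angle is compared.
print(dir_a, dir_b)
```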

What we learned

- The importance of version control (we almost lost a working version)
- How to use the real-time Edge SDK
- How to piece together multiple project sections
- When and how to use examples to test our algorithm

What's next for strike_pose

- Adding support for multiple moves
- Increasing the refresh rate so it can analyze more moves, faster

Known Bugs

The model fails when someone else is in the frame.
