SDG10 Reduce Inequalities

Inspiration

Our team has friends in the deaf and hard-of-hearing community. Through their anecdotes and experiences, we wanted to tackle the disabling world in this context by helping people, especially hearing people, learn sign language in an effective manner (putting the responsibility on the disabling world rather than on the deaf and hard-of-hearing community). In addition, our entire team is multilingual and knows how important immersion and comprehensible input are, so we wanted to develop an app that helps people learn unconsciously through repetition and association. We also watched Marvel's Echo TV series, which has a scene with a futuristic pair of contact lenses that does some of what our app does. We did extensive research to see whether real-time captioning combined with sign language shown as 3D gestures already existed. As far as we could find, it didn't, so we took it upon ourselves to make it a reality.

What it does

SubLynk is an AR app for the Meta Quest 3 that displays subtitles (English and Signing Exact English) above the head of whoever is speaking, in real time.

We are SubLynk, and we help deaf people and their family and friends learn and communicate in simplified SEE sign language using real-time 3D interpretation and captioning.

How we built it

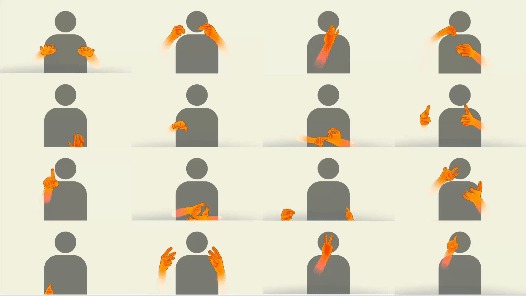

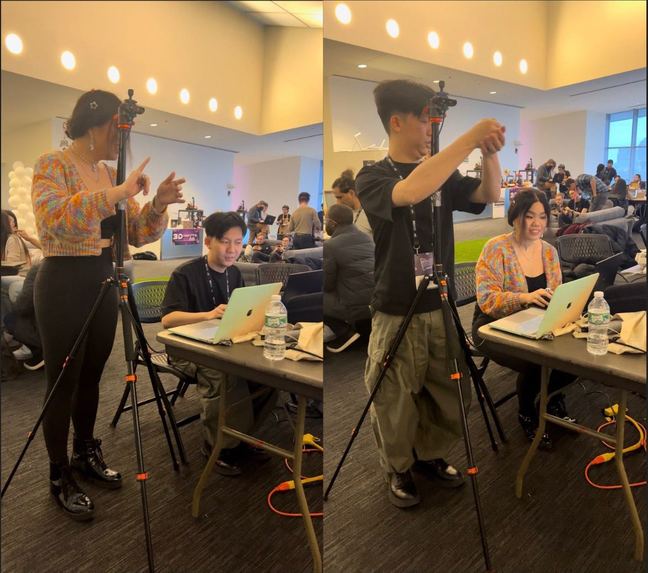

This project was built with Unity 2022.3.18f1, Microsoft's Speech API (on desktop) or Meta Voice SDK dictation (on mobile), MediaPipe's BlazeFace model for face detection, and the Leap Motion Controller 2 (for motion capture of the signs).
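As a rough illustration of how dictated text could be paired with recorded sign animations, here is a minimal sketch in Python (the project itself is built in Unity, and this is not its actual code). The function name `words_to_sign_clips`, the clip paths, and the choice to show caption text only when no clip exists are all assumptions made for the example.

```python
import re
from typing import Dict, List, Optional, Tuple


def words_to_sign_clips(
    transcript: str, clip_library: Dict[str, str]
) -> List[Tuple[str, Optional[str]]]:
    """Pair each spoken word with a recorded sign clip, word by word.

    clip_library maps a lowercase word to a (hypothetical) animation clip path.
    Words without a recorded clip are paired with None, so the caller can
    fall back to showing the caption text alone (an assumed behavior).
    """
    # Keep letters and apostrophes so contractions such as "don't" stay whole.
    words = re.findall(r"[A-Za-z']+", transcript)
    return [(word, clip_library.get(word.lower())) for word in words]


# Hypothetical library with only two recorded signs.
library = {"i": "clips/i.anim", "am": "clips/am.anim"}
pairs = words_to_sign_clips("I am working.", library)
```

Because SEE follows English word order, the lookup keeps the words in the order they were spoken and never reorders them.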

We also designed SubLynk with accessibility in mind, choosing accessible colors, caption sizes, and caption placement.

Challenges we ran into

Real-time voice dictation is a challenge in a space without stable internet for cloud processing, so we were limited in which libraries we could use. We couldn't access the camera render texture of Meta's passthrough feed, so we attached a cheap USB webcam from Amazon to the top of the headset for face detection. Finally, converting 3D animation to 2D space (the video plane) and coding the math for perspective correction was a very big challenge, but we've gotten it to a workable state.
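To give a sense of the math involved, here is a minimal Python sketch of a standard pinhole camera model, which maps between 3D points in front of a camera and 2D pixels on the video plane. It is an illustration only, not the project's Unity implementation. The intrinsics (`fx`, `fy`, `cx`, `cy`), the bounding-box layout, and the `caption_anchor` helper with its margin are assumed values and names chosen for the example.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class PinholeCamera:
    """Ideal pinhole camera; the intrinsics here are assumed, not measured."""
    fx: float  # focal length in pixels along x
    fy: float  # focal length in pixels along y
    cx: float  # principal point x in pixels
    cy: float  # principal point y in pixels

    def project(self, x: float, y: float, z: float) -> Tuple[float, float]:
        """Map a 3D point in camera space to pixel coordinates."""
        if z <= 0:
            raise ValueError("point must be in front of the camera (z > 0)")
        return (self.fx * x / z + self.cx, self.fy * y / z + self.cy)

    def unproject(self, u: float, v: float, depth: float) -> Tuple[float, float, float]:
        """Map a pixel back to a 3D point at the given depth."""
        return ((u - self.cx) * depth / self.fx,
                (v - self.cy) * depth / self.fy,
                depth)


def caption_anchor(face_box: Tuple[float, float, float, float],
                   margin: float = 0.25) -> Tuple[float, float]:
    """Return a pixel centered above a face box (left, top, width, height).

    Image y grows downward, so "above" means a smaller y value. The margin
    is a fraction of the box height and is an arbitrary choice.
    """
    left, top, width, height = face_box
    return (left + width / 2.0, top - margin * height)


cam = PinholeCamera(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
u, v = caption_anchor((100.0, 200.0, 80.0, 80.0))
anchor_3d = cam.unproject(u, v, depth=2.0)
```

In this sketch a detected face box gives a 2D anchor above the speaker's head, and `unproject` places that anchor at an assumed distance so a caption or sign animation can be positioned in 3D.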

We continually had to ask ourselves who the target audience is, how this could help others, and how we could stand out from what has already been invented. We're pleased to say that, as far as we could find, we're the only real-time SEE dictation app, let alone one that runs in AR and can eventually be built for multiple platforms.

Accomplishments that we're proud of

On paper, this team should never have worked, but we found our groove and really love and appreciate one another. We had one programmer smushing libraries together and implementing the project, two designers learning mocap for the first time, one researcher, and one storyteller. We managed to make a working open-source project that conveys our vision of a future where everyone passively learns and knows some sign language.

What we learned

Through consultation with the community, we learned about SEE (Signing Exact English), how well it transfers to ASL (American Sign Language), and its easier learning curve.

What's next for SubLynk

To improve the accuracy of speech-to-text, we're going to buy an aux-powered boom mic to reduce ambient sound input. Additionally, if we can get access to a Leap Motion Controller 2, we'd build out the library of recorded SEE animations to cover more of the dictionary, prioritized by word frequency, and refactor our codebase to run even faster and on more platforms and devices. Imagine an AR phone filter for Snapchat or Spark AR that does this in an even lighter form factor.
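One possible way to decide which signs to record next is to count how often words without a recorded animation show up in real transcripts. The Python sketch below illustrates the idea. The function name `missing_signs_by_frequency` and the sample data are hypothetical, and this is not the project's code.

```python
import re
from collections import Counter
from typing import Iterable, List, Set, Tuple


def missing_signs_by_frequency(
    transcripts: Iterable[str], recorded_words: Set[str], top_n: int = 10
) -> List[Tuple[str, int]]:
    """Rank words that have no recorded sign yet by how often they occur."""
    counts: Counter = Counter()
    for text in transcripts:
        # Lowercase first so "The" and "the" count as the same word.
        for word in re.findall(r"[a-z']+", text.lower()):
            if word not in recorded_words:
                counts[word] += 1
    return counts.most_common(top_n)


# Hypothetical sample data.
transcripts = ["I am working at the store", "The store is open all day"]
recorded = {"i", "am", "the"}
next_to_record = missing_signs_by_frequency(transcripts, recorded, top_n=1)
```

Here "store" appears twice and has no recorded sign, so it would be the first candidate to capture.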

Our solution can scale to two-way interpretation, multiplayer, and networked use. Based on existing research, SEE can also help children with moderate, severe, or profound intellectual disabilities, aphasia, cerebral palsy, or autism. It also has the potential to be used by teachers who want to incorporate sign language into their regular curriculum as an enrichment tool, through songs and storytelling. Facial expressions and conveying emotion are important in sign language, so adding a combination of facial and mouth movement recognition in the future would make our technology more valuable. In the long run, we hope to make it possible for everyone in the deaf and hard-of-hearing community to communicate with each other regardless of language: with captioning and interpretation backed by a database of all languages, they would be able to interact using SubLynk.

Furthermore, using the Leap Motion Controller 2, we were able to create an initial database of 3D hand gestures for sign language. Moving forward, we would like to turn it into an open-source SEE library that other developers can use in XR, web, mobile, and other projects to make the world just a little less disabling.

We will stay in touch and hope to get the resources to expand upon this further.

Further Notes

There are approximately 2 million deaf people in the U.S. In a perfect world, everyone would know sign language. But a majority of deaf children have a hard time dealing with life because their parents struggle to learn sign language. ASL is its own language, with a structure that requires you to stop thinking in straight English. Here is an example of an English sentence and its interpretation in ASL:

English: “I am working at the store where I stocked items on the shelves all day”

ASL gloss: “ALL-DAY ME WORK OVER-THERE STORE DO?(rhet.) SHELF-SHELF-SHELF PUT-AWAY-on-shelves”

It takes time to get familiar with it and the available resources can be overwhelming. For example, Gail mentioned on an online forum that she started learning ASL but struggled in the process. She said: “Eventually I dropped out, in tears. I had never dropped out of any class before, had always been a very good student, and had studied 5 languages - but none of that had helped me at all with ASL.”

There are many resources for learning ASL. Our research shows that classes help you learn the basics, and apps and websites are great for quick reference, but if you want to learn sign language, nothing beats real-life experience and unintentional learning.

With SubLynk, we offer constant unintentional learning, not in ASL but in SEE (Signing Exact English). We acknowledge that the problem is not ASL or hearing disability, but our disabling world. So, to help hearing people get better at learning, we investigated the problem. We found research showing that SEE, a word-by-word rendering of English, helps deaf children learn better and improves the quality of the education they get. SEE is also easier for hearing people to learn because it is closer to English sentence structure than ASL, which is not intuitive for hearing people. The problem is that SEE educational programs are limited. Current technological solutions are also very expensive, and some even downplay the importance of learning sign language.

By embracing such solutions, we can create environments where deaf individuals feel comfortable and included, transforming their experiences in places they may have otherwise felt marginalized (SDG 10: Reduce Inequalities).

Built With

- leap-motion

- mediapipe

- metaquest

- unity

- windows-speech
