Inspiration

We wanted to bridge the communication gap between the Deaf community and the hearing world using modern web technologies. Real-time translation tools are rare and often inaccurate. Our goal was to create a fast, browser-based ASL detection platform that’s actually usable, accessible, and open-source.

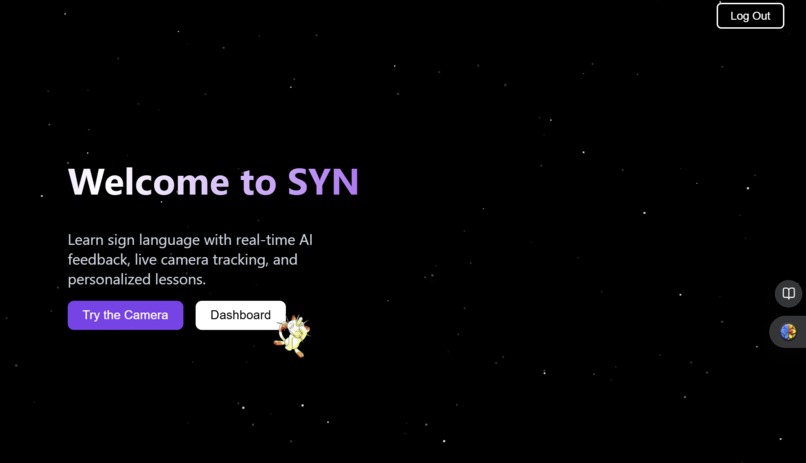

What it does

Our web app detects ASL hand signs through your webcam and translates them into text in real time. Users simply sign in front of the camera, and the system outputs the corresponding letter or word. It's lightweight, works entirely in the browser, and requires no external software or installation.
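
Turning per-frame predictions into readable text usually needs some smoothing, since a classifier can flicker between letters from one frame to the next. The sketch below shows one possible approach, not necessarily what SYN does: a letter is emitted only after it has been seen for several consecutive frames. The `SignStabilizer` class and the `framesRequired` default are hypothetical names and values chosen for illustration.

```typescript
// Hypothetical helper: emits a letter only once it has been held steady
// for `framesRequired` consecutive frames. Not taken from the SYN codebase.
class SignStabilizer {
  private framesRequired: number;
  private current: string | null = null; // label seen on the most recent frame
  private streak = 0;                    // how many frames in a row it has been seen
  private emitted = false;               // whether the current streak already produced output

  constructor(framesRequired = 5) {
    this.framesRequired = framesRequired;
  }

  // Feed one per-frame label (null = no confident prediction).
  // Returns the label the first time it has been held long enough, otherwise null.
  push(label: string | null): string | null {
    if (label !== this.current) {
      // A different label starts a new streak.
      this.current = label;
      this.streak = 1;
      this.emitted = false;
    } else {
      this.streak++;
    }
    if (label !== null && !this.emitted && this.streak >= this.framesRequired) {
      this.emitted = true;
      return label;
    }
    return null;
  }
}
```

Each video frame's predicted letter would be passed to `push`, and any non-null return value appended to the on-screen text. Holding a sign longer does not repeat the letter; the hand has to change or leave the frame before the same letter can be emitted again.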

How we built it

We used React for a clean, responsive frontend, and Node.js for the backend. The real magic happens on the client side, where we leverage TensorFlow.js and a custom-trained Convolutional Neural Network (CNN) to classify hand gestures. We trained the model on a labeled dataset of ASL images, augmented it for better generalization, and then exported it for real-time inference in the browser.
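
As a rough illustration of the last step of in-browser inference, the sketch below turns a classifier's output scores into a letter. It is written under assumptions rather than taken from our code: the `LABELS` list (26 letters), the `decodePrediction` function, and the `minConfidence` threshold are all hypothetical. In TensorFlow.js, the score vector would typically come from calling `predict` on the loaded model and reading the resulting tensor's values.

```typescript
// Hypothetical label set: the write-up does not list the classes the model was trained on.
const LABELS: string[] = "ABCDEFGHIJKLMNOPQRSTUVWXYZ".split("");

interface Prediction {
  label: string | null; // null when the top score is below the threshold
  confidence: number;   // the top score itself
}

// Pick the highest-scoring class from a vector of per-class probabilities.
// `minConfidence` is an assumed threshold, not a value from the project.
function decodePrediction(
  probabilities: ArrayLike<number>,
  labels: string[] = LABELS,
  minConfidence = 0.6
): Prediction {
  if (probabilities.length !== labels.length) {
    throw new Error(`Expected ${labels.length} scores, got ${probabilities.length}`);
  }
  // Linear scan for the index of the largest score (argmax).
  let best = 0;
  for (let i = 1; i < probabilities.length; i++) {
    if (probabilities[i] > probabilities[best]) best = i;
  }
  const confidence = probabilities[best];
  return { label: confidence >= minConfidence ? labels[best] : null, confidence };
}
```

Returning `null` for low-confidence frames lets the UI show nothing instead of a wrong letter when no clear sign is in view.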

Challenges we ran into

Our main challenges were training the model, configuring the camera, choosing the right architecture, and finding suitable datasets. Each took its own kind of troubleshooting: tuning hyperparameters, getting camera integration working, balancing model performance, and cleaning or augmenting the data. Overcoming these obstacles took research, experimentation, and persistence throughout the project.

Accomplishments that we're proud of

We’re really proud that SYN works fully in the browser with no installs, no downloads, just open and go. The model runs in real time, even on slower devices, and actually gives usable results. We built everything from scratch, including the UI, and made sure it felt smooth, simple, and clean without being boring. Seeing the camera, the predictions, and the interface all working together was such a good feeling, especially after how much trial and error it took. It honestly turned out way more polished than we expected, and it’s cool knowing we built the whole thing ourselves from the ground up.

What we learned

This project pushed us to level up in so many ways. We had to figure out everything from training and tweaking our model to dealing with all the React bugs and getting the webcam to play nice. We learned how to actually connect machine learning with a live UI, how much of a difference good preprocessing and data cleaning make, and how hard it is to make something look clean and work smoothly. We also learned how to work through stuff that just straight-up wouldn’t cooperate, with a lot of trial and error and many rounds of “okay, let’s try something else.” Honestly, it taught us a ton about building under pressure, problem-solving, and not giving up when things get messy.

What's next for SYN

We are excited to add support for full-word and sentence-level predictions, making SYN more useful for everyday conversation. We also want to improve the model’s accuracy and speed, expand the dataset, and make the platform mobile-friendly. Another goal is to include a learning mode, where users can practice signs and get real-time feedback, like a personal ASL tutor. Since the project is open-source, we hope others can build on it, contribute new features, and help grow SYN into a truly helpful tool for accessibility.

Features

- Live camera input with hand tracking
- Real-time ASL predictions
- A clean dashboard to view results
- Login and signup system
- Modern, responsive UI
- Smooth animations and interactions
