Inspiration

This project comes from the heart. All four of us arrived in America without knowing English, having come from El Salvador, India, South Korea, and Nigeria, and we all struggled. We see the vision, and we hope you do too.

What it does

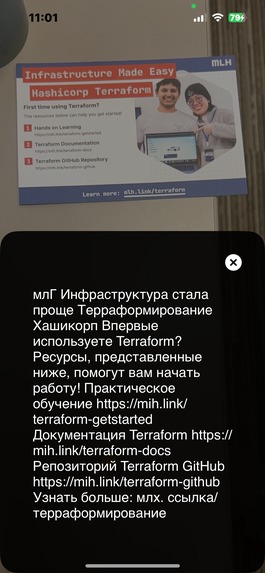

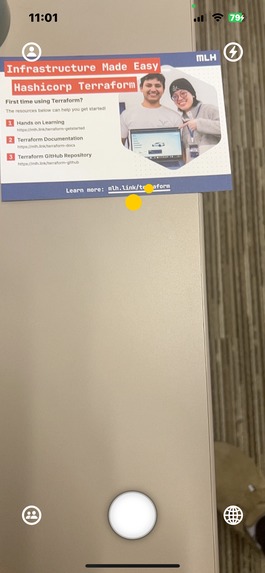

Transl8 is a portable iOS app that combines augmented reality, computer vision, and natural language processing to project a translation of the text in front of the camera onto the phone screen in real time.

How we built it

Leveraging iOS frameworks such as SwiftUI, CoreImage, ARKit, SceneKit, Vision, and Foundation, we pieced together an app to aid people living in a foreign country. By grabbing images from ARKit and processing them, we isolate the words in the picture. These words are then sent to a Google Cloud API, where Google's neural machine translation model returns the translated text. From there, ARKit does the heavy augmented reality work.
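The middle of that pipeline (camera frame to words, words to translated text) can be sketched roughly as follows. This is a minimal illustration, not our exact code: it assumes Vision's `VNRecognizeTextRequest` for the text isolation step, the Cloud Translation v2 REST endpoint with an API key for the translation step, and the iOS 15 SDK or later. The names `recognizeText`, `makeTranslateRequest`, `decodeTranslations`, and `TranslateResponse` are hypothetical helpers made up for this example.

```swift
import Foundation
import Vision

// Assumed text-isolation step: run Vision text recognition on a camera frame
// (for example the `capturedImage` pixel buffer of an ARKit `ARFrame`).
func recognizeText(in pixelBuffer: CVPixelBuffer) throws -> [String] {
    let request = VNRecognizeTextRequest()
    request.recognitionLevel = .accurate
    // `.right` assumes the phone is held in portrait with the back camera.
    let handler = VNImageRequestHandler(cvPixelBuffer: pixelBuffer,
                                        orientation: .right,
                                        options: [:])
    try handler.perform([request])
    // Keep the best candidate string for each detected line of text.
    return (request.results ?? []).compactMap { $0.topCandidates(1).first?.string }
}

// Shape of the Cloud Translation v2 JSON response (only the fields used here).
struct TranslateResponse: Decodable {
    struct Payload: Decodable {
        struct Translation: Decodable { let translatedText: String }
        let translations: [Translation]
    }
    let data: Payload
}

// Assumed translation step: build a POST request for the v2 REST endpoint.
// `apiKey` is a placeholder; a real app should not ship the key in source.
func makeTranslateRequest(texts: [String], target: String, apiKey: String) throws -> URLRequest {
    var components = URLComponents(string: "https://translation.googleapis.com/language/translate/v2")!
    components.queryItems = [URLQueryItem(name: "key", value: apiKey)]
    var request = URLRequest(url: components.url!)
    request.httpMethod = "POST"
    request.setValue("application/json", forHTTPHeaderField: "Content-Type")
    let body: [String: Any] = ["q": texts, "target": target, "format": "text"]
    request.httpBody = try JSONSerialization.data(withJSONObject: body)
    return request
}

// Pull the translated strings out of the response body.
func decodeTranslations(from data: Data) throws -> [String] {
    let response = try JSONDecoder().decode(TranslateResponse.self, from: data)
    return response.data.translations.map { $0.translatedText }
}
```

In an app, the request would be sent with `URLSession`, and the decoded strings handed to ARKit and SceneKit for display over the original text.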

Challenges we ran into

Our primary challenge lay in figuring out so many new technologies at once; we lacked a member with extensive mobile development skills, and had only one engineer with knowledge of text extraction. Despite this, we are very proud of what we've done.

Accomplishments that we're proud of

Despite our lack of expertise in mobile development, we were able to come together and create something that is not only close to our hearts, but also truly useful to many others.

What we learned

We learned about iOS and, more generally, mobile development. We also learned about using OpenCV and Natural Language Processing!

What's next for Transl8

Our primary objective is to support more people, meaning expanded language and device support. A test mode could also prove useful, since ESL classes in particular lack a tool like this. One thing we couldn't get to in time was a more "live" version of the translation, where the user could point their camera at text while moving quickly on foot and have it translate the meaningful information. We also wanted to integrate a speech translator, which would repeat what the user says in another language of their choice. These are ideas that would lead to the "ultimate" user aid.

Built With

- arkit

- mobiledev

- natural-language-processing

- opencv

- swift

- xcode
