Inspiration

VitalVoice grew out of a story of connection and empathy. While talking to the competition mentors, one of our team members realized that she and one of the mentors had been through almost the same journey with Cushing’s disease, including a difficult surgery and recovery. Whether the cause was medical negligence or a language barrier, they shared the same fear of having their lives in the hands of others.

VitalVoice is our solution for equity in healthcare. We believe no patient should feel distrust, discomfort, or fear while conversing with a medical practitioner in a non-native language. VitalVoice uses cutting-edge technology to lift these burdens from patients, facilitate meaningful and transparent conversation between both parties, and prioritize patient safety by reducing the potential for misunderstood directions and misdiagnoses.

What it does

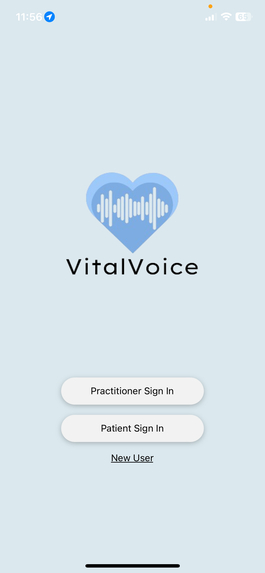

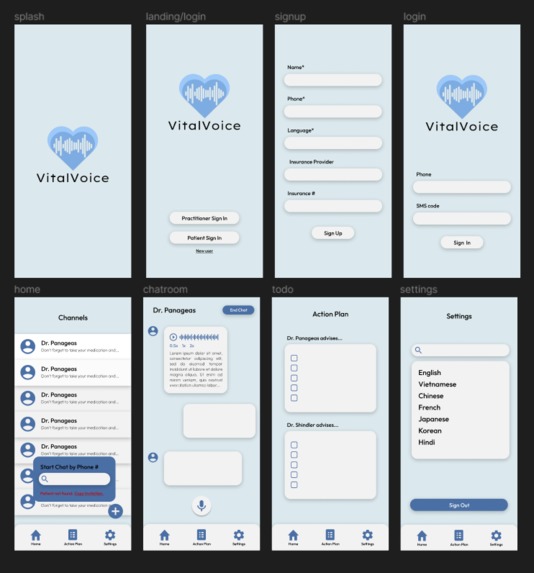

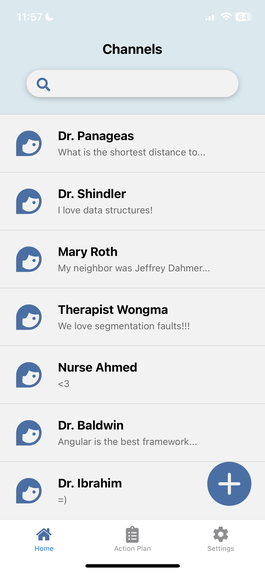

VitalVoice overcomes language barriers through real-time translation, recording, and transcription. Medical practitioners open channels (chat rooms) with their patients after standard office visits. There, they record any follow-up advice, recovery directions, and similar guidance for their patient. The audio is automatically transcribed and translated into the patient’s specified language, and an audio file is generated that the patient can listen to at any time. Patients can then ask questions or request clarification, and the conversation continues seamlessly.
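
The flow above can be sketched roughly as follows. This is a minimal illustration, not the app's actual code: `ChannelMessage`, `process_recording`, and the two stand-in services are hypothetical names, and in the real app the transcription and translation steps would call external APIs rather than the fake functions used here.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical service signatures; real versions would wrap
# speech-to-text and translation API calls.
Transcriber = Callable[[bytes], str]
Translator = Callable[[str, str], str]


@dataclass
class ChannelMessage:
    sender: str            # "practitioner" or "patient"
    original_text: str     # transcript in the speaker's language
    translated_text: str   # transcript in the recipient's language
    target_language: str   # recipient's language code, e.g. "es"


def process_recording(audio: bytes, sender: str, target_language: str,
                      transcribe: Transcriber,
                      translate: Translator) -> ChannelMessage:
    """Transcribe a recording, then translate the transcript for the recipient."""
    original = transcribe(audio)
    translated = translate(original, target_language)
    return ChannelMessage(sender, original, translated, target_language)


# Stand-in services so the sketch runs without network access.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_translate(text: str, language: str) -> str:
    return f"[{language}] {text}"


message = process_recording(b"Take one tablet daily.", "practitioner", "es",
                            fake_transcribe, fake_translate)
print(message.translated_text)
```

Passing the services in as functions keeps the message flow easy to test without real API keys.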

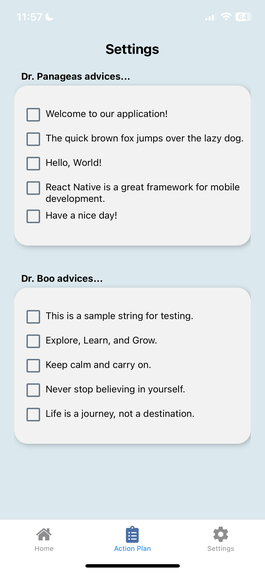

The most powerful feature of VitalVoice is the Action Plan that is generated after a conversation between patient and practitioner ends. Patients can view a concise checklist of all the tasks they were advised to follow, from all of their practitioners, in one place. Practitioners, in turn, receive a weekly status update on how their patient is doing and can decide which priority cases to follow up on. In theory, these statuses would be integrated directly with the practitioners' electronic databases for ease of use.
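
One way to picture the Action Plan data is sketched below. Everything here is an assumption made for illustration: the `Task` fields, the function names, and especially the follow-up rule (flagging a patient who has completed fewer than half of a practitioner's tasks) are hypothetical and not taken from the app.

```python
from dataclasses import dataclass


@dataclass
class Task:
    practitioner: str
    description: str
    done: bool = False


def build_checklist(tasks_by_practitioner: dict[str, list[str]]) -> list[Task]:
    """Flatten every practitioner's advice into one checklist."""
    return [Task(practitioner, description)
            for practitioner, descriptions in tasks_by_practitioner.items()
            for description in descriptions]


def weekly_status(checklist: list[Task], practitioner: str) -> dict:
    """Summarize one practitioner's tasks for a weekly update."""
    mine = [task for task in checklist if task.practitioner == practitioner]
    completed = sum(task.done for task in mine)
    return {
        "practitioner": practitioner,
        "completed": completed,
        "total": len(mine),
        # Hypothetical rule: flag patients who finished under half their tasks.
        "needs_follow_up": bool(mine) and completed * 2 < len(mine),
    }


checklist = build_checklist({
    "Dr. Lee": ["Take medication with food", "Walk 10 minutes daily",
                "Schedule blood test"],
    "Dr. Patel": ["Ice the incision site"],
})
checklist[0].done = True  # the patient ticks off one task
status = weekly_status(checklist, "Dr. Lee")
print(status)
```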

How we built it

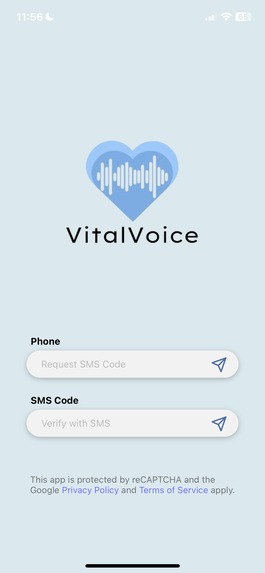

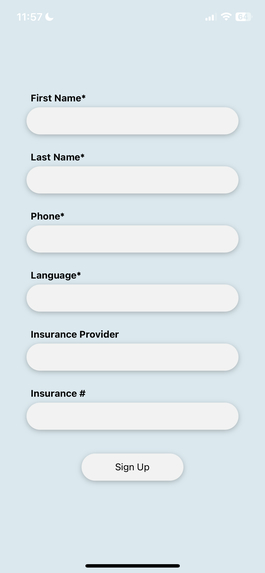

VitalVoice was built in TypeScript using React Native and the Expo framework. Authentication was done through Firebase and reCAPTCHA. The backend consists of a PostgreSQL database and Firebase Cloud Storage, which together store text, audio, and media files. We used Python to call the Deepgram API for audio transcription, the Cloud Translation API for translation, and OpenAI GPT-4 for the action plans. We did some prompt engineering to establish the context and keep the summaries at a lower reading level. Flask was used to communicate between the frontend and backend.
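
As a rough illustration of the prompt engineering step, the sketch below builds a system and user message pair in the role/content format that chat-style language model APIs commonly accept. The prompt wording, the `build_action_plan_messages` name, and the default reading level are assumptions made for this example, not the prompt we actually used, and no API call is made.

```python
def build_action_plan_messages(transcript: str,
                               reading_level: str = "sixth-grade") -> list[dict]:
    """Build chat messages asking a model for a plain-language checklist."""
    # The system message sets the context and the target reading level.
    system = (
        "You are helping a patient understand follow-up advice from a "
        "medical practitioner. Do not add advice that is not in the "
        f"transcript. Write at a {reading_level} reading level."
    )
    # The user message carries the task and the conversation itself.
    user = (
        "Turn the conversation below into a short checklist of tasks, "
        "one task per line.\n\n"
        f"Conversation:\n{transcript}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


messages = build_action_plan_messages("Rest for two days. Drink plenty of water.")
for entry in messages:
    print(entry["role"], "->", entry["content"][:60])
```

Keeping the reading level as a parameter makes it easy to tune the summaries without rewriting the prompt.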

Challenges we ran into

- Firebase phone authentication with reCAPTCHA took us hours to configure

- We were not familiar with connecting our databases to a React Native frontend

Accomplishments that we're proud of

- Our advanced tech stack and our willingness to learn and work with new technologies

What we learned

- React Native libraries and packages are super messy to work with

- Storing different media types (text, audio files, images) required more than one database

What's next for VitalVoice

We hope that the value of VitalVoice in transforming communication between patients and their medical practitioners is recognized. We hope that this tool can be used to regain trust in the medical field and bring equity to all patients regardless of the languages they speak.
