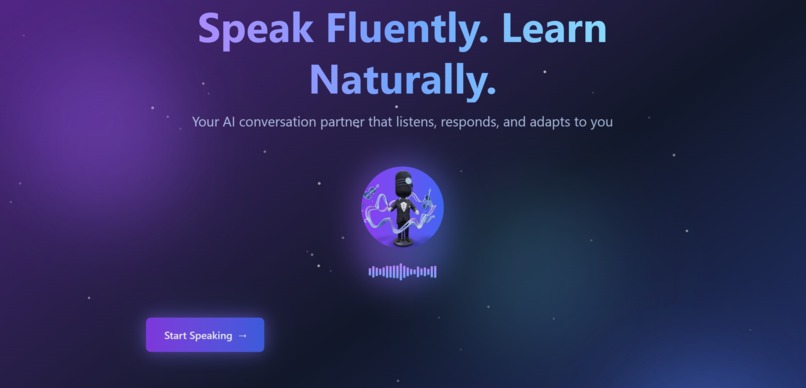

Voice Learning Coach: Your AI-Powered Speaking Partner

💡 Inspiration

As a computer security student balancing multiple languages (English, Arabic, French, and Russian), I've experienced firsthand the frustration of practicing pronunciation alone. Apps give you text exercises, but real fluency comes from conversation, and finding a patient speaking partner at 2 AM is impossible. Late-night Duolingo streaks helped with vocabulary, but I couldn't practice speaking. Traditional language apps are one-sided, tutors are expensive, and peer practice requires coordination. We asked: what if your AI coach could actually talk to you? That's when ElevenLabs + Google Cloud AI clicked: combine human-like voice with intelligent responses to create an always-available conversation partner.

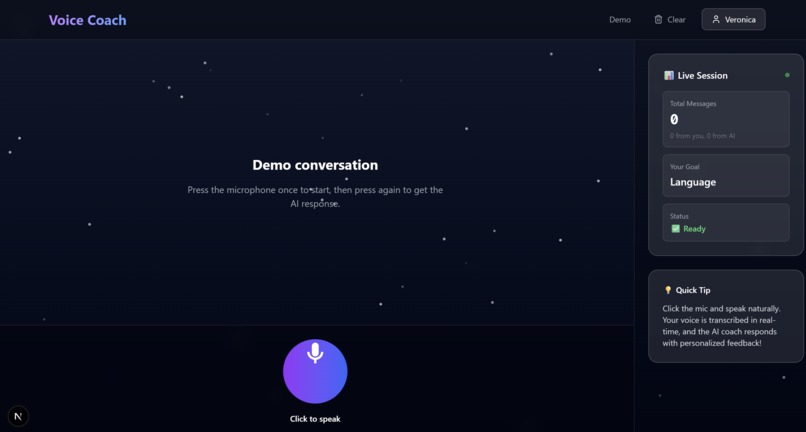

🎯 What it does

Voice Learning Coach transforms language practice through natural conversation:

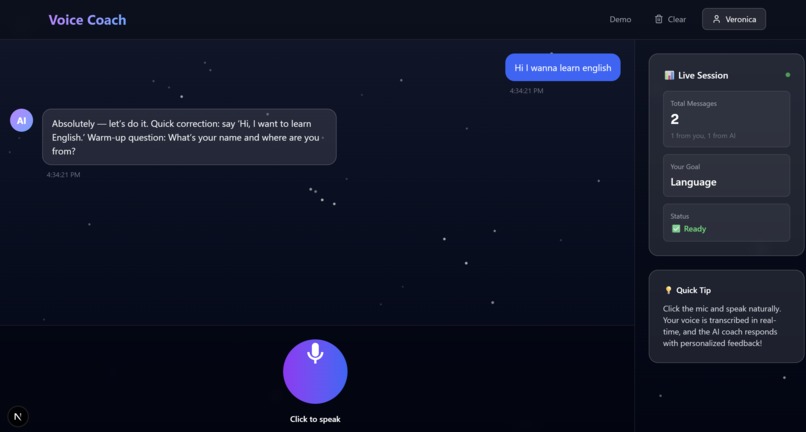

- 🎤 Voice-First Interface: Hold to speak, no typing required. Conversation flows like talking to a real person.

- 🧠 Intelligent Responses: Google Gemini AI understands context, adapts to your level, and responds naturally like a supportive coach.

- 🔊 Realistic Voice: ElevenLabs generates human-quality speech that feels like talking to an actual tutor, not a robot.

- ✍️ Real-Time Corrections: Pronunciation issues are highlighted inline with gentle feedback. No harsh grading, just improvement.

- 📈 Adaptive Difficulty: The AI notices your progress and adjusts conversation complexity automatically.

- 💬 Context Awareness: Remembers conversation history to maintain natural flow across exchanges.

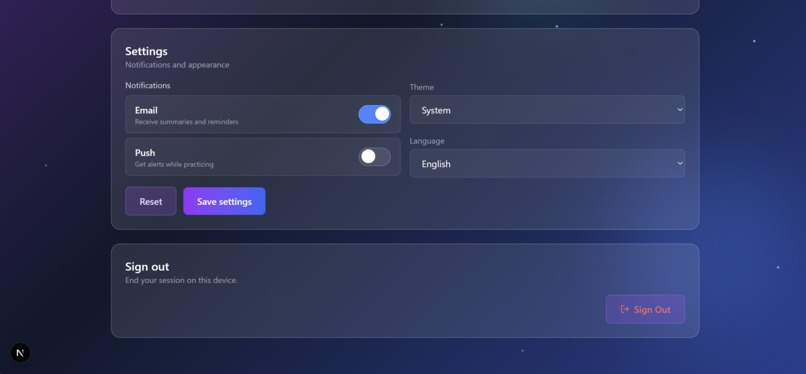

🛠️ How we built it

- Frontend: Next.js 14 (App Router), TypeScript, Tailwind CSS, Framer Motion animations, Lucide React icons

- Voice Processing: ElevenLabs API for Speech-to-Text and Text-to-Speech, a dual pipeline for listening and speaking

- AI Intelligence: Google Gemini Pro for conversational responses, corrections analysis, and difficulty adaptation

- Authentication: Clerk for secure user management and session handling

- Audio Handling: Web Audio API for browser-based recording, optimized blob compression for API calls

Architecture: User Speech → ElevenLabs STT → Gemini AI (context + corrections) → ElevenLabs TTS → User Hears Response

Built API-first with four core routes: /api/speech-to-text, /api/ai-response, /api/text-to-speech, /api/process-speech (orchestrator)
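A minimal sketch of what the orchestrator step could look like, assuming each stage is injected as an async function. The names `processSpeech`, `PipelineDeps`, `Turn`, and `CoachReply` are hypothetical and chosen for illustration; the actual route handlers may be structured differently.

```typescript
// Hypothetical shapes for illustration; not taken from the project's code.
type Turn = { role: "user" | "coach"; text: string };

interface CoachReply {
  reply: string;         // what the coach says back
  corrections: string[]; // gentle feedback items, possibly empty
}

interface PipelineDeps {
  speechToText: (audio: Uint8Array) => Promise<string>;
  aiResponse: (transcript: string, history: Turn[]) => Promise<CoachReply>;
  textToSpeech: (text: string) => Promise<Uint8Array>;
}

interface PipelineResult extends CoachReply {
  transcript: string;
  audio: Uint8Array;
  history: Turn[];
}

// Runs STT -> AI -> TTS in order; each stage needs the previous stage's output.
async function processSpeech(
  audio: Uint8Array,
  history: Turn[],
  deps: PipelineDeps,
): Promise<PipelineResult> {
  const transcript = await deps.speechToText(audio);
  if (transcript.trim() === "") {
    throw new Error("No speech detected in the recording");
  }

  const { reply, corrections } = await deps.aiResponse(transcript, history);
  const speech = await deps.textToSpeech(reply);

  // Return a new history array so the caller can keep context across turns.
  const updated: Turn[] = [
    ...history,
    { role: "user", text: transcript },
    { role: "coach", text: reply },
  ];
  return { transcript, reply, corrections, audio: speech, history: updated };
}
```

Injecting the three stages keeps the orchestrator easy to test with stubs and leaves room to swap a provider without touching the core loop.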

🚧 Challenges we ran into

Time Crunch: Entered the hackathon 6 hours before the deadline while juggling startup work (MINDU) and TryHackMe Advent challenges. Ruthless prioritization saved us.

API Integration Complexity: We initially planned to use Google Cloud Speech-to-Text as a separate service, then simplified by using ElevenLabs for both STT and TTS, which reduced integration points from 4 to 3.

Real-Time Voice Processing: Balancing latency across three sequential API calls (STT → AI → TTS). Optimized by processing in parallel where possible and adding loading animations to mask delay.

Audio Format Compatibility: The browser records WebM, while ElevenLabs expects specific formats. Added format detection and conversion logic in API middleware.
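As a rough illustration of the detection half, the sketch below sniffs the container from the first bytes of an upload using well-known file signatures. The function names are hypothetical, and the conversion step is not shown because the writeup does not say how it was done.

```typescript
type AudioContainer = "webm" | "wav" | "ogg" | "mp3" | "unknown";

// True when `bytes` contains `signature` starting at `offset`.
function hasSignature(bytes: Uint8Array, signature: number[], offset = 0): boolean {
  if (bytes.length < offset + signature.length) return false;
  return signature.every((b, i) => bytes[offset + i] === b);
}

function detectAudioContainer(bytes: Uint8Array): AudioContainer {
  // WebM/Matroska files begin with the EBML header ID 1A 45 DF A3.
  if (hasSignature(bytes, [0x1a, 0x45, 0xdf, 0xa3])) return "webm";

  // WAV: "RIFF" at byte 0 and "WAVE" at byte 8.
  if (
    hasSignature(bytes, [0x52, 0x49, 0x46, 0x46]) &&
    hasSignature(bytes, [0x57, 0x41, 0x56, 0x45], 8)
  ) {
    return "wav";
  }

  // Ogg pages begin with "OggS".
  if (hasSignature(bytes, [0x4f, 0x67, 0x67, 0x53])) return "ogg";

  // MP3: either an "ID3" tag or an MPEG audio frame sync (11 set bits).
  if (hasSignature(bytes, [0x49, 0x44, 0x33])) return "mp3";
  if (bytes.length >= 2 && bytes[0] === 0xff && (bytes[1] & 0xe0) === 0xe0) return "mp3";

  return "unknown";
}
```

Checking bytes rather than trusting the upload's declared MIME type is a design choice here: browsers differ in what they report for recorded blobs.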

Gemini Response Parsing: AI sometimes returned prose instead of JSON. Implemented fallback parsing with regex to extract structured data from freeform responses.
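One way this fallback could work is sketched below: try strict JSON first, then pull out the first-to-last brace span with a regex, and finally fall back to treating the whole text as the spoken reply. The `reply` and `corrections` field names are assumptions made for the example, not the project's actual schema.

```typescript
// Hypothetical response shape; the real prompt may request different fields.
interface CoachReply {
  reply: string;
  corrections: string[];
}

// Returns the parsed value, or undefined when the text is not valid JSON.
function tryParse(text: string): unknown {
  try {
    return JSON.parse(text);
  } catch {
    return undefined;
  }
}

// Validates an unknown value against the expected shape.
function toCoachReply(value: unknown): CoachReply | null {
  if (typeof value !== "object" || value === null) return null;
  const record = value as Record<string, unknown>;
  const reply = record["reply"];
  if (typeof reply !== "string") return null;
  const rawCorrections = record["corrections"];
  const corrections = Array.isArray(rawCorrections)
    ? rawCorrections.filter((c): c is string => typeof c === "string")
    : [];
  return { reply, corrections };
}

function parseCoachReply(raw: string): CoachReply {
  // 1. Happy path: the whole response is JSON.
  const direct = toCoachReply(tryParse(raw.trim()));
  if (direct) return direct;

  // 2. Fallback: take everything from the first "{" to the last "}",
  //    which covers JSON wrapped in code fences or surrounded by prose.
  const match = raw.match(/\{[\s\S]*\}/);
  if (match) {
    const extracted = toCoachReply(tryParse(match[0]));
    if (extracted) return extracted;
  }

  // 3. Last resort: speak the text as-is with no corrections.
  return { reply: raw.trim(), corrections: [] };
}
```

The last-resort branch means the user always hears something, even when the model ignores the requested format entirely.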

Character Limits: ElevenLabs free tier gives 10k chars/month. Optimized AI prompts to generate concise responses (under 50 words) to maximize demo sessions.
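Prompting for short replies can be backed by a server-side guard. The sketch below caps a reply at a word limit and estimates how many replies fit in the remaining quota. The helper names are hypothetical, and the average reply length passed to `repliesRemaining` is a rough guess the caller supplies, not a measured figure.

```typescript
const MONTHLY_CHAR_QUOTA = 10000; // free-tier figure quoted in this writeup
const MAX_REPLY_WORDS = 50;       // target reply length from the prompt

// Trims a reply to at most `maxWords` words, normalizing whitespace.
function capWords(text: string, maxWords: number = MAX_REPLY_WORDS): string {
  const words = text.trim().split(/\s+/).filter((w) => w.length > 0);
  if (words.length <= maxWords) return words.join(" ");
  return words.slice(0, maxWords).join(" ") + "...";
}

// Estimates how many more replies fit in the quota at a given average length.
function repliesRemaining(charsUsed: number, avgReplyChars: number): number {
  if (avgReplyChars <= 0) throw new Error("avgReplyChars must be positive");
  const charsLeft = Math.max(0, MONTHLY_CHAR_QUOTA - charsUsed);
  return Math.floor(charsLeft / avgReplyChars);
}
```

For example, if a 50-word reply averages about 300 characters (an assumption), a fresh quota covers `repliesRemaining(0, 300)` replies, which is 33.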

🏆 Accomplishments that we're proud of

- ✨ Shipped functional voice AI in 6 hours: Full pipeline from recording → transcription → intelligence → voice synthesis

- 🎨 Polished UX: Smooth animations, waveform visualizations, and a pulsing mic button make it feel professional, not rushed

- 🧠 Intelligent Corrections: Not just transcription, but actual pronunciation feedback with encouragement

- 🔊 Natural Conversations: Gemini maintains context across turns, making it feel like chatting with a real tutor

- ⚡ Optimized Performance: Sequential API calls feel seamless with smart loading states

- 🔐 Production-Ready Auth: Clerk integration means secure user sessions from day one

📚 What we learned

Technical:

- Voice AI pipeline architecture (STT → NLP → TTS)

- Managing async API orchestration with error handling

- Audio blob handling and format conversion

- Prompt engineering for conversational AI with structured outputs

- Base64 audio streaming for client playback

APIs:

- ElevenLabs voice quality customization (stability, similarity_boost)

- Gemini Pro context management and response formatting

- Clerk middleware for route protection
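To make the ElevenLabs voice settings concrete, here is a hedged sketch that builds a text-to-speech request without sending it. The endpoint path, `xi-api-key` header, and `voice_settings` fields reflect the public ElevenLabs REST API as I understand it, so check the current documentation before relying on them. `buildTtsRequest` and `clamp01` are hypothetical helper names.

```typescript
interface VoiceSettings {
  stability: number;        // 0..1, higher is more consistent delivery
  similarity_boost: number; // 0..1, higher stays closer to the source voice
}

interface TtsRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Keeps a setting inside the 0..1 range.
function clamp01(value: number): number {
  return Math.min(1, Math.max(0, value));
}

function buildTtsRequest(
  text: string,
  voiceId: string,
  apiKey: string,
  settings: VoiceSettings,
): TtsRequest {
  return {
    url: `https://api.elevenlabs.io/v1/text-to-speech/${encodeURIComponent(voiceId)}`,
    method: "POST",
    headers: {
      "xi-api-key": apiKey,
      "Content-Type": "application/json",
    },
    body: JSON.stringify({
      text,
      voice_settings: {
        stability: clamp01(settings.stability),
        similarity_boost: clamp01(settings.similarity_boost),
      },
    }),
  };
}
```

Building the request as plain data keeps the API key on the server and makes the settings easy to unit test before any network call is made.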

Design:

- Voice UX patterns (hold-to-talk vs click-to-record)

- Loading state psychology—animations reduce perceived latency

- Waveform visualizations create tangible feedback for invisible audio

Prioritization:

- Cut real-time streaming and WebSocket complexity

- Focused on core loop: speak → hear response → see corrections

- "Working demo beats perfect features"

🚀 What's next for Voice Learning Coach

Short-term:

- Mobile-responsive design for practice anywhere

- Multiple language support (Spanish, French, Mandarin)

- Conversation topics/scenarios (job interviews, casual chat, debates)

Medium-term:

- Progress tracking dashboard with pronunciation improvement metrics

- Voice emotion detection for presentation practice

- Group practice rooms for peer learning

Long-term:

- Custom AI personalities (formal tutor, casual friend, debate opponent)

- Integration with university language programs

- Gamification with streaks and achievements (leveraging my Duolingo motivation style)

- Accent training modes for specific dialects

Language barriers shouldn't stop anyone from speaking confidently. We're building that future, one conversation at a time. 🎤

Built With

- clerk

- gemini

- google-cloud

- next.js

- react

- three.js

- tts
