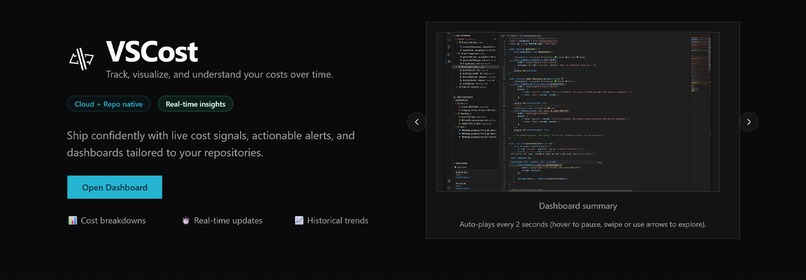

🧠 About the Project — vscost

LLM usage keeps growing, so insight into your spend before you burn through the cash your generous investors gave you is no longer a nice-to-have; it’s a necessity.

💡 Inspiration

As developers, we love how easy it is to plug LLMs into our workflows. A few API calls here, a smarter feature there — and suddenly your app feels magical.

But there’s a catch: LLMs are opaque when it comes to cost.

Most teams only realize how expensive their AI usage is after the bill arrives. We wanted to flip that dynamic.

vscost was inspired by a simple question:

“Why don’t we have observability tools for LLM costs the same way we do for CPU, memory, or cloud spend?”

So we built one — directly where developers live: VS Code, GitHub, and the web.

🛠️ How We Built It

vscost is a multi-surface tool designed to fit naturally into modern developer workflows:

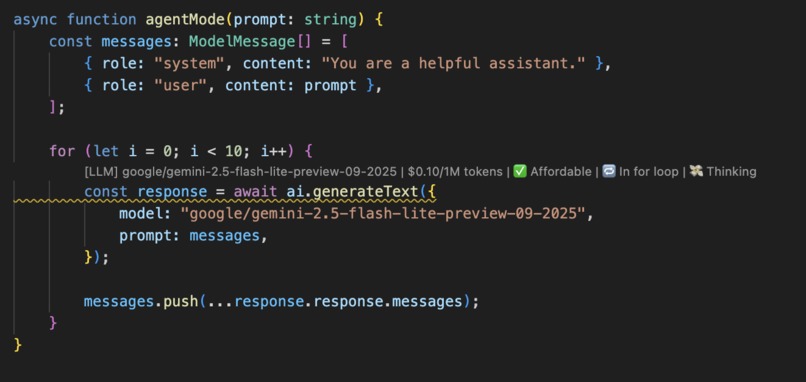

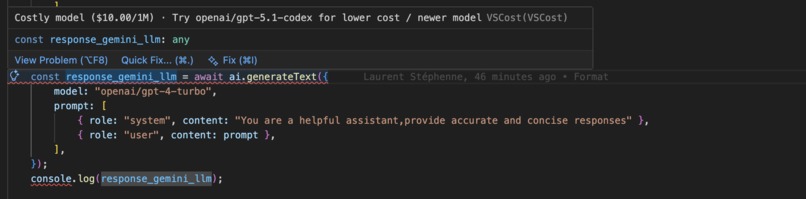

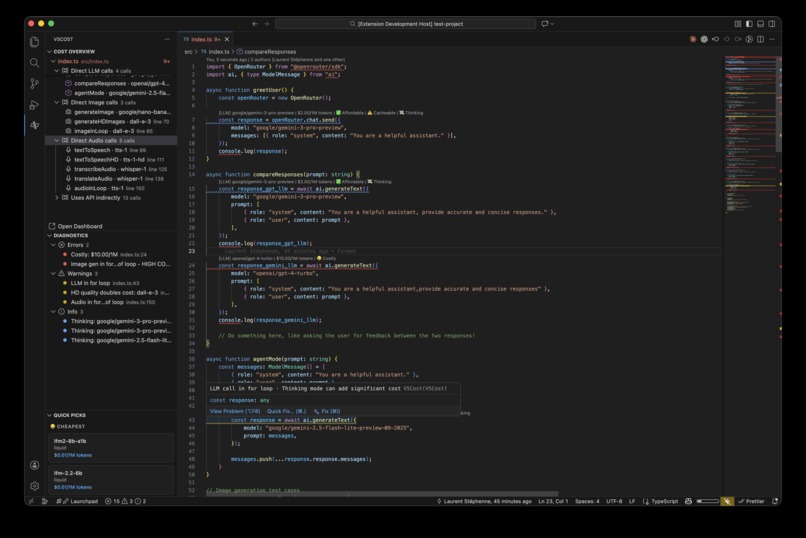

VS Code Extension

- Analyzes your repository locally

- Surfaces estimated LLM API usage directly in the editor

- Flags potentially expensive patterns before they ship
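
To make "flagging expensive patterns" concrete, here is a minimal sketch of one such check: finding LLM calls that sit inside a loop. It uses Python's standard `ast` module and is only an illustration, not vscost's actual analyzer. The set of method names in `LLM_CALL_NAMES` and the sample snippet are hypothetical.

```python
import ast

# Hypothetical method names treated as LLM calls; a real tool would need
# a provider-specific list and smarter matching.
LLM_CALL_NAMES = {"create", "generate", "complete"}


def find_llm_calls(source: str) -> list[dict]:
    """Return each suspected LLM call with its line number and loop status."""
    tree = ast.parse(source)
    findings = []

    def visit(node: ast.AST, in_loop: bool) -> None:
        # A call like client.chat.completions.create(...) has an Attribute
        # as its func, whose attr is the final method name.
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute):
            if node.func.attr in LLM_CALL_NAMES:
                findings.append(
                    {"line": node.lineno, "call": node.func.attr, "in_loop": in_loop}
                )
        # Only statement loops are tracked; comprehensions are ignored here.
        is_loop = isinstance(node, (ast.For, ast.AsyncFor, ast.While))
        for child in ast.iter_child_nodes(node):
            visit(child, in_loop or is_loop)

    visit(tree, False)
    return findings


# The sample is only parsed, never executed, so undefined names are fine.
SAMPLE = '''
for doc in docs:
    client.chat.completions.create(model="m", messages=[doc])
summary = client.chat.completions.create(model="m", messages=[text])
'''

for finding in find_llm_calls(SAMPLE):
    print(finding)
```

A call flagged with `in_loop` set to true is a candidate for a warning, since its cost scales with the number of iterations.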

GitHub Integration

- Analyzes commit history and code evolution

- Tracks how LLM usage (and cost) changes over time

- Makes cost regressions visible in PRs
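
As a rough illustration of what a cost regression check in a PR could look like, the sketch below compares per-call-site cost estimates for a base and a head revision. The call-site keys, the 10% threshold, and the message wording are all assumptions made for the example, not vscost's real output.

```python
def cost_regressions(
    base: dict[str, float],
    head: dict[str, float],
    threshold: float = 0.10,
) -> list[str]:
    """Compare per-call-site cost estimates (USD per run) for two revisions."""
    messages = []
    for site in sorted(set(base) | set(head)):
        before = base.get(site, 0.0)
        after = head.get(site, 0.0)
        if before == 0.0 and after > 0.0:
            # The call site did not exist (or was free) in the base revision.
            messages.append(f"{site}: new LLM call, est. ${after:.4f} per run")
        elif before > 0.0 and (after - before) / before > threshold:
            pct = (after - before) / before * 100
            messages.append(
                f"{site}: est. cost up {pct:.0f}% (${before:.4f} -> ${after:.4f})"
            )
    return messages


# Hypothetical estimates keyed by "file:line".
base_estimates = {"summarize.py:12": 0.0020}
head_estimates = {"summarize.py:12": 0.0030, "tagger.py:40": 0.0005}

for message in cost_regressions(base_estimates, head_estimates):
    print(message)
```

Sites whose cost dropped or stayed within the threshold produce no message, which keeps PR comments quiet unless something actually got more expensive.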

Web Dashboard

- Gives a high-level overview of your project’s AI spend

- Compares models and usage patterns

- Recommends cheaper or more efficient alternatives

Under the hood, we statically analyze code paths that interact with LLM APIs, estimate token usage, and model cost using provider pricing formulas such as:

$$ \text{Cost} = \sum (\text{input tokens} \times p_{\text{in}} + \text{output tokens} \times p_{\text{out}}) $$

This lets us reason about cost before code runs, not after.
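
The formula above translates almost directly into code. The sketch below assumes prices are quoted per million tokens; the model names and prices in `PRICES` are placeholders, not real provider rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_mtok: float   # p_in, in USD per million input tokens
    output_per_mtok: float  # p_out, in USD per million output tokens


# Placeholder prices for illustration only.
PRICES = {
    "model-small": Price(input_per_mtok=0.50, output_per_mtok=1.50),
    "model-large": Price(input_per_mtok=5.00, output_per_mtok=15.00),
}


@dataclass(frozen=True)
class Call:
    model: str
    input_tokens: int
    output_tokens: int


def estimate_cost(calls: list[Call]) -> float:
    """Sum input_tokens * p_in + output_tokens * p_out over all calls."""
    total = 0.0
    for call in calls:
        price = PRICES[call.model]
        total += call.input_tokens * price.input_per_mtok / 1_000_000
        total += call.output_tokens * price.output_per_mtok / 1_000_000
    return total


calls = [
    Call("model-small", input_tokens=2000, output_tokens=500),
    Call("model-large", input_tokens=1000, output_tokens=200),
]
print(f"Estimated cost: ${estimate_cost(calls):.5f}")
```

Keeping prices in a plain lookup table means a price change or a new provider only touches data, not the estimation logic.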

📚 What We Learned

Building vscost taught us a lot about:

- Static code analysis for dynamic, prompt-driven systems

- The surprising ways LLM usage grows organically in a codebase

- How difficult it is to estimate cost when prompts, context windows, and models vary

- Designing developer tools that are helpful without being noisy

We also learned that cost awareness changes behavior. When developers see the price of a prompt, they naturally optimize it.

⚠️ Challenges We Faced

Estimating tokens without executing prompts

Prompts evolve, inputs vary, and context can explode, making accurate estimation tricky.

Balancing accuracy vs. usability

We had to decide when “good enough” estimates were better than perfect but slow analysis.

Multi-platform consistency

Keeping insights aligned across VS Code, GitHub, and the web required careful design.

LLM pricing volatility

Models change, prices drop, and new providers appear, so the system had to stay flexible.
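
To illustrate the first challenge, estimating tokens without executing prompts, here is a minimal sketch that returns a range instead of a single number. It assumes roughly four characters per token, a common rule of thumb for English text that varies by tokenizer and language. It also assumes prompt templates use `{name}` placeholders with a known maximum input size. Neither assumption is taken from vscost itself.

```python
import math
import re

# Rough rule of thumb for English text; real tokenizers will differ.
CHARS_PER_TOKEN = 4

# Matches simple {name} placeholders in a prompt template.
PLACEHOLDER = re.compile(r"\{(\w+)\}")


def estimate_prompt_tokens(template: str, max_chars: dict[str, int]) -> tuple[int, int]:
    """Return (low, high) token estimates for a prompt template.

    low counts only the static text; high adds the maximum size of
    every placeholder, as declared in max_chars.
    """
    static_chars = len(PLACEHOLDER.sub("", template))
    variable_chars = sum(max_chars[name] for name in PLACEHOLDER.findall(template))
    low = math.ceil(static_chars / CHARS_PER_TOKEN)
    high = math.ceil((static_chars + variable_chars) / CHARS_PER_TOKEN)
    return low, high


template = "Summarize the following document:\n{document}"
low, high = estimate_prompt_tokens(template, {"document": 8000})
print(f"Estimated prompt size: {low} to {high} tokens")
```

Reporting a range makes the uncertainty visible: a wide gap between the two bounds is itself a signal that a prompt's cost depends heavily on runtime input.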

🚀 Why vscost Matters

LLMs are becoming core infrastructure.

But infrastructure without cost visibility is dangerous.

vscost helps teams stay fast, informed, and financially sane BEFORE the invoice hits.

Built With

- convex

- vscode
