EnCORE

The Institute for Emerging CORE Methods in Data Science

Applications are now open for EnCORE Visitors Program

NEWS

1st Edition of EnCORE Magazine

High School Visit Day

2025 IEEE Computer Society W. Wallace McDowell Award

Workshop on Theoretical Perspectives on LLMs

Workshop on Meta-Complexity as the Bridge Between Learning and Cryptography

Workshop on Defining Holistic Private Data Science for Practice

Professor Rajiv Gandhi featured in NSF CISE Newsletter "Faces of CISE"

NSF Site Visit 2024

EnCORE Industry Day 2024

Barna Saha Celebrated As Harry E. Gruber Endowed Chair at UC San Diego

Raghu Meka's work featured in Quanta as Biggest Breakthroughs in Math

Rising Stars in Data Science 2023

Empowering Young Minds: Robotic Arts and Craft Workshop for Elementary School Kids

EnCORE: Call for Extended Research Visits & Workshops

Surprise Computer Science Proof Stuns Mathematicians

Understanding Self Distillation in the Presence of Label Noise

U.S. census data vulnerable to attack without enhanced privacy measures

A Mathematical Breakthrough by the EnCORE Faculty Raghu Meka

Expand Foundations of Data Science

New $10M NSF-Funded Institute Will Get to the CORE of Data Science

UCLA Part of New $10M NSF Data Science Research Center, EnCORE

$10M NSF-Funded Institute Will Get to the CORE of Data Science

NSF Awards

Data Science Theory

Computation+Compression

Masters of Data Science Online

New Book Announcement:

General Chair of ICML

Chaudhuri will be the General Chair of ICML 2022.

First cohort of Ph.D. students in Data Science

HDSI

Congratulations to Professor Yusu Wang

General Chair of STOC 2023

Saha will be the General Chair of STOC 2023.

I am Data Science

Mazumdar featured in I am Data Science series.

The EnCORE Workshop: New Horizons for Adaptive Robustness focuses on foundational challenges and emerging opportunities in the design and analysis of algorithms and systems that remain reliable under adaptive and adversarial conditions. The goal of the workshop is to bring together researchers working on different aspects of adaptive robustness to share recent developments, explore emerging connections across areas such as streaming, data structures, differential privacy, and cryptography, and foster new collaborations.

We’re excited to invite you to the upcoming EnCORE Collaboration Workshop, taking place on Monday, September 8th, 2025, from 10:00 AM to 4:25 PM (PT). The workshop will be held in person at the EnCORE space (UC San Diego, Atkinson Hall, 4th Floor) and will also be accessible via Zoom for remote participants.

We’re thrilled to kick off the Advanced FinDS program, created to help high school students deepen their understanding of data science by building a strong foundation in mathematics. This program introduces key concepts in data science in an engaging and accessible way, while also strengthening the critical thinking and problem-solving skills that are essential for success in STEM fields.

EnCORE is pleased to announce a summer camp for students who are interested in enhancing their understanding of algorithms. This camp aims to provide a concise overview of the algorithmic knowledge students are expected to learn at the University of California, and more broadly, in a standard four-year college curriculum. This knowledge is key to a successful academic journey exploring computing-related majors at four-year colleges.

Join us for an EnCORE presentation on “Revisiting PAC Learning” on Monday, April 7, 2025 at 11AM (PT). The talk will be held at UC San Diego, Atkinson Hall, 4th Floor – EnCORE space, and will also be accessible via Zoom.

Workshop on Theoretical Perspectives on LLMs

Join EnCORE from March 3 – 5, 2025, for a three-day workshop on Theoretical Perspectives on Large Language Models (LLMs). The workshop explores foundational theories and frameworks underlying the architecture, learning mechanisms, and capabilities of large language models. It brings together researchers to discuss recent advancements, theoretical challenges, and emerging concepts in understanding and predicting LLM behavior, efficiency, generalization, and alignment with human intent.

EnCORE 2-Day Tutorial

Join us for an EnCORE Tutorial on “Forster-Warmuth Counterfactual Regression: A Unified Learning Approach” and “Doubly Robust and Efficient Calibration of Prediction Sets for Censored Time-to-Event Outcomes” from February 24 – 25, 2025, at 11 AM (PT). The talks will be held at UC San Diego, Atkinson Hall, 4th Floor – EnCORE space, and will also be accessible via Zoom.

Towards Interpretable Deep Learning

Join us for an EnCORE presentation on “Towards Interpretable Deep Learning” on Thursday, February 13, 2025, at 10 AM (PT). The talk will be held at UC San Diego, Atkinson Hall, 4th Floor – EnCORE space, and will also be accessible via Zoom.

Workshop on Meta-Complexity as the Bridge Between Learning and Cryptography

Join EnCORE from February 3 – 7, 2025, for a five-day workshop that explores the fascinating intersection of meta-complexity, cryptography, and computational learning, where experts will share breakthroughs, set future goals, and spark collaborations. With research talks, tutorials, and open problem sessions, this invitation-only event promises impactful discussions and innovative insights.

Workshop on Defining Holistic Private Data Science for Practice

Join EnCORE from January 8–10, 2025, for a three-day workshop exploring the intersection of theoretical advancements and practical applications of privacy-preserving tools in data science. Discussions will cover privacy needs, cost evaluations, assessment methods, and strategies for real-world implementation of privacy technologies.

Collaboration Workshop

Join us for the EnCORE Collaboration Workshop! Participants will present their work, exchange ideas, and explore collaborative opportunities. This mandatory workshop is scheduled for December 4, 2024, from 9:30 AM to 11:00 AM (PT) in the EnCORE space and via Zoom.

Drifting Games and Online Learning

Join us on Thursday, November 21, 2024 at 11 AM (PT) for a talk on “Drifting Games and Online Learning” presented by Yoav Freund. The talk will be held at UC San Diego, Atkinson Hall, 4th Floor – EnCORE space, and will also be accessible via Zoom.

How Do Algorithms See the World? Computing and High-Stakes Decision-Making

Join us on Friday, November 8, 2024 at 11 AM (PT) for EnCORE’s first Public Lecture presented by Jon Kleinberg, “How Do Algorithms See the World? Computing and High-Stakes Decision-Making.” The talk will be held at UC San Diego, HDSI Multipurpose Room, and will also be accessible via Zoom.

Deep Learning: a Non-parametric Statistical Viewpoint

Join us on Thursday, October 24, 2024, at 10 AM (PT) for a presentation on “Deep Learning: a Non-parametric Statistical Viewpoint.” This talk will be held at UC San Diego and via Zoom.

EnCORE Student Seminar

Join us on Monday, October 28, 2024, at 10 AM (PT) for a student seminar featuring Chhavi Yadav (UCSD) and Natalie Collina (UPenn). The speakers will present “FairProof: Confidential and Certifiable Fairness for Neural Networks” and “Tractable Agreement Protocols via Calibration.” The seminar will be held via Zoom.

Learning from Noisy Labels and Imperfect Teachers

Datasets used in machine learning and statistics are huge and often imperfect, e.g., they contain corrupted data, examples with wrong labels, or hidden biases. Existing approaches often produce unreliable results when the datasets are corrupted, are computationally inefficient, or come without any theoretical/provable performance guarantees. In this talk, I will discuss the design of learning algorithms that are computationally efficient and provably reliable, and then present an application in knowledge distillation.

NSF Site Visit

Site visits are one of several monitoring tools to provide oversight of NSF’s research award portfolio. Site visits assess an organization’s capacity for award administration; compliance with administrative regulations, public policy requirements, and award terms and conditions, including those contained in the NSF program announcement/solicitation and grant or cooperative agreement; and the extent to which the organization maintains a control environment within which awards are likely to be administered in compliance with federal financial and administrative regulations and NSF agreement provisions.

Industry Day

This all-day event showcased exciting research in foundations of data science, ML systems and AI, with contributions from leading experts in industry and academia. Attendees had the opportunity to network with entrepreneurs, industry researchers, faculty and peers from leading institutions such as UCLA, UT Austin, and UPenn. The event introduced a dynamic exploration of cutting-edge advancements and collaborative opportunities centered around machine learning and AI.

Reclaiming Data Agency in the Age of Ubiquitous Machine Learning

This talk introduces the concept of data agency, which empowers individuals to control how and whether their data is used in machine learning (ML) models. As ML models grow in size and require more data, individuals face risks like privacy and intellectual property violations. While many existing solutions focus on mitigating these risks through methods like differential privacy, encrypted model training, and federated learning, data agency takes a different approach by giving users the ability to know and manage their data’s use in ML systems. The talk proposes technical tools to trace or disrupt data use, addresses potential limitations, and discusses how these tools can complement current privacy protections while amplifying efforts to regulate data use in ML.

Rising Stars in Data Science 2024: Applications and Info Session

The Rising Stars in Data Science workshop, hosted by the University of California San Diego in collaboration with the University of Chicago and Stanford University, aims to celebrate and accelerate the careers of exceptional data scientists at a pivotal point in their professional journey. This workshop supports the transition to roles such as postdoctoral scholar, research scientist, industry researcher, or tenure-track faculty. Over the past four years, we have proudly hosted over 130 Rising Stars from nearly 40 institutions.

EnCORE’s Spring 2024 Collaboration Workshop

EnCORE students have teamed up to collaborate on various topics, such as augmented algorithms and large language models!

This workshop will take place on Monday, June 3, 2024, at 9:00 AM (PT) at Atkinson Hall – 4th Floor – EnCORE room at UCSD and via Zoom.

TEAMS: Teaching Enriched Algorithmic Topics to Middle School Students

This program offers middle school students an exciting introduction to algorithms and coding. Participants will explore the “Good, Bad, and Ugly” algorithms and learn shortest path algorithms through engaging worksheets, such as planning routes from one place to another. The session also highlights coding basics, using Google Maps as a fun, practical example to illustrate key concepts.

The Puzzle of Dimensionality and Feature Learning in Neural Networks and Kernel Machines

Remarkable progress in AI has far surpassed expectations of just a few years ago. At their core, modern models, such as transformers, implement traditional statistical models — high order Markov chains. Nevertheless, it is not generally possible to estimate Markov models of that order given any possible amount of data. Therefore these methods must implicitly exploit low-dimensional structures present in data.

Generalization and Stability in Interpolating Neural Networks

Neural networks are renowned for their ability to memorize datasets, often achieving near-zero training loss via gradient descent optimization. Despite this capability, they also demonstrate remarkable generalization to new data. We investigate the generalization behavior of neural networks trained with logistic loss through the lens of algorithmic stability. Our focus lies on the neural tangent regime, where network weights move a constant distance from initialization to solution to achieve minimal training loss. Our main finding reveals that under NTK-separability, optimal test loss bounds are achievable if the network width is at least poly-logarithmically large with respect to the number of training samples.

Old Questions and New Directions in Theory of Clustering

EnCORE is hosting a 3-day workshop to reinvigorate collaboration between the approximation and computational geometry communities.

Deep Generative Models and Inverse Problems for Signal Reconstruction

Alex Dimakis, a professor at UT Austin and the co-director of the National AI Institute on the Foundations of Machine Learning, will be presenting the talk “Deep Generative Models and Inverse Problems for Signal Reconstruction” on April 1, 2024, as a part of the Foundations of Data Science – Virtual Talk Series.

Characterizing and Classifying Cell Types of the Brain: An Introduction for Computational Scientists

Join the 2-day EnCORE tutorial “Characterizing and Classifying Cell Types of the Brain: An Introduction for Computational Scientists” with Michael Hawrylycz, Ph.D., Investigator, Allen Institute.

This tutorial will take place on March 21 – 22, 2024 at UC San Diego in the Computer Science and Engineering Building in Room 1242. There will be a Zoom option available as well.

Bounded Weight Edit Distance

Join EnCORE’s talk on “Theoretical Exploration of Foundation Model Adaptation Methods” with Kangwook Lee, an assistant professor in the Electrical and Computer Engineering Department and the Computer Sciences Department at the University of Wisconsin-Madison.

EnCORE: Call for Extended Research Visits & Workshops

Workshop on New Horizons for Adaptive Robustness

Workshop on Fine-Grained Complexity

Workshop on Theoretical Perspectives on LLMs

Workshop on Meta-Complexity as the Bridge Between Learning and Cryptography

Workshop on Foundations of Fairness and Accountability

Workshop on Defining Holistic Private Data Science for Practice

Old Questions and New Directions in Clustering

EnCORE: The Institute for Emerging CORE Methods in Data Science

The Institute for Emerging CORE Methods in Data Science, or EnCORE, is led by the University of California San Diego in collaboration with the University of California, Los Angeles; University of Pennsylvania; and The University of Texas at Austin. EnCORE brings together scientists from multiple disciplines such as statistics, mathematics, electrical engineering, theoretical computer science, machine learning and health science, among others.

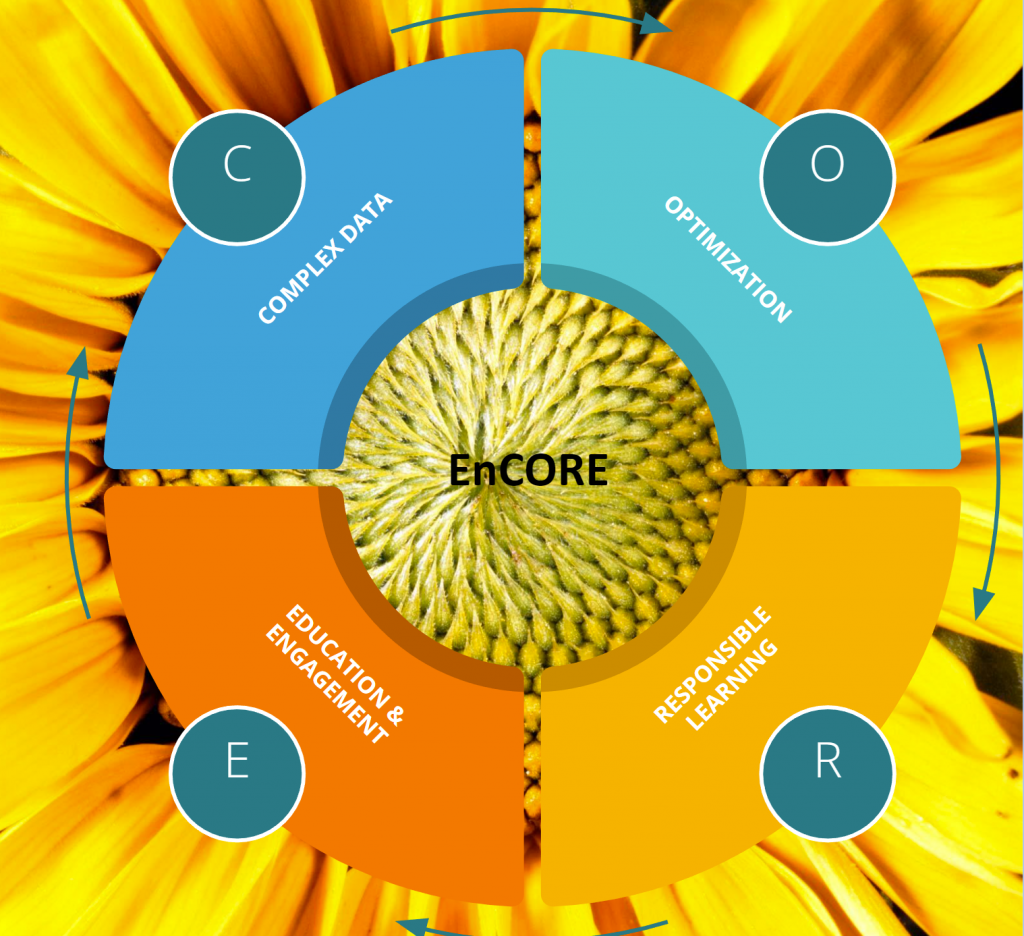

EnCORE’s team will focus on the four CORE pillars of data science: C for complexities of data, O for optimization, R for responsible learning, and E for education and engagement.

The institute is pursuing a plan for outreach and broadening participation by fostering supportive environments at all levels, from K-12 to postdocs and junior faculty. The project aims to reach a wide demographic of students by offering collaborative courses across its partner universities and a flexible co-mentorship plan for multidisciplinary research. To bring theoretical development into practice, EnCORE will work with industry partners and domain scientists and will forge strong connections with other NSF Harnessing the Data Revolution Institutes across the nation.

NSF’s Harnessing the Data Revolution (HDR) Big Idea is a national-scale activity to enable new modes of data-driven discovery that will allow fundamental questions to be asked and answered at the frontiers of science and engineering. HDR TRIPODS aims to bring together the electrical engineering, mathematics, statistics, and theoretical computer science communities through integrated research and training activities.