Managing cloud rendering expenses can feel complicated for 3D animation studios across Central Europe. Every project demands high-performance computing, but unchecked resource usage and hidden fees often inflate costs. By applying accurate tracking methods like measured service consumption, studio owners gain control over cost breakdowns and resource allocation. This guide also highlights practical automation and monitoring strategies that help transform cloud rendering from a budget headache into a streamlined, predictable workflow.

Quick Summary

| Key Point | Explanation |
|---|---|
| 1. Assess current usage and costs | Review cloud usage reports to identify spending patterns and areas for potential savings. |
| 2. Optimize resource allocation | Match server specifications to project demands to reduce waste and improve performance. |
| 3. Implement automated cost controls | Set spending limits and automate tracking to prevent budget overruns and ensure consistent monitoring. |
| 4. Monitor and verify savings | Establish a baseline to measure actual savings and optimize future spending based on data insights. |

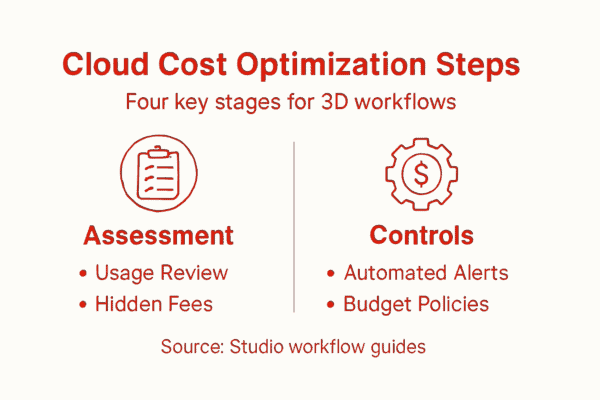

Step 1: Assess current cloud server usage and costs

Before optimizing anything, you need a clear picture of what you’re currently spending and how your cloud resources are being used. This foundational step prevents guesswork and shows where the real savings opportunities are.

Start by pulling your cloud usage reports and cost breakdowns from your provider. Most providers offer detailed dashboards showing resource consumption over time. You’re looking for patterns – which projects consume the most GPU hours, which render jobs peak during certain months, and where idle time drains your budget unnecessarily.

Document these key metrics:

- GPU and CPU core hours consumed per project or department

- Average cost per render frame or job

- Peak usage periods and off-peak periods

- Monthly spending trends over the last three months

- Instances running without active jobs (idle costs)

- Data transfer and storage charges beyond compute
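Turning a raw usage export into these metrics takes only a few lines of Python. The sketch below is a minimal example, assuming each exported row can be mapped to the hypothetical `UsageRecord` fields shown (project, GPU and CPU core hours, job cost, idle cost, frames rendered); real provider exports use their own column names and usually need some cleaning first. All figures are made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    # Hypothetical fields: map them to the columns in your provider's usage export.
    project: str
    gpu_hours: float
    cpu_core_hours: float
    job_cost: float        # cost of hours spent running render jobs
    idle_cost: float       # cost of hours an instance ran with no active job
    frames_rendered: int


def summarize_by_project(records):
    """Aggregate raw usage rows into per-project hours, cost per frame and idle share."""
    totals = defaultdict(lambda: defaultdict(float))
    for r in records:
        t = totals[r.project]
        t["gpu_hours"] += r.gpu_hours
        t["cpu_core_hours"] += r.cpu_core_hours
        t["job_cost"] += r.job_cost
        t["idle_cost"] += r.idle_cost
        t["frames"] += r.frames_rendered

    summary = {}
    for project, t in totals.items():
        total_cost = t["job_cost"] + t["idle_cost"]
        summary[project] = {
            "gpu_hours": t["gpu_hours"],
            "cpu_core_hours": t["cpu_core_hours"],
            "total_cost": total_cost,
            # Idle hours are still billed, so they count toward the per-frame cost.
            "cost_per_frame": total_cost / t["frames"] if t["frames"] else None,
            "idle_share": t["idle_cost"] / total_cost if total_cost else 0.0,
        }
    return summary


records = [
    UsageRecord("spot_ad", 40.0, 0.0, 120.0, 30.0, 1500),
    UsageRecord("spot_ad", 10.0, 0.0, 30.0, 0.0, 500),
    UsageRecord("short_film", 0.0, 800.0, 200.0, 50.0, 1000),
]
for project, metrics in summarize_by_project(records).items():
    print(project, metrics)
```

Folding idle cost into cost per frame is a deliberate choice: it makes the idle-time drain visible in the same number you later use to compare configurations.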

According to NIST cloud measurement strategies, accurately tracking measured service consumption is what lets organizations control and optimize cloud spending. Many Central European studios underestimate their idle-time costs: the hours servers sit running between projects add up quickly.

Next, compare your per-frame costs across different server configurations you’ve used. If you’ve rendered with both CPU and GPU setups, calculate the cost difference. This reveals whether your team is using the most economical resources for each workflow type.
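Per-frame cost is the hourly rate divided by the frames a configuration completes per hour, so a pricier instance can still be the cheaper choice if it renders fast enough. The minimal sketch below compares configurations that way; the instance names, rates, and throughputs are illustrative assumptions, and the throughput figures should come from your own test renders of the same scene.

```python
def cost_per_frame(hourly_rate, frames_per_hour):
    """Per-frame cost of a configuration from its hourly price and measured throughput."""
    return hourly_rate / frames_per_hour


def compare_configs(configs):
    """Return (name, cost_per_frame) pairs sorted from cheapest to most expensive per frame."""
    costs = {name: cost_per_frame(rate, fph) for name, (rate, fph) in configs.items()}
    return sorted(costs.items(), key=lambda item: item[1])


# Illustrative numbers only: (hourly rate, frames rendered per hour) for the same scene.
configs = {
    "cpu-64-core": (2.40, 12),
    "gpu-1x": (3.00, 30),
}
for name, cpf in compare_configs(configs):
    print(f"{name}: {cpf:.3f} per frame")
```

With these made-up inputs the GPU instance costs more per hour but half as much per frame.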

The gap between your expected and actual costs often points directly to optimization opportunities, which can be worth 20-30% in savings.

Check for hidden charges too. Data egress fees, API calls, or storage overages frequently surprise studios when they review line-by-line bills. Understanding transparent pricing structures helps you predict costs accurately before scaling up.

Here’s a quick reference comparing common hidden cloud charges that can affect total costs:

| Hidden Charge Type | Typical Source | Business Impact |
|---|---|---|
| Data egress fees | Large file exports | Unexpected high monthly bills |
| API call overages | Automated scripts, frequent queries | Accumulated costs over time |
| Storage overages | Backups, retained render files | Costs rise with unused data |
| Idle compute charges | Unused but running instances | Paying for nonproductive resources |
| Network transfer costs | Cross-region or external requests | Higher expenses for global projects |
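Once you have a line-by-line bill, grouping it by category shows how much of the total these hidden charges account for. A minimal sketch, assuming the bill has already been reduced to `(category, amount)` pairs; the category labels in `HIDDEN_CHARGE_CATEGORIES` are hypothetical and need mapping to your provider’s own line-item names.

```python
from collections import defaultdict

# Hypothetical category labels; rename them to match your provider's bill.
HIDDEN_CHARGE_CATEGORIES = {"egress", "api_calls", "storage", "idle_compute", "network_transfer"}


def hidden_charge_shares(line_items):
    """Share of the total bill taken by each hidden-charge category, from (category, amount) rows."""
    by_category = defaultdict(float)
    for category, amount in line_items:
        by_category[category] += amount
    total = sum(by_category.values())
    if total == 0:
        return {}
    return {
        category: round(amount / total, 4)
        for category, amount in by_category.items()
        if category in HIDDEN_CHARGE_CATEGORIES
    }


bill = [  # illustrative line items
    ("compute", 7000.0),
    ("egress", 900.0),
    ("storage", 600.0),
    ("compute", 1000.0),
    ("api_calls", 500.0),
]
print(hidden_charge_shares(bill))  # {'egress': 0.09, 'storage': 0.06, 'api_calls': 0.05}
```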

Pro tip: Export three months of historical data into a spreadsheet and calculate your average weekly spending, then break it down by render type – this baseline becomes your optimization benchmark and makes savings visible when you implement changes.
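The same baseline can be computed in code instead of a spreadsheet. This sketch assumes the export has been reduced to `(day, render_type, cost)` rows (a hypothetical shape) and uses a four-week period to keep the example short; for the three-month baseline, pass the first and last day of those months.

```python
from collections import defaultdict
from datetime import date


def weekly_baseline(rows, start, end):
    """Average weekly spend per render type over the inclusive period start..end.

    rows are (day, render_type, cost) tuples; rows outside the period are ignored.
    """
    weeks = ((end - start).days + 1) / 7
    totals = defaultdict(float)
    for day, render_type, cost in rows:
        if start <= day <= end:
            totals[render_type] += cost
    return {render_type: total / weeks for render_type, total in totals.items()}


rows = [
    (date(2024, 1, 3), "animation", 400.0),
    (date(2024, 1, 10), "animation", 400.0),
    (date(2024, 1, 20), "compositing", 80.0),
    (date(2024, 2, 5), "animation", 999.0),  # outside the period, ignored
]
print(weekly_baseline(rows, date(2024, 1, 1), date(2024, 1, 28)))
# {'animation': 200.0, 'compositing': 20.0}
```

Dividing by the number of calendar weeks in the period, rather than only the weeks with activity, lets quiet weeks pull the average down, which is what a spending baseline should reflect.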

Step 2: Configure resource allocation for optimal workloads

Now that you understand your current spending, it’s time to align your server resources with actual workload demands. Smart resource allocation prevents overpaying for unused capacity while ensuring your render jobs complete reliably.

Start by categorizing your typical workloads. Do you handle lightweight compositing tasks, heavy 3D animation renders, or a mix of both? Each type has different CPU and GPU requirements. A single static configuration wastes money on overprovisioned resources for lighter jobs and creates bottlenecks for demanding ones.

Match server specifications to workload types:

- Light compositing and post-production: Smaller GPU instances, fewer cores

- Mid-range 3D animation: Balanced CPU and GPU with moderate memory

- High-complexity simulations: Maximum GPU memory, multi-GPU configurations

- Batch processing overnight jobs: Larger instance pools for parallel execution

Research in elastic resource allocation shows that dynamically adjusting resources based on actual workload demands significantly improves both performance and cost efficiency. Rather than running the same server size for everything, adjust your instance specifications to match each project’s actual requirements.

Implement progressive scaling within your workflow. Start render jobs on smaller instances, then scale up only if performance monitoring shows bottlenecks. This approach catches inefficiencies early without overcommitting resources upfront.
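The scale-up decision can be written as a small rule. The sketch below assumes two signals from your monitoring: render progress (frames done versus elapsed time) and GPU memory usage. The size names in `SIZE_LADDER`, the 90% memory limit, and the `next_size` helper are hypothetical. It only picks the next size; how the remaining frames move to a larger instance depends on your render manager.

```python
# Hypothetical size ladder, smallest first; substitute your provider's instance names.
SIZE_LADDER = ["gpu-small", "gpu-medium", "gpu-large", "gpu-multi"]


def next_size(current, frames_done, hours_elapsed, frames_total, deadline_hours,
              vram_used_fraction, vram_limit=0.90):
    """Return the instance size to use for the rest of a render job.

    Steps up one size only on a bottleneck: the job is projected to miss its
    deadline at the current pace, or GPU memory is close to full.
    """
    behind_schedule = False
    if frames_done > 0 and hours_elapsed > 0:
        frames_per_hour = frames_done / hours_elapsed
        projected_hours = frames_total / frames_per_hour  # total job time at current pace
        behind_schedule = projected_hours > deadline_hours
    near_out_of_memory = vram_used_fraction >= vram_limit

    idx = SIZE_LADDER.index(current)
    if (behind_schedule or near_out_of_memory) and idx + 1 < len(SIZE_LADDER):
        return SIZE_LADDER[idx + 1]
    return current  # no bottleneck, or already at the largest size


# 100 of 1,000 frames done after 2 hours: 20 hours projected against a 12-hour deadline.
print(next_size("gpu-small", 100, 2.0, 1000, 12.0, vram_used_fraction=0.5))  # gpu-medium
```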

Allocating resources by workload type, not guesswork, can reduce waste by 25-40% while improving render reliability.

Consider dynamic resource adjustment mechanisms that respond to real-time performance. Some providers allow mid-job scaling adjustments – if a render suddenly demands more compute, resources expand automatically. This prevents failed jobs and unnecessary reruns.

Test configurations with pilot renders before committing your full pipeline. Run a single complex project on your proposed setup, measure actual resource consumption, then refine allocations based on real data rather than assumptions.
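A pilot run’s measured peak GPU memory can drive the choice directly: take the cheapest configuration that covers that peak plus a safety margin. This is a minimal sketch under stated assumptions: the catalog entries and prices are invented, the 20% headroom is an arbitrary default, and a real decision would also weigh the pilot’s render time, not just memory.

```python
from dataclasses import dataclass


@dataclass
class Config:
    name: str           # hypothetical instance name
    vram_gb: float
    hourly_rate: float  # illustrative price, not a real quote


def pick_config(configs, pilot_peak_vram_gb, headroom=0.20):
    """Cheapest configuration whose VRAM covers the pilot's measured peak plus a safety margin."""
    needed = pilot_peak_vram_gb * (1 + headroom)
    fitting = [c for c in configs if c.vram_gb >= needed]
    if not fitting:
        raise ValueError(f"no configuration offers {needed:.1f} GB of VRAM")
    return min(fitting, key=lambda c: c.hourly_rate)


catalog = [
    Config("gpu-12gb", 12, 1.00),
    Config("gpu-24gb", 24, 1.80),
    Config("gpu-48gb", 48, 3.50),
]
print(pick_config(catalog, pilot_peak_vram_gb=15).name)  # gpu-24gb
```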

Pro tip: Create a simple resource allocation matrix listing your 3-5 most common project types with their optimal server configurations, then save it as your team’s reference guide to eliminate decision-making overhead and ensure consistent choices across projects.
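If your pipeline is scripted, the matrix can live in code as well as in a document, so submission tools read the same reference your team does. The project types below follow the workload list earlier in this step, but every spec is a placeholder to replace with your own pilot results, and `config_for` is a hypothetical helper name.

```python
# Hypothetical allocation matrix: the specs are placeholders to replace with your pilot results.
ALLOCATION_MATRIX = {
    "compositing":     {"gpus": 1, "vram_gb_per_gpu": 12, "cpu_cores": 8,  "ram_gb": 32,  "pool_size": 1},
    "animation":       {"gpus": 1, "vram_gb_per_gpu": 24, "cpu_cores": 16, "ram_gb": 64,  "pool_size": 1},
    "simulation":      {"gpus": 4, "vram_gb_per_gpu": 48, "cpu_cores": 32, "ram_gb": 256, "pool_size": 1},
    "overnight_batch": {"gpus": 1, "vram_gb_per_gpu": 24, "cpu_cores": 16, "ram_gb": 64,  "pool_size": 8},
}


def config_for(project_type):
    """Look up the agreed configuration; unknown project types fail loudly instead of being guessed."""
    if project_type not in ALLOCATION_MATRIX:
        raise KeyError(f"{project_type!r} is not in the allocation matrix; run a pilot render first")
    return ALLOCATION_MATRIX[project_type]


print(config_for("simulation"))
```

Failing on an unknown project type, rather than falling back to a default size, keeps new kinds of work from silently landing on an oversized or undersized configuration.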

Step 3: Implement automated cost controls and policies

Manual monitoring of cloud costs burns time and misses optimization opportunities. Automated cost controls work continuously, preventing budget overruns while enforcing consistent spending policies across your entire team.

Start by setting clear spending limits. Define monthly budgets for each department or project, then configure your provider to alert you when usage approaches 50%, 75%, and 90% of those limits. Most cloud providers offer built-in budget management tools that require minimal setup.
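Provider budget tools implement these alerts for you; the logic behind them is simple enough to reproduce if you also want alerts in your own dashboard or chat tool. A minimal sketch, assuming you can read month-to-date spend from your billing export; the `due_alerts` name and the budget and spend figures are illustrative.

```python
ALERT_THRESHOLDS = (0.50, 0.75, 0.90)  # fractions of the monthly budget


def due_alerts(spend_to_date, monthly_budget, already_sent):
    """Thresholds crossed this month that have not triggered an alert yet."""
    used = spend_to_date / monthly_budget
    return [t for t in ALERT_THRESHOLDS if used >= t and t not in already_sent]


sent = set()
for spend in (300.0, 520.0, 800.0, 950.0):  # illustrative month-to-date spend, budget of 1,000
    for threshold in due_alerts(spend, 1000.0, sent):
        print(f"Spend {spend:.0f}: crossed {threshold:.0%} of budget")
        sent.add(threshold)
```

Tracking which thresholds have already fired keeps each alert to one message per month instead of repeating on every check.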

Configure these essential automated controls:

- Monthly spending caps that halt new resource provisioning when reached

- Daily cost alerts sent to finance and technical leads

- Automatic shutdown of idle instances after specified inactivity periods

- Scheduled resource scaling that matches your known peak usage windows

- Tag-based cost tracking to monitor spending by project, client, or department
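An idle-shutdown rule needs a grace period so that an instance briefly waiting between jobs is not stopped. The sketch below flags only instances with no active jobs whose last job ended longer ago than that period. It assumes a hypothetical fleet snapshot with an active-job count and last-job end time per instance, and it stops at returning names, since the actual stop call is provider-specific.

```python
from datetime import datetime, timedelta


def instances_to_stop(instances, now, idle_limit=timedelta(minutes=30)):
    """Names of instances with no active jobs whose last job finished more than idle_limit ago."""
    return [
        inst["name"]
        for inst in instances
        if inst["active_jobs"] == 0 and now - inst["last_job_end"] > idle_limit
    ]


now = datetime(2024, 3, 1, 12, 0)
fleet = [  # hypothetical fleet snapshot
    {"name": "render-01", "active_jobs": 2, "last_job_end": datetime(2024, 3, 1, 9, 0)},
    {"name": "render-02", "active_jobs": 0, "last_job_end": datetime(2024, 3, 1, 11, 50)},
    {"name": "render-03", "active_jobs": 0, "last_job_end": datetime(2024, 3, 1, 10, 15)},
]
print(instances_to_stop(fleet, now))  # ['render-03']
```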

Automation frameworks for cost policy enforcement help cloud environments monitor usage in real-time and trigger alerts or corrective actions automatically. Rather than reviewing bills after the month ends, your systems actively prevent wasteful spending as it happens.

Implement usage caps on instance types known to drain budgets. Prevent your team from accidentally launching expensive GPU clusters when cheaper options would suffice. These guardrails protect against costly mistakes without micromanaging individual decisions.
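A usage cap can be as simple as a per-type limit on concurrent instances, checked before a launch request goes through. In this minimal sketch the instance type names and caps in `MAX_CONCURRENT` are hypothetical; unlisted (cheaper) types pass freely while expensive ones are capped.

```python
# Hypothetical caps: maximum concurrent instances of each expensive type.
MAX_CONCURRENT = {"gpu-multi-4x": 2, "gpu-multi-8x": 1}


def may_launch(instance_type, running_counts):
    """True if launching one more instance of this type stays within its cap.

    Types without a cap are always allowed; running_counts maps type -> instances running now.
    """
    cap = MAX_CONCURRENT.get(instance_type)
    if cap is None:
        return True
    return running_counts.get(instance_type, 0) < cap


running = {"gpu-multi-8x": 1, "gpu-multi-4x": 1}
print(may_launch("gpu-medium", running))    # True: no cap on this type
print(may_launch("gpu-multi-4x", running))  # True: 1 of 2 in use
print(may_launch("gpu-multi-8x", running))  # False: cap of 1 reached
```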

Automated controls can prevent 15-25% of unexpected overspend before it becomes a problem.

Set up automated reporting dashboards showing cost trends, resource utilization rates, and efficiency metrics. Your team should see cost data weekly, not only when the monthly bill arrives. Transparency drives accountability and surfaces wasteful patterns quickly.

The table below summarizes how automated cost controls mitigate common cloud budgeting risks:

| Automated Control | Mitigated Risk | Resulting Benefit |
|---|---|---|
| Spending caps | Exceeding project budgets | Prevents runaway expenses |
| Idle shutdowns | Paying for unused servers | Cuts waste, increases efficiency |
| Real-time cost alerts | Late detection of overspending | Immediate corrective action |
| Tag-based cost tracking | Lack of cost transparency | Improved accountability |
| Scheduled scaling | Peak load oversizing | Matches resources to actual need |

Consider cost optimization strategies integrated with automation that adjust resource allocation dynamically. Some advanced systems reduce energy consumption and costs simultaneously by balancing workload distribution across off-peak periods.

Pro tip: Set up automated cost alerts at 50%, 75%, and 90% of your monthly budget threshold, then schedule a weekly 15-minute team review of the cost dashboard to catch spending anomalies before they balloon.

Step 4: Verify savings through cost monitoring and reporting

Optimization only matters if you can prove it’s working. Solid cost monitoring and reporting transforms assumptions into hard data, showing exactly where you saved money and where opportunities remain.

Start by establishing a baseline, either your initial assessment from Step 1 or the same period last year, and compare your current monthly spending against it. This baseline becomes your reference point for measuring all future improvements.

Track these critical metrics:

- Cost per render frame before and after optimization

- Monthly spending trends with month-over-month percentage changes

- Resource utilization rates showing how fully you use provisioned capacity

- Cost by project or client to identify which workflows benefit most

- Idle time costs and instances shut down through automation

- Peak versus off-peak spending patterns
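Most of these metrics reduce to two formulas: percentage change between periods, and utilization as busy hours over provisioned hours. The sketch below shows both with illustrative figures; the function names are hypothetical, and the inputs would come from your billing and monitoring exports.

```python
def pct_change(old, new):
    """Percentage change from old to new; negative means the value went down."""
    return (new - old) / old * 100


def month_over_month(monthly_spend):
    """Percentage change between each pair of consecutive months, rounded to one decimal."""
    return [round(pct_change(a, b), 1) for a, b in zip(monthly_spend, monthly_spend[1:])]


def utilization_rate(busy_hours, provisioned_hours):
    """Fraction of paid-for instance hours that actually ran render jobs."""
    return busy_hours / provisioned_hours


# Illustrative figures only.
print(month_over_month([10000.0, 9000.0, 8100.0]))              # [-10.0, -10.0]
print(round(pct_change(0.12, 0.09), 1))                         # -25.0 (cost per frame, before vs after)
print(utilization_rate(busy_hours=540, provisioned_hours=720))  # 0.75
```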

Using comprehensive monitoring and reporting tools ensures you capture accurate cost data and maintain transparency into resource consumption. Real-time dashboards beat quarterly reviews for catching trends early and validating that your cost-control measures actually stick.

Create weekly reports showing cost trends alongside performance metrics. Your team should see not just spending, but also render times, job success rates, and resource efficiency. This guards against false economies, where cost cutting quietly degrades output quality.

Documented cost verification prevents scope creep and justifies continued investment in optimization efforts.

Implement structured cost data capture and sensitivity analysis (testing how much your savings estimate shifts when key inputs such as render volume or per-frame cost change) so your measurements hold up to scrutiny. This approach supports auditable reports and reliable decision-making on future cloud investments.
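One simple form of sensitivity analysis is one-at-a-time: move each input by a fixed percentage while holding the others constant, and see how far the savings estimate moves. The sketch below assumes savings are modeled as frames rendered times the reduction in per-frame cost; that model, the 20% swing, and all figures are illustrative assumptions.

```python
def monthly_savings(frames, baseline_cost_per_frame, new_cost_per_frame):
    """Savings for one month: frames rendered times the per-frame cost reduction."""
    return frames * (baseline_cost_per_frame - new_cost_per_frame)


def one_at_a_time(base_inputs, swing=0.20):
    """For each input, savings with that input alone lowered and raised by `swing`.

    Returns name -> (savings at -swing, savings at +swing).
    """
    results = {}
    for name, value in base_inputs.items():
        low = {**base_inputs, name: value * (1 - swing)}
        high = {**base_inputs, name: value * (1 + swing)}
        results[name] = (monthly_savings(**low), monthly_savings(**high))
    return results


# Illustrative inputs: 10,000 frames, per-frame cost cut from 0.12 to 0.09.
base = {"frames": 10000, "baseline_cost_per_frame": 0.12, "new_cost_per_frame": 0.09}
print(f"base estimate: {monthly_savings(**base):.0f}")
for name, (at_low, at_high) in one_at_a_time(base).items():
    print(f"{name}: {at_low:.0f} at -20%, {at_high:.0f} at +20%")
```

With these inputs, a 20% error in either per-frame cost moves the estimate far more than a 20% error in frame volume, so the per-frame figures are the ones worth measuring most carefully.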

Conduct monthly reviews comparing projected versus actual savings. Did automation shut down idle instances as planned? Are resources truly scaling down during off-peak periods? Gaps between planned and actual savings reveal configuration problems worth fixing.
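Comparing projected and actual savings per measure points straight at the configurations to re-check. A minimal sketch, assuming you record a planned monthly saving for each measure; the measure names, figures, and 10% tolerance are hypothetical.

```python
def savings_shortfalls(projected, actual, tolerance=0.10):
    """Measures whose actual savings fell short of plan by more than `tolerance` (a fraction of plan).

    Returns measure -> shortfall in currency units; these are the configurations to re-check.
    """
    shortfalls = {}
    for measure, planned in projected.items():
        achieved = actual.get(measure, 0.0)
        if planned > 0 and (planned - achieved) / planned > tolerance:
            shortfalls[measure] = planned - achieved
    return shortfalls


projected = {"idle_shutdown": 500.0, "off_peak_scaling": 300.0}  # illustrative monthly plan
actual = {"idle_shutdown": 480.0, "off_peak_scaling": 120.0}
print(savings_shortfalls(projected, actual))  # {'off_peak_scaling': 180.0}
```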

Pro tip: Export your baseline month and current month side-by-side into a spreadsheet, calculate the percentage savings by category, then share that visual comparison with stakeholders monthly – concrete numbers build credibility and support for ongoing optimization.
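The side-by-side comparison can also be generated directly as CSV for the spreadsheet. A minimal sketch with illustrative category names and figures; it assumes both months use the same cost categories.

```python
import csv
import sys


def savings_by_category(baseline, current):
    """Percentage saved per cost category, baseline month vs current month (positive = saved)."""
    return {
        category: round((base - current.get(category, 0.0)) / base * 100, 1)
        for category, base in baseline.items()
        if base > 0
    }


baseline = {"compute": 8000.0, "idle": 1200.0, "egress": 800.0}  # illustrative figures
current = {"compute": 7200.0, "idle": 300.0, "egress": 800.0}

# Write a side-by-side table to stdout; redirect it to a .csv file to open in a spreadsheet.
writer = csv.writer(sys.stdout)
writer.writerow(["category", "baseline", "current", "saved_pct"])
for category, pct in savings_by_category(baseline, current).items():
    writer.writerow([category, baseline[category], current.get(category, 0.0), pct])
```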

Maximize Savings with Smart Cloud Render Solutions

Struggling with unpredictable cloud server costs and inefficient resource allocation is a common challenge in 3D workflows. This guide outlined the importance of assessing real usage patterns, aligning GPU and CPU configurations with actual render demands, and implementing automated cost controls to prevent wasteful spending on idle or oversized compute resources. Idle instances, hidden transfer fees, and overprovisioned servers quietly erode margins when not actively monitored.

Cost optimization becomes sustainable when infrastructure is structured around rendering economics rather than generic cloud billing models. Predictable performance, transparent pricing, and measurable cost per frame allow studios to plan production budgets with greater confidence.

MaxCloudON combines dedicated GPU and CPU cloud servers with its automated rendering software, RenderSonic, which provides structured job submission and upfront cost estimation before rendering begins. Whether working with Blender, 3ds Max, Maya, or other 3D tools, this model enables consistent performance and clearer financial visibility for demanding render workloads.

Ready to revolutionize your workflow and slash your cloud spend today? Explore our Blender resources for workflow tips or visit our main site at MaxCloudON to get started with automated cloud rendering that puts cost control at the center of your 3D production pipeline.