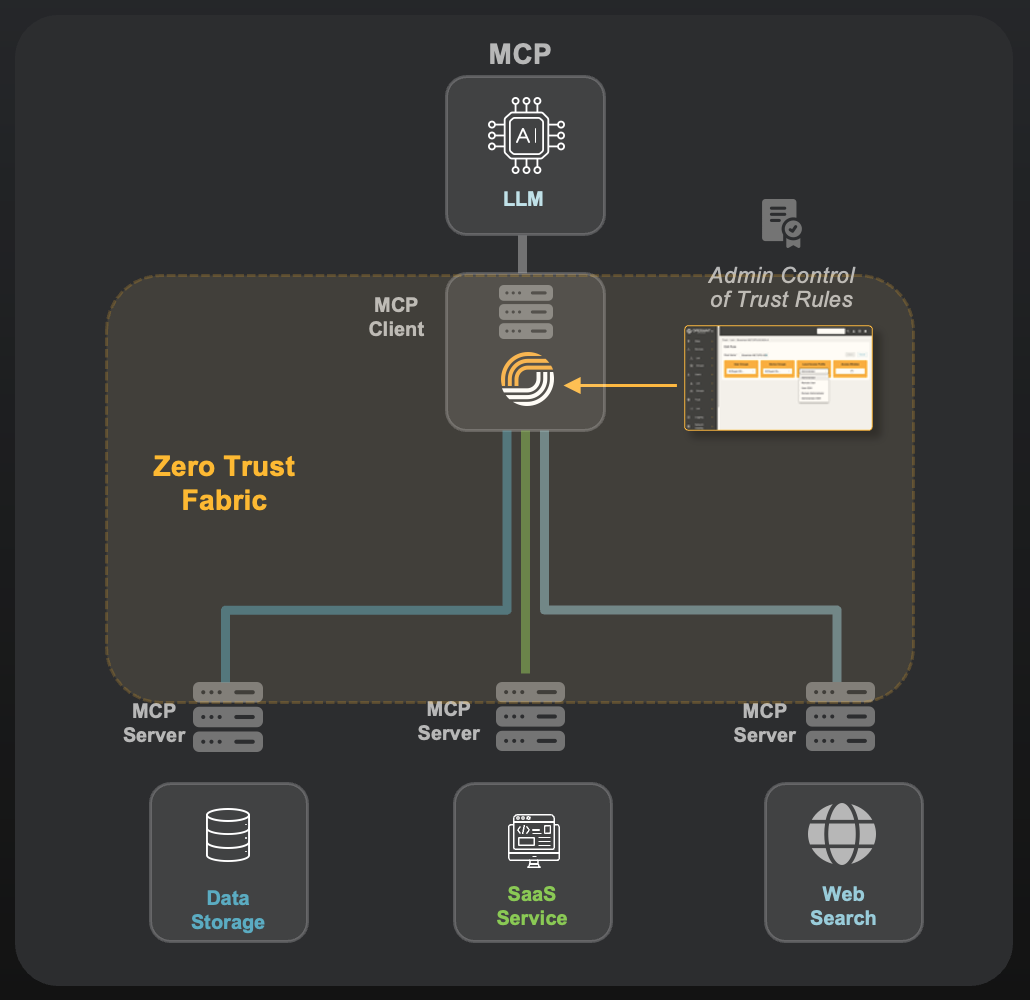

Securing Agentic AI & MCP Workflows

The Challenge

AI agents increasingly chain tools, invoke APIs, and interact with enterprise systems autonomously. These workflows often rely on static credentials, implicit trust, and assumptions about agent behavior.

Why Traditional Controls Fail

Identity and access management (IAM) authenticates once, at login. Network security assumes stable endpoints. Neither governs what an agent is allowed to do on a per-action basis.

How Operant Helps

Operant enforces protocol-gapped, per-action trust for agentic workflows and Model Context Protocol (MCP) connections. Each agent action is evaluated in real time, authorized with least-privilege credentials, and governed independently of agent logic or prompts.

Outcomes

- Secure agent autonomy without embedding secrets
- Reduced blast radius and lateral misuse
- Faster, safer deployment of agent-based systems

Privacy-Aware AI Over Sensitive Data

The Challenge

AI systems can misuse sensitive data without breaching any system, through inference, recombination, or unintended propagation across contexts.

Why Traditional Controls Fail

Privacy policies live in documents. Data loss prevention (DLP) tools react after data has already moved. Neither enforces how data is used at runtime.

How Operant Helps

Operant enforces privacy controls directly in the data path, governing what data is exposed, transformed, or returned to AI agents based on context and purpose, not just access rights.

Outcomes

- Enforced purpose limitation and data minimization
- Reduced regulatory and reputational risk
- Technical privacy guarantees, not policy promises

Audit-Ready AI for Regulated Enterprises

The Challenge

Security, privacy, and legal teams struggle to explain or prove how AI systems accessed and used data, especially during audits, incidents, or regulatory reviews.

Why Traditional Controls Fail

Logs are fragmented, mutable, and designed for human activity, not autonomous systems operating continuously.

How Operant Helps

Every AI interaction governed by Operant generates signed, tamper-evident evidence at the network layer. This creates a unified, provable record of AI behavior across agents, tools, and data sources.

Outcomes

- Faster security, legal, and compliance approvals
- Reduced friction in audits and investigations
- Clear accountability for AI-driven decisions

Security Leaders (CISOs & Architects) - securing AI-driven access and reducing enterprise risk

AI Platform & Engineering Teams - deploying agentic systems over real data

Privacy, Legal, and Compliance Teams - enforcing data governance technically, not manually

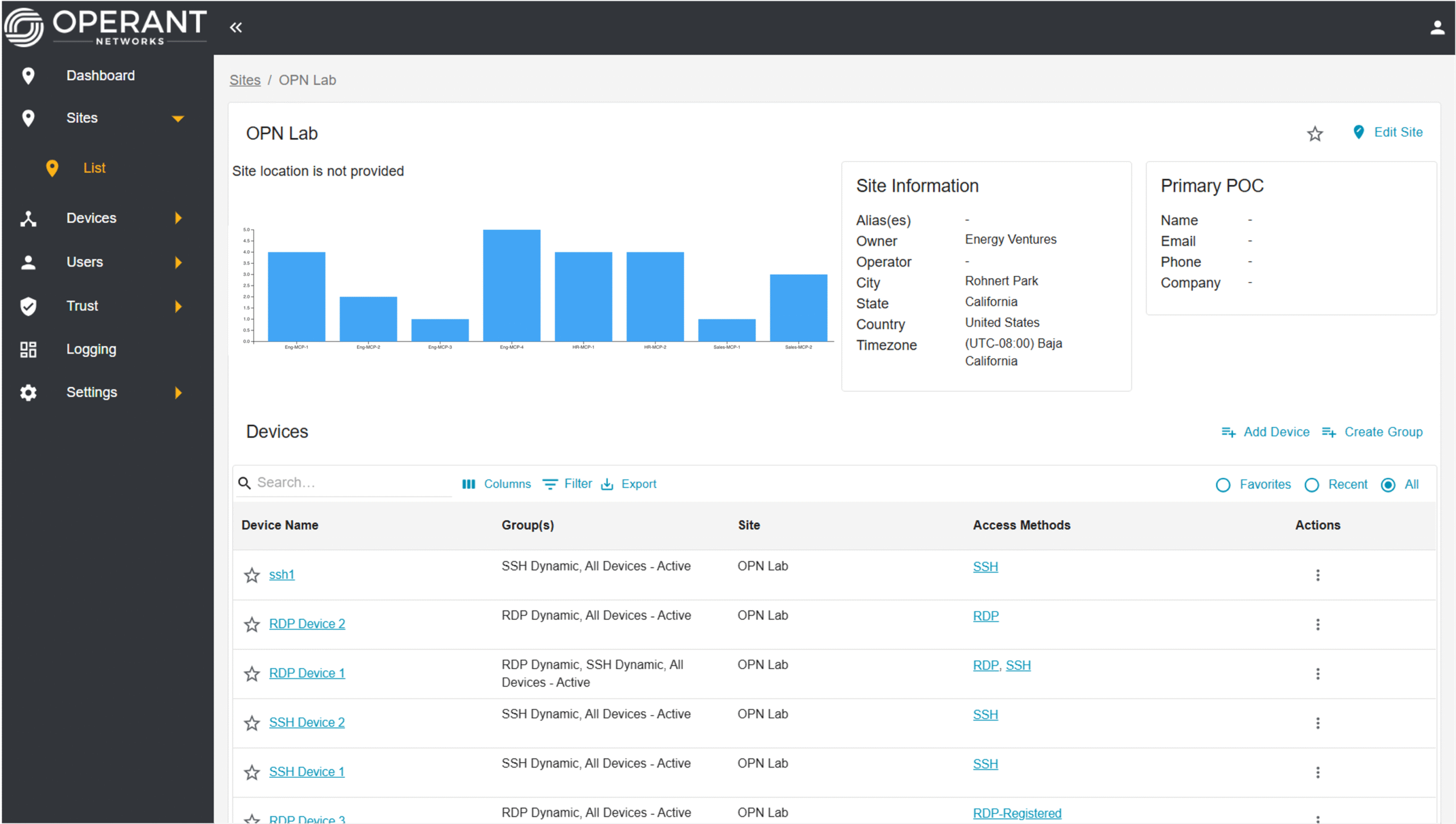

Situational Awareness

Visibility

Cybersecurity

Data Privacy

Production Ready
