"Michael Kennedy's Thoughts on Technology": DevOps Python Supply Chain Security

In my last article, “Python Supply Chain Security Made Easy”, I talked about how to automate pip-audit so you don’t accidentally ship malicious Python packages to production. While there was defense in depth with uv’s delayed installs, there wasn’t much safety beyond that for developers themselves on their machines.

This follow-up fixes that so even dev machines stay safe.

Defending your dev machine

My recommendation: instead of installing directly into a local virtual environment and then running pip-audit, create a dedicated Docker image meant for testing dependencies with pip-audit in isolation.

Our workflow can go like this.

First, update your local dependencies file:
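The exact command depends on your project. As a minimal sketch, assuming your top-level dependencies live in a file named requirements.in (a hypothetical name) and using two hypothetical helper functions, it might look like this:

```shell
# Hypothetical helpers; requirements.in is an assumed input file name.

# Re-resolve and upgrade the pinned versions in requirements.txt.
# This only rewrites the file; nothing is installed.
upgrade_requirements() {
    uv pip compile requirements.in --upgrade --output-file requirements.txt
}

# Variant for uv projects: refresh uv.lock without syncing the environment.
upgrade_lockfile() {
    uv lock --upgrade
}
```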

This updates the requirements.txt file (or tweak the command to update your uv.lock file instead), but it doesn’t install anything.

Second, run a command that uses this new requirements file inside a temporary Docker container to install the requirements and run pip-audit on them.

Third, only if that pip-audit test succeeds, install the updated requirements into your local venv.
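The three steps above can be sketched as one shell function that stops at the first failure. This is an illustration under assumptions, not a definitive script: the function name safe_upgrade, the input file requirements.in, and the container path /workspace/requirements.txt are all hypothetical, and the image tag pipauditdocker is assumed to already be built.

```shell
# Hypothetical wrapper for the three steps. The function name, requirements.in,
# and the mount target are assumptions; adjust them to your setup.
safe_upgrade() {
    # 1. Update the pins on disk without installing anything.
    uv pip compile requirements.in --upgrade --output-file requirements.txt || return 1

    # 2. Install and audit inside a throwaway container.
    if ! docker run --rm \
            -v "$(pwd)/requirements.txt:/workspace/requirements.txt:ro" \
            pipauditdocker; then
        echo "pip-audit failed; local venv left untouched." >&2
        return 1
    fi

    # 3. Only now install into the local virtual environment.
    uv pip install -r requirements.txt
}
```

If the audit fails, requirements.txt has already been rewritten on disk, so review or revert it before trying again.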

The pip-audit docker image

What do we use for our Docker testing image? There are of course a million ways to do this. Here’s one optimized for building Python packages that deeply leverages uv’s and pip-audit’s caching to make subsequent runs much, much faster.

Create a Dockerfile with this content:
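One possible version, as a sketch: the Debian base image, the package list, the /workspace working directory, and the final CMD are assumptions for illustration, while the uv venv line follows the text.

```dockerfile
# Assumption: a Debian-based image; the package list below is illustrative.
FROM debian:bookworm-slim

# Build tools and headers that some Python packages need when compiling.
RUN apt-get update && apt-get install -y --no-install-recommends \
        build-essential ca-certificates curl git pkg-config \
        libffi-dev libssl-dev libxml2-dev libxslt1-dev libpq-dev zlib1g-dev \
    && rm -rf /var/lib/apt/lists/*

# Copy the uv binaries from the official uv image.
COPY --from=ghcr.io/astral-sh/uv:latest /uv /uvx /bin/

# The cache may live on a mounted volume, so copy files instead of hardlinking.
ENV UV_LINK_MODE=copy

# Create the virtual environment and install pip-audit (plus pip) into it.
RUN uv venv --python 3.14 /venv
RUN uv pip install --python /venv/bin/python pip pip-audit

# Assumed mount point for the project's requirements.txt.
WORKDIR /workspace

# Install the requirements into /venv, then audit that environment.
CMD ["sh", "-c", "uv pip install --python /venv/bin/python -r requirements.txt && /venv/bin/pip-audit"]
```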

This installs a bunch of Linux libraries used for edge-case builds of Python packages. It takes a moment, but you only need to build the image once. Then you’ll run it again and again. If you want to use a newer version of Python later, change the version in uv venv --python 3.14 /venv. Even then on rebuilds, the apt-get steps are reused from cache.

Next, build the image with a fixed tag so you can create aliases that run it:
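A minimal sketch of the build step, assuming the image tag pipauditdocker and wrapping the command in a hypothetical helper function:

```shell
# Hypothetical helper: build (or rebuild) the audit image under a fixed tag.
# $1 is the directory holding the Dockerfile; it defaults to the current one.
build_pip_audit_image() {
    docker build -t pipauditdocker "${1:-.}"
}
```

Run build_pip_audit_image once from the directory that contains the Dockerfile, and again only when you change the Dockerfile.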

Finally, we need to run the container with a few bells and whistles. Add caching via a volume so subsequent runs are very fast: -v pip-audit-cache:/root/.cache. And map a volume so whatever working directory you are in will find the local requirements.txt: -v \"\$(pwd)/requirements.txt:/workspace/re

Here is the alias to add to your .bashrc or .zshrc to accomplish this:
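A sketch of such an alias, assuming the image tag pipauditdocker and a container mount target of /workspace/requirements.txt (an assumption; adjust it to match your Dockerfile):

```shell
# Assumptions: image tag pipauditdocker, mount target /workspace/requirements.txt.
# The escaped \$(pwd) is expanded when the alias runs, not when it is defined,
# so the alias picks up whatever directory you are in at that moment.
alias pip-audit-proj="docker run --rm \
  -v pip-audit-cache:/root/.cache \
  -v \"\$(pwd)/requirements.txt:/workspace/requirements.txt:ro\" \
  pipauditdocker"
```

The named volume pip-audit-cache persists between runs, which is where the speedup on later runs comes from.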

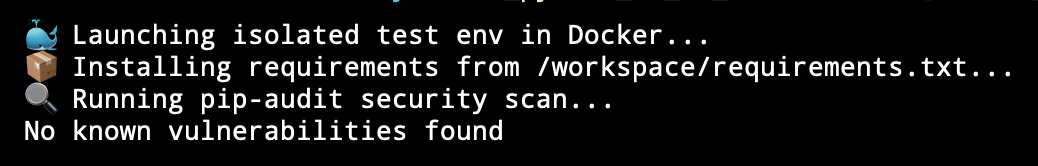

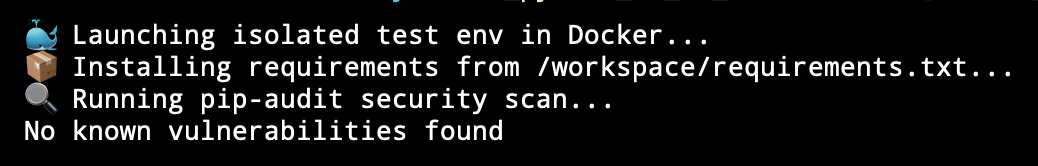

That’s it! Once you reload your shell, all you have to do is type ispip-audit-projwhen you’re in the root of your project that contains your requirements.txt file. You should see something like this below. Slow the first time, fast afterwards.

Protecting Docker in production too

Let’s handle one more situation while we are at it. You’re running your Python app IN Docker. Part of the Docker build configures the image and installs your dependencies. We can add a pip-audit check there too:
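A minimal sketch of what that could look like. The base image, the /app and /venv paths, and main.py are assumptions for illustration; the relevant part is the RUN step that calls pip-audit after the dependencies are installed.

```dockerfile
# Assumptions: base image, paths, and the final CMD are illustrative only.
FROM python:3.14-slim

# Copy the uv binaries from the official uv image.
COPY --from=ghcr.io/astral-sh/uv:latest /uv /uvx /bin/

# Create the app's virtual environment and put it first on PATH.
ENV VIRTUAL_ENV=/venv
ENV PATH="/venv/bin:$PATH"
RUN uv venv /venv

WORKDIR /app
COPY requirements.txt .
RUN uv pip install -r requirements.txt

# Audit the installed packages. pip-audit exits non-zero when it finds a
# known vulnerability, which fails this RUN step and therefore the build.
RUN uv pip install pip pip-audit && pip-audit

COPY . .
CMD ["python", "main.py"]
```

This sketch leaves pip-audit installed in the final image. If that matters to you, run the audit in a separate build stage instead.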

Conclusion

There you have it. Two birds, one Docker stone. Our first Dockerfile built a reusable Docker image named pipauditdocker to run isolated tests against a requirements file. The second one demonstrates how we can make our docker/docker compose build fail completely if there is a bad dependency, saving us from letting it slip into production.

Cheers

Michael

https://mkennedy.codes/posts/devops-python-supply-chain-security/