I actually enjoy setting up a new coding project from scratch - those first couple of hours where you go from nothing to something, even if it’s just poorly laid out HTML and CSS, or a bunch of console.log statements.

Recently though, I’ve found myself unable to approach any new task without AI’s help.

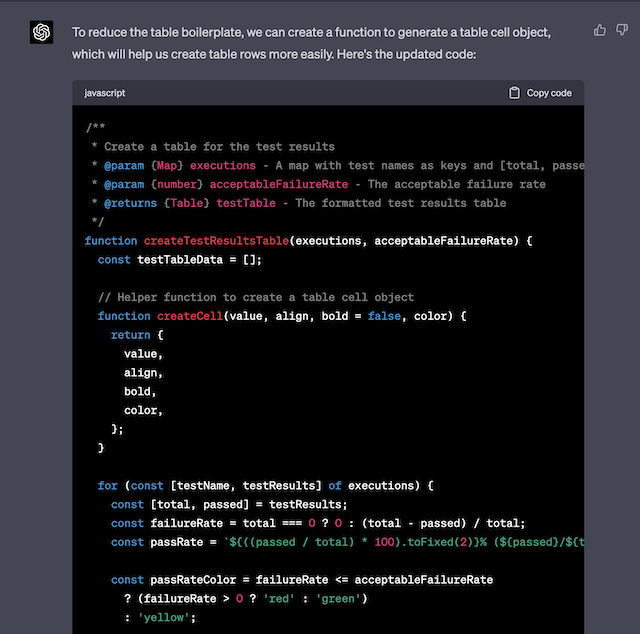

Like an addiction, it became a trap. I couldn’t break free from the cycle of constant stimulation and instant gratification these tools provided. Instead of being a useful helper, AI became the process itself - I stopped starting with code and using AI to work through ideas, and instead started with AI and used it to generate the implementation.

How it started

When they first became available, AI coding agents felt novel and exciting - like a new tool in my toolbox that helped me realise ideas in completely new domains or languages, at a speed I wasn’t capable of before.

One example is Teskooano - my personal project to build a 3D space engine, something I’d wanted to do for years but never found time to realise. I discovered I could go from idea to working prototype in hours, achieving things that seemed beyond my reach. It became “just one more turn” - I’d see opportunities to add features, and instead of waiting for my slow meatbrain to implement them, they’d be done in minutes.

But now it’s a complex mess. Even today, I still haven’t actually learned how to write WebGL shaders or understood the inner workings of ThreeJS.

Yes, AI helped me build the project but it robbed me of the joy of learning, and the satisfaction of finally understanding how things work. In less than two years, I’ve become more dependent on AI than I could have imagined.

How it’s going

I now struggle to connect dots that previously weren’t a problem in my day-to-day work. No one forced me to do this - I have no top-down executive orders mandating AI use. This situation is wholly of my own making.

But companies have spoken. Many of us now work in environments where we’re constantly expected to deliver new features while being told there’s no budget to grow teams (in fact, we have to cut engineers). AI creates a mirage of productivity - it feels like it’s helping us ship solutions faster.

Software development used to feel like pottery to me. You take a piece of clay and start shaping it into something - its final form truly unknowable. From that initial spark, the clay takes shape through the connection between human mind and hands, imagination being manifested into physical reality to create something that didn’t exist before. Maybe not novel in the sense that it’s a cup, plate, or vase - but no two pieces are ever the same, because no two people, or pieces of clay, are the same. This is especially true for abstract ideas. We call this art.

In this new age of AI agents, it feels like we’re heading somewhere dark. That spark of creation from the human mind is being replaced with a wholly mechanical process - one where every ‘piece of clay’ is the same, and every AI agent produces the same mechanical movements, with no connection to that mental space where truly novel ideas are born.

Code has moved from art to assembly line, from unique to mass-produced commodity - perhaps ironic considering my employer, whose entire business model is based on volume production.

What can be done?

We now exist in a weird liminal space - where software development is both easier than it’s ever been, and yet somehow harder to actually do.

I don’t believe the solution is to abandon using AI entirely - for some of us that ship has already sailed, and, from experience, there can be genuine value in these tools when used thoughtfully. Instead, I’m trying to recalibrate my relationship with them.

I’m learning to recognise the difference between productive use and dependency. I’ve decided that when I catch myself reaching for AI before I’ve even thought through the problem, I need to stop. Sometimes the slow, frustrating process of figuring things out yourself is exactly the point.

I’m going to force myself to write the first version of anything by hand - even if it’s messy, incomplete, or wrong. The AI can step in to help optimise, or explain concepts I don’t understand. But that initial act of creation, that struggle with the blank page, needs to stay human.

I’m also being more intentional about what I don’t offload. If I’m working in a domain I want to actually understand - like graphics programming - I want to make myself read the documentation, follow tutorials, and make mistakes. That’s the way we as humans truly learn and grow.

The craft of software development has always been about problem-solving and learning. AI should amplify those things, not replace them. We need to remember that reality is messy and at times inefficient, and that the process of learning isn’t something to be optimised - it’s the point.