Discover how you can clarify license plates and other static details in noisy CCTV by integrating multiple frames into a single, clearer image with Amped FIVE’s Frame Averaging filter. You’ll learn how to pick the right frames, stabilize footage, refine edges, and compare your enhanced results with the original.

Hello again, folks, and welcome to the second article in our new series “Learn and solve it with Amped FIVE”. As mentioned in our previous article, this series focuses on how to solve common challenges related to video evidence using Amped FIVE.

This week we are going to look at the advantages of integrating multiple frames of a video, and discuss how this technique can help reduce noise and enhance detail.

What is Frame Integration?

As we know, a video is a sequence of still images (which we refer to as frames) that, if logically linked together and played back fast enough, will give us the illusion of movement. Video works with us mere humans (!) because our eyes do not perceive the flicker between the frames. This phenomenon is called visual persistence (also known as persistence of vision): the last projected image remains impressed on the retina for a fraction of a second even after it is removed from view.

Courtesy of Animation History Museum

The beauty of visual persistence is that when dealing with a video containing noise, our brain will gradually “deregister” it and will focus more on the static detail within (as shown below).

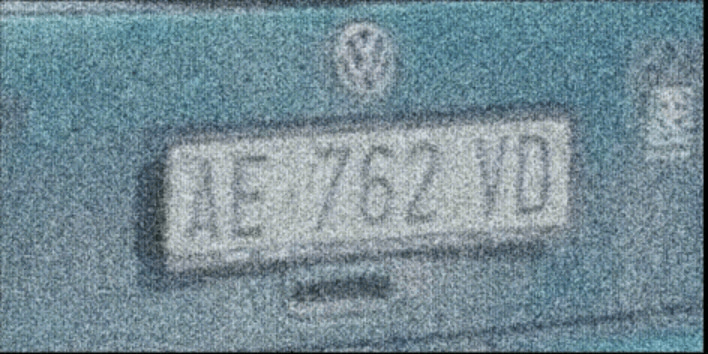

But how does this help us clarify a license plate or a person’s face? When you integrate multiple frames, transient noise averages out while static features remain.

When we have multiple frames in a low-quality video containing details of interest, we can combine them by computing the average value of every pixel across all the frames to create one single and much clearer image. This process, called frame integration, clarifies the image because noise and artifacts change rapidly from frame to frame and are therefore mathematically averaged out, whereas the static detail does not change and is preserved.
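To make the averaging step concrete, here is a minimal Python sketch using only the standard library. It is not Amped FIVE's implementation: the helper names `average_frames` and `mean_abs_error` and the synthetic scene are our own assumptions for illustration. It assumes grayscale frames of identical size, a perfectly static target, and independent zero-mean noise, in which case the noise standard deviation drops roughly with the square root of the number of frames.

```python
import random


def average_frames(frames):
    """Return the per-pixel mean of equally sized grayscale frames.

    Each frame is a list of rows; each row is a list of pixel values.
    """
    if not frames:
        raise ValueError("need at least one frame")
    height, width = len(frames[0]), len(frames[0][0])
    count = len(frames)
    return [
        [sum(frame[y][x] for frame in frames) / count for x in range(width)]
        for y in range(height)
    ]


def mean_abs_error(image, reference):
    """Average absolute difference between two images of the same size."""
    diffs = [
        abs(a - b)
        for image_row, reference_row in zip(image, reference)
        for a, b in zip(image_row, reference_row)
    ]
    return sum(diffs) / len(diffs)


# Synthetic static scene (hypothetical data): a bright bar on a dark background.
rng = random.Random(0)
clean = [[200.0 if 2 <= x <= 5 else 50.0 for x in range(8)] for y in range(8)]

# 32 noisy "frames": the same scene plus independent Gaussian noise (sigma = 20).
noisy_frames = [
    [[pixel + rng.gauss(0, 20) for pixel in row] for row in clean]
    for _ in range(32)
]

averaged = average_frames(noisy_frames)
print("single frame error:", round(mean_abs_error(noisy_frames[0], clean), 2))
print("averaged error:    ", round(mean_abs_error(averaged, clean), 2))
```

In this idealized setup the averaged image should sit much closer to the clean scene than any single frame does. Real footage, with compression artifacts and small movements, will usually behave less neatly.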

Preparing for Frame Integration

Before we integrate frames together to clarify detail, we must first ensure that:

- We use an adequate (but not excessive) number of good-quality frames showing an undisturbed view of our target (selected with an appropriate filter from the Select Frames group).

- Our target is static throughout the selected frames (using an appropriate filter from the Stabilization group if it isn’t).

First, drag your video into Amped FIVE. Then, move the play head to the relevant portion showing an undisturbed view of the target. At this stage, select a good number of frames using the Range Selector. Sparse Selector and Remove Frames can also be used to discard bad-quality frames. The I-Frames Selector can be used to select the best quality frames in a temporally compressed video. Adjust the Levels to obtain the best possible contrast of pixel values in the target area.
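As a rough illustration of what selecting a range and then discarding bad frames amounts to, here is a small Python sketch. The helpers `select_range` and `remove_frames` are hypothetical names of our own, not the Amped FIVE filters themselves, and plain labels stand in for decoded frames.

```python
def select_range(frames, first, last):
    """Keep frames first..last inclusive (0-based), like picking a clip range."""
    if not 0 <= first <= last < len(frames):
        raise ValueError("range must lie inside the clip")
    return frames[first:last + 1]


def remove_frames(frames, bad_indices):
    """Drop frames whose index within this list appears in bad_indices."""
    bad = set(bad_indices)
    return [frame for i, frame in enumerate(frames) if i not in bad]


# Stand-in for decoded frames: labels instead of pixel data.
clip = [f"frame{i}" for i in range(10)]

selected = select_range(clip, 2, 7)       # keeps frame2 .. frame7
usable = remove_frames(selected, [1, 4])  # drops frame3 and frame6
print(usable)
```

Note that the indices passed to `remove_frames` refer to positions within the already selected range, not within the full clip. This mirrors working on the output of the previous step in a chain.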

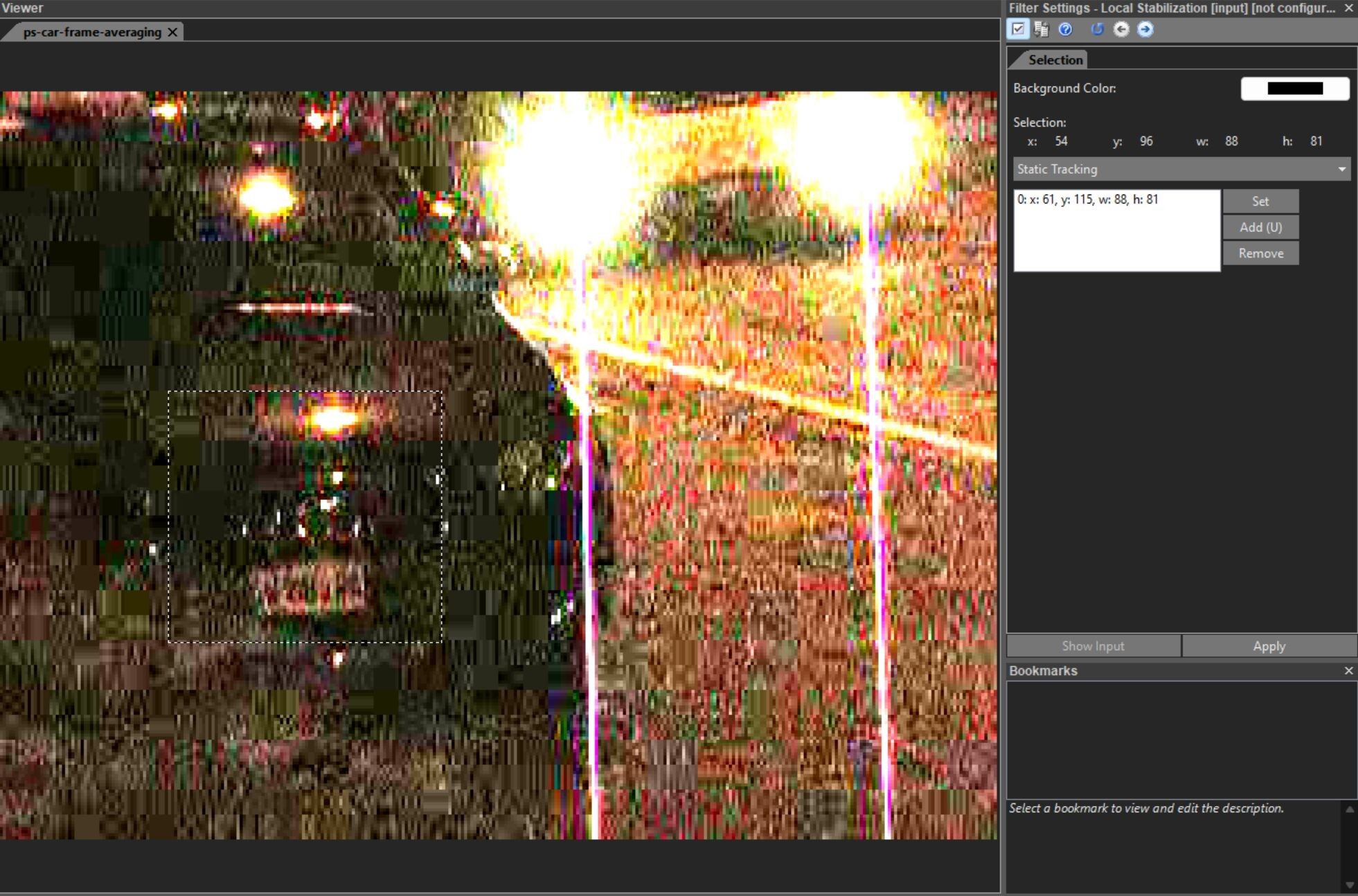

If the target is not completely static, for example because of a shaky handheld camera, use the Local Stabilization filter to stabilize it throughout the selected frames. Please note that if the target is moving towards or away from the camera, or if its angle or perspective relative to the camera is changing, then the Perspective Stabilization filter may be required.
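To give an idea of why stabilization matters before averaging, the sketch below aligns a frame to a reference by brute-force searching for the integer shift that best matches a region around the target. This is a deliberately simple stand-in, not the algorithm behind Local Stabilization or Perspective Stabilization: it only handles whole-pixel translation, and every name in it (`make_frame`, `estimate_shift`, `align`) is a hypothetical helper of our own. It also assumes the search region stays at least `max_shift` pixels away from the image border.

```python
def make_frame(offset_x, offset_y, size=12):
    """Synthetic frame: flat background with a 3x3 textured target."""
    frame = [[10.0] * size for _ in range(size)]
    for row in range(3):
        for col in range(3):
            frame[5 + offset_y + row][5 + offset_x + col] = 100.0 + 10 * (row * 3 + col)
    return frame


def region_difference(reference, frame, region, dx, dy):
    """Sum of absolute differences over the region, sampling frame at an offset."""
    top, left, bottom, right = region
    total = 0.0
    for y in range(top, bottom):
        for x in range(left, right):
            total += abs(reference[y][x] - frame[y + dy][x + dx])
    return total


def estimate_shift(reference, frame, region, max_shift):
    """Find the integer (dx, dy) at which frame best matches the reference region."""
    candidates = [
        (dx, dy)
        for dy in range(-max_shift, max_shift + 1)
        for dx in range(-max_shift, max_shift + 1)
    ]
    return min(candidates, key=lambda s: region_difference(reference, frame, region, *s))


def align(frame, dx, dy, fill=0.0):
    """Resample frame so its target lands where it sits in the reference."""
    height, width = len(frame), len(frame[0])
    return [
        [
            frame[y + dy][x + dx] if 0 <= y + dy < height and 0 <= x + dx < width else fill
            for x in range(width)
        ]
        for y in range(height)
    ]


reference = make_frame(0, 0)
moved = make_frame(2, -1)        # target drifted 2 px right and 1 px up
region = (4, 4, 9, 9)            # top, left, bottom, right around the target

shift = estimate_shift(reference, moved, region, max_shift=2)
aligned = align(moved, *shift)
print("estimated shift:", shift)
```

Once every selected frame has been aligned this way, the target occupies the same pixels in each of them, so averaging reinforces its detail instead of smearing it.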

Applying the Frame Averaging Filter

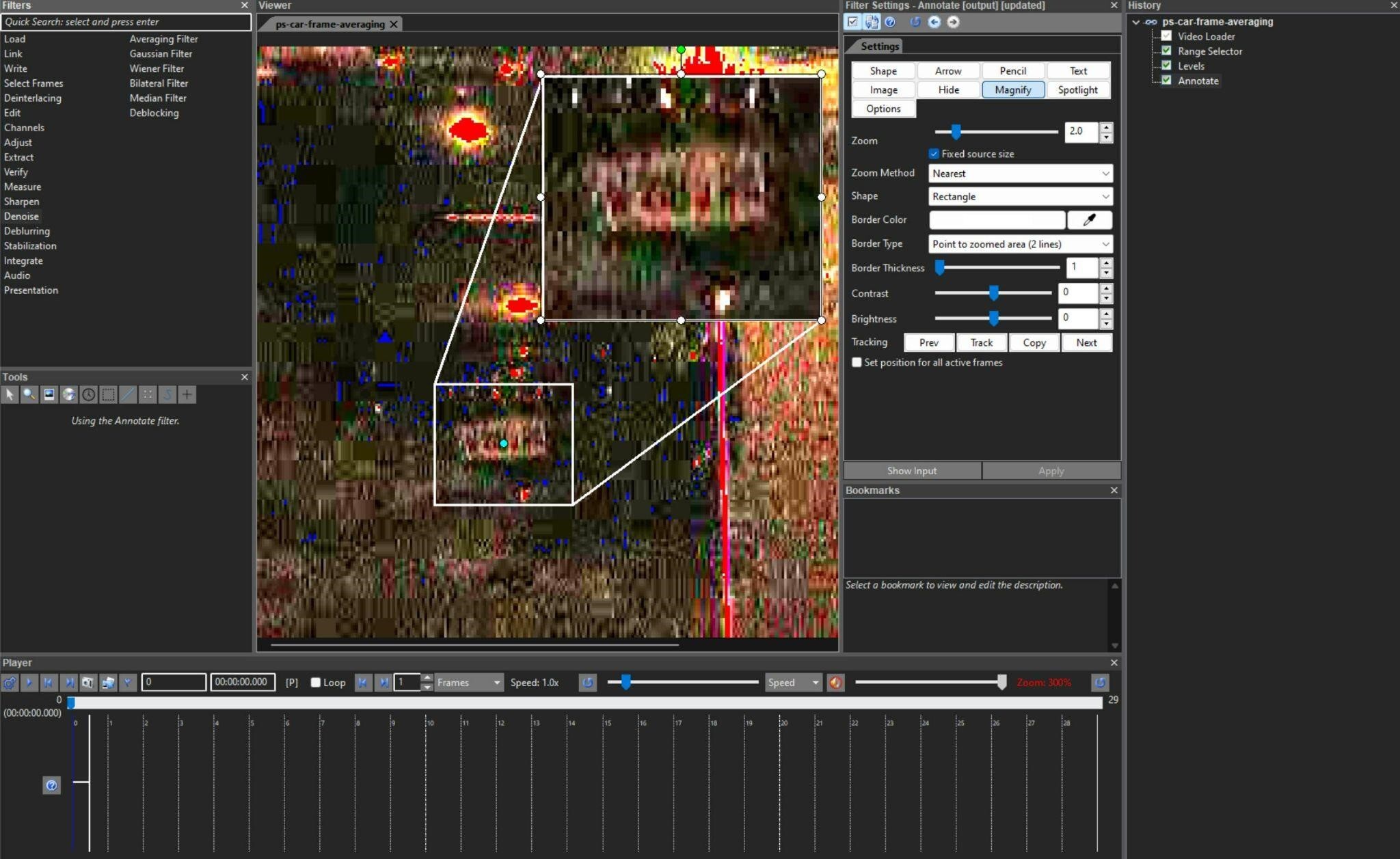

Once you are satisfied that the frames selected are adequate and that the target is static throughout the selected frames, apply the Frame Averaging filter from the Integrate group.

The filter does not have any configurable settings as it will simply overlay all the available frames from the previous stage in the chain and output one single averaged image. Pretty cool, right?

You should now have a much clearer representation of the target. At this stage, you could apply refinement filters such as Unsharp Masking, Blind Deconvolution or Turbulence Deblurring to increase the contrast of detail around the edges of the target.
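For readers curious about what an unsharp mask does in principle, here is a minimal Python sketch: blur the image, then add back the difference between the original and the blurred copy. It is an assumption-laden illustration rather than the Unsharp Masking filter in Amped FIVE. In particular, it uses a simple 3x3 box blur where a Gaussian blur is more common, and the `amount` parameter and function names are hypothetical.

```python
def box_blur(image):
    """3x3 mean blur; border pixels average over the neighbors that exist."""
    height, width = len(image), len(image[0])
    blurred = []
    for y in range(height):
        row = []
        for x in range(width):
            neighbors = [
                image[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            ]
            row.append(sum(neighbors) / len(neighbors))
        blurred.append(row)
    return blurred


def unsharp_mask(image, amount=1.0):
    """Sharpen by adding back the detail removed by the blur, clamped to 0..255."""
    blurred = box_blur(image)
    return [
        [min(255.0, max(0.0, p + amount * (p - b))) for p, b in zip(row, blur_row)]
        for row, blur_row in zip(image, blurred)
    ]


# A soft vertical edge: values ramp from dark to bright across each row.
soft_edge = [[50.0, 50.0, 100.0, 150.0, 200.0, 200.0] for _ in range(4)]
sharpened = unsharp_mask(soft_edge, amount=1.0)
print([round(v, 1) for v in sharpened[1]])
```

The pixels on the dark side of the edge become darker and those on the bright side become brighter, which is the increase in edge contrast described above. Because the same operation also amplifies noise, it makes sense to apply it after frame averaging rather than before.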

This would also be a good time to Crop the image to the area of interest. Then, enlarge it using a Resize filter with bicubic interpolation. Finally, apply the Compare Original filter to showcase the improvement between the original video and the enhanced image.

Watch how we integrate multiple frames step-by-step in Amped FIVE. To learn more about the Frame Averaging filter check out this video on our YouTube channel:

Conclusion

That’s all for today! We hope you’ve found this issue of the “Learn and solve it with Amped FIVE” series interesting and useful! If you need to integrate multiple frames, try the Frame Averaging workflow and the refinement tips we covered.

Stay tuned and don’t miss the next article coming out next Tuesday!

Don’t forget to share this blog post with your friends and colleagues on LinkedIn, YouTube and X.

FAQs – Integrate Multiple Frames with Frame Averaging in Amped FIVE

Integrating multiple frames means combining several consecutive video frames into a single still image. Random noise tends to vary across frames, while the subject of interest remains static. By averaging the frames, noise is reduced and important details, such as license plates or faces, become clearer.

There is no fixed number, but you should select enough good-quality frames to reduce noise without introducing motion artifacts. Too few frames may leave visible noise; too many can introduce blur, especially when the scene changes. In Amped FIVE, you can use frame selection tools to isolate only the most suitable frames.

Yes, but only after stabilization. If the camera and/or subject moves, you should first stabilize the target area using filters such as Local Stabilization or Perspective Stabilization in Amped FIVE. Once the target is stabilized across the selected frames, frame averaging can be applied without smearing important details.

No. Frame integration (frame averaging) primarily reduces noise and enhances visibility by averaging multiple frames into one image. Super resolution, on the other hand, uses multiple frames to reconstruct a higher-resolution image by exploiting small sub-pixel shifts between frames. Both use multiple frames, but the goals and algorithms differ.

After frame averaging, you can refine the result with filters such as Unsharp Masking, Blind Deconvolution, or Turbulence Deblurring to enhance edges and contrast. Then crop to the region of interest, resize if needed, and use Compare Original to visually demonstrate the improvement over the original video.