Key Takeaways

- Most Accurate Detector: Multiple global studies rank Copyleaks as the most accurate AI content detector available.

- Validated Across Use Cases: Research confirms Copyleaks’ effectiveness across languages, education levels, industries, and content types.

- Adaptable & Evolving: Copyleaks uses advanced machine learning to stay current with emerging LLMs like GPT-4, Gemini, Claude, and more.

- Trusted & Reliable: Whether you’re detecting unedited AI text or content rewritten to evade detection, Copyleaks delivers consistent results.

As AI-generated content becomes increasingly sophisticated, organizations require reliable tools to verify authenticity and maintain content integrity. Independent third-party researchers continuously evaluate Copyleaks’ AI Detector, and the results speak for themselves.

From academic papers to industry reviews, Copyleaks consistently ranks as one of the most accurate tools for identifying AI-generated text. Its ability to detect content created by leading models (including ChatGPT, Gemini, Claude, DeepSeek, and more), as well as heavily edited or translated material, sets it apart in an evolving landscape.

In July 2023, four researchers from around the world published a study on the Cornell Tech-owned arXiv, declaring Copyleaks AI Detector the most accurate for detecting text generated by Large Language Models (LLMs).

For the study, the researchers collected 124 submissions from computer science students written before the creation of ChatGPT. They then generated 40 ChatGPT submissions and used the data to evaluate eight publicly available LLM-generated text detectors.

Here were their key findings:

| Detectors | Human Data | ChatGPT Data |
|---|---|---|
| CopyLeaks | 99.12% | 95.00% |
| GPT2 Detector | 98.25% | 95.00% |
| CheckForAI | 98.25% | 95.00% |
| GLTR | 82.46% | 95.00% |
| GPTKit | 100.00% | 75.00% |
| OriginalityAI | 93.86% | 70.00% |
| AI Text Classifier | 94.74% | 60.00% |
| GPTZero | 54.39% | 45.00% |

That was just the beginning.

Below is a roundup of notable independent studies evaluating the performance of Copyleaks. We’ll continue to update this list as new research emerges.

Study: An exploratory study on the effectiveness of AI detection tools in identifying AI-generated articles

Authors: R. Grillo, L.S.C. Neto, L. Bottura, A.H. Llanos, S. Samieirad, F. Melhem-Elias

Published: January 31st, 2026

Key Findings: This study evaluates the performance of eight free AI detection tools in distinguishing between human-written scientific articles and those generated by AI models (such as ChatGPT, DeepSeek, Gemini, and Copilot) within the field of oral and maxillofacial surgery.

Best Performer: Copyleaks achieved the highest accuracy in identifying AI-generated content, with a mean detection score of 99.6/100.

| Detector | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| JustDone | 98.0 | 81.0 | 84.0 | 82.4 | 85.0 | 83.0 | 72.5 | 90.2 | 80.4 | 87.5 | 88.9 | 90.8 |
| DupliChecker | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 100.0 | 0.0 | 0.0 | 84.6 | 5.0 | 0.0 | 11.2 |
| Sapling | 100.0 | 100.0 | 100.0 | 59.5 | 100.0 | 100.0 | 99.6 | 87.7 | 100.0 | 100.0 | 100.0 | 100.0 |
| QuillBot | 100.0 | 70.0 | 100.0 | 100.0 | 100.0 | 100.0 | 79.3 | 95.9 | 29.0 | 100.0 | 100.0 | 100.0 |
| Copyleaks | 100.0 | 100.0 | 98.5 | 100.0 | 100.0 | 100.0 | 100.0 | 100.0 | 100.0 | 96.8 | 100.0 | 100.0 |
| ZeroGPT | 96.5 | 19.4 | 95.6 | 82.1 | 91.2 | 96.0 | 86.9 | 58.0 | 97.3 | 87.1 | 100.0 | 98.2 |

Analysis of the 12 AI-generated articles. The numbers represent the mean percentage score (0–100) across all articles in the category.
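The 99.6 figure can be checked against the table rows above. The short Python sketch below recomputes each tool's mean over its 12 scores. The variable names are illustrative, and it assumes the reported figure is a plain unweighted average of the 12 values, which is consistent with the number quoted for Copyleaks.

```python
from statistics import fmean

# Scores copied from the table above: 12 values per detector (0-100).
scores = {
    "JustDone":     [98.0, 81.0, 84.0, 82.4, 85.0, 83.0, 72.5, 90.2, 80.4, 87.5, 88.9, 90.8],
    "DupliChecker": [0.0, 0.0, 0.0, 0.0, 0.0, 100.0, 0.0, 0.0, 84.6, 5.0, 0.0, 11.2],
    "Sapling":      [100.0, 100.0, 100.0, 59.5, 100.0, 100.0, 99.6, 87.7, 100.0, 100.0, 100.0, 100.0],
    "QuillBot":     [100.0, 70.0, 100.0, 100.0, 100.0, 100.0, 79.3, 95.9, 29.0, 100.0, 100.0, 100.0],
    "Copyleaks":    [100.0, 100.0, 98.5, 100.0, 100.0, 100.0, 100.0, 100.0, 100.0, 96.8, 100.0, 100.0],
    "ZeroGPT":      [96.5, 19.4, 95.6, 82.1, 91.2, 96.0, 86.9, 58.0, 97.3, 87.1, 100.0, 98.2],
}

# Assumption: the study's summary figure is an unweighted mean, rounded to one decimal.
mean_scores = {tool: round(fmean(values), 1) for tool, values in scores.items()}
best_tool = max(mean_scores, key=mean_scores.get)

# Print the detectors from highest to lowest mean score.
for tool, mean in sorted(mean_scores.items(), key=lambda item: item[1], reverse=True):
    print(f"{tool:<13}{mean:6.1f}")
```

Under that assumption, the Copyleaks row averages to 99.6, the highest mean among the six tools listed.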

Conclusion: The study highlights Copyleaks’ superior performance, with a mean detection score of 99.6 out of 100 for AI-generated text, establishing it as the most reliable tool in the study for identifying AI-generated scientific articles in the oral and maxillofacial surgery field.

Study: Precision of Academic Plagiarism Detection: A Descriptive Analysis of Artificial Intelligence Verifiers

Editors: Luis Ebano Amor Oliva, Erika Guadalupe May Guillermo

Published: January 2026

Key Findings:

This study assesses the accuracy of several AI detection tools and how well they distinguish original human-written literature from text generated by ChatGPT. The two researchers compared four AI detection tools (Copyleaks, Content at Scale, Scribbr, and ZeroGPT) and found that Copyleaks was by far the most accurate.

The study found that:

- Copyleaks achieved a 99% accuracy rate and a 0.2% false-positive rate in a study that analyzed 50 samples of human-written literature and 50 samples of AI-generated literature.

- Copyleaks gives users a clear answer with binary results, either “This is human text” or “AI content detected,” using the free version of the tool.

Only one of the 50 samples was mislabeled as original human-written literature.

| Software | Copyleaks | Content at Scale | Scribbr | ZeroGPT |
|---|---|---|---|---|
| F1 Score | 99% | 79% | 25% | 69% |
| AUROC | 0.99 | 0.76 | 0.5288 | 0.85 |

AUROC (Area Under the Receiver Operating Characteristic Curve) – This figure measures a tool’s ability to distinguish between two groups, in this case human versus AI text. The closer the value is to 1, the higher the predictive capacity of the detector; a value of 0.5 is no better than chance.

F1 Score – The harmonic mean of a detector’s precision and sensitivity (recall). A high score requires both few false positives and few false negatives.
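To make the two metrics concrete, here is a minimal Python sketch of how they are commonly computed. The function names and the sample inputs are illustrative, not taken from the study. The counts in the F1 example assume one AI sample out of 50 was missed and no human sample was flagged, which is one split consistent with the figures above; the AUROC scores are made-up detector outputs.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """Harmonic mean of precision and recall, with 'AI' as the positive class."""
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def auroc(human_scores: list[float], ai_scores: list[float]) -> float:
    """Probability that a random AI text gets a higher score than a random
    human text (ties count as half), which equals the area under the ROC curve."""
    wins = 0.0
    for ai in ai_scores:
        for human in human_scores:
            if ai > human:
                wins += 1.0
            elif ai == human:
                wins += 0.5
    return wins / (len(ai_scores) * len(human_scores))


# Assumed split: 49 of 50 AI samples caught, 1 missed, 0 human samples flagged.
f1 = f1_score(tp=49, fp=0, fn=1)
print(f"F1 = {f1:.2f}")

# Hypothetical detector scores (higher means "more likely AI").
area = auroc(human_scores=[0.10, 0.20, 0.30], ai_scores=[0.25, 0.80, 0.90])
print(f"AUROC = {area:.3f}")
```

With the assumed counts, the F1 score rounds to 0.99. The pairwise AUROC loop is quadratic in the number of samples, which is fine for a 100-sample evaluation but would be replaced by a rank-based calculation on larger sets.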

Conclusion: The study confirms that Copyleaks’ high level of accuracy and reliability ensures the AI detection tool’s value to educators, editorial houses, and enterprises. Read the full study here.

Study: Evaluating the Performance of AI Text Detectors, Few-Shot and Chain-of-Thought Prompting Using DeepSeek Generated Text

Editors: Hulayyil Alshammari, Praveen Rao

Published: March 2025

Key Findings:

This independent academic study evaluated the accuracy and reliability of AI content detectors, including Copyleaks, across various scenarios, including AI-generated texts, legacy human-written scientific papers, and other original works, with a focus on DeepSeek-generated content and adversarial attacks such as paraphrasing and humanization.

The study found that:

- Copyleaks demonstrated consistent and reliable performance. It achieved near-perfect recognition rates for original and paraphrased DeepSeek text, while other tools, particularly AI Text Classifier and GPT-2, struggled to show consistent detection performance.

- In detecting human-written text, Copyleaks achieved the highest score of 100%, followed by AI Text Classifier at 98.4%, GPTZero at 98.2%, and Content Detector AI at 55.2%.

- Copyleaks continued to excel in detecting DeepThink-generated text, attaining an accuracy score of 99.7%, outperforming QuillBot (95.4%) and GPTZero (94.1%).

- Researchers highlighted that Copyleaks minimized false positives more effectively than competing solutions, with Copyleaks and QuillBot being the only detectors that did not present false positive cases.

Conclusion:

Copyleaks continues to be recognized by academic experts as a trusted solution for AI content detection. In the evolving landscape of generative AI, this research reinforces Copyleaks’ reputation for delivering reliable, consistent, and responsible detection across diverse text types and adversarial conditions, making it a preferred choice for educational institutions, publishers, and enterprises seeking to uphold content integrity. The study also underscores the importance of human oversight in conjunction with AI detectors and the potential of advanced prompting techniques for improved accuracy.

Read the full study here.

Study: The Age of Generative Artificial Intelligence — Evaluating AI Content Detectors

Editors: Ömer Aydın, Enis Karaarslan

Published: July 16th, 2025

Key Findings:

This independent academic study evaluated the accuracy and reliability of AI content detectors, including Copyleaks, across various scenarios—AI-generated texts, legacy human-written scientific papers, and other original works. The study found that while many AI detectors struggle with misclassifications, Copyleaks demonstrated consistent and reliable performance. Researchers highlighted that Copyleaks minimized false positives and false negatives more effectively than competing solutions.

The study also underscored the risks of relying solely on AI detectors without human oversight, emphasizing Copyleaks’ ability to support evidence-based decisions rather than acting as a standalone judge.

Conclusion:

Copyleaks continues to be recognized by academic experts as a trusted solution for AI content detection. In the evolving landscape of generative AI, this research reinforces Copyleaks’ reputation for delivering reliable, consistent, and responsible detection, making it a preferred choice for educational institutions, publishers, and enterprises seeking to uphold content integrity.

Read the full study here.

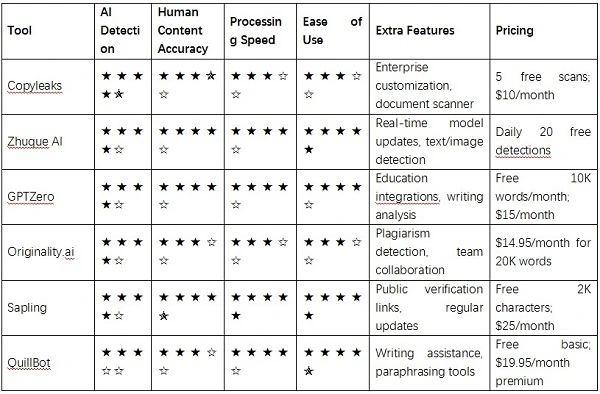

Study: Leading AI Content Detectors for the Needs

Author: GetNews Editorial Team

Published: June 30, 2025

Key Findings:

- After extensive testing using content from 12 AI models and human writers across 5 topic areas, Copyleaks was named “Best for highest AI detection accuracy with enterprise features.”

- Copyleaks was the only tool to correctly identify AI-generated content across all models and topics—including advanced models like GPT-4.5, Gemini 2.5, DeepSeek V3, Claude 4 Opus, and more.

- It also performed exceptionally well on heavily modified or mixed-content samples, where other detectors struggled.

Conclusion:

Copyleaks is the go-to tool for organizations seeking the most advanced and accurate detection across the full spectrum of modern AI content, especially in enterprise, publishing, and education.

Read the full study here.

Study: Generative AI in Assessment: AI Detectors and Implications for Practice

Authors: Jingyi Liu, Youyan Nie, Bee Leng Chua

Published: June 27, 2024

Key Findings:

Copyleaks was the only detector to achieve 100% accuracy across all three tested text types: AI-generated, human-written, and mixed (AI-generated and human-written). The study also found Copyleaks maintained over 90% consistency in its classification results, highlighting its reliability in real-world academic use cases.

| | AI Text Classifier | GPTZero | Copyleaks | Content at Scale |
|---|---|---|---|---|
| AI-generated texts | 95.24% | 90.84% | 100% | 100% |
| Human written texts | 90.84% | 100% | 100% | 100% |
| Mixed texts | 90.48% | 95.24% | 100% | 100% |
| Average | 92.07% | 95.24% | 100% | 100% |

Conclusion:

For educators concerned about AI usage in student submissions, Copyleaks stands out as a highly accurate and dependable solution for detecting a wide range of text types.

Read the full study here.

Article: 3 Of The Best AI Detection Tools Available (According To Our Own Testing)

Author: Emma Street

Published: December 20, 2024

| Tool | Non-AI Factual Text | Non-AI Fiction Text | AI Factual Text | AI Fiction Text |
|---|---|---|---|---|
| QuillBot | 100% human | 100% human | 34% human | 7% human |
| Sapling | 100% human | 100% human | 100% AI | 26% AI |
| Smodin | 0% AI | 0% AI | 96% AI | 16% AI |
| Copyleaks | 100% human | 100% human | 100% AI | 100% AI |
| Winston AI | ? | ? | 85% AI | 100% AI |
| GPTZero | 0% AI | 59% human | 98% AI | 58% AI |
| Undetectable AI | 100% human (all texts) | 100% human (all texts) | 100% human | 100% human |
| Merlin AI | 40% AI | 97% AI | 78% AI | 45% AI |

Conclusion: While some detectors struggled with more creative or blended content, Copyleaks proved itself to be one of the most consistent and dependable tools, making it a top contender for professionals who require accuracy over gimmicks.

Read the full article here.

Study: Comparing AI detectors: evaluating performance and efficiency

Author: Jeremie Busio Legaspi, Roan Joyce Ohoy Licuben, Emmanuel Alegado Legaspi, and Joven Aguinaldo Tolentino.

Published: July 18, 2024; International Journal of Science and Research Archive

Key Findings:

In a comparative evaluation of three AI detection tools—GPTZero, Writer AI, and Copyleaks—Copyleaks demonstrated strong reliability, correctly identifying all human-written and AI-generated essays. Unlike Writer AI, which misclassified several AI-generated samples as human-written, Copyleaks and GPTZero showed significantly higher consistency and classification accuracy.

Conclusion:

The study reinforced Copyleaks’ effectiveness as a trusted detection tool, capable of distinguishing between authentic human work and AI-generated content with greater precision than many alternatives, especially in academic contexts.

Read the full study here.

Study: AI-Wolf in Sheep’s Clothing: Distinguishing between Swedish Humans and AI Wannabes

Author: Adam Landberg

Published: 2024

Key Findings:

In a large-scale study comparing AI-generated and human-written news articles in both Swedish and English, Copyleaks emerged as the most accurate AI content detector.

- Copyleaks achieved 100% accuracy on English texts, correctly identifying every AI- and human-written sample with zero false positives.

- On Swedish texts, Copyleaks demonstrated 95% overall accuracy, with 100% specificity (no human-written articles were incorrectly flagged as AI) and 90% sensitivity (9 out of 10 AI-generated texts were correctly detected).

Smodin, another detector tested, performed well on English (95% accuracy) but struggled more with Swedish content (74% accuracy) and showed higher false positive rates.
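The Swedish-language figures for Copyleaks fit together arithmetically. The sketch below shows the standard definitions of accuracy, sensitivity, and specificity in Python. The function name is illustrative, and the counts assume 10 AI-generated and 10 human-written Swedish texts; only the "9 out of 10" AI figure is stated above, so the human-text count is an assumption chosen to match the reported 95% overall accuracy.

```python
def confusion_metrics(tp: int, fn: int, tn: int, fp: int) -> dict[str, float]:
    """Standard binary-classification rates, with 'AI' as the positive class."""
    return {
        "accuracy": (tp + tn) / (tp + fn + tn + fp),  # share of all texts labeled correctly
        "sensitivity": tp / (tp + fn),                # share of AI texts that were detected
        "specificity": tn / (tn + fp),                # share of human texts left unflagged
    }


# Assumed counts: 9 of 10 AI texts detected, 10 of 10 human texts left unflagged.
swedish = confusion_metrics(tp=9, fn=1, tn=10, fp=0)

for name, value in swedish.items():
    print(f"{name}: {value:.0%}")
```

Under those assumed counts the three rates come out to 95%, 90%, and 100%, matching the figures reported for Swedish texts.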

Conclusion:

Copyleaks is the most reliable AI detector for multilingual use, especially in sensitive settings like education. It was the only tool in the study to avoid false accusations of human writers, making it a trusted solution for distinguishing AI-written from human-authored content, particularly in under-resourced languages like Swedish.

Read the full study here.

Study: Automatic Detection of AI-Generated Source Code in Programming Courses

Author: Erik Pirntke, Wictor Rindebrant, Linnaeus University

Supervisor: Johan Hagelbäck

Published: Spring Semester 2024

Key Findings:

- Effective AI Detection via Copyleaks: Copyleaks was selected as the most accurate and reliable AI content detection tool for source code, outperforming other options in four separate academic studies. It was uniquely capable of identifying AI-generated programming assignments while supporting multiple key languages, including Python, Java, JavaScript, and C#.

- Seamless GitLab Integration: The study successfully implemented an automated detection system using GitLab CI/CD pipelines that connected directly to the Copyleaks API. This setup allows for effortless integration into existing programming course workflows with results clearly displayed for both teachers and students within GitLab.

- High Accuracy and Versatility: Copyleaks consistently exceeded the 80% accuracy requirement set by educators. Its broad language support and ability to process and return results through a robust API made it the most suitable tool for academic use.

- Educator-Driven Design: The prototype was built around real teacher feedback from Linnaeus University, and the final implementation fulfilled 6 out of 7 key educator-defined requirements. Teachers appreciated the automation, transparency, and simplicity of the system.

Conclusion:

This study confirms that Copyleaks is a best-in-class solution for detecting AI-generated source code in educational environments. By integrating Copyleaks with GitLab’s CI pipelines, the authors created a powerful, automated system that saves teachers time, maintains academic standards, and provides transparent, actionable results. The tool’s accuracy, flexibility, and developer-friendly API position it as a leading option for institutions looking to future-proof their programming courses against misuse of generative AI. With minor enhancements, the prototype could serve as a scalable model for universities worldwide.

Read the full study here.

Study: AI tools vs AI text: Detecting AI-generated writing in foot and ankle surgery

Author: Steven R. Cooperman DPM, MBA, AACFAS, Roberto A. Brandão DPM, FACFAS

Published: February 2024

Key Findings:

- Copyleaks Led All Detectors in Accuracy: Among six publicly available AI detection tools tested, Copyleaks achieved the highest raw accuracy, correctly identifying 10 out of 12 abstracts (83%). It outperformed all other tools with 0% false positives and only a 33% false negative rate.

- Overall Tool Performance Was Mixed: While Copyleaks stood out, the average accuracy across all six detectors was 63%, with some tools—like Writer and Scribbr—failing to detect any AI-generated abstracts at all. ZeroGPT had the highest false positive rate, flagging 83% of human-written abstracts as AI-generated.

- Tools Struggled with Reworded AI Text: When AI-generated abstracts were reworded using ChatGPT 3.5 to “avoid AI detection,” detection rates fell sharply. Detection accuracy dropped by 54.83%, illustrating the difficulty of catching lightly modified AI-generated content.

- Improved Sensitivity Over Time: A follow-up test three months later showed that GPTZero had improved in identifying AI-generated text (from 51.33% to 75.83%) and reduced false positives. However, even then, performance remained inconsistent compared to Copyleaks.

- Highlighting the Threat of AI-Evasion Tactics: The ability of ChatGPT to disguise AI-generated writing via simple rewording emphasizes the importance of using high-performing, research-validated detection tools like Copyleaks in editorial and academic workflows.

Conclusion:

This first-of-its-kind study in the field of foot and ankle surgery reveals the growing complexity of identifying AI-generated writing in scientific literature. While most AI detection tools show inconsistent performance, Copyleaks clearly stood out as the most accurate and reliable solution, with zero false positives and the best overall success rate in identifying AI-written abstracts. As generative AI continues to evolve, the findings underscore the urgent need for academic journals to adopt robust, research-backed tools like Copyleaks to protect the integrity of scientific publishing. Copyleaks’ proven performance positions it as a key ally in the ongoing effort to uphold ethical research standards in the age of AI.

Read the full study here.

Study: Boston University AI Task Force Report on Generative AI In Education and Research

Author: BU AI Research & Teaching Taskforce

Published: April 5, 2024

Key Findings:

- Critical Embrace of AI: BU recommends embracing GenAI in education and research rather than banning it, encouraging AI literacy, ethical awareness, and adaptability.

- Flexible Policy Frameworks: Faculty retain autonomy to set GenAI policies in syllabi, but must clearly communicate usage rules and cite tools used.

- Detection with Caution: GenAI detectors should not be used in isolation to determine academic misconduct. Instead, they should support a broader evidence base.

- Training and Resources: The report urges BU to provide faculty and students with robust training, resources, AI facilitators, and seed funding to responsibly integrate GenAI into coursework and research.

- Privacy and Security: Strong caution is advised against uploading sensitive data to commercial GenAI tools, with clear policy recommendations and local model alternatives encouraged.

- Creative Collaboration: GenAI tools can enhance creative processes in writing, arts, and design when used with transparency and human oversight.

Conclusion:

Boston University’s AI Task Force underscores a balanced, forward-thinking approach to integrating generative AI in academia. Rather than restricting its use, BU advocates for education, responsibility, and transparency—arming students and faculty with the skills to thrive in the GenAI era. In recognizing the nuanced role AI detectors play in academic integrity, BU’s study found Copyleaks to be the most trustworthy detection solution, reinforcing its value as an essential tool for higher education institutions committed to both innovation and fairness.

Read the full study here.

Study: How Sensitive Are the Free AI-detector Tools in Detecting AI-generated Texts? A Comparison of Popular AI-detector Tools

Author: Sujita Kumar Kar, Teena Bansal, Sumit Modi, and Amit Singh, Indian Journal of Psychological Medicine

Published: Online: May 11, 2024; Indian Journal of Psychological Medicine, Volume 47, Issue 3, May 2025

Key Findings:

- Among 10 popular free AI-detection tools tested, Copyleaks was one of only five tools to detect AI-generated content with 100% accuracy.

- Copyleaks also accurately identified AI-assisted paraphrased text generated by two different rewriting tools (Grammarly and ChatGPT), demonstrating strong sensitivity even when content was altered to appear more humanlike.

- Tools were tested using a controlled prompt to ChatGPT-3.5, followed by paraphrasing through three separate tools. Copyleaks consistently ranked among the most accurate detectors.

- Copyleaks’ performance matched findings from other peer-reviewed studies (e.g., Walters et al.), reinforcing its reliability and consistency in high-stakes academic use cases.

Conclusion:

As AI-generated content becomes more prevalent in academic writing, effective detection tools are critical to preserving integrity. This study highlights significant variation in detection accuracy among free tools. Copyleaks stands out as a leading solution, accurately identifying both original and paraphrased AI-generated content with high precision. Its strong performance across multiple evaluations makes it a dependable choice for institutions aiming to safeguard against AI misuse. Periodic validation and updates to AI-detection tools like Copyleaks are essential as generative models evolve.

Read the full study here.

Study: Accuracy Pecking Order – How 30 AI detectors stack up in detecting generative artificial intelligence content in university English L1 and English L2 student essays

Author: Chaka Chaka, University of South Africa

Published: April 2024, Journal of Applied Learning & Teaching, Vol. 7(1): 1–13

Key Findings:

- Copyleaks and Undetectable AI were the only two out of 30 AI detectors tested that correctly classified all human-written student essays across four diverse datasets (English L1 and English L2, second- and third-year university modules).

- These two tools achieved perfect accuracy (100%), zero false positives, and maximum specificity and true negative rates, ranking #1 overall for reliability.

- In stark contrast, nine detectors completely failed, misclassifying all essays as AI-generated.

- The remaining 19 tools showed inconsistent results, misclassifying essays to varying degrees, often displaying inflated confidence on their landing pages not matched by real-world performance.

- Crucially, the study found no systemic bias among the 30 tools between English L1 (native speakers) and English L2 (non-native speakers), countering prior claims about detector bias in some studies.

Conclusion: This extensive comparative analysis demonstrates that Copyleaks stands out as a top-performing AI detection tool, particularly in high-stakes academic environments. In contrast to many widely available free AI detectors, which often fail to distinguish human-written work from AI-generated content reliably, Copyleaks delivers accurate, fair, and bias-free classification. As institutions navigate rising concerns about academic integrity and the use of GenAI, Copyleaks offers a dependable and trusted solution. The study cautions against overreliance on AI detectors that do not consistently perform or lack transparency, underscoring the need for evidence-based detection tools like Copyleaks in educational settings.

Read the full study here.

Study: The Effectiveness of Software Designed To Detect AI-Generated Writing: A Comparison of 16 AI Text Detectors

Author: William H. Walters, Manhattan College

Published: October 2023, Open Information Science, Volume 7, Issue 1

Key Findings:

- The study evaluated 16 publicly available AI text detectors using 126 undergraduate essays: 42 generated by ChatGPT-3.5, 42 by ChatGPT-4, and 42 written by students.

- Only three detectors demonstrated very high accuracy across all three content types (GPT-3.5, GPT-4, and human-written): Copyleaks, TurnItIn, and Originality.ai.

- Copyleaks and TurnItIn were the only tools to perfectly identify all documents as either AI- or human-generated, with 0% false positives or false negatives.

- Copyleaks stood out for its consistent and decisive classifications, even with GPT-4-generated content, which proved challenging for most other detectors.

- Most detectors performed well with GPT-3.5 content but struggled with GPT-4, which is more difficult to distinguish from human writing.

- Paid or premium status was not a reliable predictor of accuracy. Several free or freemium tools underperformed significantly.

- Detectors with high false positive or false negative rates, such as Crossplag, Writer, and SEO.ai, should be used cautiously in academic settings.

Percentage of all 126 documents for which each detector gave correct, uncertain, or incorrect responses.

Conclusion: This independent peer-reviewed study confirms that Copyleaks is among the most accurate and trustworthy AI text detectors available today, demonstrating flawless classification across all evaluated document types, including the highly nuanced GPT-4-generated texts. While many tools fail to meet the rising challenge of advanced generative AI, Copyleaks has clearly kept pace, providing institutions, educators, and enterprises with a reliable solution for upholding content integrity.

The findings underscore the importance of choosing robust, research-validated tools like Copyleaks when addressing AI use in academic and professional environments. As generative AI becomes more sophisticated, detectors like Copyleaks will play a pivotal role in maintaining trust, transparency, and academic fairness.

Read the full study here.

Study: How Hard Can It Be? Testing the Reliability of AI Detection Tools

Author: Daniel Lee, PhD – University of Adelaide, School of Education, Unit of Digital Learning and Society

Published: September 2023, presented at The Future is Now, HERGA Conference, Flinders University

Key Findings:

- The study evaluated the performance of five free online AI detection tools—Copyleaks, ZeroGPT, Writer, Content at Scale, and SEO.AI—on a range of simulated academic tasks generated by ChatGPT and Bing.

- Copyleaks consistently outperformed the other tools, showing the most reliable results across multiple tests, including:

  - 99.9% detection of pure AI-generated text (Stage 1 movie critique)

  - Strong resilience to adversarial inputs such as paraphrased or modified AI text

  - Most accurate of the free tools in essay detection, returning a 75.2% AI probability on the unmodified essay (Turnitin returned 100%) and 73.1% on the human-modified version (Turnitin returned 39%), significantly outperforming Writer (3% and 0%, respectively)

  - Effective in paragraph manipulation and Bing essay tests, offering detection scores (e.g., 81.6%) that support further investigation and protect academic integrity

- Other tools, especially Writer and ZeroGPT, were more easily tricked by modifications and showed lower detection reliability.

- Turnitin’s AI detection feature missed some AI-modified texts and was unable to produce results in some tests.

AI Detection Results After Human Modifications

| | Turnitin | Copyleaks | ZeroGPT | Writer | Content at Scale | SEO.AI |
|---|---|---|---|---|---|---|
| ChatGPT Essay on Academic Integrity | 100% | 75.2% | 39.35% | 3% | 22% | 59% |
| Human Modified Essay | 39% | 73.1% | 17.73% | 0% | 7% | 28% |
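One way to read the table above is to look at how far each tool's score falls once a human edits the essay. The Python sketch below computes that drop in percentage points. The variable names are illustrative, and treating the drop as a simple difference between the two rows is an assumption about how to summarize the data, not a metric defined by the study.

```python
# AI-probability scores (percent) from the table above:
# (unmodified ChatGPT essay, human-modified essay)
scores = {
    "Turnitin":         (100.0, 39.0),
    "Copyleaks":        (75.2, 73.1),
    "ZeroGPT":          (39.35, 17.73),
    "Writer":           (3.0, 0.0),
    "Content at Scale": (22.0, 7.0),
    "SEO.AI":           (59.0, 28.0),
}

# Drop in percentage points after human modification (rounded to avoid float noise).
drops = {tool: round(before - after, 2) for tool, (before, after) in scores.items()}

most_stable = min(drops, key=drops.get)                        # smallest drop
highest_after = max(scores, key=lambda tool: scores[tool][1])  # top score on the edited essay

for tool, drop in sorted(drops.items(), key=lambda item: item[1]):
    print(f"{tool:<17} -{drop:.2f} points (now {scores[tool][1]}%)")
```

A small drop matters only when the starting score was high: Writer falls by just 3 points because it began at 3%. Copyleaks combines the smallest drop (2.1 points) with the highest score on the modified essay (73.1%).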

Conclusion:

Copyleaks was the most reliable AI detection tool among those tested, consistently identifying AI-generated and subtly modified texts with higher accuracy. While no tool is entirely foolproof, Copyleaks proved to be the most dependable solution in this independent study. Educators are encouraged to supplement Turnitin results with Copyleaks—especially in cases requiring further investigation—due to its strong performance in nuanced scenarios and its ability to distinguish AI-generated content with confidence.

Read the full study here.

Study: Detecting AI Content in Responses Generated by ChatGPT, YouChat, and Chatsonic: The Case of Five AI Content Detection Tools

Author: Chaka Chaka, University of South Africa

Published: July 2023, Journal of Applied Learning & Teaching, Volume 6(2), Pages 1–11

Key Findings:

- The study tested five AI content detectors—Copyleaks, GPTZero, OpenAI Text Classifier, Writer.com, and GLTR—on AI-generated responses from ChatGPT, YouChat, and Chatsonic using English prompts related to applied English language studies.

- Copyleaks AI Content Detector was the top-performing tool, accurately detecting more AI-generated content than any of the others across multiple scenarios.

- When tested on translated responses (ChatGPT output translated via Google Translate into German, French, and Spanish), Copyleaks still performed best, correctly identifying as AI-generated:

  - 3 of the German-translated responses

  - 5 of the French-translated responses

  - All of the Spanish-translated responses

- GPTZero misclassified all of the translated responses as human-written, highlighting Copyleaks’ superior multilingual detection capabilities.

- The study also emphasized that while no tool is yet perfect, Copyleaks provided the most accurate and nuanced results—particularly when identifying AI-generated content in multilingual or modified formats.

Conclusion:

Among the five AI detection tools evaluated, Copyleaks proved to be the most effective and adaptable, especially in identifying translated and nuanced AI-generated content. The study affirms Copyleaks’ leadership in AI content detection, showcasing its value in supporting academic integrity even across languages and content types.

Read the full study here.

Across all these third-party evaluations, Copyleaks has emerged as the gold standard for AI content detection. Whether you’re monitoring academic integrity, verifying authorship in professional content, or ensuring trust in high-stakes environments, Copyleaks delivers unmatched accuracy, consistency, and transparency.